Official statement

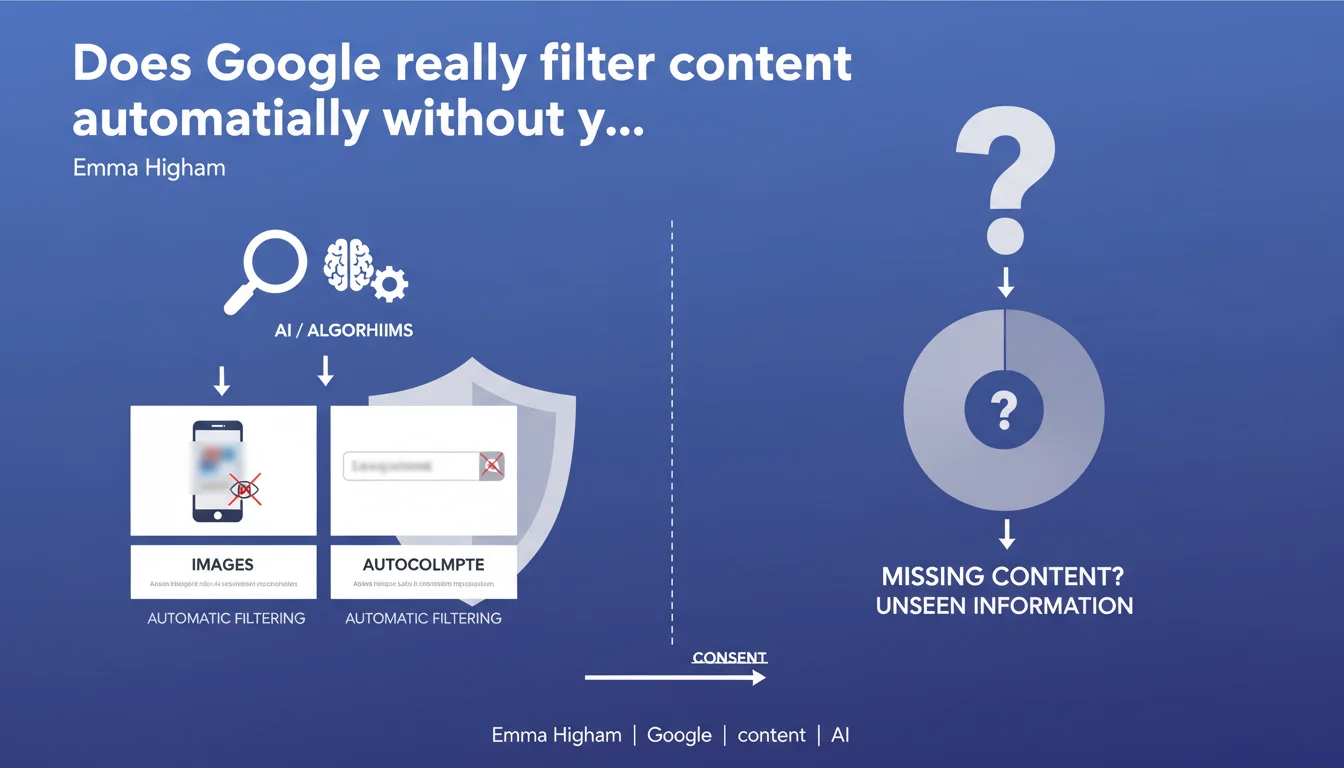

Google applies automatic filters to mature content in images and autocomplete suggestions, regardless of each user's SafeSearch settings. In practice, even with SafeSearch disabled, certain content remains hidden by default for everyone. This statement confirms the existence of an invisible filtering layer that applies to every user on those two surfaces.

What you need to understand

What exactly does Google filter without user intervention?

Google applies automatic filtering to two types of results: images and autocomplete suggestions. This system operates before individual SafeSearch settings even come into play.

In practical terms, certain content classified as "mature" disappears from image results and suggestions, regardless of your personal settings. It's a default censorship that applies universally.

Does this filtering bypass user preferences?

Yes, and that's the key point of this statement. In theory, SafeSearch settings let users choose their own filtering level: Filter, Blur, or Off.

But this automatic layer operates upstream. The result: even with SafeSearch disabled, you don't see all indexed content. Google imposes its own definition of "mature" without letting you decide.

Why is Google communicating about this filtering now?

Transparency about filtering mechanisms has become a regulatory requirement in multiple jurisdictions. Google is likely anticipating questions about algorithmic moderation and search result neutrality.

This statement formalizes what's already been observed empirically: certain perfectly legal content remains invisible regardless of your settings. It's a form of non-optional filtering whose exact criteria remain opaque.

- Automatic filtering of mature content in images and autocomplete

- Universal application, independent of individual SafeSearch settings

- No way for users to disable this filtering layer

- Criteria for classifying content as "mature" are not publicly documented

- Potential impact on visibility of legitimate content that's misclassified

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. For years, SEO professionals have noticed unexplained discrepancies in image results, even with SafeSearch disabled. Artistic, medical, or educational content disappears without apparent reason.

This statement confirms what we suspected: there's an invisible filter that precedes user settings. The problem? The criteria for this filtering are never detailed. "Mature" is a catch-all label that can include perfectly legitimate content.

What gray areas remain in this announcement?

Google doesn't specify the exact criteria that push content into the "mature" category. [To verify] Is it only explicit nudity? Graphic violence? Terms flagged as sensitive by natural language processing algorithms?

Another unclear point: the exact scope of the filtering. Google mentions images and autocomplete, but what about regular web results? Videos? The lack of specificity suggests this filtering could extend to other surfaces without explicit announcement.

And that's where it gets problematic: this opacity leaves affected publishers with no practical way to contest or correct a classification. You don't know why your content is filtered, or how to fix it.

Can you bypass or influence this filtering?

The honest answer: probably not directly. This automatic system sits outside traditional SEO levers. You can't audit it, fix it with tags, or control it through robots.txt.

However, some observations suggest that editorial context and domain reputation play a role. An established medical website might have fewer false positives than an amateur blog on the same topic. [To verify] But Google provides no guarantees on this.

Practical impact and recommendations

Which types of sites are most exposed to this filtering?

Sites covering reproductive health, sexual education, art (artistic nudity, body photography), wellness, or LGBTQ+ topics are on the front lines. Even with perfectly legal and editorially rigorous content, the risk of misclassification exists.

E-commerce sites selling lingerie, intimate wellness products, or art can see their product images filtered. Result: partial invisibility in Google Images, which is often a significant traffic source for these sectors.

How can you detect if your content is affected?

First step: check if your images appear in Google Images with SafeSearch both disabled AND enabled. Compare. If certain images disappear even with SafeSearch disabled, they're likely caught by this automatic filtering.
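The comparison described above can be kept honest with a small bookkeeping script. This is only a minimal sketch: it assumes you have already collected, by hand, the image URLs you saw for a given query in each SafeSearch mode. The function name and the bucket labels are illustrative, not anything Google provides.

```python
def classify_image_visibility(expected, seen_safesearch_off, seen_safesearch_on):
    """Sort the image URLs you expect to rank into three buckets.

    All three arguments are iterables of URLs gathered manually
    (hypothetical inputs; nothing here queries Google).
    """
    expected = set(expected)
    off = set(seen_safesearch_off) & expected
    on = set(seen_safesearch_on) & expected
    return {
        # Seen in both modes: no sign of filtering.
        "visible_in_both": sorted(on & off),
        # Seen only with SafeSearch off: ordinary, user-controlled filtering.
        "safesearch_only": sorted(off - on),
        # Not seen even with SafeSearch off: possible automatic filtering,
        # but also possibly just not indexed or not ranking for this query.
        "missing_even_when_off": sorted(expected - off),
    }


report = classify_image_visibility(
    expected=["/img/a.jpg", "/img/b.jpg", "/img/c.jpg"],
    seen_safesearch_off=["/img/a.jpg", "/img/b.jpg"],
    seen_safesearch_on=["/img/a.jpg"],
)
print(report["missing_even_when_off"])
```

An image in the last bucket is only a candidate: confirm it is indexed before concluding that it is being filtered.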

Also analyze your organic image traffic in Search Console, using the Performance report with the search type set to Image. Unexplained drops in impressions on certain queries can signal recent filtering. Unfortunately, Google never explicitly notifies you of this type of classification.
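One way to spot such drops is to compare the average impressions of one window of days against the window before it. The sketch below assumes you have exported daily image impressions for a query into a plain list. The window length and the 50% threshold are arbitrary illustrative choices, and a flagged drop does not prove filtering.

```python
from statistics import mean


def find_impression_drops(daily_impressions, window=7, threshold=0.5):
    """Return (day_index, avg_before, avg_after) for each day where the
    average over the next `window` days falls below `threshold` times
    the average over the previous `window` days."""
    drops = []
    for i in range(window, len(daily_impressions) - window + 1):
        before = mean(daily_impressions[i - window:i])
        after = mean(daily_impressions[i:i + window])
        if before > 0 and after < before * threshold:
            drops.append((i, before, after))
    return drops


# Hypothetical series: a stable week followed by a sharp fall.
series = [100] * 7 + [20] * 7
for day, before, after in find_impression_drops(series):
    print(f"day {day}: average fell from {before} to {after}")
```

Run it per query rather than on site-wide totals, since filtering that hits a handful of queries can disappear in the aggregate.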

What can you concretely do to minimize the risks?

- Add reinforced editorial context around sensitive images (descriptive captions, introductory paragraphs that clearly establish the educational/medical/artistic purpose)

- Use descriptive filenames and alt-text that anchor the image in an unambiguous context

- Segment your content: host potentially sensitive content on clearly identified sections of your site with coherent editorial navigation

- Strengthen your domain's E-E-A-T signals (recognized expertise, identified authors, cited sources) to improve your overall classification

- Diversify traffic sources: don't rely solely on Google Images if your business touches sensitive topics

- Regularly monitor your Search Console performance and note any unexplained drops unrelated to your actions
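The filename and alt-text recommendations above are easy to audit automatically. The following is a minimal sketch using only Python's standard library. The pattern for "generic" filenames is an assumption made for illustration, and nothing suggests Google's classifier uses the same signals.

```python
import re
from html.parser import HTMLParser

# Assumed heuristic: stems such as "IMG_0042", "image-1" or "123" carry no context.
GENERIC_NAME = re.compile(
    r"^(img|image|dsc|photo|screenshot)?[-_ ]?\d*$", re.IGNORECASE
)


class ImageAudit(HTMLParser):
    """Collect <img> tags with missing alt text or a generic filename."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = attributes.get("src") or ""
        alt = (attributes.get("alt") or "").strip()
        # Filename without directory or extension.
        stem = src.rsplit("/", 1)[-1].rsplit(".", 1)[0]
        if not alt:
            self.issues.append((src, "missing alt text"))
        if GENERIC_NAME.match(stem):
            self.issues.append((src, "generic filename"))


def audit_images(html):
    parser = ImageAudit()
    parser.feed(html)
    return parser.issues


page = (
    '<img src="/media/IMG_0042.jpg">'
    '<img src="/media/breast-self-exam-diagram.png" '
    'alt="Diagram showing the steps of a breast self-exam">'
)
for src, problem in audit_images(page):
    print(src, "->", problem)
```

Here the first image is flagged twice, while the second passes because both its filename and its alt text describe what the image is for.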

❓ Frequently Asked Questions

Does this automatic filtering also apply to regular web search results?

Can you ask Google to re-evaluate content that was filtered by mistake?

Are sites with HTTPS or trusted certificates less affected?

Is this filtering identical in every country?

Does content filtered in Google Images remain indexed and accessible through other channels?

🎥 From the same video 4

Other SEO insights extracted from this same Google Search Central video · published on 24/10/2023
