Official statement

What you need to understand

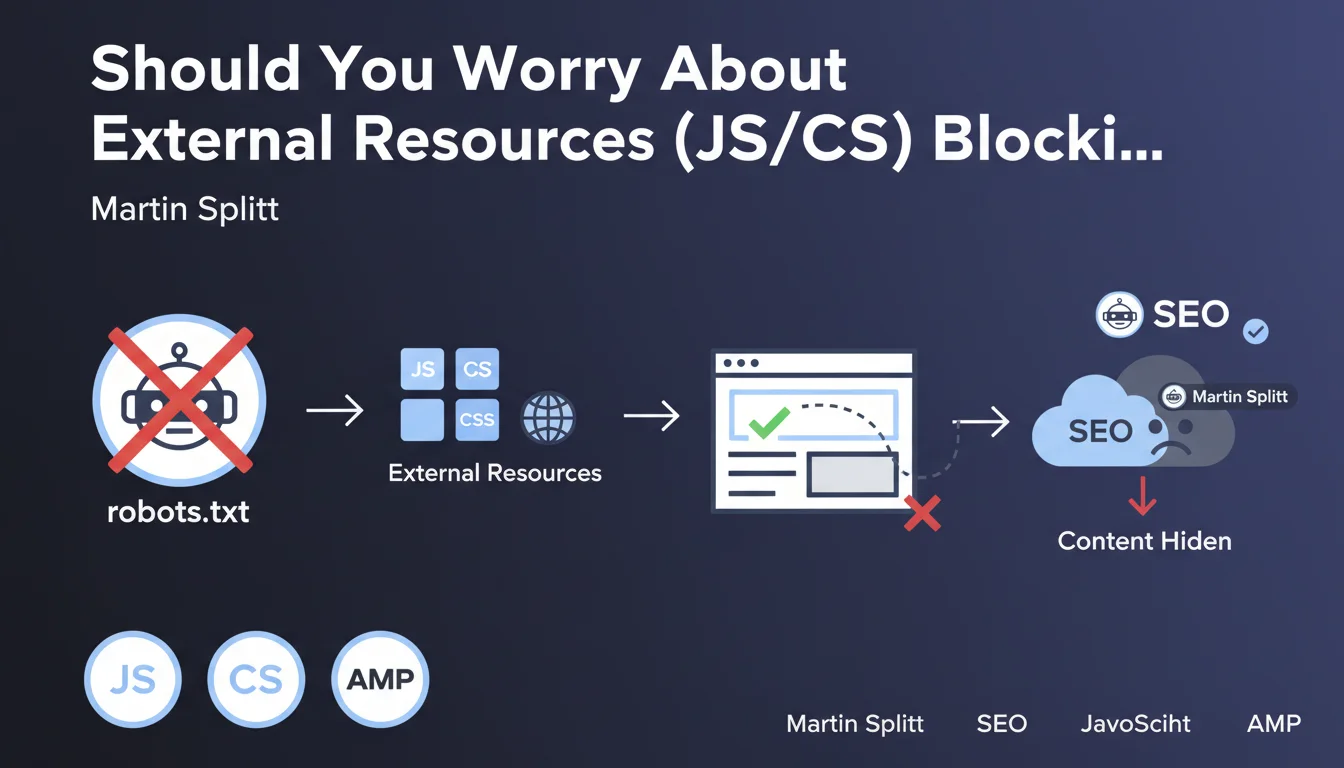

This statement addresses a common situation: a site loads JavaScript or CSS files from external domains (CDNs, third-party libraries, external services). The question is whether SEO suffers when the robots.txt file on those domains blocks the files from being crawled.

According to Martin Splitt, an engineer at Google, blocking these external resources generally does not pose a major problem. Google knows how to handle situations where certain resources are not accessible during crawling.

However, a risk exists: if the blocked resources are essential for displaying the main content, Googlebot may not see important elements of the page. Fortunately, this situation remains relatively rare in practice.

- Blocking external resources (JS/CSS) via robots.txt is generally not problematic for SEO

- The real risk arises when the blocked resources prevent content from being displayed to crawlers

- In most cases, you don't have control over the robots.txt file of external domains

- You need to verify that your content remains accessible even without these external resources
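To check whether a given external file is actually blocked, you can test its URL against the robots.txt rules of the domain hosting it. The sketch below uses Python's standard `urllib.robotparser`; the CDN domain, the sample rules, and the `is_blocked` helper are hypothetical and serve only as an illustration. Note that this parser does simple prefix matching and may not reproduce every detail of how Google interprets robots.txt.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt: str, resource_url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text disallows user_agent from fetching resource_url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is needed here.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, resource_url)


# Hypothetical robots.txt served by a third-party CDN (assumption for this example).
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /libs/
"""

if __name__ == "__main__":
    for url in (
        "https://cdn.example.com/libs/widget.js",
        "https://cdn.example.com/css/site.css",
    ):
        status = "blocked" if is_blocked(SAMPLE_ROBOTS, url) else "allowed"
        print(f"{url}: {status}")
```

In a real audit you would download the robots.txt of each third-party host and pass its text to the same function.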

SEO Expert opinion

This position from Google is consistent with what we observe in the field. The search engine has considerably improved its ability to interpret pages, even with missing resources. It uses fallback mechanisms and can often deduce the structure despite the absence of certain files.

Nevertheless, an important nuance is necessary: the actual impact depends on the role of the blocked resource. If an external JavaScript file only manages decorative animations, no problem. However, if that same script dynamically loads all the textual content of your page, you have a serious issue.

One point deserves emphasis: you don't control external robots.txt files. This is why it's preferable to self-host critical resources or to adopt a rendering strategy that doesn't depend entirely on third-party sources.
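A quick way to tell a decorative script from a content-critical one is to check whether your key text already exists in the HTML the server returns, before any script runs. The following is a minimal sketch using Python's standard `html.parser`; the two sample pages, the phrases, and the `missing_phrases` helper are hypothetical. It is only a rough proxy and does not replicate Google's rendering.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collect text nodes, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_phrases(html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the page's static text."""
    extractor = VisibleTextExtractor()
    extractor.feed(html)
    extractor.close()
    text = " ".join(extractor.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


# Hypothetical pages: one ships its text in the HTML, one builds it with JavaScript.
STATIC_HTML = (
    "<html><body><h1>Blue widgets</h1><p>Our widgets ship worldwide.</p>"
    "<script src='https://cdn.example.com/app.js'></script></body></html>"
)
JS_ONLY_HTML = (
    "<html><body><div id='app'></div>"
    "<script>render('Blue widgets')</script></body></html>"
)

if __name__ == "__main__":
    key_phrases = ["Blue widgets", "ship worldwide"]
    print("static page missing:", missing_phrases(STATIC_HTML, key_phrases))
    print("JS-only page missing:", missing_phrases(JS_ONLY_HTML, key_phrases))
```

If phrases are missing from the static HTML, the page depends on scripts for its content, and a blocked external script becomes a real risk.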

Practical impact and recommendations

- Use the URL Inspection tool in Search Console to verify how Googlebot actually sees your pages with current external resources

- Identify critical external resources: list all JS/CSS files hosted on third-party domains used by your site

- Self-host essential resources: download and host on your own server the JavaScript and CSS files that are indispensable for displaying main content

- Implement hybrid rendering: ensure that important textual content is present in the initial HTML, rather than relying solely on JavaScript to display it

- Test with blocked resources: simulate the blocking of your external resources to verify that content remains visible

- Monitor performance: self-hosting can improve loading speed, but requires appropriate optimization (compression, caching, CDN)

- Document your dependencies: maintain an inventory of third-party libraries to anticipate potential issues
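The inventory step in the list above can be partly automated. Here is a minimal sketch, again with Python's standard library, that lists the scripts and stylesheets a page loads from other hosts. The sample markup, the URLs, and the `external_resources` helper are hypothetical. As a simplification, any hostname different from the page's own counts as external, so your own subdomains would be flagged too.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class ResourceCollector(HTMLParser):
    """Collect absolute URLs of <script src> and <link rel="stylesheet"> tags."""

    def __init__(self, page_url: str):
        super().__init__()
        self.page_url = page_url
        self.resources = []

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "script" and attr.get("src"):
            self.resources.append(urljoin(self.page_url, attr["src"]))
        elif tag == "link" and attr.get("href"):
            rel = (attr.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                self.resources.append(urljoin(self.page_url, attr["href"]))


def external_resources(html: str, page_url: str) -> list[str]:
    """Return JS/CSS URLs whose hostname differs from the page's hostname."""
    collector = ResourceCollector(page_url)
    collector.feed(html)
    collector.close()
    page_host = urlparse(page_url).hostname
    return [u for u in collector.resources if urlparse(u).hostname != page_host]


# Hypothetical page markup (assumption for this example).
SAMPLE_PAGE = """
<html><head>
<link rel="stylesheet" href="/css/site.css">
<link rel="stylesheet" href="https://fonts.example.net/font.css">
<script src="//cdn.example.com/libs/widget.js"></script>
<script src="js/local.js"></script>
</head><body></body></html>
"""

if __name__ == "__main__":
    for url in external_resources(SAMPLE_PAGE, "https://www.example.org/page"):
        print(url)
```

The resulting list is a starting point for deciding which files to self-host and which ones to keep under watch.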

These technical optimizations touch on the very architecture of your site and require deep expertise in web development and technical SEO. Configuring rendering, auditing external dependencies, and implementing an optimal hosting strategy are complex tasks that require a comprehensive vision. For personalized support and professional implementation of these recommendations, the intervention of a specialized SEO agency can save you valuable time and avoid costly visibility mistakes.
