Official statement

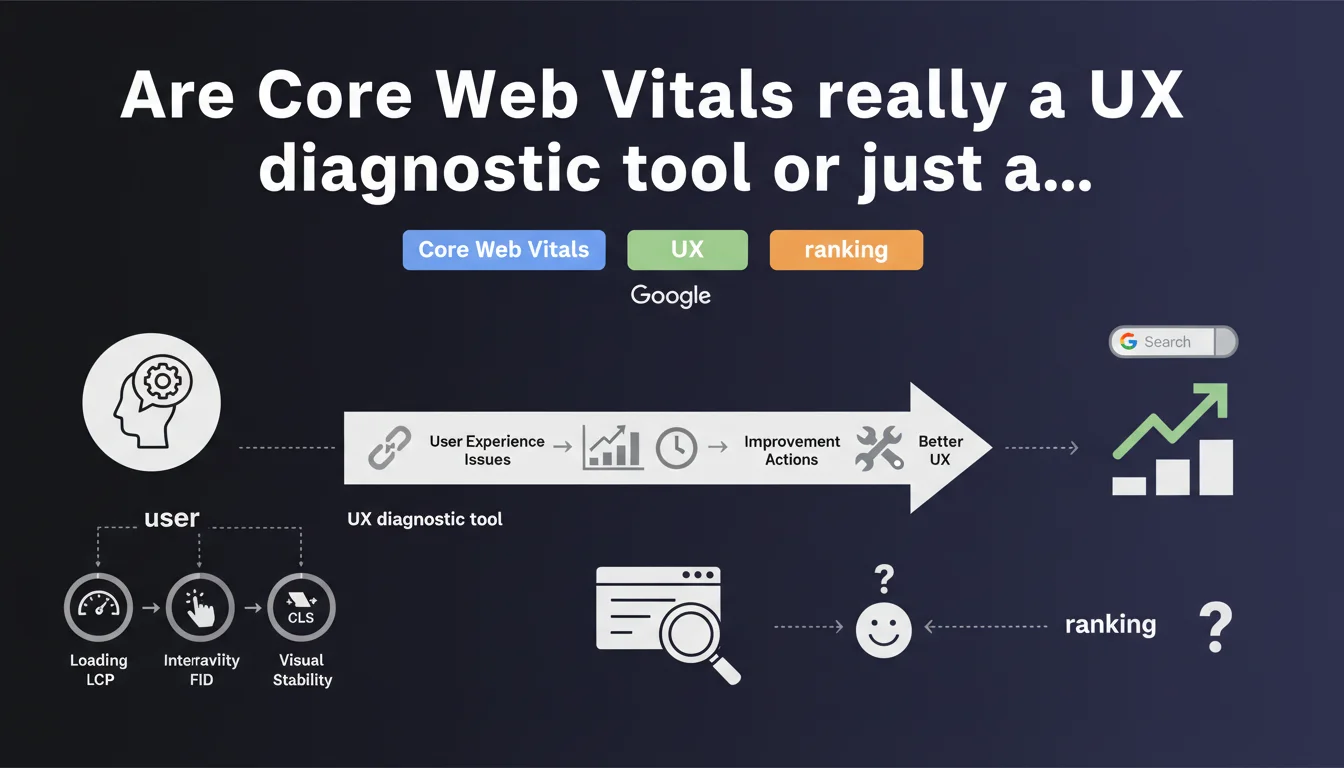

Google presents Core Web Vitals as a way to identify user experience problems on your pages. The message is clear: these metrics serve more than just ranking purposes; they reveal concrete friction points your visitors encounter. The question is whether this 'altruistic' vision holds up in practice.

What you need to understand

What does 'identifying user challenges' really mean?

Google positions Core Web Vitals as diagnostic indicators, not solely as ranking criteria. The idea: if your LCP is catastrophic, it's not just a signal for the algorithm—it's a symptom that your visitors are waiting too long to see content.

This approach aims to reframe the conversation. Rather than saying 'optimize for us,' Google says 'optimize for your users, we're giving you the tools.' Except in practice, many sites optimize CWV solely because they fear a ranking impact.

Why does Google insist on this 'user-first' angle?

Because performance metrics have long been perceived as obscure and technical. By directly linking them to user frustrations (slow loading, jumping layouts, unresponsive buttons), Google humanizes the narrative.

It's also a way to counter criticism: if you complain about CWV, you come across as someone who doesn't care about visitor experience. Clever.

Do CWV really capture all UX challenges?

No. Core Web Vitals measure three dimensions—perceived loading speed, visual stability, responsiveness. But UX is much broader: confusing navigation, invisible CTAs, unreadable content, aggressive pop-ups, and more.

Google knows this perfectly well. CWV are a proxy, not a complete snapshot of user experience. They detect certain technical symptoms, not the disease in its entirety.

- CWV identify technical friction points: slowness, instability, interaction latency

- They don't replace genuine qualitative UX analysis (user testing, heatmaps, session replay)

- Google presents them as diagnostic tools, but most sites treat them as ranking criteria to check off

- The thresholds (good / needs improvement / poor) are generic and don't necessarily reflect your actual audience's expectations

SEO Expert opinion

Is this statement consistent with observed practices?

On paper, yes. Core Web Vitals do measure real irritants: a 6-second LCP is objectively painful for a visitor. Chaotic CLS, same thing—text moving while you're reading is annoying.

But here's the catch: in practice, many sites optimize CWV solely for ranking, without questioning actual user impact. We see sites deferring or lazy-loading content aggressively just to flatter their LCP score, at the expense of UX. So Google's well-intentioned message ('use this as a diagnostic') collides with market reality.

What nuances should we add?

First nuance: CWV are measured using field data (CrUX), influenced by your visitors' connections and devices. A site may have poor CWV because its audience is mostly on mobile 3G in rural areas—not necessarily because the code is broken.

Second nuance: Google's thresholds (2.5s for LCP, 0.1 for CLS, etc.) are one-size-fits-all. They don't correspond to UX studies specific to your industry or audience. A luxury e-commerce site and a news outlet don't have the same user tolerance standards. Google has published its rationale for these values (a mix of perception research and what real sites actually achieve in CrUX data), but that rationale is generic by design and says nothing about your particular visitors.
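To make the thresholds concrete, here is a minimal Python sketch that maps a 75th-percentile value to Google's three ratings. The LCP and CLS boundaries are the ones quoted above plus their published upper bounds; INP (which replaced FID as the responsiveness metric in March 2024) is added here as an assumption about which metric you want to rate. The names `THRESHOLDS` and `rate` are illustrative, not part of any Google tool.

```python
# (upper bound of "good", upper bound of "needs improvement") per metric.
THRESHOLDS = {
    "LCP": (2500.0, 4000.0),  # milliseconds
    "INP": (200.0, 500.0),    # milliseconds (assumption: INP rather than FID)
    "CLS": (0.1, 0.25),       # unitless layout-shift score
}


def rate(metric: str, p75: float) -> str:
    """Return Google's rating label for a 75th-percentile metric value."""
    good, needs_improvement = THRESHOLDS[metric]
    if p75 <= good:
        return "good"
    if p75 <= needs_improvement:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    for metric, value in [("LCP", 6000), ("CLS", 0.08), ("INP", 350)]:
        print(f"{metric} p75={value}: {rate(metric, value)}")
```

The rating is only a label on a generic scale. Whether a 'needs improvement' LCP actually costs you anything is a question for your own data.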

Third nuance: CWV don't capture everything. A site can have excellent CWV and disastrous UX (incomprehensible navigation, poor copywriting, broken conversion flow). Conversely, a site with average CWV but exceptional content and thoughtful design can still outperform it.

When is this 'diagnostic' approach actually useful?

It's useful when you have fuzzy UX symptoms and CWV point to a specific technical culprit. For example: high bounce rate on mobile + catastrophic LCP = probably a loading problem.

It's less useful if you treat CWV as a checklist to tick off without correlating them to your business KPIs (conversion, engagement, time spent). I've seen sites move from 'needs improvement' to 'good' on CWV with zero conversion impact, because the real problem was elsewhere.

Practical impact and recommendations

What should you do concretely with this statement?

First step: measure your CWV with the right tools (Search Console, PageSpeed Insights, CrUX Dashboard). Look at field data, not just lab tests—what matters is your visitors' real experience.
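If you prefer to pull field data programmatically, the sketch below shows one way to query the Chrome UX Report API from Python and read the 75th-percentile values. It assumes you have a CrUX API key and that the response follows the `record.metrics.<name>.percentiles.p75` layout. The helper names (`build_request`, `extract_p75`, `fetch_p75`) are hypothetical, so treat this as a starting point and check it against the current API reference.

```python
import json
import urllib.request

CRUX_ENDPOINT = "https://chromeuxreport.googleapis.com/v1/records:queryRecord"


def build_request(origin: str, api_key: str, form_factor: str = "PHONE") -> urllib.request.Request:
    """Build the POST request for one origin and one form factor."""
    body = json.dumps({"origin": origin, "formFactor": form_factor}).encode("utf-8")
    return urllib.request.Request(
        f"{CRUX_ENDPOINT}?key={api_key}",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_p75(response: dict) -> dict:
    """Collect the p75 of every metric that reports percentiles."""
    metrics = response.get("record", {}).get("metrics", {})
    result = {}
    for name, data in metrics.items():
        p75 = data.get("percentiles", {}).get("p75")
        if p75 is not None:
            # Some metrics (CLS, for instance) come back as strings.
            result[name] = float(p75)
    return result


def fetch_p75(origin: str, api_key: str, form_factor: str = "PHONE") -> dict:
    """Network call: returns {metric_name: p75} for the given origin."""
    request = build_request(origin, api_key, form_factor)
    with urllib.request.urlopen(request, timeout=10) as resp:
        return extract_p75(json.load(resp))
```

Calling `fetch_p75("https://example.com", "YOUR_API_KEY")` would return a dictionary of metric names and p75 values for phone traffic. Origins with too little Chrome traffic have no record, and the API answers with an HTTP error in that case.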

Second step: correlate CWV with business metrics. If your LCP is poor AND your mobile bounce rate is skyrocketing, you have a problem to solve. If your CWV are rated 'needs improvement' but conversions are stable, it might not be your top priority.
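A rough way to run that correlation is to line up one CWV value and one business metric per page template and compute a Pearson coefficient. The sketch below does this with the standard library only. The figures are invented for illustration, and a strong coefficient is a reason to investigate, not proof that LCP causes the bounces.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally sized series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    # Hypothetical per-template figures: mobile p75 LCP (ms) and mobile bounce rate.
    lcp_ms = [1800, 2400, 3100, 4200, 5600]
    bounce_rate = [0.31, 0.35, 0.42, 0.51, 0.63]
    print(f"LCP vs bounce rate: r = {pearson(lcp_ms, bounce_rate):.2f}")
```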

Third step: treat CWV as diagnosis, not as an end goal. A poor CLS tells you 'there's visual instability,' not 'remove all ads.' Dig deeper to understand the root cause—images without dimensions, late-loading fonts, dynamically injected elements—and fix it intelligently.
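One of the root causes listed above, images without dimensions, is easy to check automatically. The following sketch scans an HTML string for `<img>` tags that lack a `width` or `height` attribute, using only Python's standard library. It is deliberately simplistic: it ignores space reserved through CSS (for example `aspect-ratio`), so a flagged image is a candidate to inspect, not a confirmed CLS source. `UnsizedImageFinder` and `find_unsized_images` are illustrative names.

```python
from html.parser import HTMLParser


class UnsizedImageFinder(HTMLParser):
    """Collects the src of <img> tags missing a width or height attribute."""

    def __init__(self):
        super().__init__()
        self.unsized = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        if not (attributes.get("width") and attributes.get("height")):
            self.unsized.append(attributes.get("src") or "(no src)")


def find_unsized_images(html: str) -> list[str]:
    finder = UnsizedImageFinder()
    finder.feed(html)
    return finder.unsized


if __name__ == "__main__":
    page = '<img src="hero.jpg" width="1200" height="600"><img src="ad.png">'
    print(find_unsized_images(page))
```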

What mistakes must you absolutely avoid?

Mistake number one: optimizing for the score, not the user. I've seen sites artificially delay content display to improve LCP—technically it works, in practice it's absurd.

Mistake number two: ignoring your audience context. If 80% of your visitors are on desktop broadband, you don't face the same challenges as a site consumed mostly on fluctuating mobile 4G. Aggregate CWV can mask critical disparities between segments.
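The masking effect is easy to reproduce with a toy dataset. Google assesses CWV at the 75th percentile, so the sketch below computes the p75 of LCP over all visits and then per device. The sample values are invented, and the nearest-rank percentile method is an assumption chosen for simplicity (it is not necessarily how CrUX computes its figures).

```python
from collections import defaultdict
from math import ceil


def p75(values):
    """75th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("no values")
    ordered = sorted(values)
    rank = ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def p75_by_segment(samples):
    """samples: iterable of (segment, value) pairs. Returns {segment: p75}."""
    groups = defaultdict(list)
    for segment, value in samples:
        groups[segment].append(value)
    return {segment: p75(vals) for segment, vals in groups.items()}


# Hypothetical LCP samples in milliseconds: 80% desktop, 20% mobile.
SAMPLES = (
    [("desktop", v) for v in (1100, 1300, 1500, 1700, 1900, 2000, 2100, 2200)]
    + [("mobile", v) for v in (4200, 5100)]
)

if __name__ == "__main__":
    print("all visits:", p75([v for _, v in SAMPLES]))
    print("by device:", p75_by_segment(SAMPLES))
```

With this sample, the aggregate p75 sits under the 2.5s LCP threshold while the mobile segment is far above it, which is exactly the kind of disparity a single global number hides.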

Mistake number three: neglecting other UX dimensions. CWV don't detect a confusing payment flow, anxiety-inducing copy, or incomprehensible navigation. Don't stop there.

How can you verify your approach is sound?

Compare before/after: improve your CWV on a segment of pages, and measure impact on your business KPIs (bounce rate, time spent, conversion, pages per session). If it moves positively, you're on the right track.
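A before/after comparison is more convincing when the pages you did not touch serve as a baseline, since seasonality or a campaign can move KPIs on their own. The sketch below computes the relative change of a KPI on the optimized segment and subtracts the change observed on untouched pages over the same period. The control-group subtraction is an addition to the approach described above, and the conversion rates are invented.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change of a KPI, e.g. 0.10 for +10%."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


def net_effect(treated_before: float, treated_after: float,
               control_before: float, control_after: float) -> float:
    """Change on optimized pages minus change on untouched pages."""
    return (relative_change(treated_before, treated_after)
            - relative_change(control_before, control_after))


if __name__ == "__main__":
    # Hypothetical conversion rates over two comparable periods.
    effect = net_effect(
        treated_before=0.020, treated_after=0.023,   # pages with improved CWV
        control_before=0.020, control_after=0.021,   # pages left as they were
    )
    print(f"net conversion change attributable to the segment: {effect:+.1%}")
```

This says nothing about statistical significance. On low-traffic segments, small differences like these can be noise.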

Use qualitative tools alongside: session replay, heatmaps, user testing. CWV tell you 'there's a problem,' these tools show you 'here's what your visitors actually experience.'

- Measure your CWV with field data (CrUX), not just lab tests

- Correlate CWV with business metrics to prioritize actions

- Identify root causes of poor scores (images, fonts, JS, ads) before fixing

- Avoid cosmetic optimizations that inflate the score without improving actual experience

- Segment by device and connection to detect real friction points

- Complement with qualitative UX tools (heatmaps, session replay, user testing)

- Test the impact of your optimizations on conversion and engagement, not just scores

❓ Frequently Asked Questions

Are Core Web Vitals a major ranking factor?

Do you need to hit 'good' (green) on every CWV to rank well?

Does CrUX data really reflect my visitors' experience?

Can a site have excellent CWV and disastrous UX?

Are Google's thresholds (2.5s for LCP, etc.) based on scientific studies?

🎥 From the same video 8

Other SEO insights extracted from this same Google Search Central video · published on 29/08/2023
