Official statement

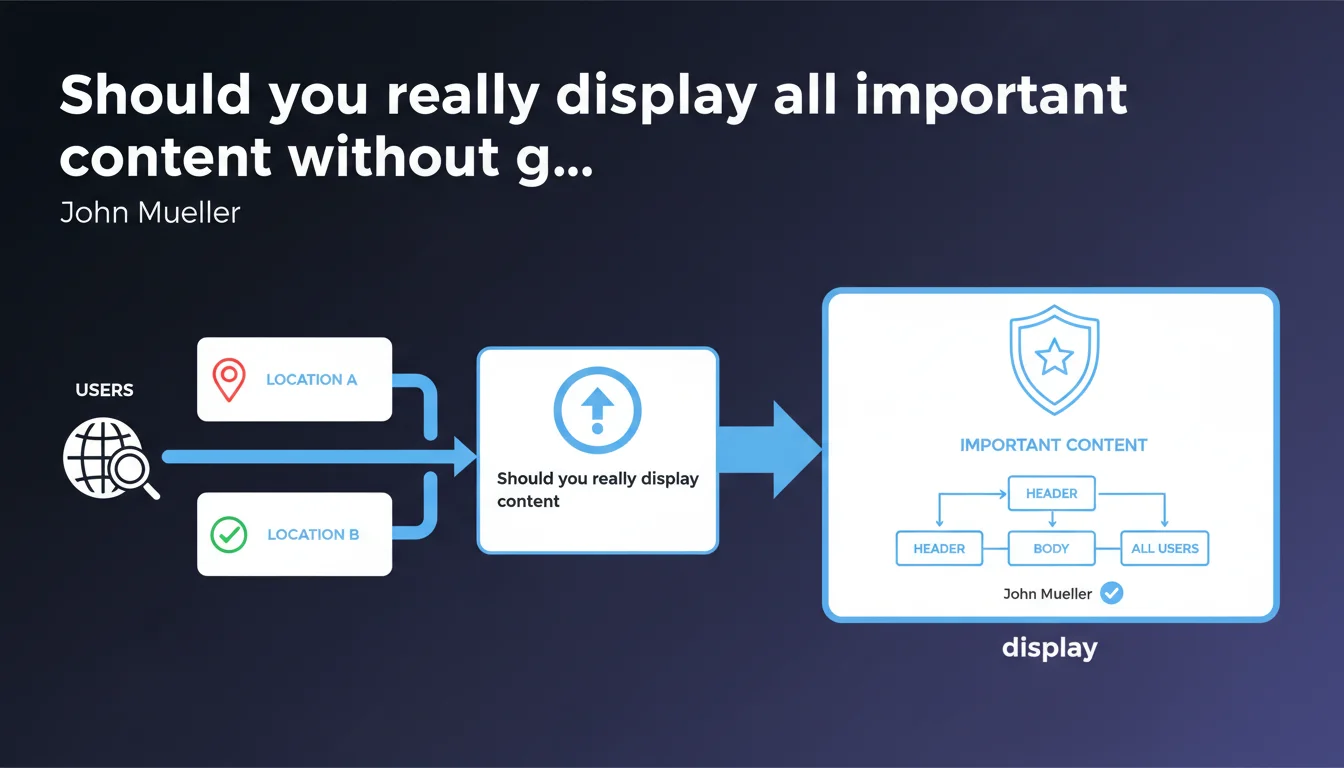

Google requires that all content deemed important be visible in the HTML by default, without requiring geolocation, JavaScript, or user interaction. This statement targets sites that hide strategic content behind IP detection or popups. Concretely: if it matters for SEO, it must be immediately accessible to everyone.

What you need to understand

Why does Google insist on default content visibility?

Google cannot always execute JavaScript correctly, and even when it does, content that is loaded dynamically or conditioned on geolocation may not be indexed with the same priority. John Mueller's message is clear: if an element influences your rankings (key text, internal links, structured data), it must appear in the raw HTML.

This recommendation particularly targets e-commerce sites and multi-regional platforms that display different content based on detected IP. Google crawls from US datacenters in the majority of cases, so anything that requires a French or British IP to display risks going unnoticed.

What types of content are covered by this rule?

All elements that carry SEO value: title and meta tags, product description text, internal links, breadcrumbs, and the schema.org markup that feeds rich snippets. If you hide these elements behind a script that waits for a user action, you're playing with fire.

Secondary content (marketing popups, chatbots, advertising banners) does not fall into this category. Google only cares about information that defines your pages' thematic relevance and structure.

Does this directive concern only text content?

No. Critical images (main product, hero visual), embedded videos, price tables, customer reviews: anything that provides informational value must be accessible. If a product image loads only after infinite scroll or a click, it risks never being associated with your page by Google Images.
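A quick way to spot images at risk is to parse the raw HTML and list every img tag that has no usable src. The sketch below uses only Python's standard library. It assumes the lazy-loading script stores the real URL in a data-src attribute, which is a common convention but not a universal one, and the names ImageAudit and audit_images are illustrative.

```python
from html.parser import HTMLParser


class ImageAudit(HTMLParser):
    """Collect <img> tags and flag those with no usable src in the raw HTML."""

    def __init__(self):
        super().__init__()
        self.crawlable = []  # images with a real src attribute
        self.deferred = []   # images whose URL only lives in a data-* attribute

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        src = (attributes.get("src") or "").strip()
        # An inline data: URI is treated as a placeholder, not a real source.
        if src and not src.startswith("data:"):
            self.crawlable.append(src)
        else:
            # Assumption: the lazy-loading script reads the URL from data-src.
            self.deferred.append(attributes.get("data-src") or "(no URL found)")


def audit_images(raw_html):
    """Return (crawlable, deferred) image URLs found in the raw HTML."""
    parser = ImageAudit()
    parser.feed(raw_html)
    return parser.crawlable, parser.deferred


if __name__ == "__main__":
    sample = '<img src="/hero.jpg"><img data-src="/gallery-2.jpg">'
    crawlable, deferred = audit_images(sample)
    print("In raw HTML:", crawlable)
    print("Only after JavaScript:", deferred)
```

Images that use the native loading="lazy" attribute keep their src in the markup, so they land in the crawlable list. Only script-driven lazy loading is flagged.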

- Important content must be present in the source HTML, not injected afterward

- Geolocation should not condition the display of SEO-critical elements

- User interactions (scroll, click, hover) should not block access to strategic content

- Google crawls predominantly from American IPs: test your site under these conditions

- JavaScript rendering is not guaranteed, even as Google improves in this area

SEO Expert opinion

Is this statement really new or just a reminder?

It's a reminder. For years, Google has repeated that content must be accessible in initial HTML. What's changing is the context of application: with the rise of SPAs (Single Page Applications), React/Vue/Angular frameworks, and advanced personalization strategies, more and more sites are violating this rule without realizing it.

The problem is that Google has become better at rendering JavaScript, but it is not infallible. As a result, some sites rely too heavily on this capability and end up with partially indexed pages. Mueller is setting the record straight.

In what cases can this rule be relaxed?

Let's be honest: if your important content is generated server-side (SSR) or pre-rendered (SSG), you're safe. The HTML delivered to Google already contains everything. But if you use pure client-side rendering (CSR), you're entirely dependent on Googlebot's ability to execute your scripts.
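To tell which situation a page is in, you can count the words visible in the raw HTML before any script runs. The sketch below is a rough heuristic, not a definitive test: the 50-word threshold is an arbitrary assumption to adjust per template, and the names VisibleText and looks_like_csr_shell are illustrative.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Accumulate text found outside script, style, noscript, and title."""

    SKIPPED = {"script", "style", "noscript", "title"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def looks_like_csr_shell(raw_html, min_words=50):
    """Return True when the raw HTML carries fewer than min_words visible words."""
    parser = VisibleText()
    parser.feed(raw_html)
    word_count = sum(len(chunk.split()) for chunk in parser.chunks)
    return word_count < min_words


if __name__ == "__main__":
    shell = '<html><body><div id="root"></div><script>render()</script></body></html>'
    print(looks_like_csr_shell(shell))
```

A page that returns True here delivers an almost empty document and depends on Googlebot's rendering for everything else.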

Sites serving different content by language or region can work around this with properly configured hreflang and dedicated URLs per market. But hiding entire sections behind IP detection without dedicated URLs? That's risky. [To verify]: Google has never published clear data on the success rate of JavaScript rendering by sector or site type.

What do we see in the field that contradicts this recommendation?

We regularly see well-ranked sites that lazy-load their product descriptions aggressively or display different content based on User-Agent. Some get away with it because their domain authority compensates, others because Google manages to render the JavaScript, but it's Russian roulette.

Practical impact and recommendations

What should you check immediately on your site?

Test your site with the URL inspection tool in Google Search Console. Compare the HTML as rendered by Google with what a regular user sees. If crucial elements are missing in the Googlebot version, that's a red flag.

Also use tools like Screaming Frog in "rendered JavaScript" vs "raw HTML" mode to identify gaps. If your H1 tags, internal links, or schema.org only appear in the JS version, you have a problem.
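If you want to script the same comparison, the sketch below counts H1 tags, internal links, and JSON-LD blocks in two HTML strings and reports what exists only in the rendered version. It assumes you already have both strings, for example the page source on one side and the rendered HTML copied from the URL inspection tool or a headless browser on the other. The function names and the example.com URL are illustrative.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class SeoSignals(HTMLParser):
    """Count H1 tags, internal links, and JSON-LD blocks in one HTML document."""

    def __init__(self, page_url):
        super().__init__()
        self.page_url = page_url
        self.host = urlparse(page_url).netloc
        self.h1_count = 0
        self.internal_links = set()
        self.json_ld_blocks = 0

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "h1":
            self.h1_count += 1
        elif tag == "a" and attributes.get("href"):
            # Resolve relative links, then keep only those on the same host.
            absolute = urljoin(self.page_url, attributes["href"])
            if urlparse(absolute).netloc == self.host:
                self.internal_links.add(absolute)
        elif tag == "script" and attributes.get("type") == "application/ld+json":
            self.json_ld_blocks += 1


def extract_signals(html, page_url):
    parser = SeoSignals(page_url)
    parser.feed(html)
    return {
        "h1": parser.h1_count,
        "internal_links": len(parser.internal_links),
        "json_ld": parser.json_ld_blocks,
    }


def signals_missing_from_raw(raw_html, rendered_html, page_url):
    """Return the signals that are more numerous after rendering than in raw HTML."""
    raw = extract_signals(raw_html, page_url)
    rendered = extract_signals(rendered_html, page_url)
    return {
        name: rendered[name] - raw[name]
        for name in raw
        if rendered[name] > raw[name]
    }


if __name__ == "__main__":
    raw = '<html><body><div id="app"></div></body></html>'
    rendered = '<html><body><h1>Shoes</h1><a href="/sale">Sale</a></body></html>'
    print(signals_missing_from_raw(raw, rendered, "https://example.com/shoes"))
```

An empty result means the raw HTML already carries as many of these signals as the rendered page. Any non-empty entry is content that depends on JavaScript.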

How do you fix a site that hides important content?

Switch to Server-Side Rendering (SSR) or static pre-rendering if you're using React, Vue, or Angular. Next.js, Nuxt.js, and other modern frameworks make this transition easier. The goal: the initial HTML must contain all SEO-critical content before any JavaScript runs.

For multi-regional sites, serve distinct URLs by market (/fr/, /en/, /de/) rather than modifying content dynamically based on IP. Configure hreflang properly and let Google index each version.
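As an illustration, the hreflang annotations for one page can be generated from a simple market map. This is a minimal sketch: the origin, the path, the market prefixes, and the choice of the English version as x-default are all assumptions made for the example, and hreflang_tags is a hypothetical helper name.

```python
from html import escape


def hreflang_tags(origin, path, markets, default_market):
    """Build the <link rel="alternate"> tags for one page across all markets.

    markets maps an hreflang code (e.g. "fr") to a URL prefix (e.g. "/fr").
    default_market is the code whose URL is also used for x-default.
    """
    tags = []
    for code, prefix in markets.items():
        href = f"{origin}{prefix}{path}"
        tags.append(
            f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(href)}">'
        )
    # x-default points visitors with no matching language to a fallback version.
    fallback = f"{origin}{markets[default_market]}{path}"
    tags.append(
        f'<link rel="alternate" hreflang="x-default" href="{escape(fallback)}">'
    )
    return tags


if __name__ == "__main__":
    markets = {"fr": "/fr", "en": "/en", "de": "/de"}
    for tag in hreflang_tags("https://example.com", "/shoes/", markets, "en"):
        print(tag)
```

The same full set of tags, including the self-referencing one, should appear in the head of every regional version so that the annotations are reciprocal.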

- Audit source HTML vs final render (Search Console, Screaming Frog, headless tools)

- Identify all strategic content loaded only in client-side JavaScript

- Migrate to SSR/SSG if your current stack relies on pure CSR

- Remove geolocation conditions for SEO-critical content

- Test your site from a US IP (via VPN) to approximate a Googlebot crawl

- Verify that images, videos, and conversion elements are present in the initial DOM

- Configure hreflang and dedicated URLs for regional variants

❓ Frequently Asked Questions

Does Google crawl and index JavaScript correctly in 2025?

Can you display different content based on location without SEO risk?

Do popups and cookie banners also need to be visible by default?

How do you test whether Googlebot sees all of my important content?

Does lazy loading images cause problems for SEO?

Other SEO insights extracted from this same Google Search Central video · published on 12/10/2022
