Official statement

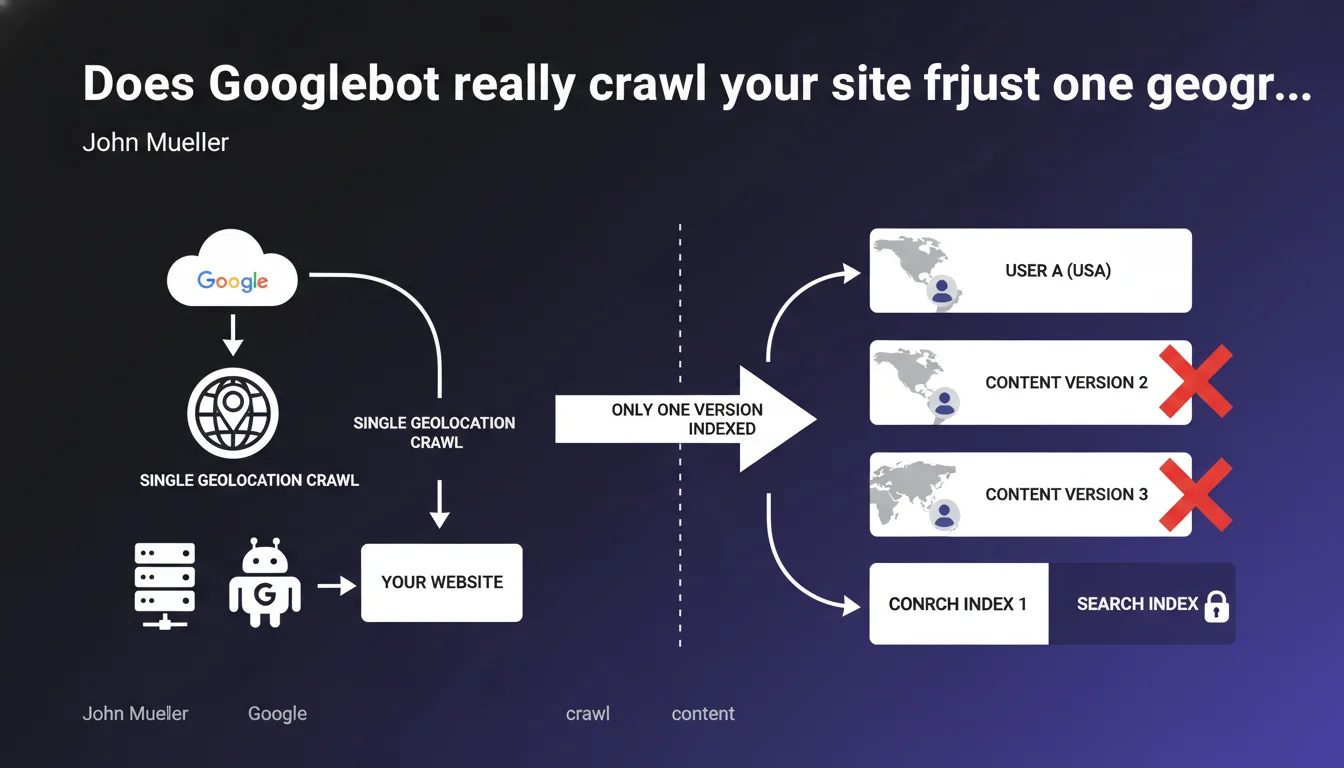

Googlebot crawls and indexes a website from a single geographic location. If your site displays different content based on user geolocation, only one version will be visible in search results. For multi-country or geo-targeted sites, this changes everything.

What you need to understand

What does this concretely mean for indexation?

When Mueller states that Googlebot crawls from a single geographic location, he clarifies a crucial point: Google will not systematically explore all geographic variants of your content. If your site uses IP geolocation to serve different content to a French visitor and an American visitor, Googlebot will only see one of these versions.

The direct consequence? If you're counting on geographic cloaking to index multiple versions of the same page, you're at an impasse. Google will index the version it sees from its default crawl location — typically the United States for most sites. The other versions will remain invisible in the index.

Why does Google operate this way?

The answer lies in crawl efficiency. Crawling every URL from multiple locations would multiply the required crawl budget by the number of locations. For Google, it's a matter of resources: why crawl 5 geo-localized versions of the same URL when you can index just one?

This approach creates problems for sites that rely on geolocation as their only method of differentiation. Google prioritizes other signals — such as hreflang tags or distinct domains/subdomains — to understand geographic variants. The message is clear: do not rely on IP geolocation to index multiple versions.

What are the recommended alternatives?

Google has been pushing for explicit rather than implicit solutions for years. Hreflang tags allow you to signal different language or regional versions of a page while keeping distinct URLs. Subdomains or subdirectories by country (fr.example.com or example.com/fr/) provide a clear structure that Googlebot can explore without ambiguity.
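To make the idea concrete, here is a minimal sketch of how the hreflang annotations for one page could be generated. The function name `hreflang_links`, the locale codes, and the example.com URLs are illustrative assumptions, not something taken from Google's statement.

```python
from html import escape


def hreflang_links(alternates, x_default=None):
    """Build <link rel="alternate"> tags for every version of a page.

    alternates maps an hreflang code (for example "fr-fr") to the absolute
    URL of that version. The same list of tags is meant to be placed in the
    <head> of each version, which also covers the self-reference.
    """
    tags = [
        f'<link rel="alternate" hreflang="{escape(code)}" href="{escape(url)}" />'
        for code, url in sorted(alternates.items())
    ]
    if x_default:
        # Optional fallback for visitors who match none of the listed locales.
        tags.append(
            f'<link rel="alternate" hreflang="x-default" href="{escape(x_default)}" />'
        )
    return tags


# Hypothetical URLs, used only for illustration.
product_versions = {
    "fr-fr": "https://example.com/fr/product",
    "en-us": "https://example.com/us/product",
}
for tag in hreflang_links(product_versions, x_default="https://example.com/product"):
    print(tag)
```

Because each version lives at its own URL, Googlebot can fetch all of them from a single location; the annotations only describe how they relate to each other.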

- Googlebot generally crawls from a single geographic location (often the United States)

- Content displayed via IP geolocation will not be fully indexed

- Only the version visible from Google's crawl location will appear in the index

- Hreflang and distinct URL structures are the recommended solutions

- This limitation particularly affects e-commerce sites with geo-localized pricing

SEO Expert opinion

Is this statement consistent with real-world observations?

Yes and no. On paper, this claim corresponds to what we observe: Google indeed indexes one main version per URL. But reality is more nuanced. [To verify]: there are documented cases where Google appears to crawl certain pages from multiple geographically distributed IPs, particularly for major sites or during algorithmic testing phases.

Mueller's phrasing — "generally" — leaves room for interpretation. Does Google sometimes crawl from other locations to verify content consistency? Public data is lacking to definitively settle this. What is certain: you cannot rely on multi-location crawling. It's the exception, not the rule.

What are the gray areas of this statement?

Mueller doesn't specify how Google handles sites using a mix of signals — IP geolocation + hreflang + conditional 302 redirects. In practice, these hybrid configurations often create more problems than they solve, with versions cannibalizing indexation or misdirected redirects.

Another unclear point: what about country-specific Google versions (google.fr, google.de, etc.) and geographic targeting in Google Search Console? If you explicitly target a local market via GSC and hreflang tags, does that trigger a crawl from that region? Official documentation remains vague. Experience shows that geographic targeting in GSC influences ranking, not necessarily crawling.

In which cases does this rule really cause problems?

International e-commerce sites with different prices by market are hit hardest. If your site displays €99 in France and $120 in the United States via geolocation, Google will likely only index the American version. Result: your price snippets in French SERPs display incorrect data.

News or content sites legally restricted by region encounter the same issue. If you block certain articles due to copyright restrictions based on geolocation, Google might index an incomplete version or display 403/404s depending on its crawl location. Let's be honest: this Google approach simplifies its infrastructure, but it forces webmasters to adapt their architecture — not the other way around.

Practical impact and recommendations

What should you do if you serve geo-localized content?

First step: immediately stop relying on IP geolocation as your only method of content differentiation. If you have distinct versions for different countries, create separate URLs — subdomains (fr.site.com), subdirectories (/fr/), or ccTLDs (site.fr).

Next, correctly implement hreflang tags to signal to Google the relationships between these versions. Each page should point to its language/regional equivalents, including itself. Then verify in Search Console that Google detects these signals without errors.
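The self-reference and return-link requirements can be checked before deployment. The sketch below assumes you have already collected each page's hreflang annotations into a dictionary (from a crawl or from your templates). The `hreflang_errors` helper and the sample URLs are hypothetical.

```python
def hreflang_errors(annotations):
    """Report missing self-references and missing return links.

    annotations has the shape {page_url: {hreflang_code: target_url}}.
    """
    errors = []
    for page, alternates in annotations.items():
        # Each page should list itself among its alternates.
        if page not in alternates.values():
            errors.append(f"{page}: missing self-reference")
        for code, target in alternates.items():
            if target == page:
                continue
            back = annotations.get(target)
            if back is None:
                errors.append(f"{page}: {code} target {target} has no annotations")
            elif page not in back.values():
                # The target version does not point back to this page.
                errors.append(f"{page}: {target} does not link back")
    return errors


# Hypothetical site where the English page forgets to reference the French one.
site = {
    "https://example.com/fr/": {
        "fr": "https://example.com/fr/",
        "en": "https://example.com/en/",
    },
    "https://example.com/en/": {
        "en": "https://example.com/en/",
    },
}
for problem in hreflang_errors(site):
    print(problem)
```

A check like this complements Search Console rather than replacing it: it only confirms that your own annotations are consistent, not that Google has processed them.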

How to verify that Google indexes the right version?

Use the URL Inspection tool in Search Console for each geographic version of your key pages. Look at the HTML code rendered by Googlebot: is it really the version you want indexed? If you spot inconsistencies, it's probably a poorly managed geolocation problem.

Also compare your snippets in SERPs from different countries (using VPNs or Google's region preview tool). If the prices, descriptions, or content displayed don't match the local market, you have a geo-indexed content problem.

What mistakes must you absolutely avoid?

Never combine IP geolocation with automatic 302 redirects to local versions. Google may interpret this as cloaking, especially if content varies significantly. If you want to steer visitors toward a local version, use suggestion banners ("It looks like you're in France, would you like to see our French site?") rather than forced redirects.
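As a rough illustration of the banner approach, the server can always return the requested URL and merely attach an optional suggestion. In this sketch the `LOCAL_SITES` mapping, the `banner_for` function, and the URLs are assumptions, and the visitor's country is presumed to come from whatever geolocation service you already use.

```python
# Hypothetical mapping from ISO country code to the matching local site root.
LOCAL_SITES = {
    "FR": "https://example.com/fr/",
    "US": "https://example.com/us/",
}


def banner_for(visitor_country, current_url):
    """Return banner data suggesting a local site, or None.

    The requested page is served unchanged in every case, so Googlebot and
    human visitors receive the same content at the same URL.
    """
    local_url = LOCAL_SITES.get(visitor_country)
    if local_url is None or current_url.startswith(local_url):
        # No local site for this country, or the visitor is already on it.
        return None
    return {"country": visitor_country, "suggested_url": local_url}


print(banner_for("FR", "https://example.com/us/product"))
print(banner_for("FR", "https://example.com/fr/product"))
```

The design choice is that geolocation only ever adds an optional hint. It never decides which content is returned for a given URL.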

Also avoid serving radically different content without distinct URLs. If your /product page displays a smartphone at €699 for France and a laptop at $999 for the United States based on IP, you're outside the guidelines. That's disguised cloaking.

- Create distinct URLs for each geographic or language version

- Implement hreflang tags in a bidirectional and complete manner

- Verify in Search Console that Google indexes the correct versions

- Test snippets from different locations to detect inconsistencies

- Replace automatic redirects with user suggestions

- Regularly audit server logs to identify Googlebot's crawl location

- Clearly document your multi-country architecture in a technical specs file
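For the log audit in the checklist, a first pass could simply count requests whose user agent claims to be Googlebot, grouped by IP address. This sketch assumes access logs in the common "combined" format, where the user agent is the last quoted field. The sample lines are invented. A user-agent string can be spoofed, so the resulting IPs should still be verified (Google documents a reverse DNS check), and mapping an IP to a country requires a GeoIP database that is not shown here.

```python
import re
from collections import Counter

# First token is the client IP; the last quoted field is the user agent.
LOG_LINE = re.compile(r'^(?P<ip>\S+) .*"(?P<agent>[^"]*)"\s*$')


def googlebot_hits_by_ip(lines):
    """Count log lines per IP whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("ip")] += 1
    return hits


# Invented sample lines in combined log format.
sample = [
    '66.249.66.1 - - [10/Dec/2022:10:00:00 +0000] "GET /fr/ HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Dec/2022:10:00:05 +0000] "GET / HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
    '66.249.66.1 - - [10/Dec/2022:10:01:00 +0000] "GET /us/ HTTP/1.1" 200 498 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
for ip, count in googlebot_hits_by_ip(sample).items():
    print(ip, count)
```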

❓ Frequently Asked Questions

Does Google crawl from the United States for all sites?

Are hreflang tags enough without distinct URLs?

What happens if I block the US-based Googlebot through geolocation?

How can I tell which location Google crawls my site from?

Does geographic targeting in Search Console change the crawl location?

🎥 From the same video 3

Other SEO insights extracted from this same Google Search Central video · published on 12/10/2022
