Official statement

What you need to understand

What exactly is rendering and why does it matter for SEO?

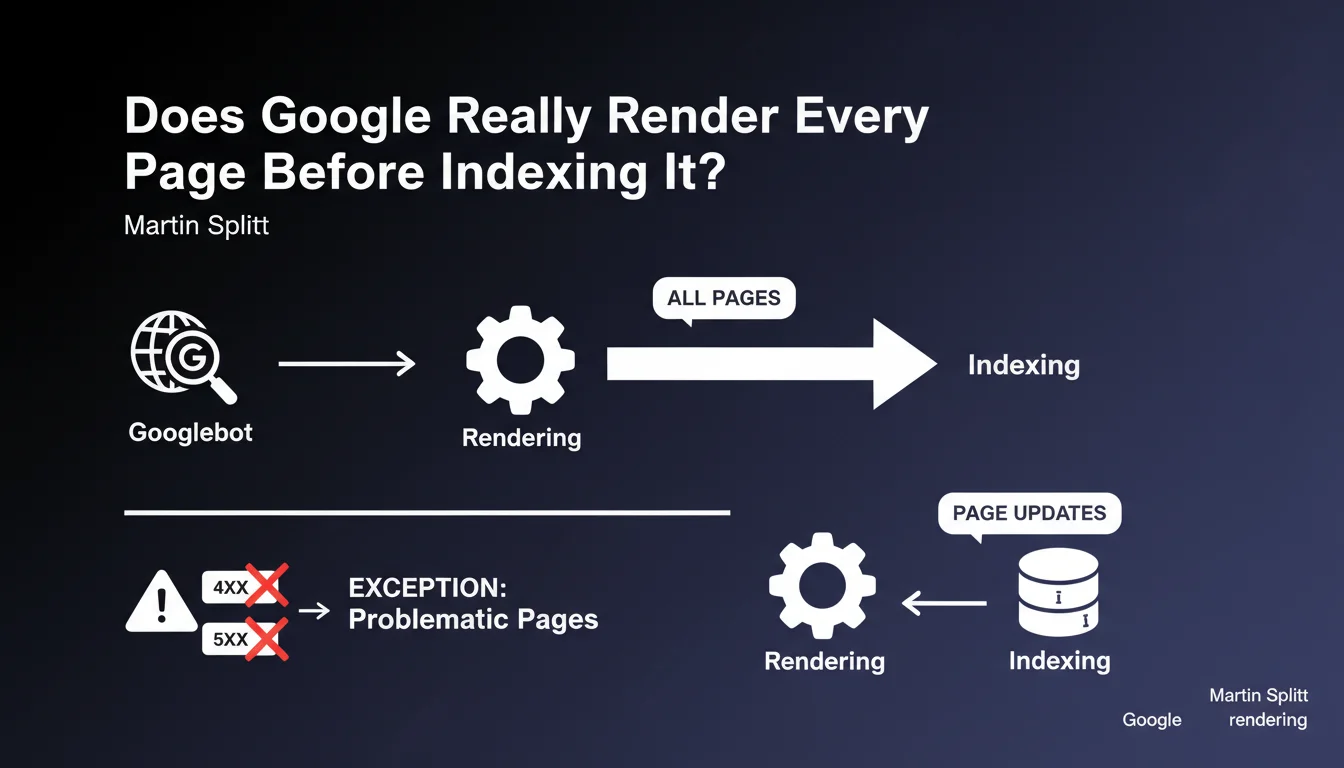

Rendering is the process by which Googlebot executes a page's JavaScript to build the final content as a user would see it. This step is crucial because many modern websites use JavaScript frameworks (React, Vue, Angular) that generate content dynamically.

According to Martin Splitt, if a page appears in Google's index, it means it has necessarily been rendered. This statement confirms that Google no longer relies solely on raw HTML to index pages.

What are the exceptions to this general rule?

Martin Splitt specifies that this rule applies to almost all pages, with a few notable exceptions. Pages returning 4XX errors (client errors such as 404 Not Found) or 5XX errors (server errors) are not always fully rendered.

Gary Illyes has also mentioned another exception: news content can sometimes be indexed before rendering for speed reasons. This nuance matters for news sites that need very fast indexing.

How does this statement impact current SEO strategy?

This official confirmation means that JavaScript sites are, in principle, treated on an equal footing with traditional HTML sites. The myth that Google does not index JavaScript content is therefore outdated.

- Rendering is systematic for all indexed pages (with the exceptions noted above)

- 4XX/5XX errors can block the rendering process

- News content can benefit from accelerated indexing before rendering

- Updating pages in the index follows the same rendering process

- Modern JavaScript frameworks are now fully compatible with Google indexing

SEO Expert opinion

Is this statement consistent with practices observed in the field?

My 15 years of SEO experience partially confirm this statement. In the majority of cases, well-built JavaScript pages do indeed index without major problems. However, I still regularly observe differences between rendered content and indexed content.

Rendering delay remains a critical variable. Google can take several days or even weeks before proceeding with complete rendering of a page, particularly on sites with low authority. During this period, only raw HTML may be taken into account.

What important nuances should be added to this rule?

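The statement "indexed = rendered" deserves several technical clarifications. First, a rendering budget does exist in practice, even though Google does not officially document it. Large or slow sites may see certain pages only partially indexed.

Second, rendering quality varies. Googlebot has run an evergreen (regularly updated) version of Chromium since 2019, so incompatibilities with modern JavaScript are less common than they once were, but they still occur: Googlebot declines permission prompts and does not keep cookies or local storage between page loads. Feature detection, polyfills where needed, and graceful fallbacks therefore remain essential.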

In what cases can this rule be contradicted?

I have identified several scenarios where indexing actually precedes complete rendering. High-authority news sites benefit from near-instant indexing, even before JavaScript execution, to ensure result freshness.

Pages with critical JavaScript errors can also be indexed without complete rendering. Google will then index the basic HTML, ignoring the defective dynamic content. Finally, during massive crawl spikes, certain pages can be temporarily indexed in "raw HTML" mode before subsequent rendering.

Practical impact and recommendations

What should you do concretely to optimize your site's rendering?

The absolute priority is to ensure that critical content is available in the initial HTML, without relying solely on JavaScript. This hybrid approach (Server-Side Rendering or Static Generation) ensures optimal indexing even in case of rendering problems.
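
One way to check this is to parse the raw HTML response, without executing any JavaScript, and look for the phrases you consider critical. The sketch below is illustrative only: the function name `missing_from_initial_html` and the two sample pages are assumptions made for this example, and the standard-library parser only approximates what a crawler sees before rendering.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collect text outside <script> and <style>, i.e. text present without running JS."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a skipped element.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_from_initial_html(raw_html, critical_phrases):
    """Return the phrases that do not appear in the raw (unrendered) HTML text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    # Normalize whitespace and case before searching.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in critical_phrases if p.lower() not in text]


# Hypothetical pages: a client-rendered shell versus a server-rendered page.
spa_shell = '<html><body><div id="root"></div><script>render("Blue suede shoes")</script></body></html>'
ssr_page = "<html><body><h1>Blue suede shoes</h1><p>In stock</p></body></html>"

print(missing_from_initial_html(spa_shell, ["Blue suede shoes"]))
print(missing_from_initial_html(ssr_page, ["Blue suede shoes", "In stock"]))
```

A non-empty result for a template means that content exists only after JavaScript runs, which makes that template a candidate for SSR or static generation.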

Use Search Console and particularly the "URL Inspection" tool to check how Google renders your pages. Systematically compare the rendered version with your actual page to identify discrepancies. Test particularly navigation elements, internal links, and main textual content.

Optimize JavaScript execution speed to facilitate Googlebot's work. Reduce JS bundle weight, eliminate dead code, and favor lazy loading for non-critical elements. Fast rendering promotes more frequent and comprehensive crawling.

What critical errors should you absolutely avoid?

Never block JavaScript and CSS resources in robots.txt. This classic error prevents Google from rendering and can seriously compromise your indexing. Regularly check your robots.txt directives to avoid this trap.
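
A quick check along these lines can be scripted with Python's standard `urllib.robotparser`. This is a rough sketch under assumptions: the robots.txt content and resource URLs are invented for the example, and the standard-library parser uses simple prefix matching, so it does not reproduce Google's handling of `*` and `$` wildcards. Treat the result as a first pass, not as a faithful simulation of Googlebot.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, resource_urls, user_agent="Googlebot"):
    """Return the resource URLs that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt that blocks a script directory.
robots_txt = """\
User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""

# Hypothetical JS and CSS resources needed to render the page.
resources = [
    "https://www.example.com/assets/js/app.js",
    "https://www.example.com/assets/css/site.css",
]

print(blocked_resources(robots_txt, resources))
```

Any URL returned here is a resource Googlebot would be told not to fetch, so the rendered page may be missing whatever that script or stylesheet provides.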

Avoid pure Single Page Applications (SPAs) without a server-side rendering strategy. Even if Google can theoretically index them, rendering delays are often prohibitive and user experience (Core Web Vitals) suffers.

How can you verify that your site follows rendering best practices?

- Audit your pages with the "URL Inspection" tool in Search Console for each major template

- Compare source HTML and rendered version to identify content generated in JavaScript

- Verify that critical internal links appear in the initial HTML, not only in JS

- Test your pages with JavaScript disabled in a browser to identify missing critical content

- Monitor crawl responses in Search Console (Settings > Crawl stats) and review JavaScript console messages in the URL Inspection live test

- Implement Server-Side Rendering (SSR) or static generation for priority content

- Optimize Core Web Vitals, particularly LCP, which heavy client-side rendering tends to degrade

- Establish regular monitoring of delays between publication and complete indexing
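
The internal-link check in the list above can be partly automated by comparing the links in the initial HTML with those in the rendered HTML. The sketch below assumes you already have both versions as strings (for example, the rendered HTML copied from the URL Inspection tool or produced by a headless browser). The function names and sample markup are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html, page_url):
    """Return the set of absolute same-host URLs linked from html."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    host = urlparse(page_url).netloc
    absolute = (urljoin(page_url, href) for href in collector.hrefs)
    return {url for url in absolute if urlparse(url).netloc == host}


def links_only_in_rendered(raw_html, rendered_html, page_url):
    """Internal links that exist after rendering but not in the initial HTML."""
    return sorted(internal_links(rendered_html, page_url) - internal_links(raw_html, page_url))


# Hypothetical page: one link is server-rendered, the others are injected by JS.
page_url = "https://www.example.com/shop/"
raw_html = '<nav><a href="/shop/shoes">Shoes</a></nav><div id="app"></div>'
rendered_html = (
    '<nav><a href="/shop/shoes">Shoes</a></nav>'
    '<div id="app"><a href="/shop/hats">Hats</a>'
    '<a href="https://partner.example.org/">Partner</a></div>'
)

print(links_only_in_rendered(raw_html, rendered_html, page_url))
```

Links that appear only in the rendered version depend on JavaScript execution to be discovered, so they are the ones to move into the initial HTML first.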
