Official statement

What you need to understand

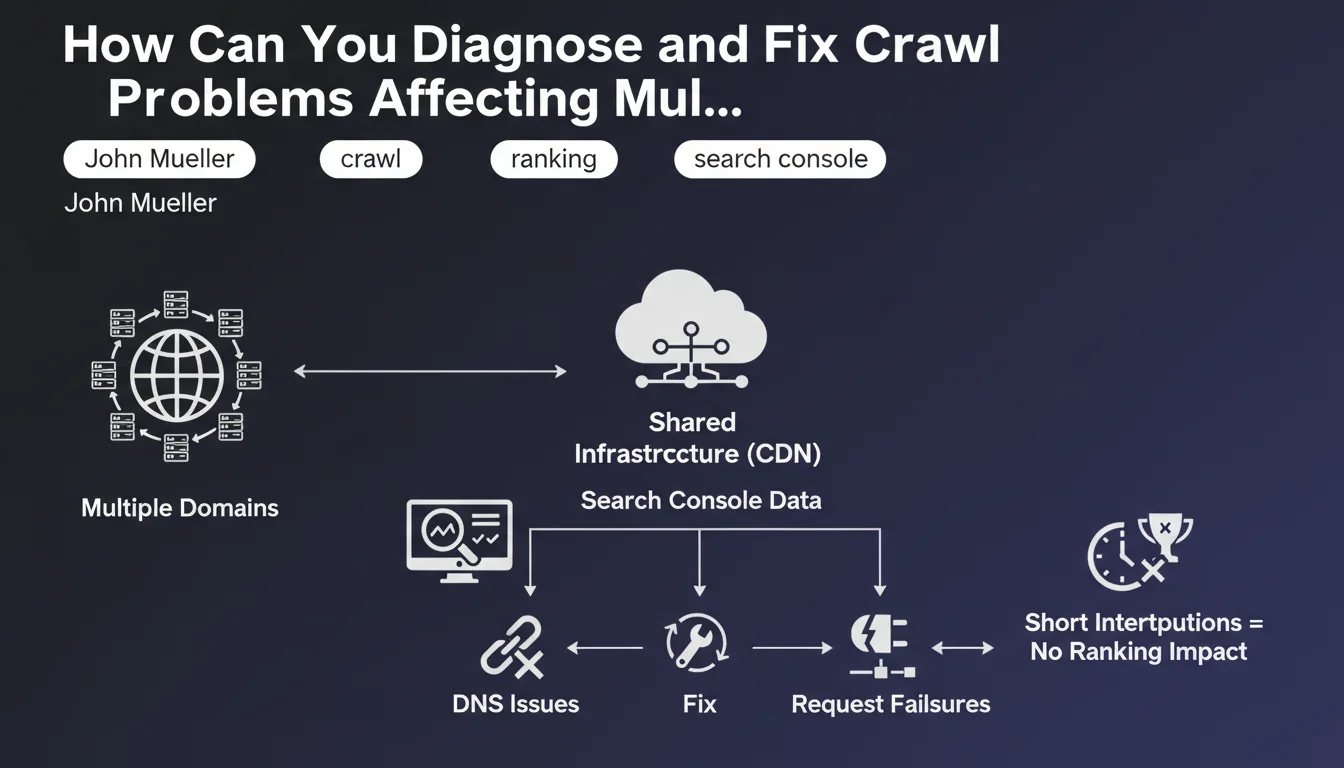

When several domains experience crawl issues at the same time, it is rarely a coincidence. Google points to a common cause in shared technical infrastructure.

Content Delivery Networks (CDNs), shared DNS servers and shared hosting providers are often the common point of failure. Because many sites depend on the same distributed infrastructure, a localized or general outage there affects all of them at once.
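
To narrow down the common point of failure, you can intersect the dependencies of the domains that are currently failing. The sketch below is a minimal illustration, assuming you keep a hand-maintained map of each domain's CDN, DNS and hosting providers. `DEPENDENCIES` and `shared_dependencies` are hypothetical names, and the domains and providers are placeholders.

```python
# Hypothetical dependency map: the third-party services each domain relies on.
# Domain and provider names are placeholders; replace them with your own inventory.
DEPENDENCIES: dict[str, set[str]] = {
    "shop.example.com": {"cdn:provider-a", "dns:provider-x", "host:provider-m"},
    "blog.example.com": {"cdn:provider-a", "dns:provider-y", "host:provider-m"},
    "docs.example.org": {"cdn:provider-b", "dns:provider-x", "host:provider-n"},
}


def shared_dependencies(failing: list[str], deps: dict[str, set[str]]) -> set[str]:
    """Return the services used by every failing domain (candidate common causes)."""
    dependency_sets = [deps[domain] for domain in failing if domain in deps]
    if not dependency_sets:
        return set()
    # The intersection keeps only the services that all failing domains share.
    return set.intersection(*dependency_sets)


if __name__ == "__main__":
    suspects = shared_dependencies(["shop.example.com", "blog.example.com"], DEPENDENCIES)
    print(sorted(suspects))  # ['cdn:provider-a', 'host:provider-m']
```

A service that appears in every failing domain's set is a candidate, not proof. A localized CDN outage, for instance, may spare other domains that use the same provider.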

John Mueller offers a reassuring clarification: short interruptions generally do not affect rankings. Googlebot is designed to handle temporary unavailability and automatically retries later.

- Analyze Search Console to identify patterns in crawl errors

- Check shared infrastructures (CDN, DNS, hosting) as a priority

- Examine DNS request failures that may block access to resources

- Short outages do not permanently penalize SEO

- Act quickly on persistent issues to avoid gradual deindexing
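
A quick way to spot DNS request failures is to try resolving every hostname your pages depend on (your own domains, CDN hostnames, third-party asset hosts). The sketch below is a hypothetical helper, not an existing tool. It relies on Python's standard `socket.getaddrinfo`, and the resolver is a parameter so the logic can be tested offline. It only reflects the resolver of the machine it runs on, so it complements worldwide checks rather than replacing them.

```python
import socket
from typing import Callable, Iterable, Optional


def check_resolution(
    hostnames: Iterable[str],
    resolve: Callable = socket.getaddrinfo,
) -> dict[str, Optional[str]]:
    """Map each hostname to None if it resolves, or to the resolver's error message."""
    results: dict[str, Optional[str]] = {}
    for host in hostnames:
        try:
            resolve(host, 443)  # the port only affects the returned socket addresses
            results[host] = None
        except socket.gaierror as exc:
            results[host] = str(exc)
    return results


if __name__ == "__main__":
    # ".invalid" is a reserved top-level domain that never resolves: a negative control.
    for host, error in check_resolution(["localhost", "example.invalid"]).items():
        status = "OK" if error is None else f"FAILED ({error})"
        print(f"{host}: {status}")
```

Running it on a schedule and logging the failures gives you a timeline you can later compare with crawl errors in Search Console.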

SEO Expert opinion

This statement matches what is observed in practice: in about 80% of cases, multi-domain crawl issues originate from shared infrastructure, particularly during CDN migrations or hosting-side system updates.

The key nuance is what counts as a "short interruption". Field observations suggest that Google tolerates outages of a few hours quite well. However, issues that recur over several days, even if each one is brief, can trigger a gradual reduction in crawl budget and indirectly affect how new pages rank.
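
One way to apply this distinction to your own incident history is to classify outages by duration and by how many days they recur on. The sketch below is illustrative only: the thresholds (`MAX_SHORT_OUTAGE` of 4 hours, `RECURRING_DAYS` of 3) are assumptions loosely based on the observations above, not limits published by Google, and `assess_outages` is a hypothetical helper.

```python
from datetime import datetime, timedelta

# Hypothetical thresholds loosely based on field observations;
# Google does not publish official limits.
MAX_SHORT_OUTAGE = timedelta(hours=4)
RECURRING_DAYS = 3


def assess_outages(outages: list[tuple[datetime, datetime]]) -> str:
    """Classify (start, end) outages as 'none', 'short', 'prolonged' or 'recurring'."""
    if not outages:
        return "none"
    # Distinct calendar days on which an outage started.
    affected_days = {start.date() for start, _ in outages}
    if len(affected_days) >= RECURRING_DAYS:
        return "recurring"  # even brief incidents, repeated over several days
    longest = max(end - start for start, end in outages)
    if longest > MAX_SHORT_OUTAGE:
        return "prolonged"
    return "short"


if __name__ == "__main__":
    incidents = [
        (datetime(2024, 3, day, 2, 0), datetime(2024, 3, day, 2, 20))
        for day in (10, 11, 12)
    ]
    print(assess_outages(incidents))  # recurring
```

The "recurring" check runs first on purpose: three 20-minute outages on consecutive days are more worrying for crawl budget than a single longer one.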

The advice to use Search Console is sound, but you should also cross-reference it with server logs for a complete view. Googlebot requests can fail without the failure being immediately visible in the console, particularly with timeouts or bandwidth throttling.
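
As a starting point for that cross-referencing, you can count the status codes served to requests that identify as Googlebot. The sketch below assumes the Apache/nginx "combined" log format and treats 5xx errors, 429 (throttling) and nginx's non-standard 499 (the client closed the connection before the response was sent, which can indicate a timeout on the crawler's side) as failures. The function names are hypothetical. Because the user agent can be spoofed, Google recommends confirming genuine Googlebot traffic with a reverse DNS lookup, which this sketch does not do.

```python
import re
from collections import Counter
from typing import Iterable

# Apache/nginx "combined" log format (an assumption: adapt to your own format).
LINE_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"'
)

# Non-5xx codes counted as failures: 429 throttling, nginx's 499 client-closed request.
FAILURE_CODES = {429, 499}


def googlebot_statuses(lines: Iterable[str]) -> Counter:
    """Count HTTP status codes for requests whose user agent claims to be Googlebot."""
    statuses: Counter = Counter()
    for line in lines:
        match = LINE_RE.match(line)
        # User agents can be spoofed; verify real Googlebot IPs separately.
        if match and "Googlebot" in match["agent"]:
            statuses[int(match["status"])] += 1
    return statuses


def failure_share(statuses: Counter) -> float:
    """Fraction of Googlebot requests that failed or were throttled."""
    total = sum(statuses.values())
    failed = sum(n for code, n in statuses.items() if code >= 500 or code in FAILURE_CODES)
    return failed / total if total else 0.0


if __name__ == "__main__":
    ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
    sample = [
        f'192.0.2.10 - - [10/Mar/2024:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "{ua}"',
        f'192.0.2.10 - - [10/Mar/2024:10:00:05 +0000] "GET /a HTTP/1.1" 503 0 "-" "{ua}"',
        '198.51.100.7 - - [10/Mar/2024:10:00:06 +0000] "GET /b HTTP/1.1" 500 0 "-" "curl/8.0"',
        f'192.0.2.10 - - [10/Mar/2024:10:00:09 +0000] "GET /c HTTP/1.1" 499 0 "-" "{ua}"',
    ]
    counts = googlebot_statuses(sample)
    print(counts, f"{failure_share(counts):.0%}")
```

Comparing this failure share day by day with the crawl errors reported in Search Console shows whether the console is missing failures such as timeouts.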

Practical impact and recommendations

- Actively monitor Search Console: enable email notifications and check the Crawl Stats report regularly for spikes in crawl errors

- Map your technical dependencies: list all third-party services (CDN, DNS, WAF) used by your domains

- Set up external monitoring: use tools like UptimeRobot or Pingdom to detect outages before Google does

- Analyze temporal patterns: check if errors coincide with scheduled maintenance from your providers

- Test DNS resolution: use tools like DNSChecker to verify worldwide propagation

- Examine server logs: cross-reference with Search Console to identify blocked or slowed Googlebot requests

- Configure fallbacks: plan automatic failover mechanisms in case the primary CDN fails

- Document incidents: maintain an outage log to identify problematic providers

- Avoid simultaneous changes: do not migrate multiple critical services at the same time
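
For the temporal-pattern check, a small script can match error timestamps against the maintenance windows your providers announce, which also feeds the incident log. The sketch below is a hypothetical helper: it assumes you have collected error timestamps (from logs or an export) and copied maintenance windows from provider status pages, all as naive datetimes in the same timezone (UTC, for example).

```python
from collections import Counter
from datetime import datetime

# (provider name, window start, window end); all times assumed to share one timezone.
MaintenanceWindow = tuple[str, datetime, datetime]


def match_maintenance(
    errors: list[datetime], windows: list[MaintenanceWindow]
) -> tuple[Counter, list[datetime]]:
    """Count errors falling inside each provider's maintenance windows.

    Returns (errors per provider, errors that match no window).
    """
    per_provider: Counter = Counter()
    unexplained: list[datetime] = []
    for ts in errors:
        matched = [provider for provider, start, end in windows if start <= ts <= end]
        per_provider.update(matched)
        if not matched:
            unexplained.append(ts)
    return per_provider, unexplained


if __name__ == "__main__":
    windows = [
        ("cdn-provider-a", datetime(2024, 3, 10, 2, 0), datetime(2024, 3, 10, 4, 0)),
        ("dns-provider-x", datetime(2024, 3, 12, 22, 0), datetime(2024, 3, 12, 23, 30)),
    ]
    errors = [
        datetime(2024, 3, 10, 2, 30),
        datetime(2024, 3, 10, 3, 15),
        datetime(2024, 3, 11, 9, 0),
        datetime(2024, 3, 12, 22, 10),
    ]
    counts, other = match_maintenance(errors, windows)
    print(counts)  # Counter({'cdn-provider-a': 2, 'dns-provider-x': 1})
    print(other)   # [datetime.datetime(2024, 3, 11, 9, 0)]
```

Errors left in the "unexplained" list are the ones worth investigating first, since no scheduled maintenance accounts for them.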

In summary: multi-domain crawl issues call for a systemic approach focused on shared infrastructure. Responding quickly is crucial, but prevention remains the best strategy.

Analyzing these issues requires specialized expertise in web architecture and monitoring. Setting up robust monitoring, diagnosing failures precisely and optimizing technical dependencies can be complex to manage alone. For businesses running several strategic domains, an SEO agency specializing in technical SEO can provide proactive monitoring, professional tooling and experience in resolving critical incidents.
