Official statement

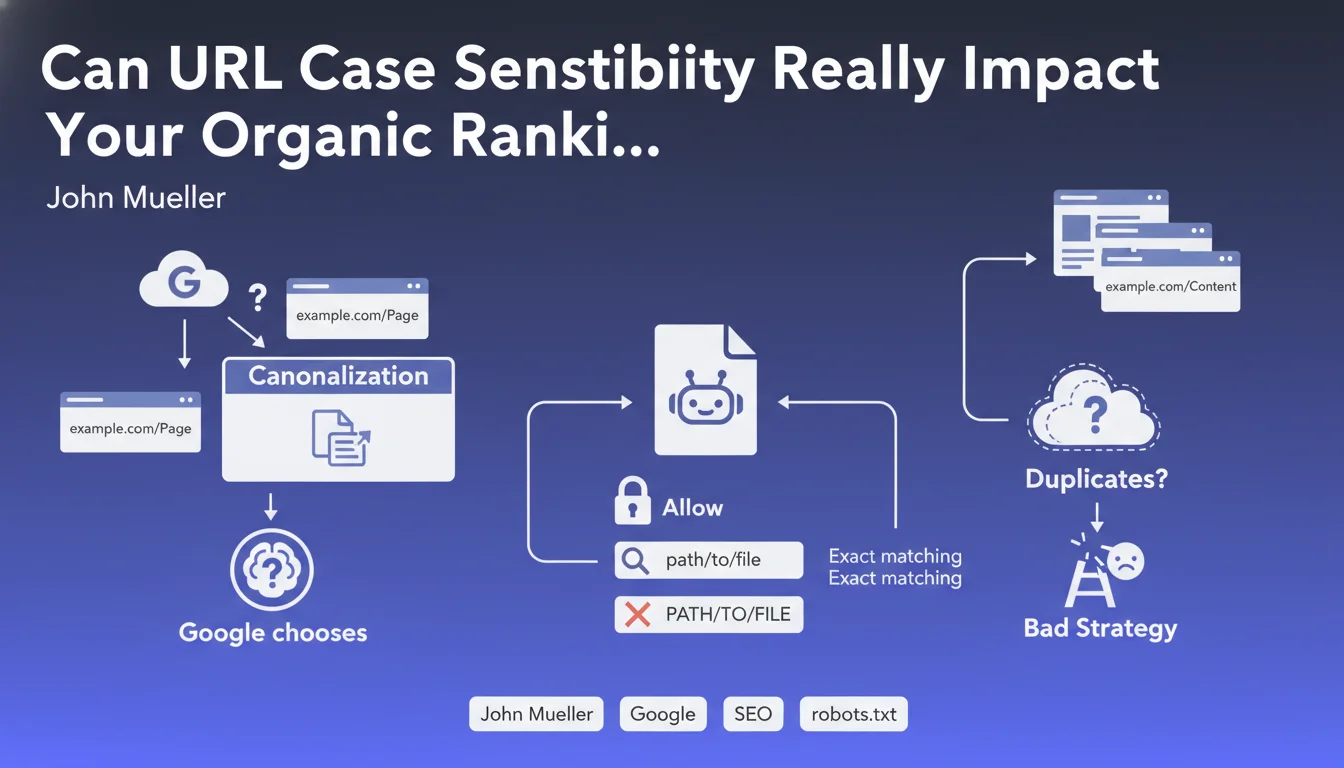

Consequently, if two URLs that differ only in case display the same content, Google will generally try to group them as duplicates. However, hoping that this grouping happens is not a viable SEO strategy. It is therefore highly recommended to use uppercase and lowercase consistently across all site URLs.

The same applies to the robots.txt file: a rule applies only when its case matches the URL exactly, so you must either write rules for every case variant or standardize your URLs.

What you need to understand

John Mueller highlights a technical principle that is often overlooked: URL case sensitivity plays a decisive role in several aspects of SEO. Contrary to common belief, Google does distinguish between uppercase and lowercase letters in URL paths. Only the scheme and the hostname are case-insensitive.

Concretely, two URLs that differ only in the case of their path (example.com/Page vs example.com/page) are technically two distinct URLs for search engines. Google will attempt to detect that they carry duplicate content and group them, but this outcome is not guaranteed.
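
As a rough illustration of which parts of a URL are affected, the sketch below compares URLs the way the paragraph above describes. The helper name `comparison_key` is hypothetical, and the comparison is a simplification based on general URL rules, not a description of Google's internal canonicalization.

```python
from urllib.parse import urlsplit


def comparison_key(url: str) -> tuple[str, str, str, str]:
    """Hypothetical helper: reduce a URL to the parts that matter for equality.

    Scheme and host are case-insensitive, so they are lowercased.
    Path and query are case-sensitive, so they are kept untouched.
    (Simplification: the whole netloc is lowercased, including any port.)
    """
    parts = urlsplit(url)
    return (parts.scheme.lower(), parts.netloc.lower(), parts.path, parts.query)


# A different case in the hostname does not create a new URL...
same_host = comparison_key("https://EXAMPLE.com/page") == comparison_key("https://example.com/page")

# ...but a different case in the path does.
same_path = comparison_key("https://example.com/Page") == comparison_key("https://example.com/page")

print(same_host, same_path)
```

Here `same_host` is true while `same_path` is false, which is why /Page and /page can end up competing as separate pages.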

This case sensitivity also applies to the robots.txt file, where each rule must exactly match the case of the URLs to be blocked or allowed. A directive blocking /Admin/ will not block /admin/.
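
The /Admin/ example can be checked with a minimal sketch that uses Python's standard-library `urllib.robotparser`. This parser is not Google's own robots.txt implementation; it is assumed here only to illustrate the same case-sensitive prefix matching. The rule set, the user agent string, and the helper name `is_allowed` are made up for the example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content with a capitalized directory name.
ROBOTS_TXT = """\
User-agent: *
Disallow: /Admin/
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Parse the robots.txt text and report whether the URL may be fetched."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# The rule matches the capitalized path, so this URL is blocked.
upper_allowed = is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/Admin/users")

# The lowercase variant does not match the rule, so it stays crawlable.
lower_allowed = is_allowed(ROBOTS_TXT, "Googlebot", "https://example.com/admin/users")

print(upper_allowed, lower_allowed)
```

Running the same check against the URLs that actually appear in your crawl or server logs is one way to spot rules that silently miss their target.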

- URL paths are case-sensitive for search engines (the hostname is not)

- Google may choose a different canonical version based on case variations

- The robots.txt file requires exact case matching

- Counting on Google to consolidate case variants automatically is not a reliable strategy

- Consistency is essential to avoid canonicalization issues

SEO Expert opinion

This statement confirms what SEO professionals regularly observe during technical audits: case inconsistencies generate duplication issues that fragment page authority. In practice, Google often manages to consolidate these variations, but at the cost of wasted crawl budget and temporary dilution of ranking signals.

Context matters: Windows servers are historically case-insensitive, while Linux/Unix servers typically treat paths as case-sensitive. This difference sometimes creates surprises during migrations or hosting changes, when URLs that used to be interchangeable suddenly become distinct.

The robots.txt aspect is even more critical: I've encountered situations where entire sections remained accessible to bots simply because the blocking rule used a different case than the actually crawled URLs. This is a risk vector for security and indexation strategy.

Practical impact and recommendations

- Immediately audit all your site URLs to identify existing case variations

- Implement 301 redirects from all variants to a single canonical version (ideally lowercase)

- Configure your web server to automatically force URLs to lowercase via rewrite rules

- Verify the robots.txt file and ensure each rule exactly matches the case of the crawled URLs

- Standardize internal links by systematically using the same case (lowercase recommended)

- Update the XML sitemap to include only lowercase canonical versions

- Monitor server logs to detect access with varied cases and correct them

- Train editorial teams to respect the chosen case convention when creating content

- Systematically test case variations after each deployment or migration

- Document the case policy in the project's technical guidelines
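
The audit and redirect steps in the list above can be sketched as follows. This is a minimal example, assuming lowercase is the chosen convention and that the crawled URLs are already available as a list (for instance exported from a crawler or from server logs). The function names and sample URLs are hypothetical. Query strings are deliberately left untouched because parameter values may be case-sensitive, and paths containing percent-encoded characters would need extra care.

```python
from collections import defaultdict
from urllib.parse import urlsplit, urlunsplit


def lowercase_url(url: str) -> str:
    """Lowercase scheme, host and path; keep query and fragment as they are."""
    parts = urlsplit(url)
    return urlunsplit((
        parts.scheme.lower(),
        parts.netloc.lower(),
        parts.path.lower(),
        parts.query,
        parts.fragment,
    ))


def find_case_variants(urls):
    """Group URLs that differ only by case and keep groups with several variants."""
    groups = defaultdict(set)
    for url in urls:
        groups[lowercase_url(url)].add(url)
    return {
        canonical: sorted(variants)
        for canonical, variants in groups.items()
        if len(variants) > 1
    }


def redirect_map(urls):
    """Map every non-lowercase URL to its lowercase target (301 candidates)."""
    return {url: lowercase_url(url) for url in urls if url != lowercase_url(url)}


# Hypothetical sample of crawled URLs.
crawled = [
    "https://example.com/Page",
    "https://example.com/page",
    "https://example.com/Blog/Post?ID=7",
    "https://example.com/contact",
]

variants = find_case_variants(crawled)
redirects = redirect_map(crawled)

for source, target in redirects.items():
    print(f"301: {source} -> {target}")
```

The resulting mapping can feed whatever redirect mechanism the server uses, and `find_case_variants` can be rerun after each deployment or migration as a regression check.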

Rigorous case management represents a fundamental yet often underestimated technical challenge. The impacts can be considerable: PageRank dilution, content duplication, crawl budget waste, and potential gaps in robots.txt directives.

Compliance requires a systematic approach that combines a thorough technical audit, server configuration, redirects to a single canonical version, and editorial governance. These changes touch critical parts of the web infrastructure, and mistakes in redirects or robots.txt rules can hurt visibility.

Given the complexity of these technical interventions and their potential ramifications, guidance from an experienced SEO agency helps secure the process, exhaustively identify all cases specific to your platform, and implement corrections with the rigor necessary to preserve and optimize your search rankings.
