Official statement

What you need to understand

What exactly do we mean by URL repetition?

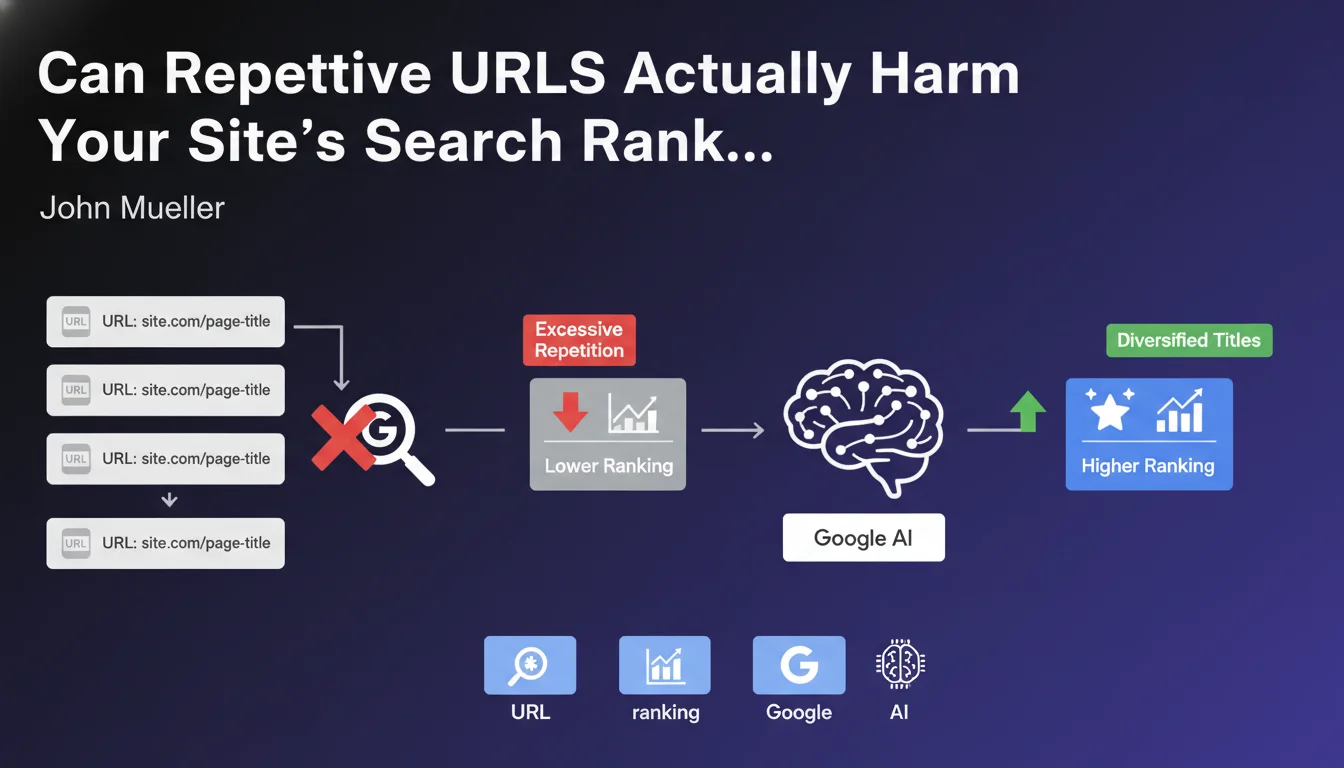

URL repetition refers to the excessive, recurring use of the same patterns, keywords or structures in your web addresses. A typical example is a site that creates thousands of pages whose URLs all contain the same terms, such as "blue-cheap-product", repeated in endless variations.

Google may then consider these pages as poorly differentiated and potentially problematic. The search engine might ignore some of these URLs during indexing, perceiving them as duplicate or low-value content.

Why would Google take this measure?

Google's objective is to provide diverse and relevant results to its users. When a site massively generates URLs with identical patterns, it resembles over-optimization techniques or automatic content generation.

The search engine seeks to detect potential manipulations of its index. Thousands of similar URLs can signal an attempt to artificially create volume without real added value for the user.

In what cases does this situation actually occur?

This issue particularly affects e-commerce sites with multiple filters, real estate sites generating infinite combinations, or platforms with poorly managed dynamic URL parameters.

Classified ad sites and content aggregators are also affected if they automatically create pages for every possible variation of a criterion.

- Avoid overly repetitive URL patterns across thousands of pages

- Google can ignore URLs perceived as redundant or low-value

- Diversifying URL structures is essential for indexation

- Sites with massive automatic generation are particularly exposed

- This measure aims to combat over-optimization and spam

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. Numerous SEO audits indeed reveal massive indexation problems on sites with repetitive URL structures. E-commerce sites with thousands of filter combinations regularly see entire portions of their catalog ignored.

Search Console data often confirms this phenomenon, with pages reported as "Discovered - currently not indexed" or "Crawled - currently not indexed". In these audits, the unindexed pages frequently share the same repetitive URL patterns.

What important nuances should be brought to this rule?

This doesn't mean prohibiting any structured logic in your URLs. Having a coherent architecture with clearly identified categories remains a best practice. The problem only arises with excessive and mechanical repetition.

The notion of "too many times" remains subjective and contextual. A 500-page site with some repetition won't be affected in the same way as a site that mechanically generates hundreds of thousands or millions of similar URLs.

What are the complementary signals that Google analyzes?

Google doesn't base this decision solely on URL structure. The search engine also evaluates content quality, the similarity rate between pages, user engagement signals and real added value.

Similar URLs with genuinely unique and relevant content have a better chance of being indexed. Conversely, diversified URLs with duplicate or low-quality content won't escape the filters.

Practical impact and recommendations

How can you audit your site to detect this problem?

Start by extracting all your URLs from your XML sitemap or via a professional SEO crawler. Analyze recurring patterns by breaking down your URL structure by segments.
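
One way to break URLs down by segment is sketched below. It is a minimal illustration, not a full audit tool: the `sample_urls` list and the `example.com` addresses are hypothetical stand-ins for the URLs you would export from your sitemap or crawler.

```python
from collections import Counter
from urllib.parse import urlparse


def path_segments(url: str) -> list[str]:
    """Return the non-empty path segments of a URL."""
    return [seg for seg in urlparse(url).path.split("/") if seg]


def segment_counts_by_depth(urls: list[str]) -> dict[int, Counter]:
    """Count how often each segment value appears at each path depth."""
    counts: dict[int, Counter] = {}
    for url in urls:
        for depth, seg in enumerate(path_segments(url)):
            counts.setdefault(depth, Counter())[seg] += 1
    return counts


# Hypothetical sample; in practice, load the URLs from your sitemap or crawl export.
sample_urls = [
    "https://example.com/shoes/blue-cheap-product-1",
    "https://example.com/shoes/blue-cheap-product-2",
    "https://example.com/bags/leather-tote",
]

# Show the most common segment values at each depth of the path.
for depth, counter in sorted(segment_counts_by_depth(sample_urls).items()):
    print(depth, counter.most_common(3))
```

Segments that dominate a given depth across thousands of URLs are good candidates for a closer look.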

Use Search Console to identify pages reported as "Discovered - currently not indexed". Examine whether these pages share similar URL patterns. As a rough rule of thumb, an indexation rate below 70% can signal a problem.
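
The check described above can be approximated with a short script. This sketch assumes you have already combined your URL list with indexation status into `(url, is_indexed)` pairs. That format is an assumption for illustration, not a Search Console export format, and grouping by first path segment is just one possible way to define a "pattern".

```python
from collections import defaultdict
from urllib.parse import urlparse

# Rule-of-thumb threshold from the text; adjust it to your own site.
LOW_INDEXATION_THRESHOLD = 0.70


def indexation_rate_by_section(pages: list[tuple[str, bool]]) -> dict[str, float]:
    """Return the share of indexed pages for each first-level path segment."""
    totals: dict[str, int] = defaultdict(int)
    indexed: dict[str, int] = defaultdict(int)
    for url, is_indexed in pages:
        segments = [s for s in urlparse(url).path.split("/") if s]
        section = segments[0] if segments else "(root)"
        totals[section] += 1
        if is_indexed:
            indexed[section] += 1
    return {section: indexed[section] / totals[section] for section in totals}


def low_indexation_sections(
    pages: list[tuple[str, bool]], threshold: float = LOW_INDEXATION_THRESHOLD
) -> list[str]:
    """List the sections whose indexation rate falls below the threshold."""
    rates = indexation_rate_by_section(pages)
    return sorted(section for section, rate in rates.items() if rate < threshold)


# Hypothetical data for illustration only.
pages = [
    ("https://example.com/filters/blue-cheap-product-1", True),
    ("https://example.com/filters/blue-cheap-product-2", False),
    ("https://example.com/filters/blue-cheap-product-3", False),
    ("https://example.com/filters/blue-cheap-product-4", False),
    ("https://example.com/guides/choosing-running-shoes", True),
    ("https://example.com/guides/shoe-care", True),
]

print(low_indexation_sections(pages))
```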

Run a term frequency analysis on your URLs. If certain words appear in more than 30-40% of your addresses and your indexation rate is low, treat it as a warning signal.
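
A term frequency analysis of this kind might look like the following sketch. It measures, for each term, the share of URLs whose path contains it at least once. The tokenization rule (lowercase runs of letters and digits) and the 0.4 cutoff are assumptions chosen to match the 30-40% range mentioned above, and the URLs are hypothetical.

```python
import re
from collections import Counter
from urllib.parse import urlparse


def term_url_share(urls: list[str]) -> dict[str, float]:
    """Return, for each term, the fraction of URLs whose path contains it."""
    if not urls:
        return {}
    url_counts: Counter = Counter()
    for url in urls:
        # A set ensures each term is counted once per URL.
        terms = set(re.findall(r"[a-z0-9]+", urlparse(url).path.lower()))
        url_counts.update(terms)
    return {term: count / len(urls) for term, count in url_counts.items()}


def frequent_terms(urls: list[str], threshold: float = 0.4) -> list[str]:
    """List the terms that appear in more than `threshold` of the URLs."""
    shares = term_url_share(urls)
    return sorted(term for term, share in shares.items() if share > threshold)


# Hypothetical sample URLs for illustration only.
urls = [
    "https://example.com/blue-cheap-product-1",
    "https://example.com/blue-cheap-product-2",
    "https://example.com/red-cheap-product",
    "https://example.com/guides/shoe-care",
]

print(frequent_terms(urls))
```

A high share on its own is not a problem, since category names are expected to recur. It becomes a signal only when combined with a low indexation rate.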

What concrete actions should be implemented to correct the problem?

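For e-commerce sites, manage facets deliberately: add canonical tags that point filtered pages to the main category or product page, and keep less relevant combinations out of the index with a meta robots noindex tag. robots.txt can stop those combinations from being crawled, but it does not by itself prevent indexation, and Google cannot read the noindex or canonical tag of a URL that robots.txt blocks.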
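
As an illustration of facet consolidation, the sketch below derives a canonical URL by dropping every query parameter that is not on an allowlist, then renders the corresponding `<link rel="canonical">` tag. The `INDEXABLE_PARAMS` allowlist and the parameter names (`category`, `color`, `sort`) are hypothetical. Which facets deserve their own indexable page is a decision specific to each site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: only these parameters produce pages worth indexing on their own.
INDEXABLE_PARAMS = {"category"}


def canonical_url(url: str) -> str:
    """Drop non-allowlisted query parameters and the fragment from a URL."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query)
        if key in INDEXABLE_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(url: str) -> str:
    """Render the canonical link element for the page served at `url`."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


# A filtered and sorted listing is consolidated onto the category page.
print(canonical_link_tag(
    "https://example.com/shoes?color=blue&category=running&sort=price"
))
```

Sorting the retained parameters keeps the canonical URL stable regardless of the order in which filters were applied.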

Rethink your URL architecture by creating more varied and semantically rich structures. Rather than "/red-product", "/blue-product", opt for variations like "/scarlet-collection", "/azure-range".

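Note that Google retired the URL Parameters tool in Search Console in 2022, so dynamic parameters can no longer be configured there. Handle them on the site itself with canonical tags, consistent internal linking and, where appropriate, robots.txt rules, so that unnecessary variations are not indexed.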

- Audit all your site's URLs to identify repetitive patterns

- Analyze the indexation rate in Search Console and correlate with URL structures

- Implement canonical tags to consolidate similar pages

- Diversify URL nomenclature by enriching the vocabulary used

- Block indexation of less relevant filter combinations

- Handle dynamic URL parameters on the site itself, since Search Console no longer offers a URL Parameters tool

- Prioritize quality over quantity in page generation

- Regularly monitor the evolution of indexation rate after modifications

Should you modify your entire existing structure immediately?

No, proceed in progressive steps. Start by identifying the most problematic sections where the indexation rate is critical. Test your modifications on a limited sample before global deployment.

Keep a history of changes and measure the impact on indexation and traffic. Every existing URL you modify will need a 301 redirect to its new address, which can temporarily affect your search rankings.
