Official statement

What you need to understand

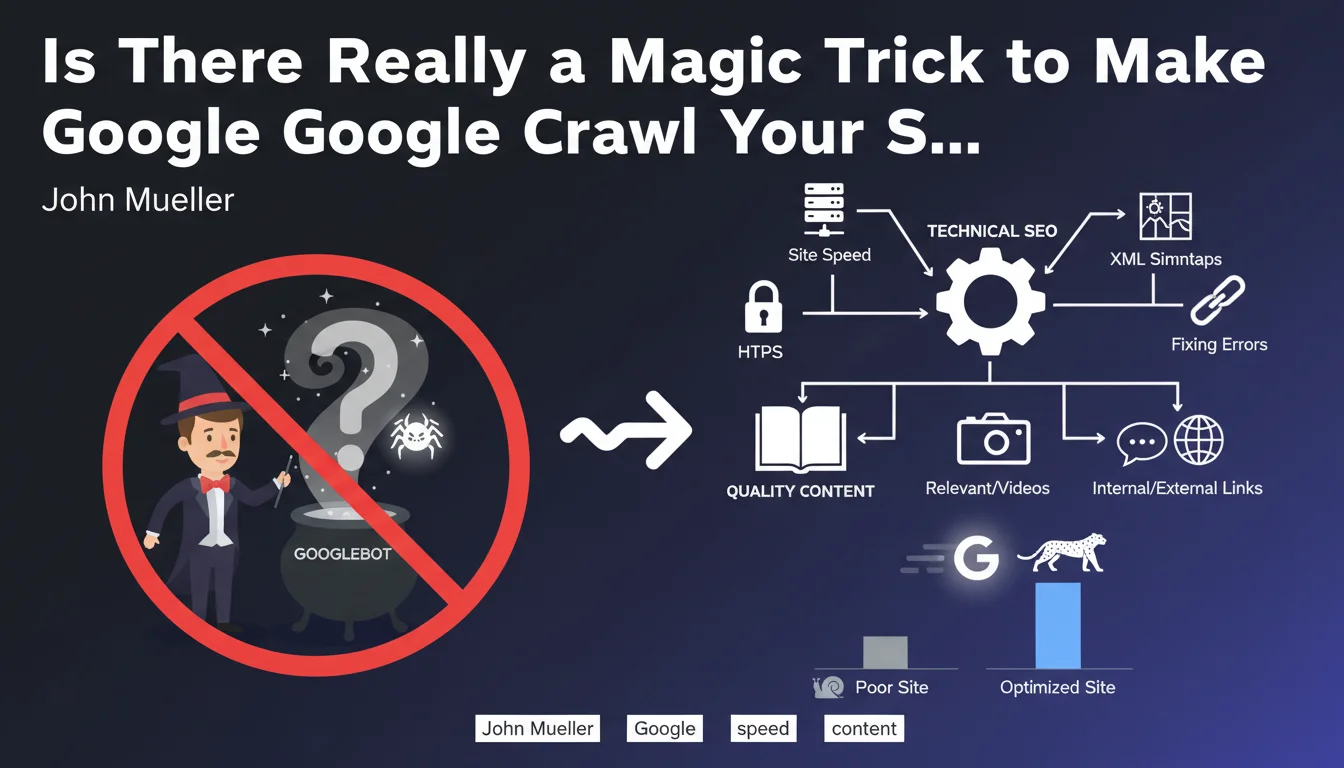

John Mueller states that no trick can permanently force Google to crawl your site faster. The statement is aimed at the "magical" techniques that some service providers sell.

The central message is that faster crawling is a consequence, not a goal you can pursue in isolation. Google allocates its crawl budget based on the perceived value of a site, not because a site owner asks for more crawling.

To achieve more frequent and deeper crawling, you must work simultaneously on all aspects of the site: technical, content, authority, and user experience. Google must have objective reasons to invest more resources.

- No magic button exists to artificially accelerate crawling

- The crawl budget depends on the overall quality of the site

- Optimization must be holistic: technical AND content

- Google naturally increases its crawling of sites that truly deserve it

- Sustainable improvements require continuous foundational work

SEO Expert opinion

This position from Mueller is perfectly consistent with what we observe daily in the field. Sites that benefit from intensive crawling are systematically those that combine technical excellence, fresh and relevant content, and solid authority signals.

An important nuance, however: some sites have specific technical bottlenecks that drastically limit their crawl (very slow server response times, redirect loops, infinite pagination). In those cases, fixing the problem can indeed produce an immediate, seemingly "miraculous" effect. But that is removing a brake, not artificially accelerating the crawl.
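
To make the redirect-loop case concrete, here is a minimal Python sketch of how such a bottleneck could be spotted. It is not a tool provided by Google. It assumes you already have a redirect map exported from your own crawl data: the `redirects` dictionary, the function name `follow_redirects`, and the `max_hops` default of 10 are all hypothetical choices made for this illustration.

```python
from typing import Dict, List, Tuple


def follow_redirects(
    redirects: Dict[str, str], start: str, max_hops: int = 10
) -> Tuple[List[str], str]:
    """Follow a redirect map from `start` and classify the chain.

    `redirects` maps a source URL to its redirect target (hypothetical
    data, e.g. exported from a site crawl). Returns the chain of URLs
    visited and a status: "ok", "loop", or "too_long".
    """
    chain = [start]
    seen = {start}
    current = start
    while current in redirects:
        current = redirects[current]
        chain.append(current)
        if current in seen:
            # We came back to a URL already visited: a redirect loop.
            return chain, "loop"
        seen.add(current)
        if len(chain) - 1 > max_hops:
            # More hops than the illustrative limit allows.
            return chain, "too_long"
    return chain, "ok"


if __name__ == "__main__":
    example = {"/a": "/b", "/b": "/c", "/c": "/a", "/old": "/new"}
    for url in ("/a", "/old"):
        print(url, follow_redirects(example, url))
```

Working from an exported map rather than live HTTP requests keeps the check fast and repeatable; the same logic could be applied to responses fetched from the server if needed.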

Field observations confirm that news sites, active marketplaces, and platforms with high user engagement naturally get the most intensive crawling. Google rewards freshness, real demand, and usefulness to users.

Practical impact and recommendations

- Audit technical health: loading times, server errors, correct HTTP codes, logical architecture

- Optimize your robots.txt file to avoid unnecessarily blocking important resources

- Structure your XML sitemap intelligently by prioritizing strategic and up-to-date content

- Improve server response times (TTFB), which directly affect crawl speed

- Publish fresh content regularly to signal that your site is alive and deserves frequent visits

- Eliminate low-quality or duplicate content that dilutes your crawl budget

- Work on your internal linking to facilitate discovery of important pages

- Monitor Search Console: crawl statistics, crawl errors, index coverage

- Avoid unnecessary URL parameters that create infinite URL variations

- Build your authority through natural, quality backlinks

- Don't spam submission tools (Search Console, IndexNow); they don't replace foundational work
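
Two of the checks above, the robots.txt audit and the hunt for URL parameter variations, can be sketched in a few lines of Python using only the standard library. This is an illustrative sketch under stated assumptions, not a definitive audit tool: the function names `blocked_urls` and `parameter_variants` and the example URLs are hypothetical. Note also that Python's `urllib.robotparser` applies the first matching rule and does not understand wildcard patterns, so it only approximates how Googlebot interprets robots.txt.

```python
from typing import Dict, Iterable, List
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser


def blocked_urls(
    robots_txt: str, urls: Iterable[str], user_agent: str = "Googlebot"
) -> List[str]:
    """Return the URLs that the given robots.txt body disallows.

    Approximation only: the standard-library parser uses first-match
    semantics and ignores wildcard patterns.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


def parameter_variants(urls: Iterable[str]) -> Dict[str, int]:
    """Count distinct query-string variants per path.

    Only paths seen with more than one variant are returned, since
    those are the candidates for wasted crawling.
    """
    variants: Dict[str, set] = {}
    for url in urls:
        parts = urlsplit(url)
        variants.setdefault(parts.path, set()).add(parts.query)
    return {path: len(qs) for path, qs in variants.items() if len(qs) > 1}


if __name__ == "__main__":
    robots = "User-agent: *\nDisallow: /cart/\nDisallow: /assets/\n"
    important = [
        "https://example.com/assets/site.css",
        "https://example.com/products/widget",
    ]
    print(blocked_urls(robots, important))

    crawled = [
        "https://example.com/shoes?sort=price",
        "https://example.com/shoes?sort=name",
        "https://example.com/shoes",
        "https://example.com/about",
    ]
    print(parameter_variants(crawled))
```

In practice, the list of important URLs might come from your XML sitemap and the crawled URLs from your server logs; both inputs are assumptions of this sketch.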

In summary: Rather than looking for non-existent shortcuts, focus on continuous and comprehensive improvement of your site. A technically high-performing site with quality content that's regularly updated will naturally obtain better crawling.

These cross-optimizations require sharp technical expertise and a 360-degree strategic vision. Given the complexity of coordinating these multiple levers simultaneously, many sites benefit from structured support from a specialized SEO agency, capable of identifying priorities and deploying a coherent roadmap tailored to your specific context.
