Official statement

What you need to understand

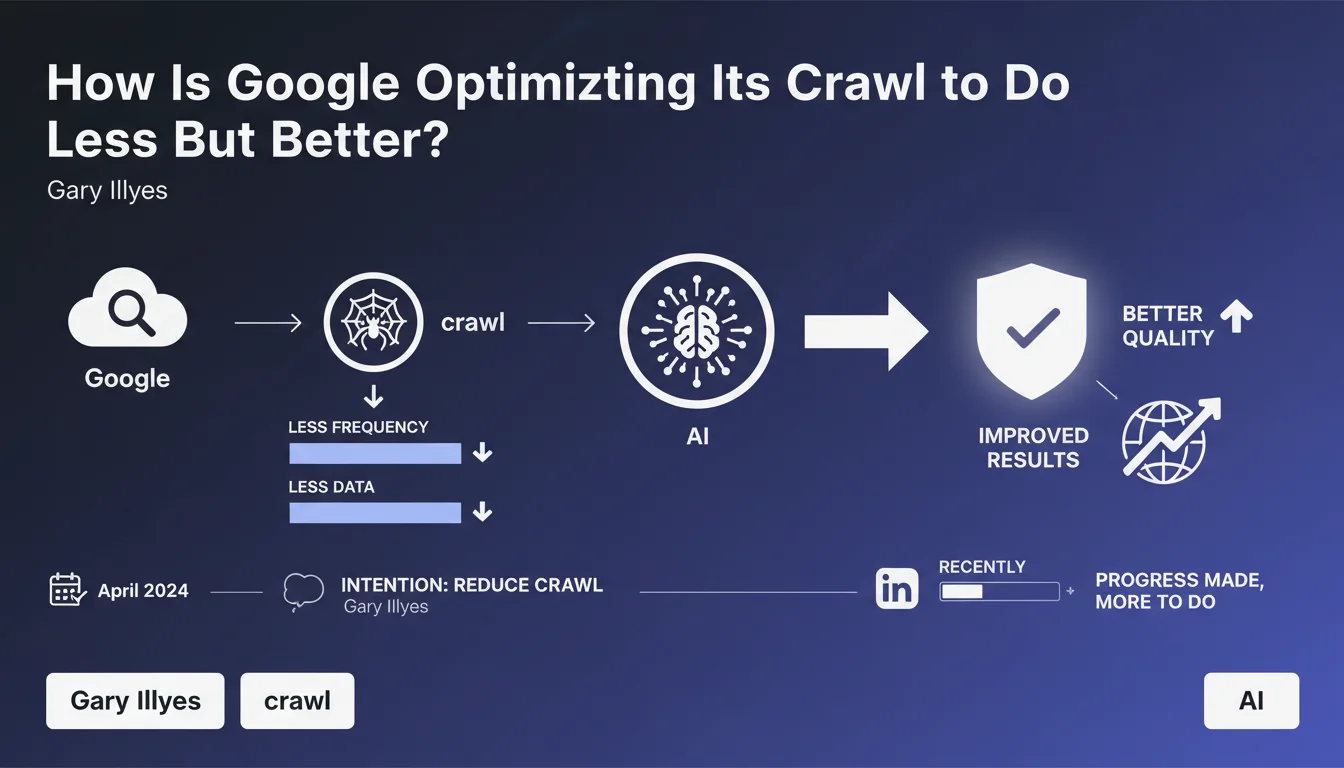

Google has been actively working to optimize its crawling process since April 2024. The stated objective is clear: reduce the frequency of bot visits and decrease the amount of data transferred, while maintaining equivalent indexing quality.

This approach is driven by energy efficiency and performance. Rather than scanning all available content indiscriminately, Google aims to be more selective about what it crawls. This involves changes in how the engine manages its internal cache and how that cache is shared between its different user agents (crawlers).
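
Google's internal cache is outside a site's control, but the site's own HTTP caching validators are not, and they serve the same goal of transferring less data. Google's crawler documentation states that its crawlers support HTTP caching through ETag/If-None-Match and Last-Modified/If-Modified-Since. The sketch below shows the server-side logic under stated assumptions: the function names and the hash-based ETag scheme are hypothetical, and a real site would usually rely on its web server or framework rather than hand-written code.

```python
import hashlib
from datetime import datetime, timezone
from email.utils import format_datetime, parsedate_to_datetime


def make_etag(body: bytes) -> str:
    # Hypothetical scheme: a strong validator derived from a hash of the body.
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def conditional_response(request_headers: dict, body: bytes,
                         last_modified: datetime) -> tuple:
    """Return (status, headers, body), answering 304 when the client's copy is current.

    last_modified must be a timezone-aware UTC datetime.
    """
    headers = {
        "ETag": make_etag(body),
        "Last-Modified": format_datetime(last_modified, usegmt=True),
    }
    # Header names are case-insensitive, so normalize them before lookup.
    req = {k.lower(): v for k, v in request_headers.items()}

    if "if-none-match" in req:
        # If-None-Match takes precedence over If-Modified-Since (RFC 9110).
        # Weak comparison is used, so a "W/" prefix is ignored.
        tags = [t.strip().removeprefix("W/") for t in req["if-none-match"].split(",")]
        if "*" in tags or headers["ETag"] in tags:
            return 304, headers, b""  # validators only, no body
        return 200, headers, body

    if "if-modified-since" in req:
        try:
            since = parsedate_to_datetime(req["if-modified-since"])
        except (TypeError, ValueError):
            since = None  # unparseable date: ignore the header
        if since is not None:
            if since.tzinfo is None:
                since = since.replace(tzinfo=timezone.utc)
            # HTTP dates have one-second resolution.
            if last_modified.replace(microsecond=0) <= since:
                return 304, headers, b""

    return 200, headers, body
```

The design point is that an unchanged page can be answered with an empty 304 response instead of the full HTML, which is exactly the kind of transfer a more selective crawler benefits from.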

The recent update confirms that improvements have been made but does not detail them: the technical mechanisms involved remain undisclosed.

- Reduced crawl frequency: Google visits less often but in a more targeted manner

- Optimized data transfer: less bandwidth consumed

- Improved internal cache: better sharing between different bots

- Maintained quality: indexing should not be negatively impacted

- Ongoing process: much still remains to be developed according to Google

SEO Expert opinion

This statement is consistent with field observations since mid-2024. Many sites have indeed noticed a decrease in crawl frequency in their logs, particularly on low-value pages or duplicate content. Google is clearly becoming more selective.

The announced approach also reflects the environmental and economic pressures Google faces. Crawling the entire web is costly in energy and infrastructure, so this optimization reduces costs and may also improve the relevance of the index.

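Caution is advised for sites with a lot of dynamic or frequently updated content. If crawls become too widely spaced, important updates could take longer to be indexed. Such sites may need to compensate with more proactive strategies, starting with well-maintained XML sitemaps whose lastmod dates reflect real content changes. IndexNow can speed up discovery on the search engines that support it, such as Bing and Yandex, but Google does not use it.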
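
A sitemap is most useful here when its lastmod values are accurate; Google's sitemap documentation says it uses lastmod only when the value is consistently accurate. The following is a minimal sketch using only the standard library, assuming you already have a list of canonical URLs with the date each page's content last changed. The function name and example URLs are hypothetical; the 50,000-URL cap comes from the sitemaps.org protocol.

```python
from datetime import date
from xml.etree import ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS = 50_000  # per-file limit in the sitemaps.org protocol


def build_sitemap(pages) -> str:
    """Build a sitemap from (absolute_url, last_modified_date) pairs.

    lastmod should reflect the last meaningful change to the page content,
    not the date the sitemap was generated.
    """
    pages = list(pages)
    if len(pages) > MAX_URLS:
        raise ValueError("too many URLs: split into several sitemaps plus a sitemap index")
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc  # ElementTree escapes &, < and >
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()  # W3C date, e.g. 2024-06-01
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages and dates, for illustration only.
    print(build_sitemap([
        ("https://www.example.com/", date(2024, 6, 1)),
        ("https://www.example.com/guides/crawl-budget", date(2024, 5, 20)),
    ]))
```

Regenerating the file whenever content changes, rather than stamping every URL with today's date, keeps lastmod trustworthy as a recrawl hint.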

Practical impact and recommendations

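- Optimize your crawl budget: identify low-value pages that consume crawl without providing value (filter pages, archives, URL parameters). Use robots.txt to stop them from being crawled; noindex keeps a page out of the index but does not save crawl, since Google must fetch the page to see the directive (and cannot see it at all on a URL blocked by robots.txt).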

- Improve technical performance: reduce loading times, eliminate 4xx/5xx errors, optimize server response time (TTFB)

- Structure your architecture clearly: facilitate discovery of important pages through logical internal linking and well-organized XML sitemaps

- Prioritize quality content: focus on high-value pages rather than multiplying weak or duplicate content

- Monitor your server logs: regularly analyze crawl frequency and distribution to detect any anomalies

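- Signal important content proactively: keep your XML sitemaps and their lastmod dates current so that major updates remain discoverable despite less frequent crawling. IndexNow helps with participating engines such as Bing but not with Google, and Google's Indexing API is officially limited to pages with JobPosting or BroadcastEvent (livestream) structured data.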

- Clean up regularly: delete or consolidate obsolete pages that dilute your crawl budget

- Optimize heavy files: compress images and resources, and serve text responses with HTTP compression (Googlebot supports gzip, deflate and Brotli), to reduce the amount of data transferred during crawling
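
The log-monitoring recommendation, together with the advice on 4xx/5xx errors and URL parameters, can be approached with a small script. This is a sketch under explicit assumptions: logs use the Apache/Nginx "combined" format, Googlebot is identified by a "Googlebot" substring in the user agent (which can be spoofed, so verify real Googlebot traffic with Google's documented reverse DNS check before drawing conclusions), and a site "section" means the first path segment. The function name is hypothetical.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Assumes the Apache/Nginx "combined" log format; adapt the pattern to yours.
LOG_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?P<method>\S+) (?P<target>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot(lines):
    """Summarize requests whose user agent claims to be Googlebot.

    The user-agent string can be spoofed; confirm real Googlebot traffic
    (for example with a reverse DNS check) before drawing conclusions.
    """
    per_day, per_section, per_status = Counter(), Counter(), Counter()
    with_params = 0
    for line in lines:
        m = LOG_RE.match(line)
        if m is None or "Googlebot" not in m["agent"]:
            continue  # unparseable line or not a Googlebot request
        url = urlsplit(m["target"])
        per_day[m["day"]] += 1                    # crawl frequency over time
        first_segment = url.path.lstrip("/").split("/", 1)[0]
        per_section["/" + first_segment] += 1     # crawl distribution by site section
        per_status[m["status"][0] + "xx"] += 1    # share of 4xx/5xx responses
        if url.query:
            with_params += 1                      # hits on parameterized URLs
    return {
        "total": sum(per_day.values()),
        "per_day": dict(per_day),
        "per_section": dict(per_section),
        "per_status": dict(per_status),
        "with_params": with_params,
    }
```

Usage would be along the lines of passing an open log file (which yields lines) to summarize_googlebot. Comparing per_day week over week shows whether crawl frequency is falling, a high with_params count suggests crawl spent on parameter URLs, and the 4xx/5xx buckets point to errors worth fixing.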

Google's crawl optimization reinforces the importance of a rigorous technical approach. Well-structured, high-performing sites focused on quality stand to benefit, while those with technical weaknesses risk seeing their crawl frequency reduced even further.

Implementing these optimizations requires in-depth technical expertise and advanced analysis tools. Server log auditing, identifying crawl budget waste, and architectural restructuring are complex operations that require time and specialized skills.

For companies looking to maximize their visibility in light of these developments, support from an experienced SEO agency can prove valuable. A personalized diagnosis can identify optimization opportunities suited to your specific context and help implement best practices effectively.
