Official statement

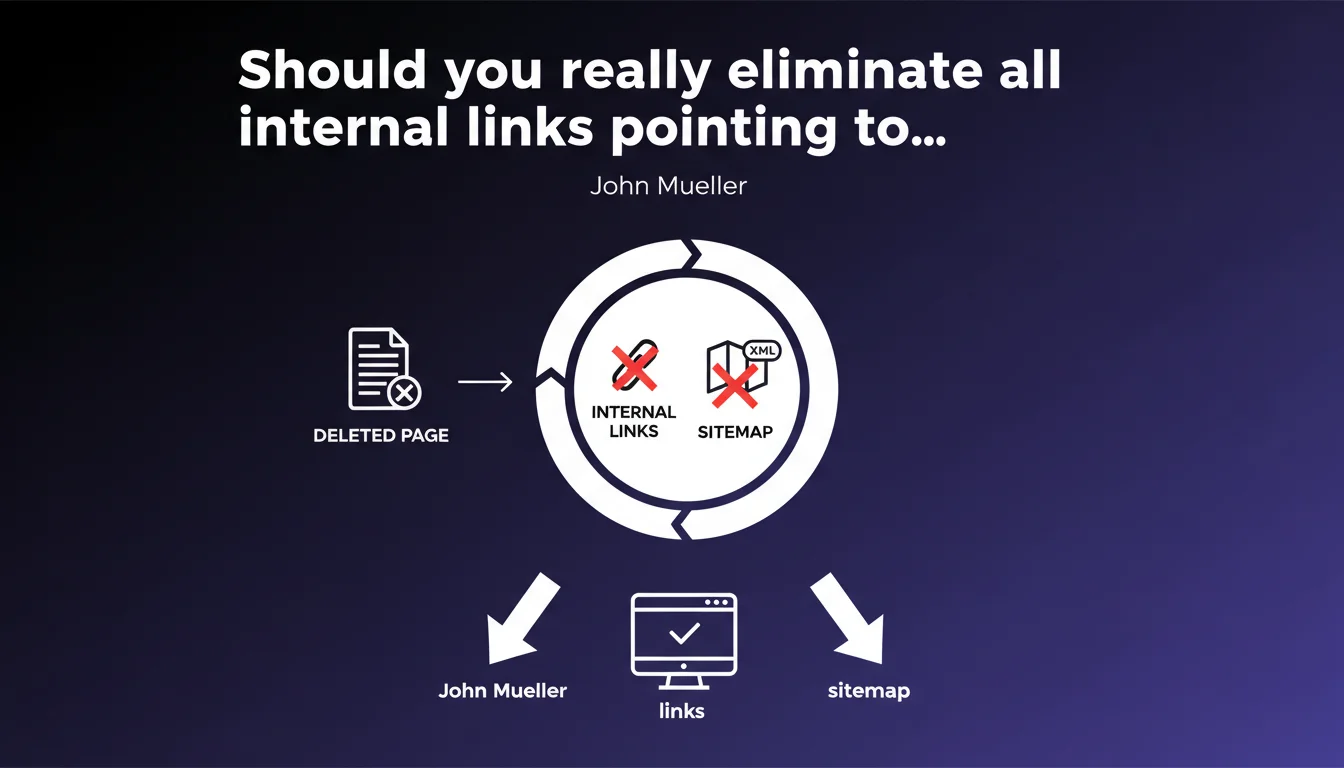

Google explicitly recommends removing every internal reference to a deleted page, both internal links and sitemap entries. The goal is to avoid wasting crawl budget on non-existent resources and to limit negative user-experience signals. The directive looks straightforward, but it exposes several structural issues in your site architecture.

What you need to understand

Why does Google insist on removing internal links to deleted pages?

When you delete a page but leave internal links pointing to it, Googlebot keeps trying to access it. This needlessly consumes your crawl budget, the allocation of time and resources that Google dedicates to exploring your site.

The more broken internal links your site contains, the more time the crawler wastes on dead ends. For a large site with thousands of pages, this waste becomes structural.

What does this directive reveal about sitemap management?

Mueller also mentions sitemap files. Many sites auto-generate their sitemaps and never audit them. As a result, obsolete URLs linger for months and keep advertising to Google pages that no longer exist.

Google treats the sitemap as a list of priority suggestions. If you include deleted pages in it, you send a conflicting signal: "Crawl this important page!" followed by a 404.

Does this recommendation apply only to 404s or also to redirects?

Mueller's wording primarily targets pages removed outright, with no redirect. If you've set up a relevant 301 pointing to equivalent content, the internal link still technically works, but it's cleaner to update it to point directly at the final destination.

Avoiding unnecessary redirect chains improves crawl speed and user experience.
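To illustrate what "update directly to the final destination" can look like in practice, here is a minimal Python sketch. It assumes you can export your redirect rules as a simple mapping and your internal links as (source, target) pairs. The names `resolve_final_target` and `rewrite_links` and the sample URLs are hypothetical and do not come from any particular tool.

```python
def resolve_final_target(url, redirects, max_hops=10):
    """Follow a redirect map until reaching a URL that is not redirected."""
    seen = {url}
    current = url
    # max_hops + 1 checks, so a chain of exactly max_hops hops is accepted.
    for _ in range(max_hops + 1):
        if current not in redirects:
            return current
        current = redirects[current]
        if current in seen:
            raise ValueError(f"redirect loop detected at {current}")
        seen.add(current)
    raise ValueError(f"more than {max_hops} hops starting from {url}")


def rewrite_links(links, redirects):
    """Replace every redirected link target with its final destination."""
    return [(source, resolve_final_target(target, redirects))
            for source, target in links]


# Hypothetical data: a two-hop chain and one link that is already clean.
redirects = {"/old-guide": "/guide-2021", "/guide-2021": "/guide"}
links = [("/home", "/old-guide"), ("/blog", "/guide")]
print(rewrite_links(links, redirects))
# [('/home', '/guide'), ('/blog', '/guide')]
```

The loop check matters on legacy sites, where redirect rules accumulated over several migrations sometimes point back at each other.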

- Crawl budget: each broken internal link wastes exploration resources

- Sitemap consistency: list only active and relevant URLs

- Redirects vs. deletions: if a 301 redirect exists, update the source link to point directly at the final URL

- UX signal: broken links degrade the user experience, which Google accounts for indirectly

SEO Expert opinion

Is this directive truly a priority for all websites?

Let's be honest: for a small site with 50 pages, a few broken internal links will have zero measurable impact. Crawl budget isn't your concern.

However, once you exceed several thousand pages — e-commerce, media, directories — each inefficiency multiplies. A site with 500 dead internal links wastes crawl budget on nothing, delays discovery of new content, and dilutes internal authority.

What do you do when removing links is technically complex?

On poorly designed CMS platforms or legacy sites, tracking all internal links to a given URL can be a nightmare. Templates, dynamic widgets, reusable blocks — references hide everywhere.

If manual correction is impossible at scale, put a stopgap 301 redirect to a relevant page in place while you gradually clean up the links. One assumption here remains unverified: that Google tolerates a clean redirect better than a 404 hit repeatedly across hundreds of crawls.

Are XML sitemaps really audited by Google as claimed?

Google claims it ignores error URLs in sitemaps after a few attempts. But in reality, we observe that polluted sitemaps generate persistent error reports in Search Console — which can hide real issues under the noise.

Cleaning your sitemaps primarily improves diagnostic readability. You identify structural problems faster.

Practical impact and recommendations

How do you quickly identify all internal links pointing to a deleted page?

Use an SEO crawler (Screaming Frog, Oncrawl, Botify) to map all internal links. Then filter by status code: all URLs returning 404 or 410 with internal incoming links must be addressed.
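The filtering step can be sketched in a few lines of Python once the crawl data is exported. This sketch assumes two inputs that most crawlers can produce in some form: a list of (source, target) internal links and a mapping from URL to status code. The function name `broken_inlinks` and the sample data are hypothetical.

```python
from collections import defaultdict


def broken_inlinks(edges, status_by_url, dead_statuses=(404, 410)):
    """Map each dead target URL to the sorted list of pages linking to it."""
    sources_by_target = defaultdict(set)
    for source, target in edges:
        # URLs missing from the status map are ignored rather than guessed.
        if status_by_url.get(target) in dead_statuses:
            sources_by_target[target].add(source)
    return {target: sorted(sources)
            for target, sources in sources_by_target.items()}


# Hypothetical crawl export.
edges = [
    ("/home", "/old-product"),
    ("/category", "/old-product"),
    ("/home", "/about"),
    ("/blog/post-1", "/retired-page"),
]
status_by_url = {"/old-product": 404, "/about": 200, "/retired-page": 410}

for target, sources in broken_inlinks(edges, status_by_url).items():
    print(target, "is linked from", sources)
```

Grouping by target rather than by source makes it easier to see which deleted page causes the most damage, and therefore where to start.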

In Search Console, the "Coverage" report (labelled "Pages" or "Page indexing" in more recent versions of the interface) flags URLs excluded because they return a 404. Cross-reference this list with your server logs to see whether Googlebot is still trying to crawl them.
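The log side of that cross-reference might look like the following Python sketch. It assumes access logs in the common "combined" format and identifies Googlebot purely by user-agent string, which can be spoofed; a stricter check would verify the requesting IP as well. The function name `googlebot_dead_hits` and the sample lines are hypothetical.

```python
import re
from collections import Counter

# Assumed "combined" log format: ip - - [date] "METHOD path proto" status size "referer" "agent"
LOG_PATTERN = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_dead_hits(log_lines, dead_statuses=(404, 410)):
    """Count requests per path where a Googlebot user agent received a dead status."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.match(line)
        if not match:
            continue  # skip lines that do not follow the assumed format
        if "Googlebot" in match["agent"] and int(match["status"]) in dead_statuses:
            hits[match["path"]] += 1
    return hits


# Hypothetical log excerpt.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [14/Sep/2022:10:00:00 +0000] "GET /old-page HTTP/1.1" 404 512 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [15/Sep/2022:11:30:00 +0000] "GET /old-page HTTP/1.1" 404 512 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [15/Sep/2022:11:31:00 +0000] "GET /live HTTP/1.1" 200 2048 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [15/Sep/2022:12:00:00 +0000] "GET /old-page HTTP/1.1" 404 512 "-" "Mozilla/5.0"',
]

for path, count in googlebot_dead_hits(sample_log).most_common():
    print(path, count)
```

Paths that keep appearing in this count weeks after deletion are good candidates for a leftover internal link or sitemap entry.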

What's the best cleanup strategy for a large site?

Prioritize by impact. Start with deleted pages that still received organic traffic or had external backlinks. These deserve a 301 redirect to equivalent content.

For pages without SEO value (old archives, obsolete content with no traffic), leave them as 404s, but systematically clean up internal links. Automate this cleanup via a rule in your CMS if possible.
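The triage rule described above can be written down as a small Python sketch. It assumes you have, for each deleted URL, an organic click count and an external backlink count from whatever analytics and link tools you use; the `DeletedPage` fields, the "any value above zero" threshold, and the sample figures are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class DeletedPage:
    url: str
    organic_clicks: int  # assumed metric, e.g. clicks over the last 12 months
    backlinks: int       # assumed metric, external links pointing at the URL


def triage(pages):
    """Split deleted pages into 301 candidates and pages left as 404."""
    redirect, leave_404 = [], []
    # Highest-impact pages first, so the redirect list doubles as a to-do order.
    ranked = sorted(pages, key=lambda p: (p.organic_clicks, p.backlinks), reverse=True)
    for page in ranked:
        if page.organic_clicks > 0 or page.backlinks > 0:
            redirect.append(page.url)
        else:
            leave_404.append(page.url)
    return redirect, leave_404


# Hypothetical inventory of deleted pages.
pages = [
    DeletedPage("/archive/2014-news", organic_clicks=0, backlinks=0),
    DeletedPage("/buying-guide", organic_clicks=320, backlinks=12),
    DeletedPage("/press-release", organic_clicks=0, backlinks=3),
]
redirect, leave_404 = triage(pages)
print("301 candidates:", redirect)
print("Leave as 404, clean internal links:", leave_404)
```

Both groups still need their internal links cleaned up; the split only decides which URLs also get a redirect.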

Should you audit sitemaps with each major update?

Yes. Integrate an automatic check into your publishing process: before submitting a sitemap, verify that all listed URLs return a 200 status code. Tools like Sitebulb or simple Python scripts can validate this in minutes.
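As an illustration of such a script, here is a minimal Python sketch using only the standard library. It assumes a plain `urlset` sitemap (not a sitemap index) and uses HEAD requests without following redirects, so a 301 is reported as a 301 rather than as the status of its destination. Some servers handle HEAD poorly, in which case a GET would be needed. The function names are hypothetical.

```python
import urllib.error
import urllib.request
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract every <loc> value from a urlset sitemap."""
    root = ET.fromstring(xml_text.strip())
    return [loc.text.strip()
            for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None  # refuse to follow, so the 3xx surfaces as an HTTPError


def http_status(url, timeout=10):
    """Return the status code of a HEAD request; network errors propagate."""
    opener = urllib.request.build_opener(_NoRedirect)
    request = urllib.request.Request(url, method="HEAD")
    try:
        with opener.open(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code


def non_200_urls(xml_text, get_status=http_status):
    """Return {url: status} for every sitemap URL that does not answer 200."""
    problems = {}
    for url in sitemap_urls(xml_text):
        status = get_status(url)
        if status != 200:
            problems[url] = status
    return problems
```

In a publishing pipeline, a non-empty result from `non_200_urls` would block the sitemap submission. The `get_status` parameter exists so the check can be exercised against a stub instead of the live site.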

For dynamic sites, generate sitemaps from your database in real time, excluding content marked as "deleted" or "draft" by default.
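A database-driven generator along those lines could be sketched as follows in Python. It assumes each content record exposes a `path` and a `status` field; those field names, the status values, and the `build_sitemap` function are hypothetical and will differ from one CMS to another.

```python
import xml.etree.ElementTree as ET

# Assumed status values; adapt to whatever your CMS actually stores.
EXCLUDED_STATUSES = {"deleted", "draft"}


def build_sitemap(records, base_url):
    """Build a urlset sitemap from content records, skipping excluded statuses."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for record in records:
        if record["status"] in EXCLUDED_STATUSES:
            continue
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = base_url.rstrip("/") + record["path"]
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical rows, as they might come back from a database query.
records = [
    {"path": "/guide", "status": "published"},
    {"path": "/old-offer", "status": "deleted"},
    {"path": "/upcoming", "status": "draft"},
]
print(build_sitemap(records, "https://www.example.com/"))
```

A stricter variant would list only records whose status is explicitly "published", so that any new status added to the CMS later is excluded until someone decides otherwise.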

- Crawl your site to identify all internal links pointing to 404/410 errors

- Check Search Console for URLs excluded due to 404 errors

- Prioritize deleted pages still receiving traffic or backlinks for 301 redirect

- Update internal links in your templates, menus, dynamic widgets

- Clean up XML sitemaps: remove any non-200 URL

- Automate sitemap validation before submission to Search Console

- Audit regularly (quarterly minimum) for broken links via SEO crawler

❓ Frequently Asked Questions

What happens if I leave internal links pointing to a page that returns a 404?

Should I systematically 301-redirect every deleted page?

Do XML sitemaps containing error URLs hurt rankings?

How can I automate the detection of broken internal links?

What is the difference between a 404 and a 410 status code for a deleted page?


Other SEO insights extracted from this same Google Search Central video · published on 14/09/2022
