Official statement

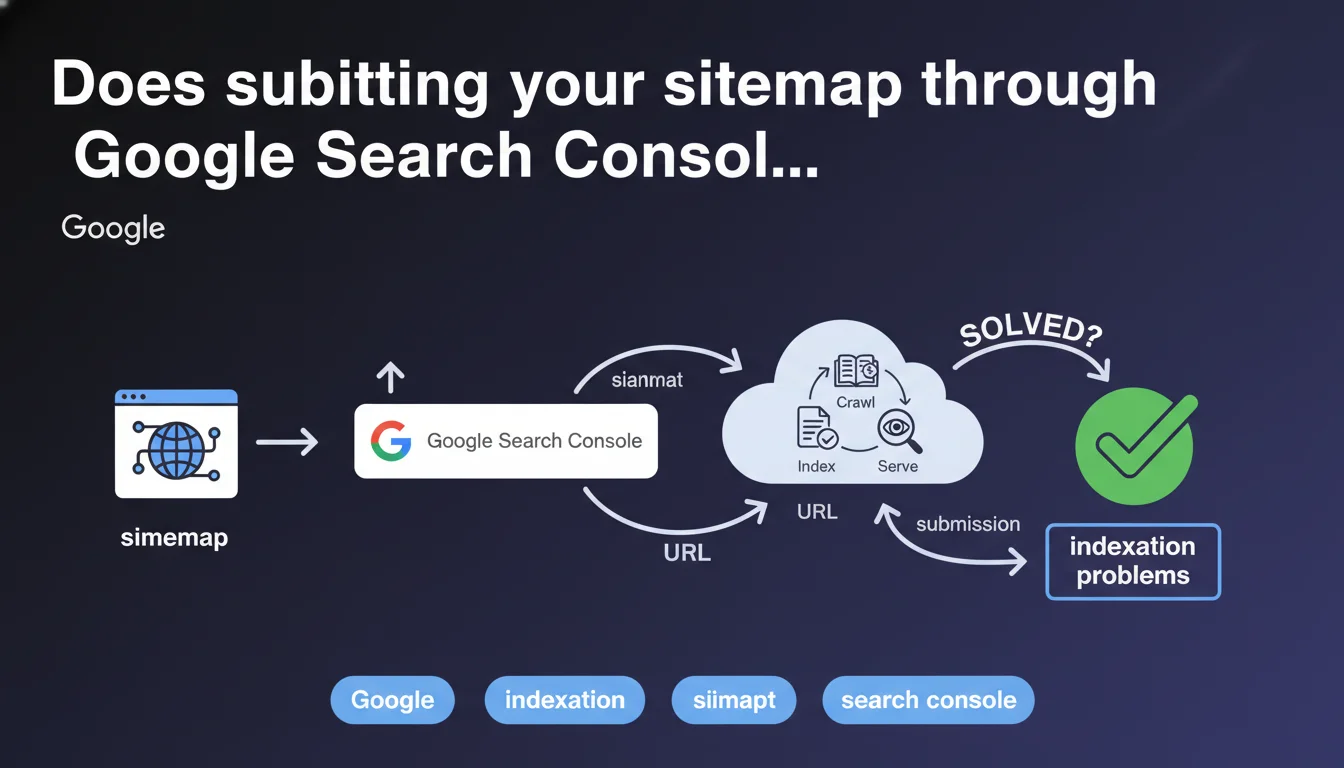

Google recommends submitting your sitemap and URLs via Search Console to resolve indexation issues. This statement positions GSC as the central tool for managing your online presence. The question remains whether this manual submission truly resolves complex indexation situations.

What you need to understand

Why does Google insist on submission through Search Console?

Google presents Search Console as the preferred interface for reporting unindexed content. By submitting an XML sitemap or individual URLs through the inspection tool, you explicitly tell Googlebot which pages deserve its attention.

This approach allows Google to prioritize crawling the URLs you consider strategic, rather than leaving the engine to discover your site solely through organic exploration. This is particularly useful for new sites, orphaned pages, or freshly published content that doesn't yet have incoming links.

What does Google mean by "resolving indexation problems"?

The wording remains vague. Google suggests that submitting a sitemap is a solution to indexation blockages, but doesn't specify which types of problems are involved.

In reality, a sitemap cannot fix a blocking robots.txt, a noindex tag, massive duplicate content, or technically inaccessible pages. It facilitates discovery, not the resolution of structural problems. This distinction is critical.

What are the key takeaways from this statement?

- Search Console centralizes Google presence management: sitemaps, URL inspection, coverage reports

- Submitting a sitemap accelerates discovery but doesn't guarantee actual indexation

- This approach complements natural crawling; it doesn't replace it

- True indexation blockages require in-depth technical diagnosis, not just a sitemap

- The statement intentionally oversimplifies a more complex reality

SEO Expert opinion

Is this recommendation consistent with field observations?

Yes and no. Submitting a sitemap via GSC does accelerate the initial crawl of a new site and quickly signals fresh content. Observations show that URLs submitted through the inspection tool are generally crawled within 24-48 hours.

However, presenting this action as THE solution to indexation problems is an oversimplification. [To be verified]: in the majority of blocked indexation cases I handle, the sitemap had already been submitted for months. The problem lay elsewhere: insufficient crawl budget, content deemed low quality, poorly managed pagination, or a failing technical structure.

What nuances should be added to this statement?

Google doesn't say that submitting a sitemap resolves problems, but rather that it's a way to identify and flag them. This distinction is crucial. The coverage report will then tell you why certain URLs aren't indexed: excluded by robots.txt, marked noindex, redirects, server errors, etc.

Even a properly structured sitemap remains a weak signal. If your site suffers from crawl budget problems (millions of pages, flat architecture, infinite pagination), submitting 50,000 additional URLs won't change the situation. Googlebot will continue to prioritize the pages it deems important according to its own criteria: popularity, freshness, authority.

In what cases does this approach show its limits?

Let's be honest: submitting a sitemap will be useless if your site sends structural negative signals. A site with massive duplicate content, catastrophic server response times, or a hermetically sealed silo architecture won't see its problems resolved by a simple XML file.

Similarly, sites hit by manual deindexation or algorithmic penalties won't recover visibility by resubmitting their pages. Diagnosis must target the root problem, not its symptoms.

Practical impact and recommendations

What should you do concretely to optimize your sitemap submission?

Generate a clean and up-to-date XML sitemap, containing only the URLs you want indexed. Systematically exclude pages with noindex tags, redirects, URLs canonicalized to another page, and low-value content.
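The exclusion rules above can be sketched as a small filter. This is a minimal illustration, assuming you already have crawl data for each page; the `Page` record and its fields are hypothetical names, not part of any Google tool, and judging "low-value content" is left out because it cannot be reduced to a simple flag.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    """Hypothetical crawl record for one URL (field names are assumptions)."""
    url: str
    status: int = 200                # HTTP status returned by the page
    noindex: bool = False            # True if a noindex directive was found
    canonical: Optional[str] = None  # None means no canonical tag was found


def is_sitemap_eligible(page: Page) -> bool:
    """Keep only URLs that respond 200, are indexable, and are self-canonical."""
    if page.status != 200:           # drops redirects, 404s, 410s, server errors
        return False
    if page.noindex:
        return False
    if page.canonical is not None and page.canonical != page.url:
        return False                 # canonicalized to another page
    return True


pages = [
    Page("https://yoursite.com/"),
    Page("https://yoursite.com/old", status=301),
    Page("https://yoursite.com/private", noindex=True),
    Page("https://yoursite.com/shoes?sort=price",
         canonical="https://yoursite.com/shoes"),
]

eligible = [p.url for p in pages if is_sitemap_eligible(p)]
print(eligible)  # ['https://yoursite.com/']
```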

Respect the technical limits: 50,000 URLs maximum per file and a size under 50 MB uncompressed. For large sites, use a sitemap index that groups multiple files by theme or content type (products, categories, articles, static pages).
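As a rough sketch of how these limits translate into code, the following splits a URL list into files of at most 50,000 entries and builds a sitemap index pointing to them. The file naming (`sitemap-1.xml`, and so on), the function names, and the `https://yoursite.com` base are assumptions made for the example; a real site would more likely split by content type, as suggested above.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000
MAX_BYTES_PER_FILE = 50 * 1024 * 1024  # 50 MB, uncompressed


def build_urlset(urls):
    """Serialize one sitemap file and enforce the uncompressed size limit."""
    root = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(root, "url"), "loc").text = url
    data = ET.tostring(root, encoding="utf-8", xml_declaration=True)
    if len(data) > MAX_BYTES_PER_FILE:
        raise ValueError("sitemap exceeds 50 MB uncompressed; split it further")
    return data


def build_index(sitemap_urls):
    """Serialize the sitemap index that lists every sitemap file."""
    root = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for url in sitemap_urls:
        ET.SubElement(ET.SubElement(root, "sitemap"), "loc").text = url
    return ET.tostring(root, encoding="utf-8", xml_declaration=True)


def build_sitemaps(urls, base_url, max_urls=MAX_URLS_PER_FILE):
    """Return (index_xml, {file_name: sitemap_xml}) for a list of URLs."""
    files = {}
    for number, start in enumerate(range(0, len(urls), max_urls), start=1):
        files[f"sitemap-{number}.xml"] = build_urlset(urls[start:start + max_urls])
    index_xml = build_index([f"{base_url}/{name}" for name in files])
    return index_xml, files


# Example: 120,000 product URLs end up in three files plus one index.
urls = [f"https://yoursite.com/product/{i}" for i in range(120_000)]
index_xml, files = build_sitemaps(urls, "https://yoursite.com")
print(list(files))
```

Only the index URL then needs to be declared and submitted; the individual files are discovered through it.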

Declare your sitemap URL in robots.txt (line Sitemap: https://yoursite.com/sitemap.xml) and also submit it via GSC to benefit from detailed reporting. Regularly check the coverage report to identify errors and adjust your file.
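Before submitting, it can help to confirm that robots.txt actually declares the sitemap and does not block the URLs listed in it. The sketch below uses Python's standard `urllib.robotparser` on an inline robots.txt; the rules and URLs are invented for the example. Note that this parser applies simple prefix matching and does not reproduce every Google-specific rule (wildcards, for instance), so treat the result as a first check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content (an assumption for this sketch).
robots_txt = """\
User-agent: *
Disallow: /cart/
Sitemap: https://yoursite.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# Sitemap URLs declared in robots.txt (None if there is no Sitemap line).
declared_sitemaps = parser.site_maps()

# URLs you intend to list in the sitemap.
sitemap_urls = [
    "https://yoursite.com/shoes",
    "https://yoursite.com/cart/checkout",
]

# Any URL reported here is blocked for Googlebot and should not be in the sitemap.
blocked = [u for u in sitemap_urls if not parser.can_fetch("Googlebot", u)]

print(declared_sitemaps)
print(blocked)
```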

What mistakes must you absolutely avoid?

- Including URLs blocked by robots.txt or marked noindex in the sitemap

- Submitting a sitemap containing thousands of dead URLs (404, 410)

- Generating a static sitemap that is never updated after new content is published

- Creating multiple redundant or poorly structured sitemaps that confuse Googlebot

- Relying solely on the sitemap without working on internal linking and architecture

- Manually submitting individual URLs in bulk through the inspection tool instead of fixing root causes

How can you verify your approach is actually working?

Check the coverage report in GSC to identify submitted URLs that aren't indexed. The specific reasons ("Crawled, currently not indexed", "Discovered, currently not indexed", "Excluded by noindex tag") will guide you toward the corrections to apply.

Also monitor the overall indexation rate: how many of the submitted pages are actually present in Google's index? A rate below 70-80% generally signals a structural problem that goes deeper than a simple crawl deficit.
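The indexation rate is simply indexed pages divided by submitted pages. A minimal sketch, assuming you read both counts from the GSC reports yourself; the function names and the 70% default (the lower bound of the range mentioned above) are choices made for this example, not an official threshold.

```python
def indexation_rate(submitted: int, indexed: int) -> float:
    """Share of submitted URLs that are present in the index (0.0 to 1.0)."""
    if submitted <= 0:
        raise ValueError("submitted must be a positive number of URLs")
    return indexed / submitted


def needs_structural_audit(submitted: int, indexed: int,
                           threshold: float = 0.70) -> bool:
    """True when the rate falls below the chosen threshold."""
    return indexation_rate(submitted, indexed) < threshold


# Example figures (invented): 12,000 URLs submitted, 7,800 indexed.
rate = indexation_rate(12_000, 7_800)
print(f"{rate:.0%}")                          # 65%
print(needs_structural_audit(12_000, 7_800))  # True
```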

Cross-check this data with your server logs to verify whether Googlebot is actually crawling the priority URLs. If strategic pages remain ignored despite being in the sitemap, dig into crawl budget allocation, click depth, or perceived content quality.
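One way to run this cross-check is to extract the paths requested by Googlebot from the access log and compare them with the sitemap. The sketch below assumes the common "combined" log format and matches on the user-agent string only; the sample log lines are invented, and because a user agent can be spoofed, a production check would also verify the requesting IP.

```python
import re
from urllib.parse import urlsplit

# Request, status, size, referrer, user agent from a "combined" format log line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_paths(log_lines):
    """Return the set of paths (query strings removed) requested by Googlebot."""
    paths = set()
    for line in log_lines:
        match = LOG_LINE.search(line)
        if match and "Googlebot" in match.group("agent"):
            paths.add(match.group("path").split("?", 1)[0])
    return paths


def uncrawled(sitemap_urls, log_lines):
    """Sitemap URLs whose path never appears in Googlebot's requests."""
    crawled = googlebot_paths(log_lines)
    return [u for u in sitemap_urls if (urlsplit(u).path or "/") not in crawled]


sample_log = [
    '66.249.66.1 - - [24/Feb/2022:10:00:00 +0000] "GET /shoes HTTP/1.1" 200 5120 '
    '"-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [24/Feb/2022:10:00:05 +0000] "GET /boots HTTP/1.1" 200 4800 '
    '"-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
sitemap_urls = ["https://yoursite.com/shoes", "https://yoursite.com/boots"]

ignored = uncrawled(sitemap_urls, sample_log)
print(ignored)
```

URLs that stay in this list over several weeks are the ones worth investigating for crawl budget, click depth, or content quality.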

❓ Frequently Asked Questions

Does submitting my sitemap guarantee that all my pages will be indexed?

Should I submit a new sitemap every time I publish content?

What should I do if my sitemap is submitted but my pages remain unindexed?

Should I include every page of the site in the sitemap?

Does the URL inspection tool replace the sitemap for fast indexation?

Source: Google Search Central video, published on 24/02/2022
