Official statement

What you need to understand

Why Would You Want to Noindex a Sitemap?

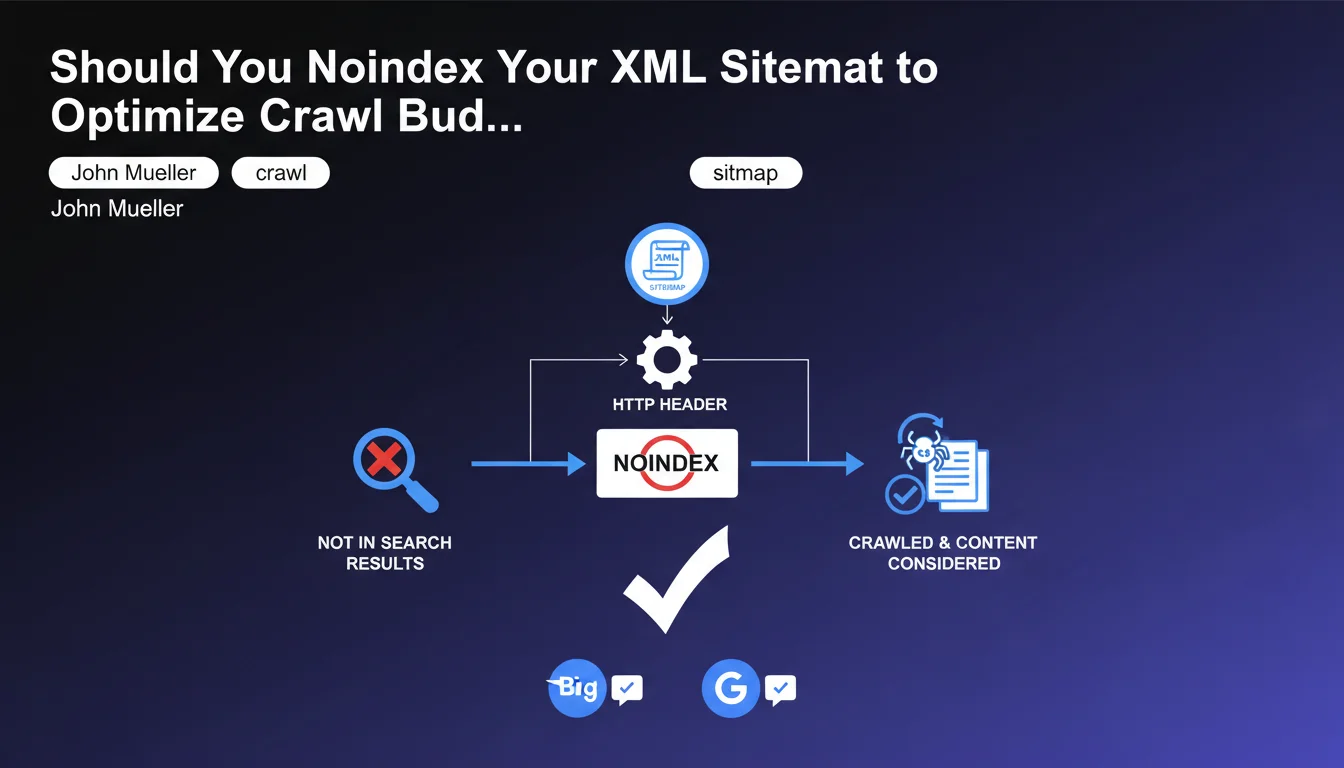

The XML sitemap file is a tool designed for search engine robots to discover and index important pages on a site. Paradoxically, this technical file can itself appear in search results, which provides no value to users.

By adding a noindex directive via an HTTP header, you tell search engines not to display this file in their SERPs. This does not prevent robots from crawling it or from using the URLs it contains.

How Does Google Handle a Noindexed Sitemap?

Google and Bing have confirmed that the noindex directive on a sitemap only affects the visibility of the file itself in search results. Robots continue to download it, analyze it, and discover the listed URLs.

This distinction is crucial: noindex prevents the indexation of the sitemap as a result page, but does not block its role as a guide for crawling. The contained URLs remain perfectly eligible for indexation.

What's the Difference Between Noindex and Robots.txt?

Blocking the sitemap via robots.txt would prevent Google from crawling it and therefore from exploiting its content. This is a common mistake that deprives the search engine of valuable information about the site structure.

The noindex via HTTP header, on the other hand, allows the robot to read the file while preventing it from polluting the index. This is the recommended approach for technical files.
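The difference can be illustrated with a minimal sketch using Python's standard-library robots.txt parser. This is only an approximation: it is not Google's own parser, and the two rule sets, the `can_fetch_sitemap` helper, and the example.com URL are hypothetical values chosen for the demonstration.

```python
from urllib.robotparser import RobotFileParser


def can_fetch_sitemap(robots_txt: str, sitemap_url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt rules let user_agent fetch sitemap_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, sitemap_url)


# Hypothetical robots.txt that blocks the sitemap (the mistake described above).
BLOCKING_RULES = """\
User-agent: *
Disallow: /sitemap.xml
"""

# Hypothetical robots.txt that leaves the sitemap crawlable.
OPEN_RULES = """\
User-agent: *
Disallow:
"""

SITEMAP_URL = "https://example.com/sitemap.xml"

blocked = can_fetch_sitemap(BLOCKING_RULES, SITEMAP_URL)  # crawler may not read the file
allowed = can_fetch_sitemap(OPEN_RULES, SITEMAP_URL)      # crawler may read the file
print(f"blocking rules allow fetch: {blocked}")
print(f"open rules allow fetch: {allowed}")
```

With the blocking rules, a compliant crawler never downloads the sitemap, so it cannot see the listed URLs. A noindex sent as an HTTP header is only seen once the file has been fetched, which is why the two mechanisms are not interchangeable.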

- The XML sitemap can receive a noindex directive without impacting its usefulness

- URLs contained in the sitemap remain normally crawlable and indexable

- This practice prevents the sitemap from appearing in search results

- Never confuse noindex (HTTP header) with robots.txt blocking

- The noindex applies to the sitemap file, not to the URLs it references

SEO Expert opinion

Is This Statement Consistent with Practices Observed in the Field?

In my 15 years of experience, I have indeed noticed that sitemaps sometimes appear in the index, particularly for less authoritative sites or those with internal linking issues. This index pollution is not only unsightly but can also dilute domain authority.

John Mueller's confirmation validates a practice already adopted by some advanced SEOs. This approach is particularly relevant for e-commerce sites with dozens of sitemaps or platforms generating multiple dynamic sitemaps.

What Nuances and Precautions Should Be Taken?

It is crucial to understand that the noindex must be applied via HTTP header, not via a meta tag in the XML. Since the sitemap is an XML file and not HTML, a meta tag would have no effect and could even invalidate the format.

Also be careful not to confuse this practice with noindexing the referenced pages. Setting the sitemap to noindex does not affect the indexation status of the URLs listed inside, which follow their own directives.

In What Cases Is This Practice Really Necessary?

For the majority of sites, the appearance of the sitemap in the index is not a critical problem. Google is generally efficient enough not to display these technical files in standard results.

However, for large sites with complex architecture, platforms with numerous subdomains, or sites with indexation issues for low-quality pages, this practice can be beneficial. It provides an additional layer of control over what appears in the index.

Practical impact and recommendations

How Do You Concretely Noindex a Sitemap?

The recommended method is to configure your web server to send an X-Robots-Tag HTTP header with the value noindex specifically for sitemap files such as sitemap.xml. On Apache, this is done in the .htaccess file with a Header set directive, which requires the mod_headers module.

On Nginx, you add an add_header directive in the location block that matches the sitemap. On a CMS such as WordPress, some advanced SEO plugins let you manage these headers without touching the server configuration.
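For sites where responses are produced by a Python application rather than directly by Apache or Nginx, the same idea can be sketched as a small WSGI middleware. This is an illustration under stated assumptions, not a reference implementation: the `SitemapNoindexMiddleware` class, the `SITEMAP_PATH` naming pattern, and the `demo_app` and `response_headers` helpers are all hypothetical and should be adapted to your own URL scheme.

```python
import re
from wsgiref.util import setup_testing_defaults

# Assumed naming convention: sitemap.xml, sitemap_index.xml, sitemap-products.xml,
# optionally gzipped. Other XML files (feeds, etc.) are deliberately not matched.
SITEMAP_PATH = re.compile(r"/sitemap[\w-]*\.xml(\.gz)?$")


class SitemapNoindexMiddleware:
    """Add 'X-Robots-Tag: noindex' to responses for sitemap paths only."""

    def __init__(self, app):
        self.app = app

    def __call__(self, environ, start_response):
        is_sitemap = SITEMAP_PATH.search(environ.get("PATH_INFO", "")) is not None

        def patched_start_response(status, headers, exc_info=None):
            if is_sitemap:
                # Replace any existing X-Robots-Tag so the header is sent once.
                headers = [h for h in headers if h[0].lower() != "x-robots-tag"]
                headers.append(("X-Robots-Tag", "noindex"))
            return start_response(status, headers, exc_info)

        return self.app(environ, patched_start_response)


def demo_app(environ, start_response):
    """Stand-in application that serves an empty XML document for every path."""
    start_response("200 OK", [("Content-Type", "application/xml")])
    return [b"<urlset/>"]


def response_headers(app, path):
    """Call a WSGI app for the given path and return its headers (lowercased names)."""
    environ = {}
    setup_testing_defaults(environ)
    environ["PATH_INFO"] = path
    captured = {}

    def start_response(status, headers, exc_info=None):
        captured.update({name.lower(): value for name, value in headers})

    list(app(environ, start_response))  # consume the body so the app runs fully
    return captured


wrapped = SitemapNoindexMiddleware(demo_app)
print(response_headers(wrapped, "/sitemap.xml"))
print(response_headers(wrapped, "/feed.xml"))
```

Matching on a narrow path pattern keeps the header off other XML files, such as feeds, that you may want to remain indexable.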

Then verify with the browser developer tools that the X-Robots-Tag: noindex header is indeed present in the HTTP response when accessing the sitemap.
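The same check can be scripted. Below is a minimal sketch using only the Python standard library; the `has_noindex` and `fetch_x_robots_tag` helper names and the user agent string are assumptions made for the example. The parsing is deliberately simplified: it treats a noindex scoped to one crawler (for example `googlebot: noindex`) the same as a global one.

```python
from urllib.request import Request, urlopen


def has_noindex(x_robots_tag_values):
    """Return True if any X-Robots-Tag header value carries a noindex directive."""
    for value in x_robots_tag_values:
        # A value may list several directives, e.g. "noindex, nofollow",
        # and may be prefixed with a crawler name, e.g. "googlebot: noindex".
        for directive in value.split(","):
            name = directive.rsplit(":", 1)[-1].strip().lower()
            if name == "noindex":
                return True
    return False


def fetch_x_robots_tag(url, timeout=10):
    """Send a HEAD request and return every X-Robots-Tag header value (may be empty)."""
    request = Request(url, method="HEAD", headers={"User-Agent": "sitemap-header-check"})
    with urlopen(request, timeout=timeout) as response:
        return response.headers.get_all("X-Robots-Tag") or []


# Example usage (performs a network request; replace the URL with your own sitemap):
# values = fetch_x_robots_tag("https://yourdomain.com/sitemap.xml")
# print(values, has_noindex(values))
```

Some servers answer HEAD requests differently from GET requests, so if the header appears to be missing, compare the result with what the browser developer tools show.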

What Mistakes Should You Avoid During Implementation?

The most common mistake is to block the sitemap via robots.txt thinking you will get the same result. This would prevent Google from crawling it and make the file completely useless.

Also do not attempt to add a meta robots tag in the XML: this would invalidate the sitemap format and could cause errors in Search Console. The noindex must be set through the HTTP header.

Finally, avoid applying this noindex globally to all XML files on your site, as some might need to be indexed according to your content strategy.

What Should You Check After Implementation?

Check in Google Search Console that your sitemaps continue to be processed normally. The Sitemaps report should indicate that Google can still read them and is discovering the contained URLs.

Also confirm that the sitemap file itself gradually drops out of the index. A search for site:yourdomain.com/sitemap.xml should no longer return results after a few weeks.

- Configure the X-Robots-Tag: noindex HTTP header on sitemap files only

- Verify the presence of the header with browser developer tools

- Never block the sitemap via robots.txt

- Check that Search Console still processes sitemaps normally

- Monitor the progressive deindexation of the sitemap file in SERPs

- Maintain the URLs referenced in the sitemap with their own indexation directives

- Document this configuration for future migrations or server changes

The ability to noindex a sitemap without affecting its usefulness for crawling is an advanced technical optimization that requires a solid understanding of indexation mechanisms. This configuration must be implemented carefully at the server level.

For complex sites with significant crawl budget and index cleanliness concerns, this type of optimization is part of an in-depth technical SEO approach. If these aspects seem complex or time-consuming, a specialized SEO agency can help implement these configurations safely and adapt them to your specific infrastructure.
