Official statement

What you need to understand

What is Google's official stance on unavailable servers?

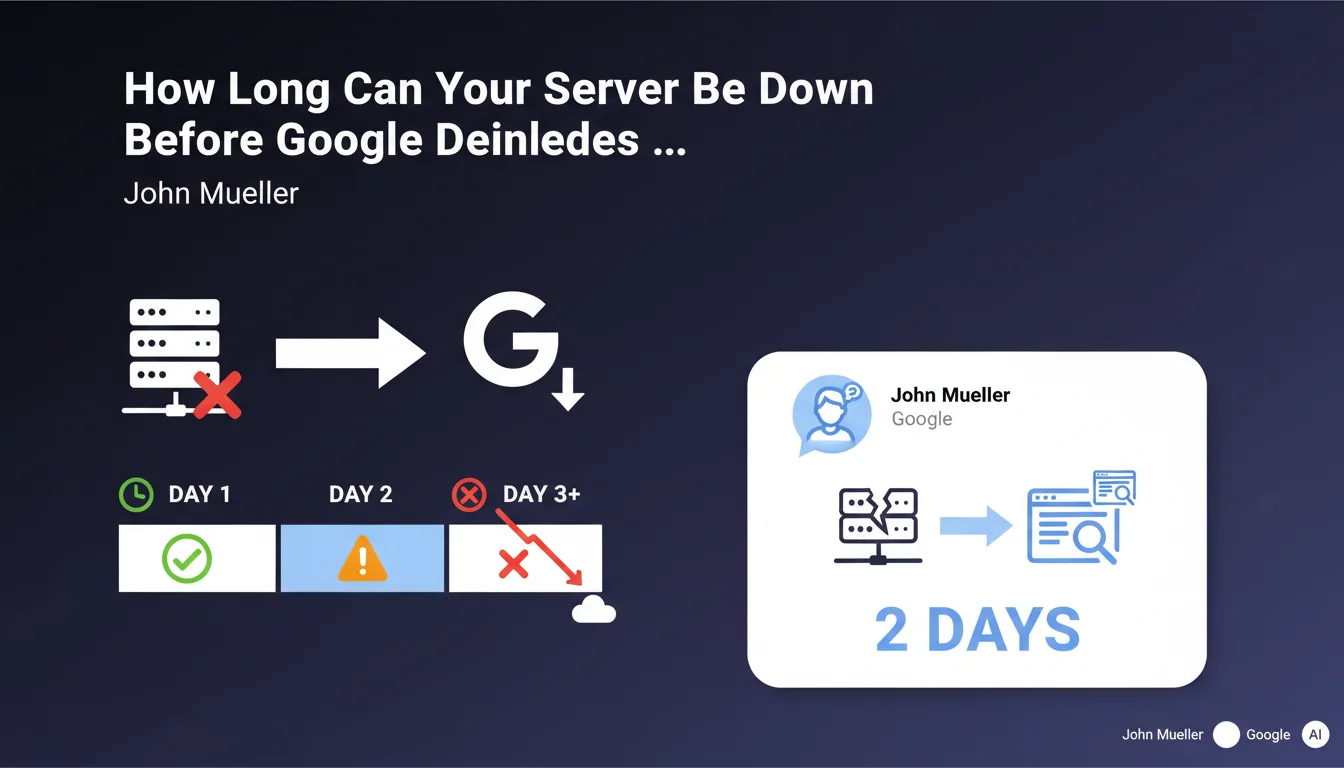

Google has clarified its policy on websites that are inaccessible because of a failing server. According to this official statement, a site can be deindexed if the outage lasts longer than a couple of days, which in practice means more than two consecutive days.

This position marks an important evolution in how Google handles prolonged server errors. The search engine no longer simply waits indefinitely for a site to become accessible again.

Why is this two-day threshold so critical?

Google crawls the web continuously and allocates a crawl budget to each site. When a server is unavailable, Googlebot encounters repeated errors that consume this budget without results.

Beyond 48 hours of unavailability, Google interprets this situation as a major structural problem rather than a temporary incident. The engine then makes the decision to remove pages from its index to avoid offering inaccessible results to users.
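To make the threshold concrete, here is a minimal sketch that measures an outage against the two-day figure. It treats 48 hours as a fixed cutoff, which is a simplifying assumption, and the function names are illustrative.

```python
from datetime import datetime, timedelta

# Assumption: the "two days" from the statement, modelled as a hard cutoff.
DEINDEX_RISK_THRESHOLD = timedelta(days=2)


def outage_exceeds_threshold(started_at: datetime, now: datetime,
                             threshold: timedelta = DEINDEX_RISK_THRESHOLD) -> bool:
    """Return True when the outage has lasted longer than the threshold."""
    return now - started_at > threshold


def time_left_before_threshold(started_at: datetime, now: datetime,
                               threshold: timedelta = DEINDEX_RISK_THRESHOLD) -> timedelta:
    """Return the remaining margin, never less than zero."""
    remaining = threshold - (now - started_at)
    return max(remaining, timedelta(0))
```

An outage that began at 08:00 on Monday would cross this cutoff at 08:00 on Wednesday.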

How can the HTTP 503 code protect your indexing?

The HTTP status code 503 (Service Unavailable) plays a crucial role in this equation. It explicitly tells search engines that the unavailability is temporary, for example during maintenance, rather than a permanent disappearance.

When Google receives a 503 code, it understands that the unavailability is planned or accidental but temporary. This allows it to adapt its behavior and preserve indexing during the interruption period.
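As an illustration of what such a response looks like, the sketch below uses Python's standard `http.server` to answer every request with a 503. The `Retry-After` header is part of the HTTP standard, but the one-hour value, the port and the page text are assumptions, and the statement does not say how Google uses that header.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

RETRY_AFTER_SECONDS = 3600  # assumption: maintenance expected to last about an hour


class MaintenanceHandler(BaseHTTPRequestHandler):
    """Answer every GET or HEAD request with 503 Service Unavailable."""

    def _send_maintenance(self, include_body: bool) -> None:
        body = b"<h1>Down for maintenance</h1><p>Please try again shortly.</p>"
        self.send_response(503)
        self.send_header("Retry-After", str(RETRY_AFTER_SECONDS))
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        if include_body:
            self.wfile.write(body)

    def do_GET(self) -> None:
        self._send_maintenance(include_body=True)

    def do_HEAD(self) -> None:
        self._send_maintenance(include_body=False)


if __name__ == "__main__":
    # Hypothetical local address, for experimentation only.
    HTTPServer(("127.0.0.1", 8080), MaintenanceHandler).serve_forever()
```

In production the same response would normally be produced by the web server or load balancer rather than by a standalone script.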

- Critical threshold: Possible deindexing after 2 days of continuous unavailability

- 503 code mandatory: Essential for signaling temporary maintenance to search engines

- Crawl budget: Repeated errors rapidly exhaust your crawl quota

- Reindexing possible: Return to normal can be relatively quick once the server is restored

- User impact: Google prioritizes search experience by removing inaccessible sites

SEO Expert opinion

Does this statement align with real-world observations?

This position from Google does indeed correspond to observations by SEO professionals over several years. Cases of rapid deindexing following prolonged server outages are documented and recurring.

However, the notion of "a couple of days" remains intentionally vague and contextual. In practice, certain sites with strong authority or a stable history may benefit from slightly greater tolerance, while newer or less established sites risk faster deindexing.

What nuances should be applied to this two-day rule?

The two-day deadline is not an absolute limit but rather an indicative threshold. Several factors influence Google's decision: the site's usual crawl frequency, its overall authority, and the nature of the errors encountered.

It's also necessary to distinguish total outages from slowdowns. A server that responds slowly (frequent timeouts) without being completely down can also hurt indexing, but through a different mechanism: Googlebot tends to reduce its crawl rate when responses are slow or time out, and poor response times also degrade user experience signals such as Core Web Vitals.

In what cases is the return to normal truly fast?

The statement mentions that reindexing can be faster after restoration. This claim needs qualifying: it mainly applies to sites with a good track record and a typically solid infrastructure.

In reality, if your site has been completely deindexed, the complete reindexing process can take several days to several weeks depending on the site's size. Google doesn't instantly reindex all pages as soon as the server becomes accessible again; it resumes crawling progressively.

Practical impact and recommendations

What should you implement immediately to protect yourself?

The first critical action is to configure a robust server monitoring system with real-time alerts. You must be notified within 5 minutes of an outage, not after several hours.
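A monitoring rule of this kind can be sketched with the standard library alone. The example assumes one probe per minute, so five consecutive failures correspond to the five-minute window mentioned above. The function names and the use of `HEAD` requests are illustrative choices, not a prescribed setup.

```python
import urllib.error
import urllib.request
from typing import Optional, Sequence


def probe(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status code, or None when no response arrives."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # the server answered, but with a 4xx or 5xx code
    except OSError:
        return None  # DNS failure, refused connection, timeout, ...


def should_alert(statuses: Sequence[Optional[int]], max_failures: int = 5) -> bool:
    """Alert when the last `max_failures` probes all failed (no response or 5xx)."""
    recent = list(statuses)[-max_failures:]
    return len(recent) == max_failures and all(
        status is None or status >= 500 for status in recent
    )
```

A scheduler would call `probe` once a minute, append the result to a history list, and send the SMS or email as soon as `should_alert` returns `True`.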

Next, ensure your infrastructure is capable of automatically serving a 503 code in case of planned maintenance or detection of a failure. This configuration must be tested regularly, ideally every quarter.
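One way to make the 503 switchable is a small WSGI middleware that checks for a flag file. This is a sketch under assumptions: the flag path is hypothetical, and the same idea is often implemented at the web server or load balancer level instead.

```python
import os

MAINTENANCE_FLAG = "/tmp/maintenance.flag"  # hypothetical path, adjust to your deployment


def maintenance_middleware(app, flag_path=MAINTENANCE_FLAG, retry_after=3600):
    """Wrap a WSGI app so it answers 503 while the flag file exists."""

    def wrapped(environ, start_response):
        if os.path.exists(flag_path):
            body = b"Service temporarily unavailable for maintenance."
            start_response("503 Service Unavailable", [
                ("Content-Type", "text/plain; charset=utf-8"),
                ("Content-Length", str(len(body))),
                ("Retry-After", str(retry_after)),
            ])
            return [body]
        # No flag file: pass the request through to the real application.
        return app(environ, start_response)

    return wrapped
```

Creating the file turns maintenance mode on and deleting it turns it off, so a deployment script or a failure detector can flip the switch without restarting the application.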

Finally, establish a documented disaster recovery plan with clear procedures and identified responsible parties. Response time is crucial: every hour counts when your site is inaccessible.

What critical mistakes must you absolutely avoid?

The most frequent error is letting the server return 500 codes or timeouts without corrective action. These errors are interpreted more negatively than a properly configured 503 code.

Another pitfall: serving a maintenance page, for example one rendered with JavaScript, that returns a 200 code. Because the status says everything is fine, Google treats the maintenance message as the page's real content and may index it in place of the original, or classify the URL as a soft 404.
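A rough check for this pitfall is to flag any successful response whose body reads like a maintenance notice. The marker phrases below are illustrative assumptions and would need tuning for a real site. The check also only sees the HTML delivered by the server, not text injected later by JavaScript.

```python
# Illustrative phrases only; adapt them to the wording of your own maintenance page.
MAINTENANCE_MARKERS = ("maintenance", "temporarily unavailable", "be back soon")


def is_masked_outage(status: int, body: str, markers=MAINTENANCE_MARKERS) -> bool:
    """Return True when a maintenance-looking page is served with a 2xx status."""
    text = body.lower()
    return 200 <= status < 300 and any(marker in text for marker in markers)
```

For example, `is_masked_outage(200, "<h1>Site under maintenance</h1>")` is `True`, while the same body served with a 503 is not flagged.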

Never neglect Google Search Console. If an outage occurs, document it and monitor the coverage reports to quickly detect any indexing issues.

How can you verify and maintain your infrastructure's compliance?

Implement regular automated tests that simulate outages and verify that the correct HTTP codes are being returned. These tests must cover different scenarios: server failure, planned maintenance, temporary overload.
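Such a test suite could be built around a small audit function applied to the response observed in each scenario. In the sketch below the observations are hypothetical sample data, and treating a missing `Retry-After` header as a problem is a choice of this example: the header is optional in HTTP.

```python
def audit_outage_response(status, headers):
    """List the problems with a response observed while the site is down."""
    problems = []
    if status is None:
        problems.append("no response (timeout or connection refused)")
    elif status != 503:
        problems.append(f"expected 503, got {status}")
    elif "retry-after" not in {name.lower() for name in headers}:
        problems.append("503 without a Retry-After header")
    return problems


# Hypothetical observations, one per scenario named above.
OBSERVED = {
    "server failure": (None, {}),
    "planned maintenance": (503, {"Retry-After": "3600"}),
    "temporary overload": (500, {}),
}


def run_audit(observed):
    """Map each scenario to the list of problems found in its response."""
    return {
        scenario: audit_outage_response(status, headers)
        for scenario, (status, headers) in observed.items()
    }
```

With this sample data only the planned maintenance scenario passes cleanly. The other two would show up in the report as needing a fix.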

Audit your hosting infrastructure at least quarterly: check your host's SLAs, test your backups, and ensure your failover procedures actually work.

- Configure monitoring with instant alerts (SMS/email) for any unavailability

- Automatically return an HTTP 503 code during maintenance or a detected outage

- Test the 503 configuration at least every 3 months in a staging environment

- Document a disaster recovery plan with a restoration objective of 4 hours maximum

- Set up daily automated server availability tests

- Verify that your host guarantees a minimum uptime of 99.9% in their SLA

- Configure daily automatic backups with monthly restoration tests

- Monitor Google Search Console weekly to detect crawl errors

- Plan for failover infrastructure for critical sites

- Train multiple team members in server emergency procedures
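To put the 99.9% uptime figure from the checklist in perspective, the following sketch converts an SLA percentage into the downtime it allows. The 30-day month is an assumption, since hosts define their billing periods differently.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: float = 30) -> float:
    """Return the downtime, in minutes, that an uptime guarantee still permits."""
    return (1 - uptime_percent / 100) * period_days * 24 * 60
```

At 99.9% this comes to roughly 43 minutes per 30-day month, or about 8.8 hours over a 365-day year. Both figures are far below the two-day threshold, so an outage long enough to threaten indexing would also be a serious SLA breach.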
