Official statement

What you need to understand

What exactly does Google consider as content scraping?

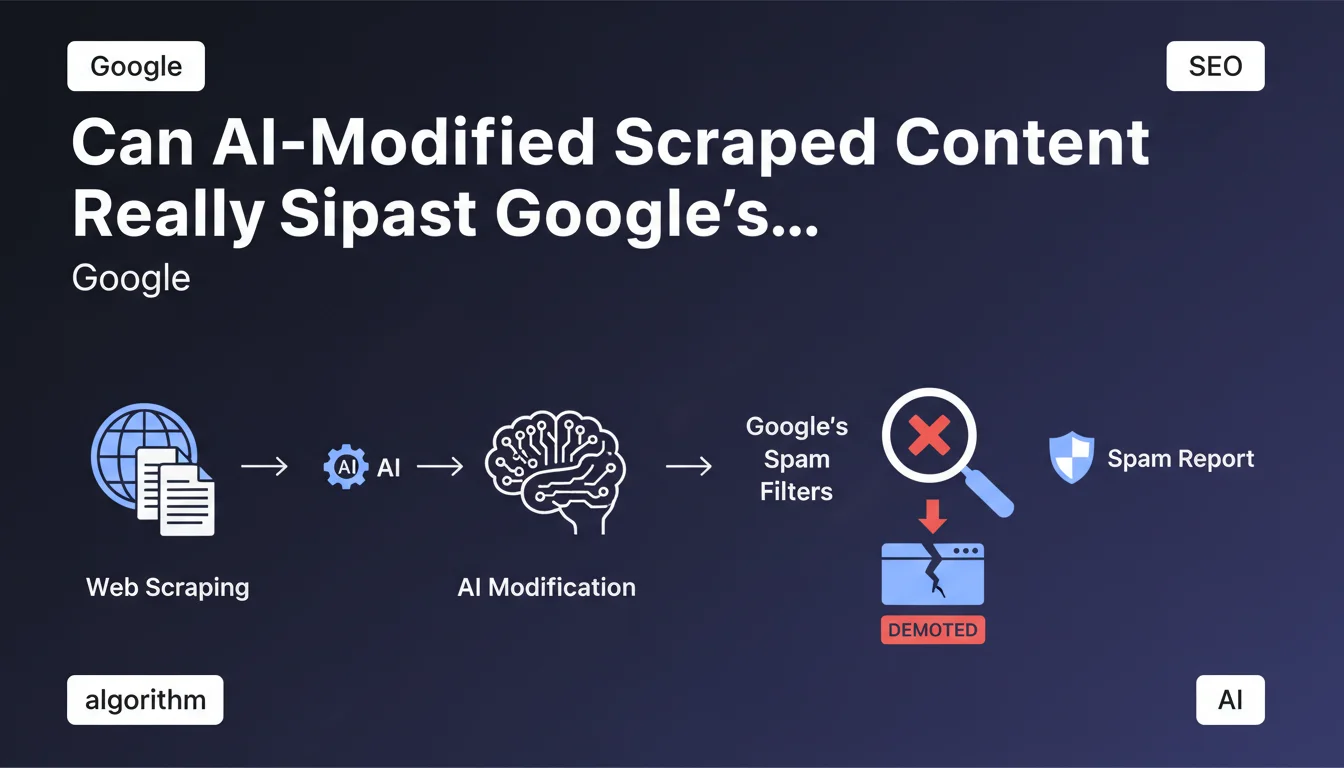

Content scraping means copying text published on other websites and republishing it on your own site. Google specifies that running this content through artificial intelligence algorithms does not change its status: it remains a violation of its anti-spam policies.

This position reflects Google's determination to penalize sites that do not create original value for users. The mere automated transformation of existing content is not enough to make it legitimate in the eyes of the search engine.

How does Google detect these scraping practices?

Google has numerous algorithms specifically designed to identify duplicated or weakly transformed content. These systems analyze semantic similarities and suspicious publication patterns.

When a site is identified as practicing scraping, it is demoted in search results. The penalty can affect the entire domain and do lasting damage to its visibility.

Why does Google encourage spam reports despite their limited effectiveness?

Spam reports constitute an additional signal for Google, even though their processing is not immediate. They help enrich the training data for detection algorithms.

In practice, spam sites can keep operating for several months before they are detected, which highlights the current limits of Google's automated systems.

- AI-modified scraping is still considered spam by Google

- Detection algorithms exist but are not instantaneous

- Penalties can affect the entire domain

- Creating original content remains the only viable long-term approach

SEO Expert opinion

Does this statement really reflect the current situation on the ground?

In practice, we see every day that sites publishing AI-transformed scraped content still perform very well in the SERPs. This gap between the official line and what happens on the ground shows the limits of Google's current algorithms.

The gap between detection and action can stretch to 6 to 12 months, or even longer. During that time, spam sites can generate considerable traffic and monetize their audience before being penalized.

What nuances should be brought to this official Google position?

There is a significant gray area between pure scraping and legitimately enriched curation. Some sites aggregate content from multiple sources and add substantial analysis, which can be acceptable.

The fundamental difference lies in the added value. If your own intervention and expertise produce a unique and useful perspective, your content no longer falls into the category of scraping that Google penalizes.

In what cases can you legitimately reuse existing content?

Quotations with attribution, short excerpts used in an analytical context, and authorized syndication are perfectly legitimate. What matters is the consent of the original creator and the proportion of original to reused content.

An article that quotes 3-4 sources with 200 words of excerpts in 2000 words of original content generally poses no problem. The ratio and editorial intent make all the difference.
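To make the ratio concrete, here is a minimal sketch of the arithmetic. It assumes the 200 quoted words come on top of the 2000 original words (the sentence above could also be read as 200 words within a 2000-word total, which gives 10%). The function name `quoted_share` is hypothetical, and the result is only a rough indicator, not a threshold Google publishes.

```python
def quoted_share(quoted_words: int, original_words: int) -> float:
    """Return the fraction of an article's total words that are quoted excerpts."""
    if quoted_words < 0 or original_words < 0:
        raise ValueError("word counts must be non-negative")
    total = quoted_words + original_words
    if total == 0:
        raise ValueError("the article has no words")
    return quoted_words / total


# The example from the text: 200 quoted words alongside 2000 original words.
share = quoted_share(200, 2000)
print(f"{share:.1%} of the article is quoted material")  # about 9.1%
```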

Practical impact and recommendations

How can you verify that your content strategy complies with Google guidelines?

Use tools like Copyscape or Siteliner to measure how similar your content is to other web pages. Treat a duplication rate above 30% as a warning sign.
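For a rough idea of what a duplication rate can mean, the sketch below compares two texts by their overlapping five-word sequences (word shingles). This is a common textbook approach and only an illustration: it is not how Copyscape, Siteliner, or Google compute their scores, and the function names and the shingle size of 5 are assumptions made for this example.

```python
import re


def shingles(text: str, size: int = 5) -> set:
    """Split text into lowercase words and return the set of word n-grams."""
    words = re.findall(r"\w+", text.lower())
    if not words:
        return set()
    if len(words) < size:
        # Text shorter than one shingle: treat the whole text as a single unit.
        return {tuple(words)}
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def duplication_rate(page: str, other: str, size: int = 5) -> float:
    """Share of the page's shingles that also appear in the other text (0.0 to 1.0)."""
    own = shingles(page, size)
    if not own:
        return 0.0
    return len(own & shingles(other, size)) / len(own)


page = "one two three four five six"
source = "one two three four five seven"
print(duplication_rate(page, source))  # 0.5: one of the page's two shingles is shared
```

A rate computed this way only catches near-verbatim reuse; paraphrased or AI-rewritten text would score low here even though the article makes clear it is still treated as scraping.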

Evaluate each page according to the principle of substantial added value. Ask yourself honestly: does this content bring something unique that the user wouldn't find elsewhere?

What actions should you take if you already have problematic content?

Conduct a comprehensive audit of your site to identify at-risk pages. Prioritize those that generate the most traffic or are strategically important.

For each problematic page, you have three options: rewrite it completely, substantially enrich it with original content, or delete it and 301-redirect its URL to a relevant page.

- Audit the entire site with duplication detection tools

- Identify pages with a similarity rate above 30%

- Thoroughly rewrite or remove at-risk content

- Establish an original content creation process with qualified writers

- Train your teams in editorial best practices

- Implement systematic quality review before publication

- Document your sources and citations transparently

- Regularly monitor your rankings to detect potential penalties
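The first audit steps above can be sketched as a simple triage: flag pages above the 30% similarity threshold and handle the highest-traffic ones first. This is a minimal sketch under stated assumptions: the `Page` fields, the example URLs, and the idea that similarity scores and visit counts have already been exported from your own tools are all hypothetical.

```python
from dataclasses import dataclass

# The article's rule of thumb, expressed as a fraction.
SIMILARITY_THRESHOLD = 0.30


@dataclass
class Page:
    url: str
    similarity: float    # 0.0 to 1.0, as reported by a duplication tool
    monthly_visits: int  # traffic figure from your analytics export


def at_risk_pages(pages, threshold=SIMILARITY_THRESHOLD):
    """Return pages above the similarity threshold, highest traffic first."""
    flagged = [p for p in pages if p.similarity > threshold]
    return sorted(flagged, key=lambda p: p.monthly_visits, reverse=True)


# Hypothetical audit data for illustration only.
audit = [
    Page("https://example.com/guide", similarity=0.12, monthly_visits=9000),
    Page("https://example.com/news-roundup", similarity=0.55, monthly_visits=1200),
    Page("https://example.com/product-faq", similarity=0.41, monthly_visits=4800),
]

for page in at_risk_pages(audit):
    print(f"{page.url}: {page.similarity:.0%} similar, {page.monthly_visits} visits/month")
```

Sorting by traffic reflects the advice to prioritize pages that matter most; pages that are strategically important but low in traffic would need to be added to the queue by hand.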

What content strategy should you adopt for the long term?

Invest in a strong editorial line based on your business expertise. Original content takes longer to produce but generates lasting results without risk of penalty.

Favor quality over quantity. A truly useful and unique 2000-word article will always outperform 10 mediocre scraped and transformed articles.
