Official statement

What you need to understand

What's the difference between Google's advertising bots and search bots?

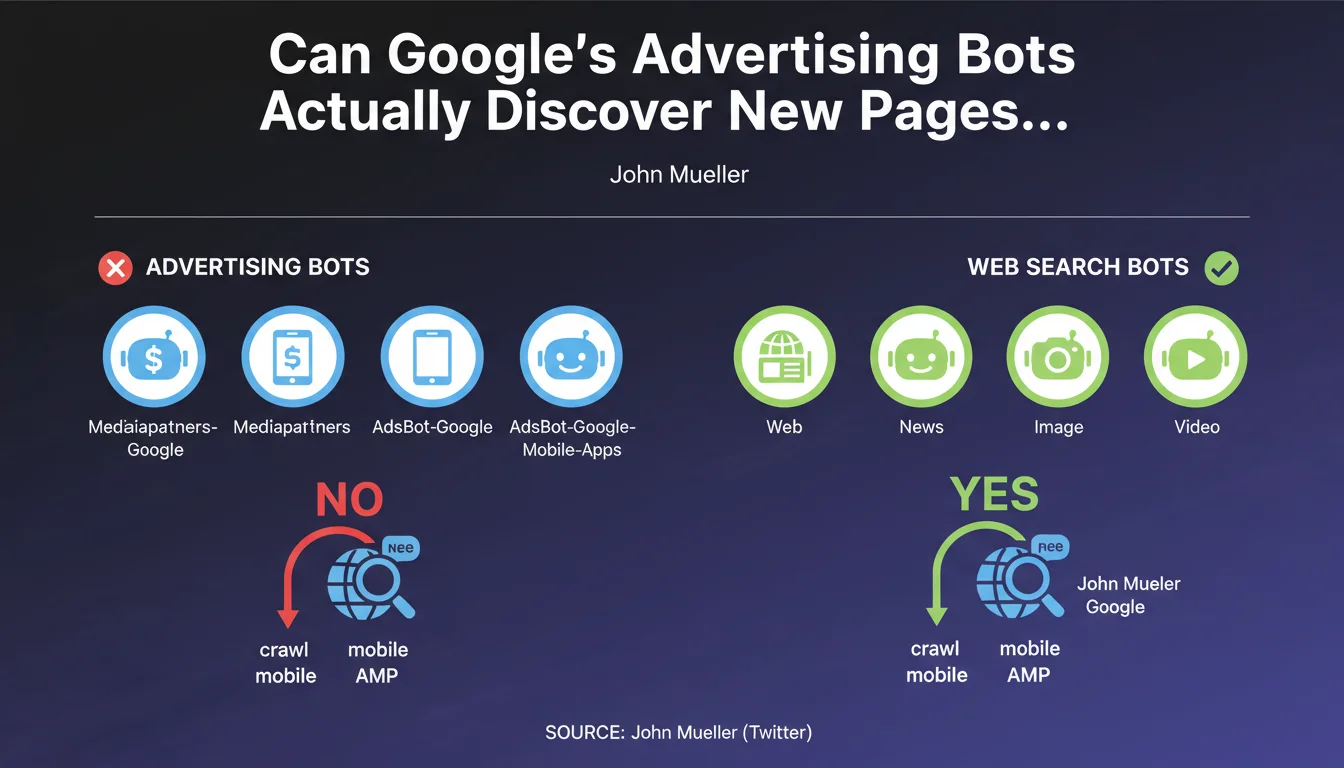

Google runs several distinct types of robots, each with its own mission. Advertising bots like Mediapartners-Google and AdsBot-Google specialize in analyzing content for ad targeting in AdSense and AdWords (now Google Ads).

In contrast, web search robots (Googlebot for web, images, videos or news) are responsible for crawling, discovering and indexing pages. This separation of roles is fundamental to understanding how Google's ecosystem works.

Why does this distinction matter for SEO?

This clarification means that allowing only advertising bots in your robots.txt file will never get your pages indexed. Only Googlebot (the search bot) can discover new URLs and submit them to Google's index.

If you block Googlebot while allowing advertising bots, your pages will remain invisible in search results, even if they can display properly targeted AdSense ads.
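The scenario above can be reproduced locally. The following is a minimal sketch that uses Python's standard `urllib.robotparser` module; the robots.txt content and the `example.com` URL are illustrative assumptions, and Python's parser only approximates Google's own matching rules, so treat the result as a sanity check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: advertising crawlers allowed, everything else blocked.
ROBOTS_TXT = """\
User-agent: Mediapartners-Google
Allow: /

User-agent: AdsBot-Google
Allow: /

User-agent: *
Disallow: /
"""

# Placeholder URL used only for this illustration.
URL = "https://example.com/new-article"


def allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if user_agent may fetch url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for bot in ("Mediapartners-Google", "AdsBot-Google", "Googlebot"):
    print(bot, allowed(ROBOTS_TXT, bot, URL))
```

With this file, the parser reports both advertising crawlers as allowed and Googlebot as blocked, which is exactly the situation where ads can be targeted but the page cannot be indexed.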

What are the exact names of the bots affected by this limitation?

The advertising bots mentioned include Mediapartners-Google, Mediapartners, AdsBot-Google and AdsBot-Google-Mobile-Apps. None of these user agents contribute to discovering new URLs.

The bots capable of discovering content are exclusively those dedicated to search: Googlebot, Googlebot-News, Googlebot-Image and Googlebot-Video.

- Advertising bots (Mediapartners, AdsBot) do not discover new pages

- Only search bots (Googlebot) can index content

- Blocking Googlebot prevents indexing, even if advertising bots are allowed

- This separation is intentional and structural at Google
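The two groups listed above can be captured in a small lookup, which is handy when auditing robots.txt files or log data. This is only a sketch: the function names `bot_role` and `can_discover_urls` are hypothetical, and the token sets contain only the names mentioned in this article, not an exhaustive list of Google crawlers.

```python
# User-agent tokens taken from the lists in this article (not exhaustive).
ADVERTISING_BOTS = {
    "mediapartners-google",
    "mediapartners",
    "adsbot-google",
    "adsbot-google-mobile-apps",
}
SEARCH_BOTS = {
    "googlebot",
    "googlebot-news",
    "googlebot-image",
    "googlebot-video",
}


def bot_role(token: str) -> str:
    """Classify a robots.txt user-agent token as 'search', 'advertising' or 'unknown'."""
    key = token.strip().lower()
    if key in SEARCH_BOTS:
        return "search"
    if key in ADVERTISING_BOTS:
        return "advertising"
    return "unknown"


def can_discover_urls(token: str) -> bool:
    """Per the rule described here, only search bots discover new URLs."""
    return bot_role(token) == "search"


for name in ("Googlebot-Image", "AdsBot-Google", "SomeOtherBot"):
    print(name, bot_role(name), can_discover_urls(name))
```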

SEO Expert opinion

Is this statement consistent with what we observe in the field?

Absolutely. This clarification from John Mueller confirms what SEO professionals have been observing for years. Sites that block Googlebot but allow advertising bots never see their new pages indexed, even after months.

This distinction also explains why some sites monetized with AdSense can function without advertising problems while having severe indexation issues. The two systems are truly independent in their operation.

What nuances should be applied to this rule?

It's important to understand that this limitation only concerns the initial discovery of new URLs. Once a page is known to Google via Googlebot, advertising bots can of course access it to analyze the content.

Furthermore, this rule doesn't mean that advertising bots are useless. Their role in contextual ad targeting remains crucial for publishers using AdSense, but this role is completely separate from the SEO indexation process.

In what cases does this information change our practices?

This statement is particularly critical for sites that try to fine-tune their robots.txt permissions. Some webmasters mistakenly think they can improve their advertising revenue by allowing only AdSense bots.

Practical impact and recommendations

How can I verify the robot configuration on my site?

Start by examining your robots.txt file to identify all User-agent directives and their associated rules. Verify that Googlebot is not blocked, even partially.

Then use the URL Inspection tool in Search Console to test Googlebot's access to your strategic pages. This tool will clearly indicate if any blocks are preventing crawling and indexation.
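Before reaching for Search Console, a quick offline check of the robots.txt rules can catch partial blocks. The sketch below assumes a hypothetical helper named `blocked_search_bots`, a made-up robots.txt sample, and placeholder `example.com` URLs. It relies on Python's `urllib.robotparser`, which uses first-match rules and may disagree with Google's longest-match evaluation on complex files, so it complements the URL Inspection tool rather than replacing it.

```python
from urllib.robotparser import RobotFileParser

# Search crawler tokens named in this article.
SEARCH_BOTS = ("Googlebot", "Googlebot-News", "Googlebot-Image", "Googlebot-Video")


def blocked_search_bots(robots_txt: str, urls, bots=SEARCH_BOTS) -> dict:
    """Map each URL to the list of search bots that robots_txt blocks for it.

    URLs that every bot may fetch are left out of the result.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    report = {}
    for url in urls:
        blocked = [bot for bot in bots if not parser.can_fetch(bot, url)]
        if blocked:
            report[url] = blocked
    return report


# Hypothetical file with a partial block on one directory.
SAMPLE_ROBOTS = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow:
"""

# Placeholder "strategic pages" for the illustration.
STRATEGIC_URLS = [
    "https://example.com/",
    "https://example.com/private/report",
]

report = blocked_search_bots(SAMPLE_ROBOTS, STRATEGIC_URLS)
for url, bots in report.items():
    print(url, "blocked for:", ", ".join(bots))
```

An empty report means none of the listed search bots is blocked for the tested URLs under this parser's interpretation.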

What configuration errors should absolutely be avoided?

The most common error is to block Googlebot while allowing advertising bots, on the assumption that this will be sufficient for indexation. This configuration guarantees that your SEO strategy will fail.

Another error: allowing only specific versions of Googlebot (like Googlebot-Mobile) while blocking the general version. For optimal indexation coverage, you must allow all of Google's search bots.

- Verify that Googlebot (general version) is allowed in robots.txt

- Also allow Googlebot-Image, Googlebot-Video and Googlebot-News depending on your content

- Never rely on advertising bots for indexation

- Regularly test the accessibility of your pages via Search Console

- Document all your robots.txt rules to avoid accidental modifications

- Monitor server logs to identify actual crawl patterns
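For the log-monitoring item in the checklist above, a rough count of hits per Google crawler is often enough to see whether search bots actually reach the site. The sketch below assumes access logs in the common "combined" format, where the user agent is the last quoted field; the function name `count_google_hits` and the sample lines (with documentation-range IP addresses) are invented for illustration. User-agent strings can be spoofed, so these counts are indicative only.

```python
import re
from collections import Counter

# Tokens from this article, most specific first so that
# "Googlebot-Image" is not counted as plain "Googlebot".
GOOGLE_TOKENS = (
    "AdsBot-Google-Mobile-Apps",
    "AdsBot-Google",
    "Mediapartners-Google",
    "Googlebot-News",
    "Googlebot-Image",
    "Googlebot-Video",
    "Googlebot",
)

QUOTED_FIELD = re.compile(r'"([^"]*)"')


def count_google_hits(log_lines) -> Counter:
    """Count requests per Google crawler token found in the user-agent field."""
    counts = Counter()
    for line in log_lines:
        fields = QUOTED_FIELD.findall(line)
        if not fields:
            continue
        user_agent = fields[-1].lower()  # last quoted field in combined format
        for token in GOOGLE_TOKENS:
            if token.lower() in user_agent:
                counts[token] += 1
                break
    return counts


# Invented sample lines for the illustration.
SAMPLE_LOG = [
    '192.0.2.1 - - [10/Jan/2024:10:00:00 +0000] "GET /a HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '192.0.2.2 - - [10/Jan/2024:10:00:05 +0000] "GET /a HTTP/1.1" 200 512 "-" '
    '"Mediapartners-Google"',
    '192.0.2.3 - - [10/Jan/2024:10:00:09 +0000] "GET /a HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
]

hits = count_google_hits(SAMPLE_LOG)
for token, n in hits.items():
    print(token, n)
```

If advertising tokens appear regularly while Googlebot never does, that pattern is worth checking against the robots.txt rules.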

What should you do if you have complex robot management needs?

Robots.txt configurations can quickly become complex, particularly on large-scale sites or e-commerce platforms with thousands of pages. A syntax error or poorly ordered rule can block the indexation of entire sections.

For sites with significant SEO stakes, it's often wise to rely on specialized SEO expertise capable of thoroughly auditing your configurations, identifying subtle blocks, and implementing an optimized crawl strategy. Personalized support helps avoid costly errors and ensures that your technical architecture truly supports your visibility objectives.
