Official statement

What you need to understand

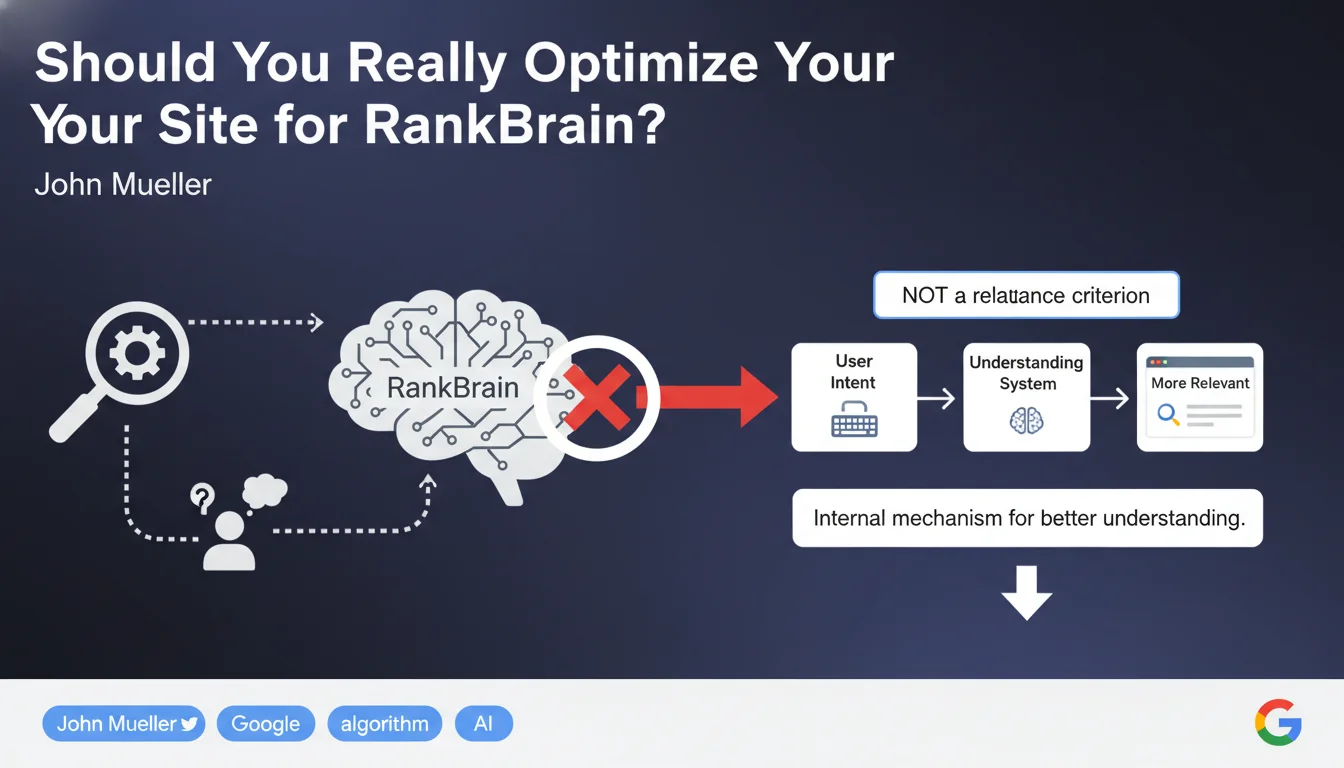

What exactly is RankBrain and how does it actually work?

RankBrain is an artificial intelligence component of Google Search that relies on historical search data to handle queries it has never seen before. Contrary to popular belief, it doesn't act on user signals in real time.

The system predicts what users would likely click on based on similar past queries. This prediction is based on analyzing search results pages, not on user behavior after the click.
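As a rough illustration of "predicting from similar past queries," here is a toy sketch in Python. It is not Google's implementation, which is not public: the log data, the `predict_click` function, the token-overlap (Jaccard) similarity, and the `min_similarity` threshold are all assumptions chosen only to make the idea concrete.

```python
from collections import Counter

# Hypothetical historical log of (past query, result that was clicked).
# Illustrative data only; real systems use far richer signals.
HISTORICAL_CLICKS = [
    ("best running shoes for flat feet", "shoes-guide.example/flat-feet"),
    ("running shoes flat feet review", "shoes-guide.example/flat-feet"),
    ("cheap running shoes", "deals.example/running"),
    ("how to clean running shoes", "howto.example/clean-shoes"),
]


def jaccard(a, b):
    """Token-overlap similarity between two sets of words (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def predict_click(new_query, log=HISTORICAL_CLICKS, min_similarity=0.2):
    """Guess the likely click for an unseen query from similar past queries.

    Only the stored log is consulted; nothing is measured live.
    """
    new_tokens = set(new_query.lower().split())
    scores = Counter()
    for past_query, clicked in log:
        similarity = jaccard(new_tokens, set(past_query.lower().split()))
        if similarity >= min_similarity:
            # Each sufficiently similar past query votes for its clicked result.
            scores[clicked] += similarity
    if not scores:
        return None  # no similar history to learn from
    return scores.most_common(1)[0][0]


if __name__ == "__main__":
    print(predict_click("running shoes for someone with flat feet"))
    print(predict_click("quantum tunneling"))
```

The point of the sketch is the data flow: the prediction for a new query comes entirely from a stored log, so a change in today's click behavior has no effect until the log itself is refreshed.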

Why does Google specify that the data used is old?

The data used by RankBrain can be several months old. This detail matters: it means the algorithm cannot react instantly to variations in CTR or time spent on site.

RankBrain was designed to overcome the limitations of traditional algorithms, particularly in understanding complex queries containing negations or unusual phrasings.

Which user signals are therefore excluded from ranking?

The statement sweeps away several persistent SEO myths: according to Google, pogosticking (a quick return to the results page), dwell time (time spent on a page), and CTR (click-through rate) are not direct ranking factors.

These behavioral metrics, often presented as essential, are not part of the relevance algorithms according to this official position.

- RankBrain predicts potential clicks; it doesn't measure them in real time

- The data used is historical and can be several months old

- The analysis focuses on SERPs, not on landing pages

- Pogosticking, dwell time, and CTR are not direct ranking factors

- User experience doesn't directly influence relevance algorithms

SEO Expert opinion

Is this statement completely consistent with field observations?

The position expressed here deserves a nuanced analysis. Even if RankBrain doesn't directly use behavioral signals for ranking, those signals may still indirectly influence other Google systems.

Correlation studies regularly show links between CTR/dwell time and positioning. But correlation is not causation: these metrics may be consequences of good ranking rather than causes.
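To make the "consequence, not cause" argument concrete, here is a small simulation sketch in Python. Every number in it is an assumption for illustration (the `0.30 / position` CTR curve, the noise range, the page count); it is not real search data. Positions are assigned at random, and CTR is then derived from position alone, so CTR cannot possibly be causing the ranking.

```python
import random


def simulate(n_pages=2000, seed=0):
    """Simulate pages whose CTR depends ONLY on the position they hold."""
    rng = random.Random(seed)
    positions, ctrs = [], []
    for _ in range(n_pages):
        position = rng.randint(1, 10)      # rank assigned independently of CTR
        expected_ctr = 0.30 / position     # assumed decay curve, illustrative only
        noise = rng.uniform(-0.01, 0.01)   # small random variation per page
        positions.append(position)
        ctrs.append(max(0.0, expected_ctr + noise))
    return positions, ctrs


def pearson(xs, ys):
    """Pearson correlation coefficient, written out to avoid dependencies."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


if __name__ == "__main__":
    positions, ctrs = simulate()
    print(f"correlation(position, CTR) = {pearson(positions, ctrs):.2f}")
```

The simulation yields a strong negative correlation between position number and CTR (better positions, higher CTR) even though CTR has no influence on ranking by construction. A correlation study run on such data would look exactly like evidence for CTR as a ranking factor.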

It's also possible that other components of Google's overall algorithm (beyond RankBrain) use this data, even if this isn't the case for relevance algorithms strictly speaking.

What essential nuances should be added to this picture?

The distinction between ranking factors and learning signals is fundamental. RankBrain was trained on past behavioral data, even if it doesn't use that data in real time for each query.

Moreover, Google uses user signals in its quality tests and evaluations. This data feeds the continuous improvement of its algorithms, even though the signals themselves are not direct ranking factors.

In what context could this rule evolve?

Google regularly updates its systems. This statement reflects the state of RankBrain at a specific moment, but the architecture can change with algorithmic updates.

Core Web Vitals and the page experience update show that Google is progressively integrating more user experience signals, even if it's within a framework distinct from pure relevance algorithms.

Practical impact and recommendations

What should you actually do as an SEO practitioner?

Focus on the fundamentals of relevance: content quality, semantic architecture, topical authority, and traditional signals. These elements remain the core of effective SEO.

Don't neglect user experience, however. A site with excellent UX naturally generates indirect positive signals: more social shares, more incoming links, better brand awareness.

Stop optimizing specifically for metrics like dwell time or pogosticking. If your only goal is to manipulate a supposed ranking factor, these efforts are probably futile.

What strategic mistakes should you absolutely avoid?

Don't fall into the trap of over-complicating your SEO approach. Many professionals waste time on marginal optimizations based on unverified theories.

Avoid justifying poor user experience on the grounds that "Google doesn't measure it." Your visitors perceive it, and it impacts your business overall.

- Prioritize content quality and relevance for your real users

- Optimize your site's technical and semantic architecture

- Work on your topical authority through expert content

- Develop a natural, quality link strategy

- Improve UX for your visitors, not to manipulate metrics

- Simplify your approach: focus on what has proven effective

- Don't scatter your efforts optimizing unconfirmed signals

- Measure your success through business KPIs, not just technical ones

How should you adjust your SEO strategy in light of these revelations?

Revise your optimization priorities by focusing on confirmed signals: quality content, E-E-A-T, technical architecture, loading speed, mobile-friendliness, and natural backlinks.

Adopt a holistic approach where SEO serves your overall digital strategy, rather than seeking algorithmic shortcuts. This long-term vision better withstands the constant evolution of search engines.

Google's statement on RankBrain reminds us of the importance of returning to SEO fundamentals. Relevance algorithms focus on the match between query and content, not on real-time behavioral metrics.

However, user experience remains crucial for your business performance. The balance between technical optimization, content quality, and user experience requires sharp expertise and constant monitoring of algorithmic developments. Given the growing complexity of the SEO ecosystem and the many subtleties in interpreting official statements, support from a specialized SEO agency can prove valuable for developing a truly effective strategy and avoiding false leads that waste time and resources.
