Official statement

What you need to understand

Why Does Google Need to See the Full Content Behind a Paywall?

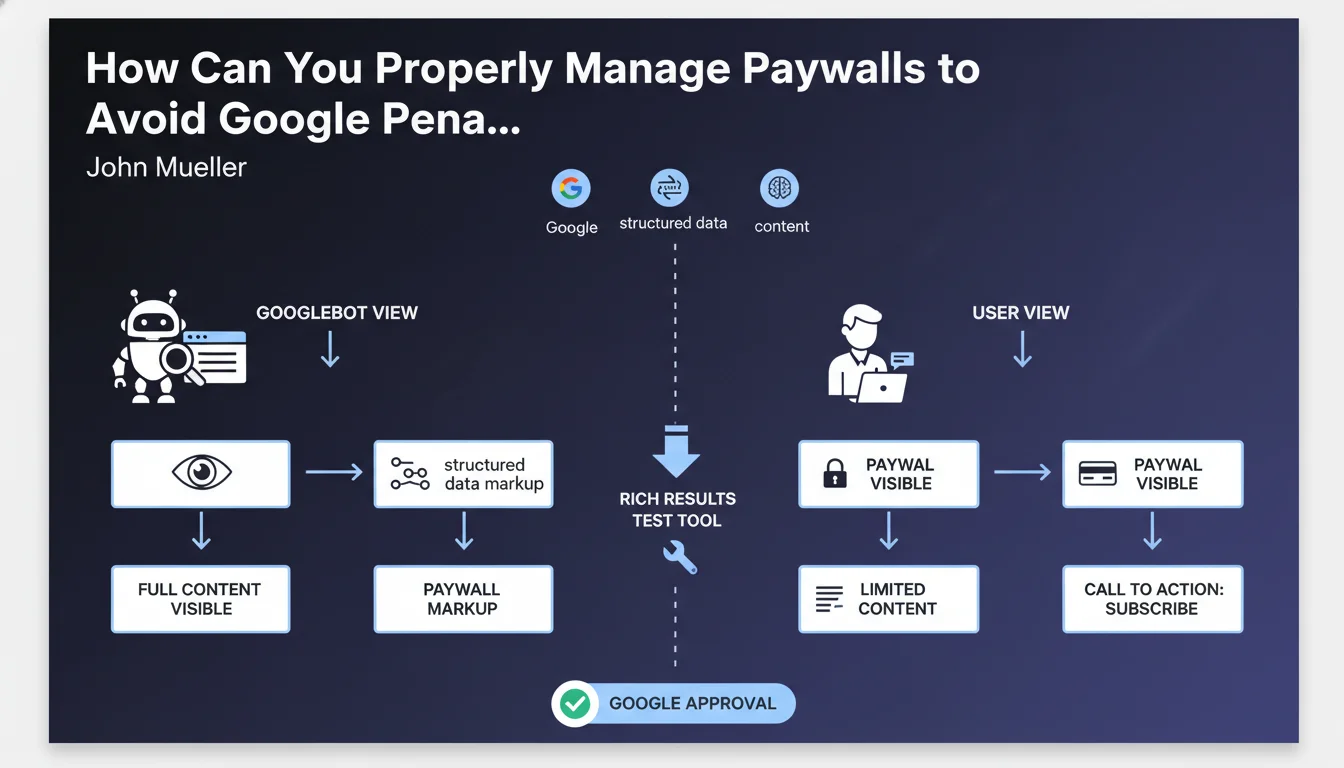

Google must understand the relevance and quality of your content to rank it in its search results. If Googlebot can only access a fraction of the content, it won't be able to properly evaluate the page.

This requirement forces publishers to find a delicate balance: showing the complete content to the search engine while preserving the paywall business model for users. The main risk is falling into cloaking, a practice penalized by Google.

What Is Structured Data Markup for Paywalls?

Paywall structured data allows you to explicitly signal to Google that content is partially or fully reserved for subscribers. This markup helps the search engine differentiate legitimate paywalled content from a cloaking attempt.

The correct use of this markup, combined with full access for Googlebot, constitutes an acceptable practice according to Google's guidelines. The markup lives in the page's HTML code and is not displayed to users.

What Tools Should You Use to Verify Correct Implementation?

Google recommends two main tools to validate your configuration: the Rich Results Test to check structured data, and the URL Inspection tool in Search Console.

The latter is particularly crucial because it shows exactly how Googlebot perceives your page, allowing you to ensure there's no problematic divergence between the user version and the search engine version.

- Googlebot must access the entire paid content

- Paywall structured data markup is recommended

- Users should not see this technical markup

- Validation via the Rich Results Test

- Verification via the URL Inspection tool in Search Console

SEO Expert opinion

Is This Approach Consistent with Practices Observed in the Field?

The approach recommended by Google is indeed consistent with observations from SEO professionals. Premium news sites like the New York Times or Le Monde have been using this method successfully for years.

However, the technical reality is more nuanced. Some publishers use implementation variants: sampling (Google replaced First Click Free with Flexible Sampling in 2017), visible content fragments, or enriched metadata that compensates for limited access.

What Are the Real Risks of Poorly Controlled Cloaking?

Cloaking remains one of the major risks when implementing a paywall. The line is thin between showing content to Google and creating a different experience for users.

Problematic cases include: displaying completely different content to Google, hiding essential elements from users, or using conditional redirection techniques based on user-agent. These practices can result in severe manual penalties.

In Which Cases Should You Adapt This Recommendation?

For freemium content where only part is paid, the approach can be different. It's acceptable to mark only the premium sections with appropriate structured data.

Sites offering free trials or registrations must also adapt their strategy. In these cases, using alternative schemas like article structured data with the isAccessibleForFree property may be more appropriate.

Practical impact and recommendations

What Concrete Actions Should You Take for a Compliant Paywall?

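The first step is to implement appropriate structured data using the schema.org vocabulary. Add the hasPart property, whose value is typed as a CreativeWork (Google's paywalled content example uses the WebPageElement subtype together with a cssSelector that points at the paid section), and set isAccessibleForFree to false for paid sections.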
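A minimal sketch of that markup, generated here with Python so it can be templated into a CMS. The `WebPageElement` type and the `cssSelector` property follow Google's published paywalled content example. The function names, the `.paywall` class and the headline are hypothetical and only serve as illustration.

```python
import json


def paywall_jsonld(headline, paywalled_selectors, article_type="NewsArticle"):
    """Build schema.org markup that flags the given CSS selectors as paid content.

    `paywalled_selectors` is a list of CSS class selectors (hypothetical names)
    wrapping the sections reserved for subscribers.
    """
    return {
        "@context": "https://schema.org",
        "@type": article_type,
        "headline": headline,
        "isAccessibleForFree": False,
        "hasPart": [
            {
                "@type": "WebPageElement",
                "isAccessibleForFree": False,
                "cssSelector": selector,
            }
            for selector in paywalled_selectors
        ],
    }


def as_script_tag(markup):
    """Serialize the markup as a JSON-LD script tag for the page's HTML."""
    body = json.dumps(markup, indent=2)
    # Escape "</" so a value can never close the script tag early.
    body = body.replace("</", "<\\/")
    return '<script type="application/ld+json">' + body + "</script>"


if __name__ == "__main__":
    print(as_script_tag(paywall_jsonld("Example headline", [".paywall"])))
```

Passing several selectors produces one `hasPart` entry per paid section, which suits pages where only part of the content is behind the paywall.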

Ensure that Googlebot can access the complete content by verifying that your robots.txt file doesn't block essential resources and that JavaScript rendering (if applicable) works correctly for the crawler.
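As a first-pass check of the robots.txt point, the sketch below uses Python's standard `urllib.robotparser` to list which URLs a given robots.txt would block for Googlebot. The `blocked_urls` helper, the rules and the example.com URLs are hypothetical. Python's parser does not replicate Google's matching rules exactly (for instance, it has no wildcard support), so treat the result as an indication and confirm it in Search Console.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt content disallows for user_agent."""
    parser = RobotFileParser()
    # Parse the rules from a string, so no network request is needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    # Hypothetical rules that block both the paid articles and a script directory.
    robots_txt = "User-agent: *\nDisallow: /premium/\nDisallow: /static/js/\n"
    urls = [
        "https://example.com/news/free-article",
        "https://example.com/premium/paid-article",
        "https://example.com/static/js/paywall.js",
    ]
    for url in blocked_urls(robots_txt, urls):
        print("Blocked for Googlebot:", url)
```

Include the script and stylesheet URLs the page depends on, since a blocked rendering resource can hide content from the crawler just as a blocked article URL does.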

Systematically test your implementation with official Google tools: the Rich Results Test to validate the markup, and the URL Inspection tool to verify the actual rendering of the page by Googlebot.
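Before running the official tools, a local pre-check can catch the most basic omission: a page template that no longer emits the paywall markup. The sketch below uses only Python's standard library to extract JSON-LD blocks from an HTML string and list the selectors flagged as paid. The class and function names are hypothetical, and this does not replace Google's own validation.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collects the parsed contents of <script type="application/ld+json"> tags."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        # Script content may arrive in several chunks, so buffer it.
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append(json.loads("".join(self._buffer)))


def paywall_selectors(html):
    """Return the cssSelector values of hasPart entries marked as not free."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    selectors = []
    for block in parser.blocks:
        if not isinstance(block, dict):
            continue  # This sketch only handles a single top-level object.
        parts = block.get("hasPart", [])
        if isinstance(parts, dict):
            parts = [parts]
        for part in parts:
            # Accept the boolean as well as the string form some templates emit.
            if part.get("isAccessibleForFree") in (False, "False", "false"):
                if "cssSelector" in part:
                    selectors.append(part["cssSelector"])
    return selectors
```

An empty result for a page that should be paywalled is a signal to inspect the template before testing further.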

What Critical Mistakes Must You Absolutely Avoid?

The most common mistake is to completely block content from Googlebot out of fear of cannibalizing subscriptions. This prevents any effective SEO and makes your content invisible in search results.

Also avoid content inconsistencies between the crawled version and the user version. If a subscribed user sees a different article from what Google indexes, you risk a cloaking penalty.

Don't neglect regular testing. Technical changes to your CMS or paywall system can inadvertently create problematic divergences between versions.
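One way to make such regular testing repeatable is to compare the article text Googlebot receives with the text a subscriber sees. The sketch below assumes both texts have already been extracted, for example from the rendered HTML shown by the URL Inspection tool and from a logged-in session. The function names and the 0.95 threshold are arbitrary illustrations, not values recommended by Google.

```python
import re
from difflib import SequenceMatcher


def normalize(text):
    """Collapse whitespace and lowercase so formatting differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def content_similarity(crawled_text, subscriber_text):
    """Return a similarity ratio between 0.0 and 1.0 for the two article texts."""
    matcher = SequenceMatcher(
        None, normalize(crawled_text), normalize(subscriber_text), autojunk=False
    )
    return matcher.ratio()


def versions_diverge(crawled_text, subscriber_text, threshold=0.95):
    """Flag the page when the two versions are less similar than the threshold."""
    return content_similarity(crawled_text, subscriber_text) < threshold
```

A flagged page is not proof of cloaking. It only marks a URL that deserves a manual comparison after a CMS or paywall change.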

- Implement schema.org structured data for paywall with appropriate properties

- Verify Googlebot's full access to content via the robots.txt file

- Test the markup with the Rich Results Test

- Use the URL Inspection tool in Search Console to validate rendering

- Ensure the content seen by Google matches that of subscribers

- Document the implementation to facilitate maintenance

- Set up monthly monitoring of structured data

- Check the Manual actions report in Search Console to confirm that no cloaking issue has been flagged

How Can You Ensure a Sustainable and Optimized Implementation?

Managing an SEO-friendly paywall requires continuous monitoring and regular adjustments. Algorithm changes or Google guideline updates can impact your existing strategy.

Detailed technical documentation of your implementation facilitates audits and knowledge transfer within your teams. Include decisions made, tests performed, and results observed.
