Official statement

What you need to understand

What is Google's principle of overall quality?

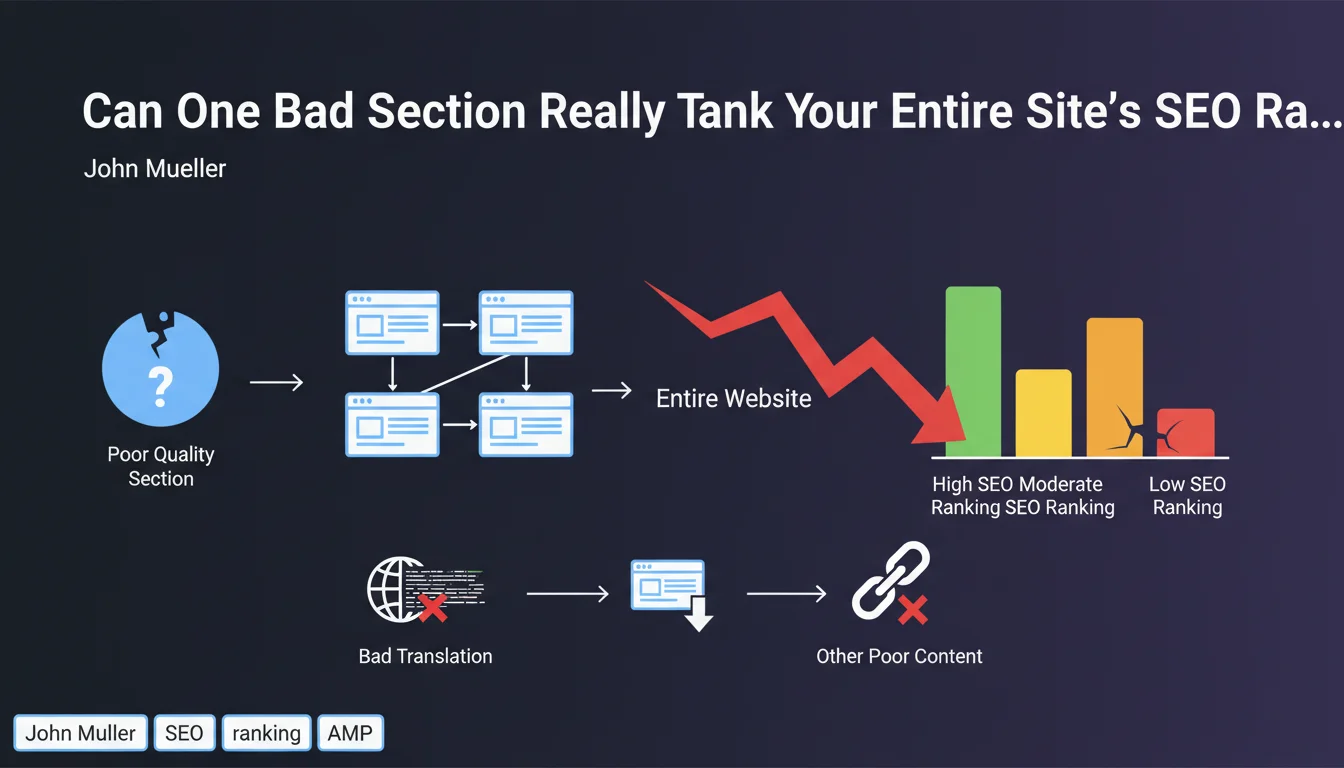

Google evaluates the quality of a website as a whole, not page by page in complete isolation. If a significant portion of your site consists of low-quality content, this can drag down how Google assesses the entire domain, including its good pages.

John Mueller illustrated this principle by mentioning poor-quality automated translations, but the concept applies to any form of deficient content: duplicate pages, thin content, abandoned or poorly maintained sections.

Why does Google adopt this holistic approach?

The algorithm seeks to evaluate the overall reliability and expertise of a site. A site that publishes content of varying quality sends contradictory signals about the level of rigor of its creators.

This approach aims to reward sites that maintain high standards across all their content, rather than those that rely on a few excellent pages buried in a mass of mediocre content.

What are the most damaging poor-quality elements?

- Unproofread automated translations that generate incomprehensible or approximate content

- Duplicate content, whether duplicated internally or copied from other sites

- Thin pages without real added value for the user

- Obsolete sections with outdated or unmaintained information

- Automatically generated pages without editorial supervision

- Low-expertise content on sensitive topics (YMYL)

SEO Expert opinion

Is this statement consistent with field observations?

Absolutely. For years, we have observed that sites with excellent flagship pages suffer from overall visibility limitations when they also host low-quality sections. This is particularly visible on poorly translated multilingual sites or e-commerce platforms with generic product descriptions.

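Numerous field tests are consistent with the idea of a site-wide quality signal (often called "site authority" or an overall quality score), even though Google does not publish such a score. Cleaning up weak content often improves the rankings of all pages, including those already performing well.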

What nuances should be added to this rule?

Proportion matters enormously. A few average-quality pages on a site with 10,000 excellent pages will probably have no measurable impact. On the other hand, 30% mediocre content can be enough to significantly degrade overall performance.
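Because proportion is what matters, it helps to measure it per section rather than eyeball it. The sketch below assumes you have already flagged each page as weak or not (by whatever audit criteria you use) and computes the weak share per section; the 30% threshold simply reuses the figure above as an illustration, not a known Google cutoff.

```python
from collections import Counter

def weak_share_by_section(pages):
    """Return {section: fraction of pages flagged as weak}.

    `pages` is an iterable of (section, is_weak) pairs; how a page is
    flagged as weak is up to your own audit criteria (an assumption here).
    """
    totals = Counter()
    weak = Counter()
    for section, is_weak in pages:
        totals[section] += 1
        if is_weak:
            weak[section] += 1
    return {s: weak[s] / totals[s] for s in totals}

def sections_over(shares, threshold=0.30):
    """Sections whose weak share meets or exceeds `threshold` (illustrative 30%)."""
    return sorted(s for s, share in shares.items() if share >= threshold)

pages = [
    ("blog", True), ("blog", False), ("blog", True),
    ("products", False), ("products", False), ("products", False), ("products", True),
]
shares = weak_share_by_section(pages)
print(shares)
print(sections_over(shares))  # ['blog']
```

Working per section rather than site-wide keeps a single problem area from being diluted by a large healthy catalog.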

The location of weak content also plays a role. Lower-quality content buried deep in the site architecture (orphaned or poorly linked) will generally have less impact than weak content heavily linked from the main navigation.
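One way to act on this is to review weak pages in order of how exposed they are. The sketch below uses the raw internal inlink count, taken from (source, target) pairs such as a crawler's link export, as a rough proxy for exposure; it is an assumption that inlink count is a good enough stand-in, and it does not distinguish navigation links from body links.

```python
from collections import Counter

def inlink_counts(links):
    """Count internal inlinks per target URL from (source, target) pairs.
    Self-links are ignored."""
    counts = Counter()
    for source, target in links:
        if source != target:
            counts[target] += 1
    return counts

def prioritize_weak_pages(weak_urls, links):
    """Order weak pages by inlink count, most-linked first, so weak content
    exposed through navigation is reviewed before buried content."""
    counts = inlink_counts(links)
    # Counter returns 0 for URLs with no inlinks (orphans); ties sort by URL.
    return sorted(weak_urls, key=lambda url: (-counts[url], url))

# Hypothetical link graph and weak-page list.
links = [
    ("/", "/guide"), ("/blog", "/guide"), ("/about", "/guide"),
    ("/", "/old-tag-page"),
    ("/guide", "/guide"),  # self-link, ignored
]
weak = ["/orphan-page", "/old-tag-page", "/guide"]
print(prioritize_weak_pages(weak, links))  # ['/guide', '/old-tag-page', '/orphan-page']
```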

In which cases does this rule apply most strongly?

YMYL sites (Your Money Your Life) are particularly scrutinized. A medical site with a low-expertise blog section may see its entire domain downgraded, even if its main medical content is excellent.

Multilingual sites are also highly exposed. A poorly translated language version can negatively impact versions in other languages, although Google appears increasingly able to evaluate each language version independently.

Practical impact and recommendations

How do you identify content that's penalizing your site?

Start with a comprehensive quality audit by segmenting your content by category, page type, and age. Use Google Analytics to identify pages with zero organic traffic and high bounce rates.
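One practical catch: pages that never received organic traffic usually do not appear in an analytics export at all. The sketch below therefore treats a crawl inventory as the source of truth and counts any page missing from the export as zero. The column names `page_path` and `organic_sessions` are hypothetical, the first path segment is assumed to identify the section, and engagement metrics such as bounce rate are left out for brevity.

```python
import csv
import io
from collections import defaultdict

def section_of(path):
    """Use the first path segment as the section, e.g. /blog/x -> 'blog'."""
    return path.strip("/").split("/")[0] or "(root)"

def zero_traffic_by_section(crawled_paths, analytics_csv):
    """Return {section: [crawled paths with no organic sessions]}.

    Paths absent from the analytics export are counted as zero sessions.
    Column names are hypothetical; adapt them to your export."""
    sessions = {}
    for row in csv.DictReader(io.StringIO(analytics_csv)):
        sessions[row["page_path"]] = int(row["organic_sessions"] or 0)
    result = defaultdict(list)
    for path in crawled_paths:
        if sessions.get(path, 0) == 0:
            result[section_of(path)].append(path)
    return dict(result)

export = """page_path,organic_sessions
/blog/seo-basics,120
/blog/old-news,0
/products/widget,45
"""
crawl = ["/blog/seo-basics", "/blog/old-news", "/blog/tag/misc",
         "/products/widget", "/products/widget-blue"]
print(zero_traffic_by_section(crawl, export))
# {'blog': ['/blog/old-news', '/blog/tag/misc'], 'products': ['/products/widget-blue']}
```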

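Analyze your Core Web Vitals metrics by site section, keeping in mind that they measure page experience (loading, responsiveness, visual stability) rather than content quality. Then manually examine a representative sample of each category to assess the actual content quality, its uniqueness, and its added value.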
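For the manual review, a stratified sample (a fixed number of random pages per section) keeps small sections from being drowned out by large ones. A minimal sketch, assuming pages are given as (section, url) pairs; the per-section size of 5 is an arbitrary choice, and the fixed seed makes the sample reproducible between audit rounds.

```python
import random
from collections import defaultdict

def stratified_sample(pages, per_section=5, seed=0):
    """Pick up to `per_section` random URLs from each section for manual review.

    `pages` is an iterable of (section, url) pairs."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for section, url in pages:
        groups[section].append(url)
    # Sections are visited in sorted order so the same seed gives the same sample.
    return {
        section: rng.sample(urls, min(per_section, len(urls)))
        for section, urls in sorted(groups.items())
    }

pages = [("blog", f"/blog/post-{i}") for i in range(20)] + \
        [("products", f"/products/p-{i}") for i in range(3)]
sample = stratified_sample(pages, per_section=5)
print({s: len(urls) for s, urls in sample.items()})  # {'blog': 5, 'products': 3}
```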

What corrective actions should be implemented immediately?

Three strategies are available depending on the situation: outright removal (returning a 410 Gone status) or deindexing (a noindex directive), consolidation of several weak pages into one strong page, or substantial improvement of existing content.
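At the HTTP level, these options translate into different responses. The sketch below is a plain WSGI app with hypothetical URL lists: deleted pages return `410 Gone`, pages kept for users but removed from the index get an `X-Robots-Tag: noindex` header, and consolidated pages use a `301` redirect to the page that absorbed them (a common pairing for consolidation, not something the statement prescribes). In production this logic usually lives in the CMS or web server configuration instead.

```python
# Hypothetical URL lists; in practice they would come from your audit.
GONE = {"/blog/old-news", "/tag/misc"}                # deleted for good -> 410
NOINDEX = {"/fr/auto-translated-page"}                # kept for users, out of the index
REDIRECTS = {"/blog/seo-tips-1": "/blog/seo-guide"}   # consolidated -> 301

def app(environ, start_response):
    """Minimal WSGI app applying removal, deindexing, or consolidation."""
    path = environ.get("PATH_INFO", "/")
    if path in GONE:
        start_response("410 Gone", [("Content-Type", "text/plain; charset=utf-8")])
        return [b"This page has been permanently removed."]
    if path in REDIRECTS:
        start_response("301 Moved Permanently", [("Location", REDIRECTS[path])])
        return [b""]
    headers = [("Content-Type", "text/html; charset=utf-8")]
    if path in NOINDEX:
        headers.append(("X-Robots-Tag", "noindex"))
    start_response("200 OK", headers)
    return [b"<html><body>Page content</body></html>"]

def call(path):
    """Invoke the app directly (no server) and return (status, headers, body)."""
    captured = {}
    def start_response(status, headers):
        captured["status"] = status
        captured["headers"] = dict(headers)
    body = b"".join(app({"PATH_INFO": path, "REQUEST_METHOD": "GET"}, start_response))
    return captured["status"], captured["headers"], body

print(call("/blog/old-news")[0])  # 410 Gone
```

For a local check in a browser, the same `app` could be served with the standard library's `wsgiref.simple_server`.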

For poor-quality translations, prefer limiting your site to languages you truly master, or invest in professional translations with native proofreading. One quality language version is better than five approximate versions.

- Systematically audit all sections of your site, including forgotten old categories

- Delete or improve all pages with fewer than 200 words and no clear added value

- Deindex language versions translated automatically without human proofreading

- Eliminate internal duplicates and overly similar content variations

- Consolidate weak content into more complete and in-depth pages

- Regularly update dated content to maintain freshness and relevance

- Define clear editorial standards that are systematic for any new publication

- Monitor Core Web Vitals by section to detect problematic areas
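Two items in this checklist, thin pages and internal near-duplicates, lend themselves to a first automated pass before human review. The sketch below shortlists pages under a word count (the 200-word figure above is a heuristic, and word count alone says nothing about added value) and compares pages with word 3-gram "shingles" and Jaccard similarity. The 0.8 similarity threshold and shingle size are assumptions to tune on your own content.

```python
import re
from itertools import combinations

def words(text):
    return re.findall(r"\w+", text.lower())

def thin_pages(pages, min_words=200):
    """URLs whose body text has fewer than `min_words` words (a shortlist, not a verdict)."""
    return sorted(url for url, text in pages.items() if len(words(text)) < min_words)

def shingles(text, k=3):
    """Set of k-word sequences used to compare pages."""
    w = words(text)
    return {tuple(w[i:i + k]) for i in range(len(w) - k + 1)}

def jaccard(a, b):
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def near_duplicates(pages, threshold=0.8, k=3):
    """Pairs of URLs whose shingle sets overlap by at least `threshold`."""
    sets = {url: shingles(text, k) for url, text in pages.items()}
    pairs = []
    for u, v in combinations(sorted(sets), 2):
        score = jaccard(sets[u], sets[v])
        if score >= threshold:
            pairs.append((u, v, round(score, 2)))
    return pairs

# Hypothetical page texts.
base = ("Our blue widget is made of recycled steel and ships in two days "
        "to any address in the country")
pages = {
    "/widget-blue": base,
    "/widget-blue-large": base + " large size",
    "/about": "We are a small team building durable widgets since 2010",
}
print(near_duplicates(pages))  # [('/widget-blue', '/widget-blue-large', 0.89)]
print(thin_pages(pages, min_words=15))  # ['/about']
```

The pairwise comparison grows quadratically with the number of pages; on large sites, techniques such as MinHash are typically used to approximate the same similarity more cheaply.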

How do you maintain a consistent quality level over the long term?

Establish strict editorial governance with clear guidelines for all contributors. Conduct quarterly quality audits to quickly detect any drift.
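Detecting drift between quarterly audits can be as simple as comparing each section's weak-page share (however you compute it) with the previous audit. A minimal sketch; the 5-point tolerance is an arbitrary assumption, and a section that did not exist in the previous audit is compared against zero.

```python
def quality_drift(previous, current, tolerance=0.05):
    """Sections whose weak-page share rose by more than `tolerance` between two audits.

    Both arguments map section -> weak share (0 to 1)."""
    drifted = {}
    for section, share in current.items():
        delta = share - previous.get(section, 0.0)
        if delta > tolerance:
            drifted[section] = round(delta, 3)
    return drifted

# Hypothetical audit snapshots for two consecutive quarters.
q1 = {"blog": 0.10, "products": 0.20}
q2 = {"blog": 0.25, "products": 0.22, "docs": 0.08}
print(quality_drift(q1, q2))  # {'blog': 0.15, 'docs': 0.08}
```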

Train your teams on E-E-A-T criteria (Experience, Expertise, Authoritativeness, Trustworthiness) and on Google's quality guidelines. Always prioritize quality over quantity in your content production strategy.
