Official statement

What you need to understand

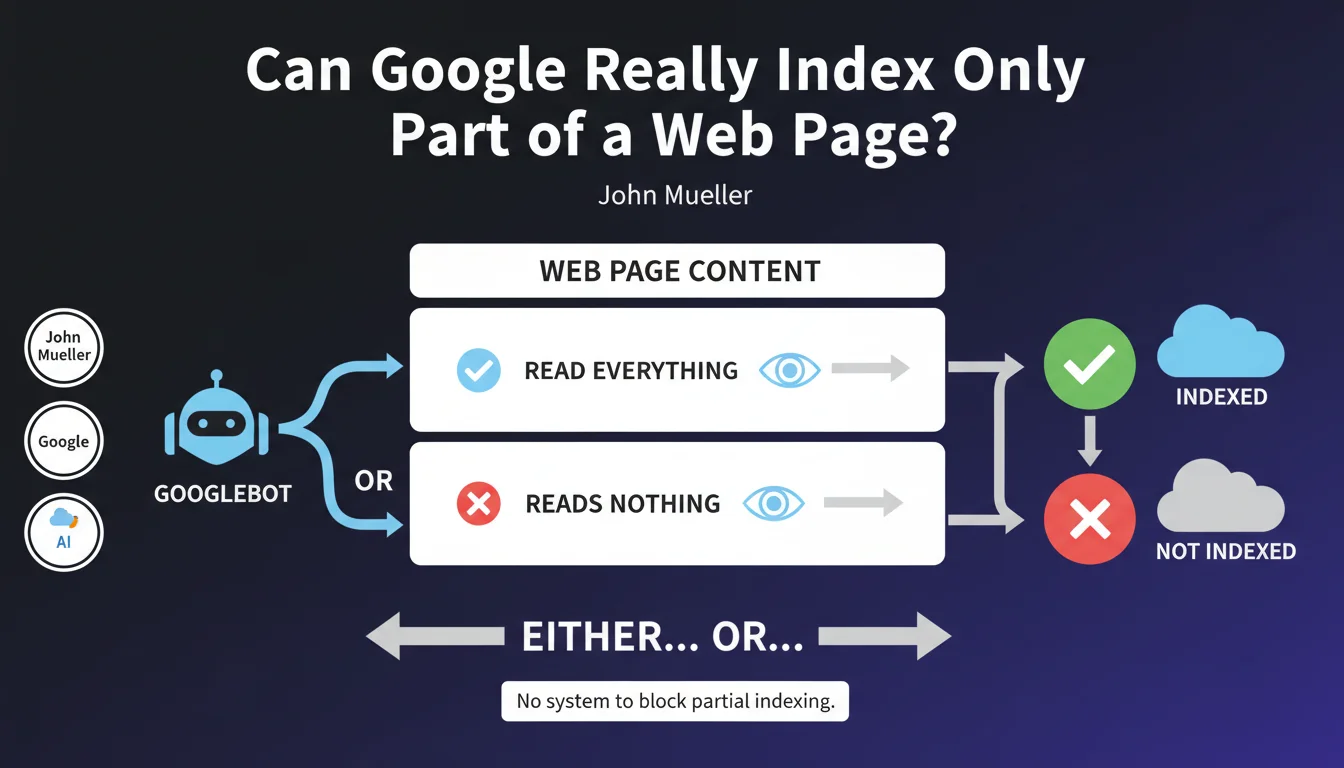

What is Google's official position on partial indexing?

Google is categorical on this point: there is no technical system that allows blocking the analysis or indexing of a specific portion of a web page. This statement confirms a fundamental rule of how Googlebot operates.

The crawler functions according to a binary principle: either it has access to the entire content of a page and analyzes it completely, or the entire page is blocked. There is no middle ground where certain sections would be excluded from indexing while others would be taken into account.

Why does this technical limitation exist?

Googlebot's architecture relies on a global and contextual analysis of content. To understand the relevance and meaning of a page, the algorithm must process all elements: text, HTML structure, internal links, and media.

Partial indexing would create semantic inconsistencies and prevent Google from correctly determining the main topic of the page. The search engine would also lose essential signals for evaluating content quality and authority.

What are the practical consequences for SEO professionals?

- Impossible to hide elements from Google while keeping the page indexed

- Techniques like HTML comments or data-* attributes don't block indexing

- Duplicate content present in sidebars or footers will be analyzed

- Any sensitive information on an indexable page will potentially be crawled

- Optimization strategies must consider the entire page

SEO Expert opinion

Is this statement consistent with practices observed in the field?

After 15 years of experience, I confirm that this statement perfectly reflects the observable technical reality. Attempts at selective indexing via JavaScript, CSS, or HTML attributes systematically fail.

Some practitioners still believe that display:none or iframes hide content from Google. This is false: Googlebot executes modern JavaScript and analyzes the entire rendered DOM, so visually hidden content remains fully accessible to the crawler.
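To make the point tangible, here is a minimal sketch using Python's standard html.parser. It is not Googlebot and does not reproduce its rendering pipeline; it only shows that a plain parser reads text nodes with no regard for CSS or comment wrappers. The sample markup, the "noindex" comment pair, and the data-noindex attribute are hypothetical examples of tricks that have no effect.

```python
from html.parser import HTMLParser


class TextCollector(HTMLParser):
    """Collects every text node; CSS visibility is never consulted."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and not self._skip_depth:
            self.chunks.append(text)


def extract_text(html: str) -> list[str]:
    """Return all text nodes found in the markup, in document order."""
    parser = TextCollector()
    parser.feed(html)
    parser.close()
    return parser.chunks


# Hypothetical page fragment: none of these "hiding" tricks removes the text.
SAMPLE = """
<main><p>Visible product description.</p></main>
<div style="display:none">Hidden promotional text.</div>
<!-- noindex --><aside data-noindex="true">Sidebar boilerplate.</aside><!-- /noindex -->
"""

print(extract_text(SAMPLE))
```

All three text blocks come back from the parser, including the one styled with display:none and the one wrapped in comments. If a simple parser sees them, it is reasonable to assume a rendering crawler does too.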

What important nuances should be added to this rule?

While Google indexes all content on a page, it doesn't weight all elements the same way. Main content (main, article tags) generally carries more weight than peripheral elements like sidebars or footers.

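Furthermore, Google may choose not to display certain excerpts in search result snippets, even if the content is indexed. The data-nosnippet attribute, for example, keeps a passage out of snippets but does not remove it from the index. Indexing doesn't mean systematic display in SERPs.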

In what cases does this rule pose concrete problems?

E-commerce sites are particularly affected because of their mega navigation menus containing hundreds of links. These elements, repeated on every page, dilute internal PageRank and create semantic noise.

Platforms with user-generated content (comments, forums) face a dilemma: keeping this content visible improves engagement, but it also exposes the page to spam or low-quality content that Google will index entirely.

Practical impact and recommendations

What should be done concretely to optimize your pages?

Adopt a holistic optimization approach: every element on your pages must have a purpose and contribute positively to both user experience and SEO.

Ruthlessly eliminate superfluous or redundant content. If a text block adds no value, remove it rather than hoping Google will ignore it. A smaller amount of high-quality content usually outperforms a large, diluted volume.

- Audit your templates to identify content unnecessarily repeated on every page

- Simplify your navigation: prioritize link quality over quantity

- Group important content in semantically clear zones (article, main tags)

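- Use noindex (robots meta tag or X-Robots-Tag header) to keep entire pages out of the index and robots.txt to block crawling of entire pages, never portions; don't combine the two on one URL, since Googlebot cannot read a noindex on a page it is not allowed to crawl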

- Create dedicated pages for secondary content rather than embedding it everywhere
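The robots.txt and noindex item in the list above is where audits most often go wrong, because the two controls work at different stages: robots.txt governs crawling, noindex governs indexing. The following is a rough sketch, assuming you already have the robots.txt body and the page's robots directives in hand. The function name page_level_status, its return strings, and the example.com URLs are illustrative, and Python's urllib.robotparser does not implement every matching rule Google applies (wildcards in particular), so treat the result as a first-pass check.

```python
from urllib.robotparser import RobotFileParser


def page_level_status(robots_txt: str, url: str, meta_robots: str = "",
                      x_robots_tag: str = "",
                      user_agent: str = "Googlebot") -> str:
    """Classify a whole URL: both controls apply to the page, never a fragment."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    crawlable = rules.can_fetch(user_agent, url)

    # Merge directives from the meta tag and the HTTP header.
    merged = f"{meta_robots},{x_robots_tag}"
    directives = {d.strip().lower() for d in merged.split(",") if d.strip()}
    noindex = "noindex" in directives or "none" in directives

    if not crawlable and noindex:
        return "conflict: blocked by robots.txt, so the noindex cannot be read"
    if not crawlable:
        return "crawl blocked (the URL itself may still be indexed from links)"
    if noindex:
        return "crawlable, excluded from the index"
    return "crawlable and indexable"


# Hypothetical robots.txt and URLs, for illustration only.
ROBOTS = "User-agent: *\nDisallow: /private/\n"

print(page_level_status(ROBOTS, "https://example.com/blog/post"))
print(page_level_status(ROBOTS, "https://example.com/blog/post",
                        meta_robots="noindex, follow"))
print(page_level_status(ROBOTS, "https://example.com/private/a",
                        x_robots_tag="noindex"))
```

Every outcome describes the URL as a whole. There is no branch for "this section only", which is exactly the constraint Google's statement describes.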

What critical mistakes must absolutely be avoided?

Never attempt to hide content from Google with cloaking techniques or hidden text. These practices are detected and can lead to severe manual penalties.

Also avoid overloading your pages with non-essential widgets and third-party content. Every added element will be crawled and analyzed, which can consume crawl budget and dilute your main message.

Be particularly wary of automatic content generators that produce generic text repeated across many pages. Google will index these repetitions and might treat your site as thin content.

How can you verify and maintain the overall quality of your pages?

Regularly review the snippets Google generates for your pages in live search results, alongside your Search Console data. If snippets display irrelevant content, your page may contain too many parasitic elements.

Conduct quarterly content audits with tools like Screaming Frog to identify redundancies and duplicate content between your pages. Systematically streamline.
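If you want a quick check between full crawls, repeated text across pages can be approximated with word shingles and Jaccard similarity. This is a minimal sketch, not how Screaming Frog or Google measure duplication; the shingle size of 5, the 0.5 threshold, and the sample pages are arbitrary assumptions to tune on your own data.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 5) -> set[tuple[str, ...]]:
    """Split text into overlapping runs of `size` consecutive words."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Share of shingles two pages have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.5,
                    size: int = 5) -> list[tuple[str, str, float]]:
    """Return page pairs whose similarity meets the threshold, highest first."""
    sets = {url: shingles(text, size) for url, text in pages.items()}
    pairs = []
    for (u1, s1), (u2, s2) in combinations(sets.items(), 2):
        score = jaccard(s1, s2)
        if score >= threshold:
            pairs.append((u1, u2, round(score, 2)))
    return sorted(pairs, key=lambda p: p[2], reverse=True)


# Hypothetical extracted page texts keyed by path.
PAGES = {
    "/a": "free shipping on all orders over fifty euros this week",
    "/b": "free shipping on all orders over fifty euros this week",
    "/c": "our guide to choosing a mountain bike frame size",
}

print(near_duplicates(PAGES))
```

Feed it the extracted text of each template or URL. Pairs that score high are candidates for consolidation or for moving the shared block to a dedicated page.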

- Analyze the main content / peripheral content ratio on your primary templates

- Verify that your HTML5 semantic tags properly structure your content

- Test your pages with the URL inspection tool to see what Google actually renders

- Monitor Core Web Vitals metrics: too much content weighs down pages

- Document your best practices in an editorial guide for your teams
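The first item in the checklist above, the main content / peripheral content ratio, can be estimated with a short script. The sketch below counts words by the innermost semantic container they sit in. The choice of which tags count as main (main, article) or peripheral (nav, aside, footer) is an assumption for illustration, as is the sample template, and the resulting ratio is an audit heuristic, not a metric Google publishes.

```python
from html.parser import HTMLParser

MAIN_TAGS = {"main", "article"}
PERIPHERAL_TAGS = {"nav", "aside", "footer"}  # assumed classification


class ZoneCounter(HTMLParser):
    """Counts words per zone; the innermost semantic container wins."""

    def __init__(self):
        super().__init__()
        self.stack = []
        self.skip = 0  # > 0 while inside <script> or <style>
        self.words = {"main": 0, "peripheral": 0, "unclassified": 0}

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self.skip += 1
        elif tag in MAIN_TAGS:
            self.stack.append("main")
        elif tag in PERIPHERAL_TAGS:
            self.stack.append("peripheral")

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self.skip = max(0, self.skip - 1)
        elif tag in MAIN_TAGS | PERIPHERAL_TAGS and self.stack:
            self.stack.pop()

    def handle_data(self, data):
        if self.skip:
            return
        zone = self.stack[-1] if self.stack else "unclassified"
        self.words[zone] += len(data.split())


def zone_ratio(html: str) -> tuple[dict[str, int], float]:
    """Return word counts per zone and the main-content share of all words."""
    counter = ZoneCounter()
    counter.feed(html)
    counter.close()
    total = sum(counter.words.values())
    share = counter.words["main"] / total if total else 0.0
    return counter.words, share


# Hypothetical template fragment.
TEMPLATE = """
<nav><a href="/">Home</a> <a href="/shop">Shop</a> <a href="/blog">Blog</a></nav>
<main><article><p>Ten words of real editorial content live in this paragraph.</p></article></main>
<footer>Copyright Example Shop</footer>
"""

words, share = zone_ratio(TEMPLATE)
print(words, share)
```

Run it on the rendered HTML of each primary template. A low main-content share, or a large "unclassified" count, suggests the template needs trimming or clearer semantic markup.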
