Official statement

What you need to understand

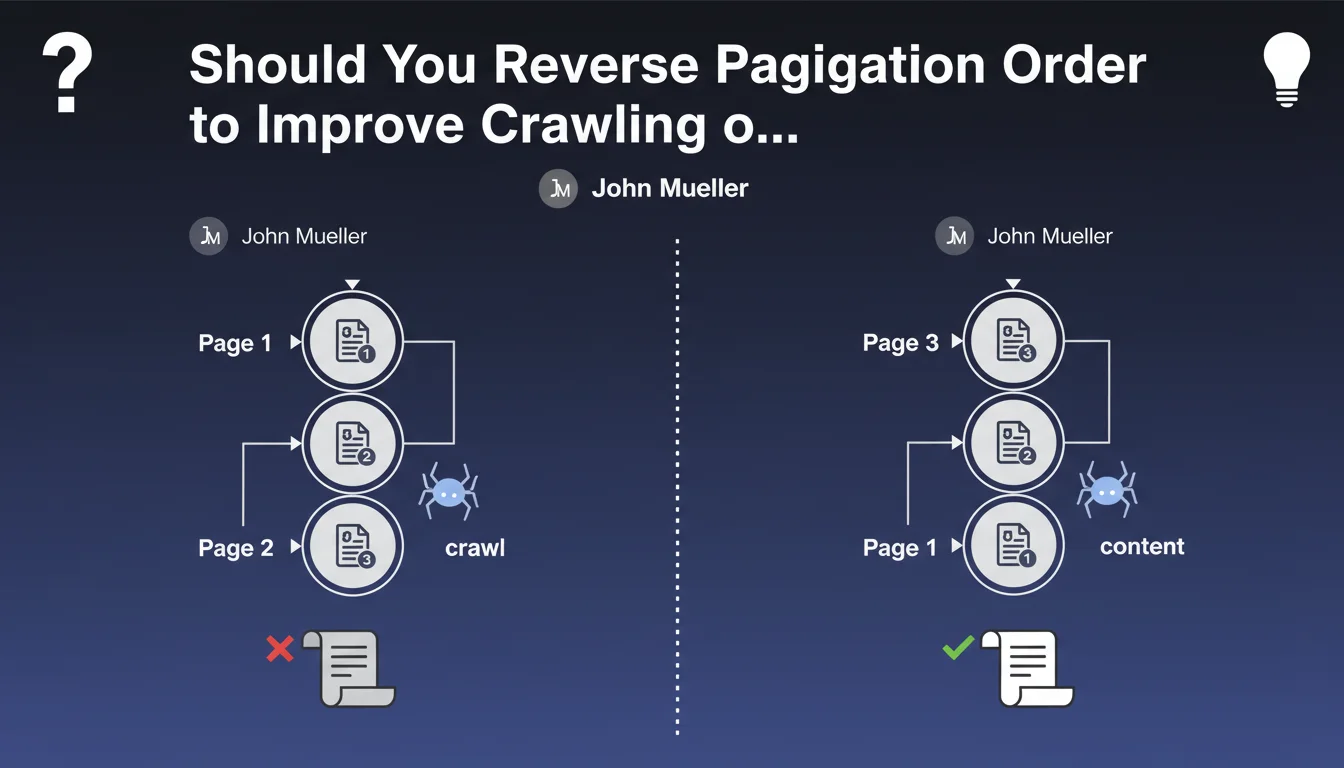

Why does pagination order impact Google's crawl?

Google's robots have a limited crawl budget for each website. When they explore pagination, they follow links from page to page, but may stop before reaching the last pages if the content seems low priority to them or if the allocated budget is exhausted.

If you place your most recent content at the end of pagination (page 10, 15, 20...), it risks never being discovered or being crawled with a considerable delay. This compromises its rapid indexing and its visibility in search results.

What's the logic behind this recommendation?

John Mueller is applying a fundamental SEO principle here: page depth (number of clicks from the homepage) influences crawl priority. The further a page is from the homepage, the less frequently Googlebot tends to visit it.

By placing recent items on the first page of your pagination, you make it far more likely that they'll be discovered on the robot's next visit. This is particularly crucial for news sites, blogs, e-commerce sites with new products, or forums.

In what contexts does this issue arise?

This recommendation primarily concerns sites with a regular flow of content: blogs, media outlets, online stores, classified sites, forums or community platforms. Any site where new content appears frequently is affected.

- New blog articles must be accessible on page 1 of your archive

- Recently added products should appear at the beginning of the catalog

- Active discussions on a forum deserve immediate visibility

- Content freshness becomes a positive signal for Google

- Indexing time is considerably reduced

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. This recommendation aligns with what we've been observing for years in technical SEO. Sites that adopt a reverse chronological order (from newest to oldest) tend to benefit from faster indexing of their new content.

Crawl analytics data clearly shows that Google visits the first pagination pages much more frequently than the following ones. On some sites, pagination page 5 may only be visited once a month, while page 1 is visited daily.

What important nuances should be added to this recommendation?

Caution: this logic doesn't apply universally to all content types. For evergreen content (comprehensive guides, permanent resources), publication date isn't the most relevant sorting criterion. Thematic relevance or popularity may be preferable.

Moreover, user experience must take priority. If your visitors naturally expect reverse chronological order (like on a blog), perfect. But if your audience prefers to see the most popular or highest-rated products first, don't sacrifice UX solely for SEO.

How does this recommendation fit with other SEO signals?

Content freshness is a ranking signal for certain queries, particularly informational and news-related ones. By facilitating rapid crawling of your recent content, you enable Google to better evaluate this freshness signal.

This approach combines effectively with other techniques, such as a dynamic XML sitemap that lists the latest URLs with accurate lastmod dates, the Indexing API for the content types Google officially supports (job postings and livestream videos), and an internal linking architecture that surfaces recent content on the homepage.
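As a rough illustration of the dynamic sitemap idea, here is a minimal Python sketch that builds a sitemap from a list of URLs and last-modified dates. The `build_sitemap` function and the example.com URLs are hypothetical; a real site would pull these values from its CMS. Listing the newest entries first is only for readability; the lastmod value is what carries the information.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """Build a sitemap string from (url, lastmod) pairs, newest first."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    # ISO 8601 dates sort correctly as plain strings.
    for loc, lastmod in sorted(entries, key=lambda e: e[1], reverse=True):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical data standing in for a CMS query.
entries = [
    ("https://example.com/blog/old-post", "2024-01-05"),
    ("https://example.com/blog/new-post", "2024-03-15"),
]
sitemap_xml = build_sitemap(entries)
print(sitemap_xml)
```

Regenerating this file whenever content is published or updated keeps the lastmod dates trustworthy.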

Practical impact and recommendations

What should you do concretely to optimize pagination order?

Start by auditing your paginated sections: blog archives, product listings, categories, internal search results. Identify those where content freshness is an important criterion for your users and for Google.

Then implement reverse chronological sorting by default (from newest to oldest) on these sections. Technically, this often involves modifying the SQL queries of your CMS or e-commerce platform to add an ORDER BY date DESC clause.
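To make the ORDER BY idea concrete, here is a minimal sketch using Python's built-in sqlite3 module. The `posts` table, its columns, and the `fetch_page` helper are assumptions for the demo, not the schema of any particular CMS. The secondary sort on `id` keeps the order stable when two items share the same date.

```python
import sqlite3

PAGE_SIZE = 2  # hypothetical page size, kept tiny for the demo

def fetch_page(conn, page, page_size=PAGE_SIZE):
    """Return the titles for a 1-based page number, newest first."""
    offset = (page - 1) * page_size
    rows = conn.execute(
        "SELECT title FROM posts "
        "ORDER BY published_at DESC, id DESC "
        "LIMIT ? OFFSET ?",
        (page_size, offset),
    ).fetchall()
    return [title for (title,) in rows]

# In-memory database with made-up posts (ISO dates sort as text).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT, published_at TEXT)"
)
conn.executemany(
    "INSERT INTO posts (title, published_at) VALUES (?, ?)",
    [
        ("Oldest post", "2024-01-05"),
        ("Middle post", "2024-02-10"),
        ("Newest post", "2024-03-15"),
    ],
)

first_page = fetch_page(conn, 1)
second_page = fetch_page(conn, 2)
print(first_page, second_page)
```

With this ordering, anything published later automatically lands on page 1 and pushes older items deeper into the pagination.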

Don't forget to update your rel="prev" and rel="next" tags if you're still using them (although Google announced in 2019 that it no longer uses them). Most importantly, verify that your XML sitemap reflects this new structure and carries accurate lastmod dates for recent content, since Google ignores the sitemap priority value.

What technical errors must be absolutely avoided?

Don't create duplicate content by exposing a separate indexable URL for each sorting option (recent, popular, alphabetical). Choose one default-sorted URL as the canonical version, and have each sorting variant point to it with rel="canonical".

Avoid infinite JavaScript pagination without distinct URLs, as it considerably complicates crawling. If you use infinite scroll, also implement a version with paginated URLs accessible to robots.
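One possible way to derive the canonical URL for a sorting variant is to strip the sorting parameters and keep everything else. The sketch below assumes the sort options live in query parameters named `sort` and `order`; those names, and both helper functions, are hypothetical and should be adapted to your own URL scheme.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

SORT_PARAMS = {"sort", "order"}  # hypothetical parameter names

def canonical_url(url):
    """Drop sorting parameters; keep the rest (e.g. the page number)."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in SORT_PARAMS
    ]
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(kept), "")
    )

def canonical_link_tag(url):
    """Render the <link> element to place in the page's <head>."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'

variant = "https://example.com/shop?sort=popular&page=2"
print(canonical_url(variant))
print(canonical_link_tag("https://example.com/shop?sort=az"))
```

Note that the page parameter is kept on purpose: each pagination page stays its own canonical URL, and only the sort variants are consolidated.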

- Audit all your sections with active pagination

- Identify where freshness is a priority SEO criterion

- Modify the default sort order to reverse chronological

- Test the impact on user behavior (bounce rate, clicks)

- Verify canonical URLs to avoid duplication

- Update your XML sitemap so recent URLs carry accurate lastmod dates

- Monitor indexing speed in Search Console

- Analyze logs to confirm crawl improvement

How can you measure the effectiveness of this optimization?

Use Google Search Console to track how quickly your new content gets indexed, for example by checking newly published URLs with the URL Inspection tool. Compare the average delay between publication and appearance in the index before and after the modification.
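The before/after comparison comes down to simple date arithmetic. The sketch below assumes you have recorded, for a sample of URLs, the publication date and the date the URL first appeared in the index; the dates shown are invented purely to illustrate the calculation, and `average_delay_days` is a hypothetical helper.

```python
from datetime import date
from statistics import mean

def average_delay_days(records):
    """Mean number of days between publication and indexing."""
    return mean((indexed - published).days for published, indexed in records)

# Made-up (published, indexed) pairs, for illustration only.
before_change = [
    (date(2024, 1, 1), date(2024, 1, 6)),
    (date(2024, 1, 10), date(2024, 1, 13)),
]
after_change = [
    (date(2024, 3, 1), date(2024, 3, 2)),
    (date(2024, 3, 10), date(2024, 3, 12)),
]

delay_before = average_delay_days(before_change)
delay_after = average_delay_days(after_change)
print(delay_before, delay_after)
```

A larger sample gives a more reliable picture, since indexing delays vary a lot from one URL to another.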

Server log file analysis is particularly revealing: observe whether Googlebot crawls your pagination pages more frequently and reaches deeper pages than before. The ratio of crawled pages to available pages is a key indicator.
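A log analysis along these lines can be sketched in a few lines of Python. This example assumes access logs in the common "combined" format and a hypothetical `/blog/page/N/` URL scheme; the sample lines and the total page count are invented. It matches Googlebot by user agent only, which can be spoofed, so a production audit should also verify the requesting IP addresses.

```python
import re
from collections import Counter

# Assumes combined log format; adjust the pattern to your server.
LINE_RE = re.compile(
    r'"GET (?P<path>\S+) HTTP/[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)
PAGE_RE = re.compile(r"^/blog/page/(\d+)/?$")  # hypothetical URL scheme
TOTAL_PAGES = 5  # hypothetical number of pagination pages on the site

def googlebot_hits_per_page(lines):
    """Count Googlebot requests per pagination page number."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        page = PAGE_RE.match(match.group("path"))
        if page:
            hits[int(page.group(1))] += 1
    return hits

GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/Mar/2024:06:12:01 +0000] "GET /blog/page/1/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [11/Mar/2024:06:15:42 +0000] "GET /blog/page/1/ HTTP/1.1" 200 5120 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [11/Mar/2024:06:16:03 +0000] "GET /blog/page/5/ HTTP/1.1" 200 4980 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [11/Mar/2024:09:01:10 +0000] "GET /blog/page/1/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
]

hits = googlebot_hits_per_page(sample_log)
coverage = len(hits) / TOTAL_PAGES  # crawled pages vs available pages
print(dict(hits), coverage)
```

Running the same count over comparable periods before and after the change shows whether Googlebot reaches more of the pagination and how often it returns to each page.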
