Official statement

What you need to understand

What is rendering and why is it crucial for SEO?

Rendering is the process by which Google executes a page's JavaScript and analyzes the page as it would be displayed in a real browser. Unlike simply reading the raw HTML source, this step lets the search engine understand content that JavaScript generates dynamically.

This step has become essential as the web has evolved. Many sites now build their interfaces with JavaScript frameworks, so their content stays invisible until the page has been rendered.
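To illustrate why client-side content is invisible before rendering, here is a minimal sketch that checks whether a phrase appears in a page's raw HTML text, ignoring anything inside `<script>` or `<style>`. It uses only Python's standard `html.parser`; the names `VisibleTextParser` and `visible_without_rendering` are hypothetical helpers, and the check is a rough approximation, not how Google actually processes pages.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text nodes from raw HTML, skipping <script> and <style> contents."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0  # > 0 while inside <script> or <style>
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text that a reader would see without running any JavaScript.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_without_rendering(raw_html: str, phrase: str) -> bool:
    """True if `phrase` appears in the page text before any JavaScript runs."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    return phrase.lower() in " ".join(parser.chunks).lower()


# A server-rendered page versus an SPA shell that injects the same heading via JavaScript.
ssr_page = "<html><body><h1>Blue running shoes</h1></body></html>"
spa_shell = ('<html><body><div id="root"></div>'
             '<script>document.getElementById("root").innerHTML = '
             '"<h1>Blue running shoes</h1>";</script></body></html>')
print(visible_without_rendering(ssr_page, "blue running shoes"))   # True
print(visible_without_rendering(spa_shell, "blue running shoes"))  # False
```

In the SPA shell the heading exists only as a string inside the script, so it only becomes page content once a renderer executes that script.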

What rendering delays has Google observed?

Google renders 98% of the web pages it crawls, which represents almost all of the indexable web. However, how long rendering takes varies considerably with the technical complexity of each page.

For some URLs, rendering can take several weeks to complete. This delay directly affects how quickly your content becomes visible in search results.

Which technologies slow down the rendering process?

Sites built with complex JavaScript frameworks such as AngularJS, or on platforms such as Wix, can significantly slow down rendering. The heavier and harder to interpret the JavaScript, the longer Google takes to process the page.

- Rendering concerns 98% of current web pages

- The delay can vary from a few days to several weeks

- Complex JavaScript technologies significantly increase processing times

- Simple HTML pages are processed almost instantly

- This delay directly impacts the indexing speed of your content

SEO Expert opinion

Is this statement consistent with field observations?

In 15 years of practice, my field observations fully match these findings. Sites in static HTML or classic PHP are indexed within a few hours, while Single Page Applications (SPAs) can indeed wait weeks before being fully indexed.

In particular, I have seen delays of 3 to 6 weeks on poorly configured React or Angular sites, compared with 24 to 48 hours for traditional sites. The difference is measurable and directly affects the time-to-market of your content.

What nuances should be added to this statement?

The 98% figure does not mean that Google renders every page successfully. It attempts to render 98% of pages, with varying degrees of success.

Some JavaScript sites throw silent errors that Google cannot work around. Crawl budget also comes into play: on a site with a low crawl budget, some pages may never be fully rendered, even after weeks.

In which cases does this rule not apply?

Sites benefiting from strong domain authority and a high crawl budget can see their JavaScript pages rendered more quickly. Google allocates more resources to sites it considers important.

Similarly, using Server-Side Rendering (SSR) or Static Site Generation (SSG) bypasses the problem. These techniques serve pre-generated HTML to Google, so the content no longer depends on engine-side rendering to be seen.
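The core idea of Static Site Generation can be sketched in a few lines: the HTML for each page is produced at build time, so the main content is already in the file Google downloads. This is a minimal illustration, not how any particular generator works; the page data, the `TEMPLATE`, the `/app.js` script path and the `build_site` helper are all hypothetical. The deferred script stands for optional progressive enhancement layered on top of content that is already present.

```python
import html
from pathlib import Path

# Hypothetical page data; a real site would load this from a CMS or database.
PAGES = [
    {"slug": "blue-running-shoes", "title": "Blue running shoes",
     "body": "Lightweight shoes for daily training."},
    {"slug": "trail-shoes", "title": "Trail shoes",
     "body": "Grip & stability on rough terrain."},
]

# The content lives in the HTML itself; /app.js (hypothetical) only enhances it.
TEMPLATE = """<!doctype html>
<html lang="en">
<head><meta charset="utf-8"><title>{title}</title></head>
<body>
<h1>{title}</h1>
<p>{body}</p>
<script src="/app.js" defer></script>
</body>
</html>
"""


def render_page(page: dict) -> str:
    """Build the full HTML for one page at build time, escaping the text content."""
    return TEMPLATE.format(title=html.escape(page["title"]),
                           body=html.escape(page["body"]))


def build_site(pages: list[dict], out_dir: Path) -> list[Path]:
    """Write one static index.html per page under out_dir/<slug>/."""
    written = []
    for page in pages:
        target = out_dir / page["slug"] / "index.html"
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(render_page(page), encoding="utf-8")
        written.append(target)
    return written
```

Calling `build_site(PAGES, Path("public"))` would write `public/blue-running-shoes/index.html` and `public/trail-shoes/index.html`, each readable without executing any JavaScript. Frameworks such as Next.js or Nuxt do the same job with far more machinery.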

Practical impact and recommendations

What should you do concretely to optimize rendering?

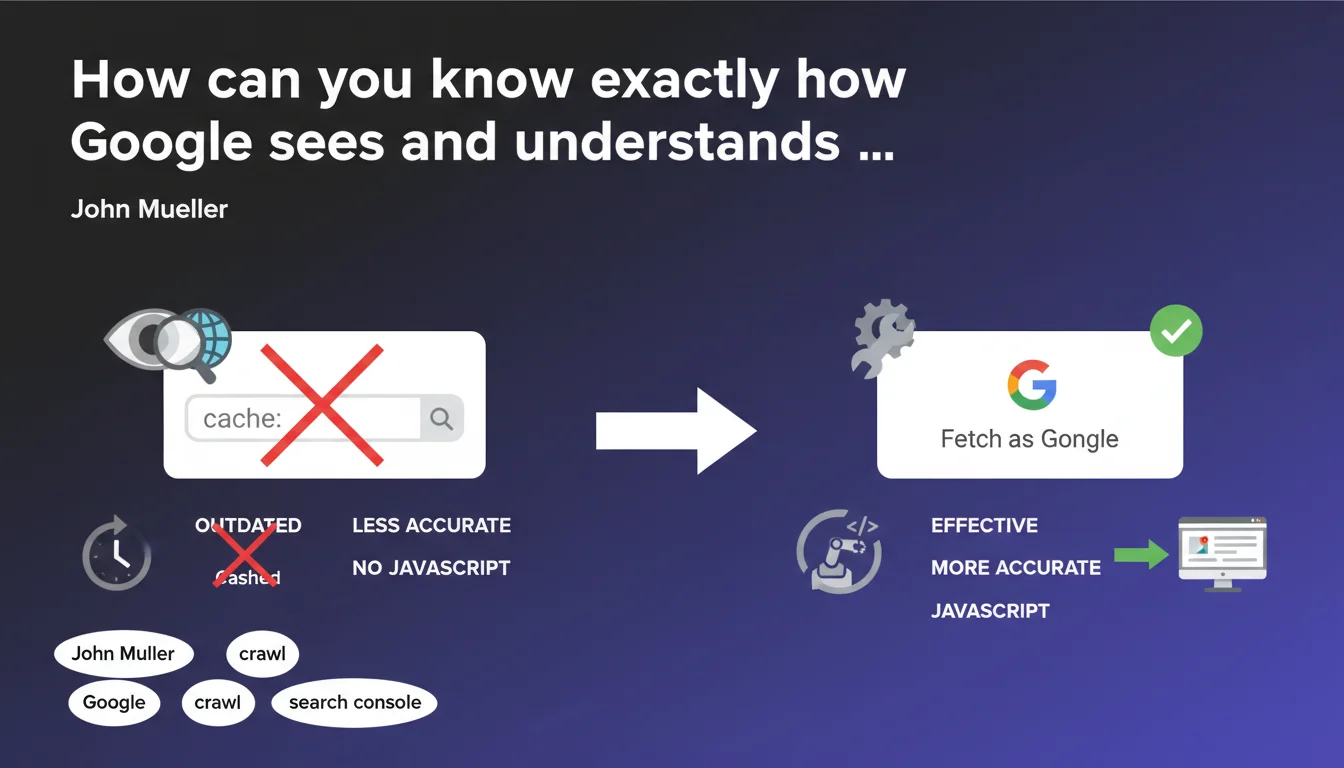

The first priority is to audit your current technical stack. Use Google Search Console to identify rendering errors and measure your actual indexing delays via URL inspection.

If you must rely on JavaScript, opt for pre-rendering or SSR. Next.js for React, Nuxt for Vue, and Angular Universal all serve HTML that Google can use immediately.

For existing sites, consider a hybrid architecture: critical content in static HTML, advanced features added through progressive JavaScript. This approach helps essential elements get indexed quickly.

What mistakes should you absolutely avoid?

Never build a new site in pure AngularJS or on Wix if SEO is a strategic priority. An indexing handicap of several weeks is prohibitive against better-optimized competitors.

Also avoid blocking JavaScript or CSS resources in robots.txt: Google needs access to them to render pages correctly. Check this regularly in Search Console.
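As a quick local check, a sketch like the one below uses Python's standard `urllib.robotparser` to list which JS/CSS URLs a robots.txt would block for a given user agent. The helper name `blocked_resources` and the example URLs are hypothetical. Be aware that Python's parser applies simple prefix matching in file order and does not implement Google's `*`/`$` wildcards or longest-match precedence, so treat the result as a first pass and confirm with Search Console.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt: str, resource_urls: list[str],
                      user_agent: str = "Googlebot") -> list[str]:
    """Return the resource URLs that robots.txt disallows for `user_agent`.

    Note: urllib.robotparser uses prefix matching only; Google's wildcard and
    longest-match rules are not reproduced here.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


robots = """User-agent: *
Disallow: /assets/js/
Disallow: /admin/
"""
resources = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/main.css",
]
print(blocked_resources(robots, resources))
# ['https://example.com/assets/js/app.js']
```

Here the script bundle is blocked while the stylesheet is not, which is exactly the kind of rule that can prevent Google from rendering a JavaScript-driven page.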

- Audit your current indexing times via Search Console

- Test the rendering of your pages with the URL inspection tool

- Prioritize Server-Side Rendering if you use JavaScript

- Implement a hybrid HTML/progressive JavaScript architecture

- Avoid purely client-side frameworks for SEO-critical content

- Monitor your crawl budget and optimize it if necessary

- Never block JS/CSS resources necessary for rendering

- Measure the business impact of indexing delays on your traffic
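For the crawl budget item in the list above, one common starting point is counting how often Googlebot hits your server each day. The sketch below assumes access logs in the Combined Log Format with English month abbreviations; the regex and the `googlebot_hits_per_day` helper are hypothetical. It trusts the user-agent string, which can be spoofed, so a rigorous analysis would also verify the requesting IPs (for example with a reverse DNS lookup).

```python
import re
from collections import Counter
from datetime import datetime

# Combined Log Format: host ident user [time] "request" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[(?P<time>[^\]]+)\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_per_day(lines):
    """Count requests whose user agent claims to be Googlebot, grouped by day."""
    per_day = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue  # unparseable line or non-Googlebot visitor
        # Timestamp format, e.g. 10/Oct/2024:13:55:36 +0000
        day = datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z").date()
        per_day[day] += 1
    return dict(sorted(per_day.items()))
```

Tracking this count over time, and splitting it between HTML pages and JS/CSS resources, shows whether Google's crawling of the site is growing or shrinking after a change.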

How can you measure and track the effectiveness of your optimizations?

Implement systematic monitoring of your indexing delays. Create a dashboard that compares the time between publication and appearance in the index for different types of pages.
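The core calculation behind such a dashboard can be sketched as a median delay per page type. This assumes you already record, for each URL, its page type, publication date and the date you first observed it as indexed (for example from periodic URL Inspection checks); the record layout and the `median_indexing_delay` helper are hypothetical. Pages not yet indexed are counted as pending rather than silently dropped.

```python
from collections import defaultdict
from statistics import median


def median_indexing_delay(records):
    """Median days between publication and first indexing, per page type.

    Each record is (page_type, published_on, first_indexed_on) with datetime.date
    values; first_indexed_on is None for pages that are not indexed yet.
    """
    delays = defaultdict(list)
    pending = defaultdict(int)
    for page_type, published_on, indexed_on in records:
        if indexed_on is None:
            pending[page_type] += 1
        else:
            delays[page_type].append((indexed_on - published_on).days)
    return {
        page_type: {
            "median_days": median(delays[page_type]) if delays[page_type] else None,
            "indexed": len(delays[page_type]),
            "pending": pending[page_type],
        }
        for page_type in sorted(set(delays) | set(pending))
    }
```

The median is used rather than the mean so that a few pages stuck for weeks do not hide the typical delay; the pending count surfaces those stuck pages separately.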

Use a crawler such as Screaming Frog with JavaScript rendering enabled to approximate how Googlebot sees your pages. Compare the results of a raw HTML crawl with those of a crawl with rendering enabled.
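One concrete comparison is which links exist only after rendering, since links injected by JavaScript are discovered later than links in the raw HTML. The sketch below assumes you have saved both the raw and the rendered HTML of a page (for example from your crawler's export); the `LinkCollector`, `links_in` and `compare_crawls` names are hypothetical, and only `<a href>` links are considered.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects absolute href values from <a> tags."""

    def __init__(self, base_url: str) -> None:
        super().__init__()
        self.base_url = base_url
        self.links: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(urljoin(self.base_url, href))


def links_in(html_text: str, base_url: str) -> set[str]:
    collector = LinkCollector(base_url)
    collector.feed(html_text)
    collector.close()
    return collector.links


def compare_crawls(raw_html: str, rendered_html: str, base_url: str) -> dict[str, set[str]]:
    """Split links into those present without rendering and those that depend on it."""
    raw = links_in(raw_html, base_url)
    rendered = links_in(rendered_html, base_url)
    return {
        "only_after_rendering": rendered - raw,
        "lost_after_rendering": raw - rendered,
        "in_both": raw & rendered,
    }


raw = '<a href="/home">Home</a><div id="app"></div>'
rendered = '<a href="/home">Home</a><div id="app"><a href="/products/blue-shoes">Blue shoes</a></div>'
print(compare_crawls(raw, rendered, "https://example.com/")["only_after_rendering"])
# {'https://example.com/products/blue-shoes'}
```

A long "only_after_rendering" list on important templates is a sign that internal linking depends on rendering and may be discovered slowly.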
