Official statement

What you need to understand

What Exactly Is Rendering by Google Bots?

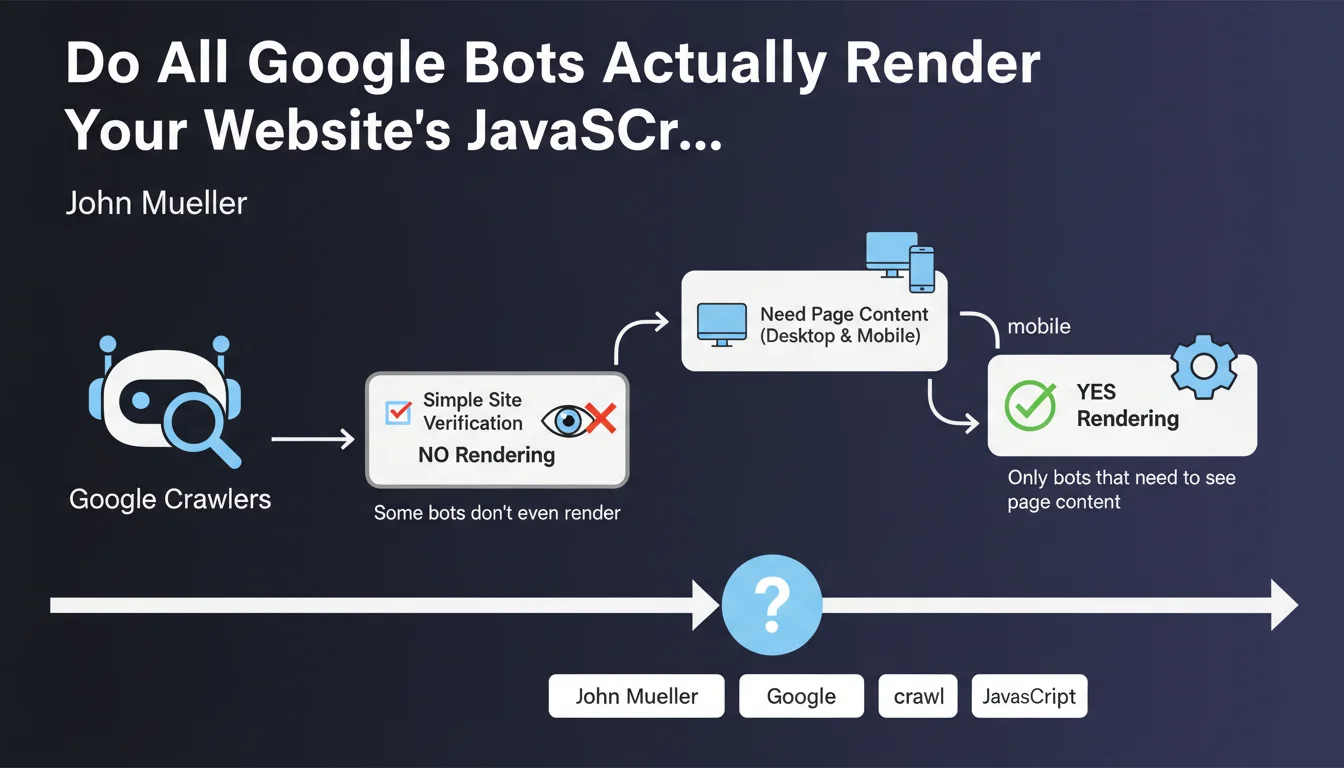

Rendering is the process by which a crawler executes JavaScript and builds the page as a web browser would. This step lets Google see content generated dynamically by JavaScript, which does not appear in the initial HTML response.

Contrary to popular belief, this resource-intensive operation is not performed on every crawl. Google must choose when the rendering phase is worth the cost.

Why Don't All Google Bots Perform Rendering?

Google operates multiple specialized bots, each with different missions. The main Googlebot for desktop and mobile needs to see complete content for indexing purposes, so it performs rendering.

However, bots like Googlebot Site Verifier (which verifies site ownership) or Googlebot Favicon (which retrieves the site icon) don't need to execute JavaScript. They simply perform basic HTTP requests to accomplish their specific task.

Which Bots Do and Don't Perform Rendering?

The main indexing bots (Googlebot for desktop and mobile) perform rendering to access the complete content of your pages. This is essential for indexing and ranking in search results.

Utility and secondary bots generally settle for basic crawling without JavaScript execution.

- With rendering: Googlebot Desktop, Googlebot Mobile (main indexing)

- Without rendering: Googlebot Site Verifier, Googlebot Favicon, certain technical verification crawls

- Rendering is a costly operation reserved for situations where seeing the final content is necessary

- This differentiation allows Google to optimize its crawl resources

SEO Expert opinion

Does This Distinction Really Impact SEO Strategies?

This statement confirms what SEO experts have been observing for a long time: Google constantly optimizes its crawl resources. Since rendering is expensive, it makes sense to apply it only to bots that truly need it.

For SEO practitioners, this means focusing efforts on the main indexing bots. Other bots accomplish peripheral tasks that don't directly affect rankings.

What Important Nuances Should Be Added to This Statement?

Even the main indexing bots don't always perform immediate rendering. Google uses a two-phase crawl system: first the raw HTML, then JavaScript rendering if necessary and if resources allow.

The delay between the two phases can reach several days, or even weeks on sites with a limited crawl budget. This is why SSR (Server-Side Rendering) or static generation remains preferable to pure client-side JavaScript.

In Which Cases Does This Architecture Cause SEO Problems?

Sites entirely based on JavaScript frameworks (React, Vue, Angular) without pre-rendering remain vulnerable. If essential content only appears after JavaScript execution, it may not be indexed immediately.

E-commerce sites with thousands of product pages generated in JavaScript are particularly affected. A new product could take weeks to be properly indexed if Google waits for the rendering phase.

Practical impact and recommendations

How Can You Optimize Your Site for This Google Crawl Reality?

The safest strategy is not to rely solely on JavaScript rendering. Your critical content must be present in the initial HTML, so that it is accessible during the first crawl without any code execution.
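To make the idea of "content present in the initial HTML" concrete, here is a minimal sketch that lists the words visible only after rendering. It assumes you already have both versions of the page as strings (for example, the raw server response and the rendered HTML copied from a browser or from Search Console). The function names `visible_words` and `rendered_only_words` are illustrative, not part of any Google tool.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text nodes, ignoring the contents of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.words = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only keep text that sits outside script/style blocks.
        if self._skip_depth == 0:
            self.words.extend(data.lower().split())


def visible_words(html):
    """Return the set of lowercase words found in the visible text of `html`."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return set(parser.words)


def rendered_only_words(raw_html, rendered_html):
    """Words visible after rendering that are absent from the raw HTML."""
    return visible_words(rendered_html) - visible_words(raw_html)
```

A large result for an important page suggests that its content depends on the rendering phase. This is a rough word-level comparison, not a reproduction of how Google evaluates a page.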

Favor hybrid approaches: SSR (Server-Side Rendering), static generation, or progressive hydration. These techniques ensure that bots see your content immediately, even without rendering.

What Technical Mistakes Should You Absolutely Avoid?

Do not rely on client-side JavaScript alone to output critical SEO elements such as H1 titles, product descriptions, or main navigation links. These elements must be present in the source HTML.

Also avoid blocking JavaScript or CSS resources in robots.txt: if Google cannot fetch them, it cannot render the page correctly even when it does run the rendering phase.
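As an illustration, the check below parses a page's source HTML without executing any JavaScript and reports which H1 headings and links it finds. It is a sketch using Python's standard `html.parser`; the function name `audit_source_html` and the shape of the returned dictionary are assumptions made for this example.

```python
from html.parser import HTMLParser


class CriticalElementParser(HTMLParser):
    """Records H1 text and counts <a href> links in static HTML."""

    def __init__(self):
        super().__init__()
        self._in_h1 = False
        self.h1_texts = []
        self.link_count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self._in_h1 = True
            self.h1_texts.append("")
        elif tag == "a" and dict(attrs).get("href"):
            self.link_count += 1

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_h1 = False

    def handle_data(self, data):
        # Accumulate text, including text nested in inline tags inside the H1.
        if self._in_h1:
            self.h1_texts[-1] += data


def audit_source_html(html):
    """Return non-empty H1 headings and the number of links in `html`."""
    parser = CriticalElementParser()
    parser.feed(html)
    parser.close()
    headings = [text.strip() for text in parser.h1_texts if text.strip()]
    return {"h1": headings, "links": parser.link_count}
```

On a page that ships only an empty container and a script tag, this returns no headings and zero links, which is what a crawl without rendering would have to work with.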
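A quick way to spot this mistake is to test your script and stylesheet URLs against your robots.txt rules. The sketch below uses Python's standard `urllib.robotparser`. The helper name `blocked_resources` and the sample URLs are hypothetical, and this parser only does simple path-prefix matching, so rules that use wildcards may be evaluated differently from how Google evaluates them.

```python
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, resource_urls, user_agent="Googlebot"):
    """Return the URLs from `resource_urls` that `robots_txt` disallows."""
    parser = RobotFileParser()
    # Parse the rules from a string rather than fetching them over the network.
    parser.parse(robots_txt.splitlines())
    return [url for url in resource_urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules and resources, for illustration only.
robots_txt = """User-agent: *
Disallow: /assets/js/
"""
resources = [
    "https://example.com/assets/js/app.js",
    "https://example.com/assets/css/site.css",
]
print(blocked_resources(robots_txt, resources))
```

Any JavaScript or CSS file that appears in the result is a resource the page may need for rendering but that crawlers are told not to fetch.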

- Verify that your main content appears in the source HTML (view-source in the browser)

- Test your pages with the URL Inspection tool in Search Console to see what Google actually indexes

- Compare raw HTML and rendered HTML to identify content gaps

- Implement SSR or static generation for strategic pages (product pages, categories, articles)

- Monitor indexing delays for your new pages in Search Console

- Configure your robots.txt so that it does not block the resources needed for rendering

- Regularly audit your server logs to understand which bots crawl your site and how frequently
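For the log audit in the last point, a minimal sketch could look like the following. It assumes access logs in the common "combined" format, where the user agent is the last quoted field, and it simply counts hits whose user agent mentions Google. The function name `google_user_agents` is an illustrative choice. User-agent strings can be spoofed, so treat the counts as indicative only.

```python
import re
from collections import Counter

# In the combined log format, the user agent is the last quoted field.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')


def google_user_agents(log_lines):
    """Count hits per user-agent string for agents that mention Google."""
    counts = Counter()
    for line in log_lines:
        match = UA_PATTERN.search(line)
        if match and "google" in match.group(1).lower():
            counts[match.group(1)] += 1
    return counts
```

Feeding it the lines of an access log (for example `google_user_agents(open("access.log"))`, where the file name is a placeholder) gives a per-agent hit count that you can track over time.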

Should You Call on Experts for This Optimization?

The technical architecture of a modern website requires specialized skills in front-end development and technical SEO. Migrating from a client-side JavaScript architecture to SSR or static generation represents a major project.

These optimizations touch the heart of your technical stack and can be complex to implement correctly. They require coordination between SEO and development teams, as well as a thorough understanding of crawling and indexing mechanisms.
