Official statement

What you need to understand

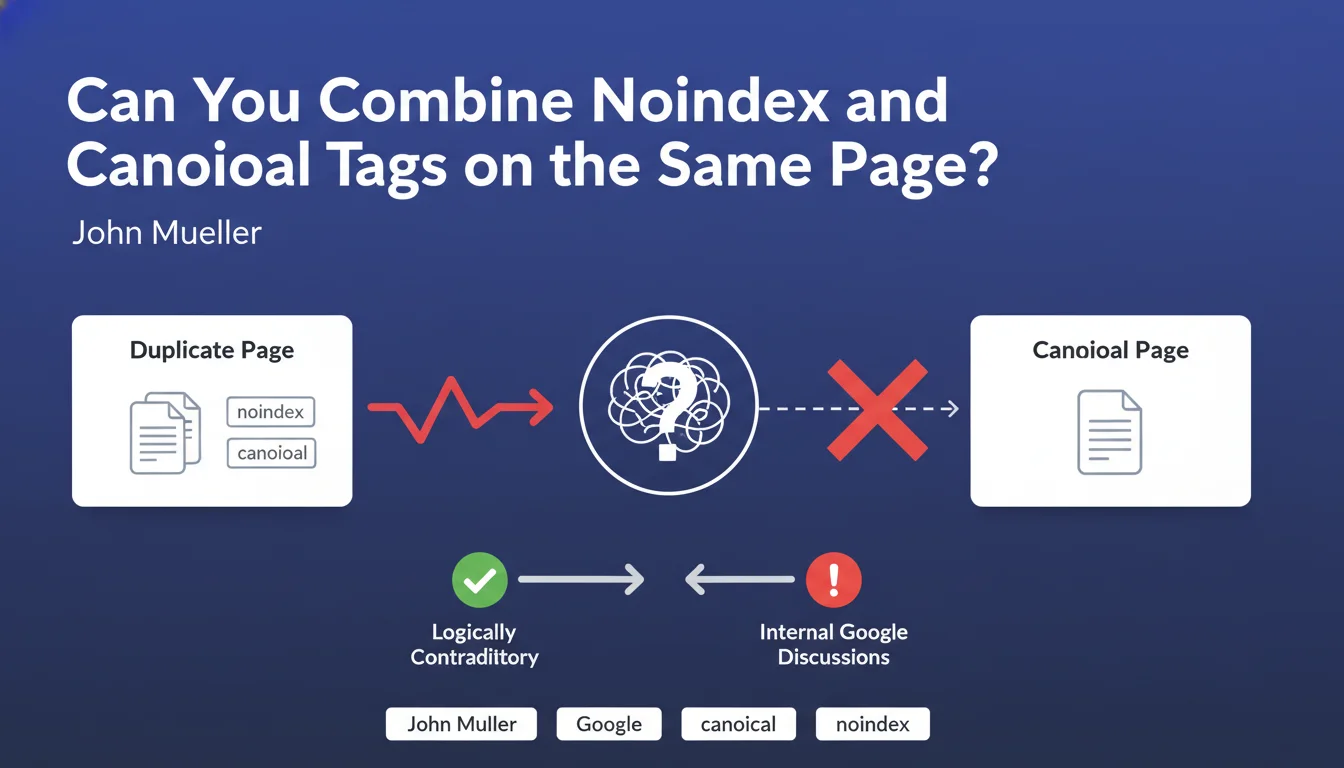

Google officially confirms that the simultaneous use of canonical tags and meta robots noindex creates contradictory signals for the search engine. The first directive indicates "this page is a duplicate, here is the original to index", while the second states "do not index this page". This contradiction generates an ambiguity of interpretation for Googlebot.

The situation becomes even more complicated when you add a Disallow directive in robots.txt. Technically, if a URL is blocked by robots.txt, the crawler cannot access the page and therefore cannot read the canonical tag present in the HTML code. However, Google sometimes retains previously crawled information in memory, which can create unpredictable behaviors.

These contradictory directives are particularly problematic on large-scale sites where SEO task automation does not always allow granular page-by-page management. Content management systems can apply global rules that unintentionally overlap.

- Canonical + noindex = contradictory signals to avoid

- Disallow blocks crawling therefore prevents reading of the canonical

- These duplicate directives frequently occur on large sites

- Google may interpret these signals inconsistently

- Manual page-by-page management is not always possible at scale

SEO Expert opinion

This recommendation from Google is technically correct but pragmatically nuanced. In an ideal world, each page would have a single clear directive. In the reality of e-commerce sites with thousands of pages, multilingual platforms, or dynamically generated sites, these overlapping directives are almost inevitable and sometimes even desirable as a safety net.

Field experience shows that Google is more tolerant than this statement suggests. The search engine has developed a certain ability to prioritize these signals: generally, noindex takes precedence over canonical, and Disallow effectively blocks everything else. Real problems mainly occur during frequent changes to these directives or when they alternate over time on the same URL.

Practical impact and recommendations

Following this official clarification, here are the concrete actions to implement to optimize the management of your indexing directives:

- Audit your directive combinations: use Screaming Frog or Sitebulb to identify pages that have both canonical + noindex simultaneously

- Establish a clear decision hierarchy: document which directive to use depending on the case (duplicate = canonical, private content = noindex, unnecessary resources = disallow)

- For pages to deindex: use only noindex, don't add a canonical to another page

- For true duplicates: use only canonical, don't add noindex

- To block entire sections: prefer robots.txt Disallow, but be aware that this prevents reading of any other directive

- Case of parameterized URLs: prefer management via Google Search Console (URL parameters) rather than multiplying directives

- Document exceptions: if your CMS imposes duplicate directives, document them and monitor their behavior in Search Console

- Test before deployment: on large sites, test your rules on a sample before mass deployment

💬 Comments (0)

Be the first to comment.