Official statement

What you need to understand

What does this Google statement actually mean?

Google states that internal links pointing to deindexed pages are not a major problem. This position may seem counterintuitive to SEO practitioners accustomed to optimizing every aspect of their internal linking.

The key nuance is that Google distinguishes between deindexed pages (deliberately excluded from the index) and low-quality pages. According to this statement, linking to noindex pages does not directly penalize the site.

Why does Google take this position?

Google seeks to simplify site management for website owners. The goal is to prevent them from worrying excessively about every internal link, particularly to functional but non-indexable pages.

This approach reflects Google's desire to focus on overall content quality rather than very granular technical aspects of internal linking.

What are the key takeaways from this statement?

- Internal links to noindex pages do not create a direct penalty according to Google

- This position specifically concerns deliberately deindexed pages, not low-quality pages

- Google tolerates this practice for reasons of user navigation and site functionality

- The statement does not take into account all possible indirect impacts on SEO

SEO Expert opinion

Is this statement complete from an SEO perspective?

Google's response is technically accurate but incomplete. While no direct penalty is applied, the indirect consequences are very real and measurable on many sites.

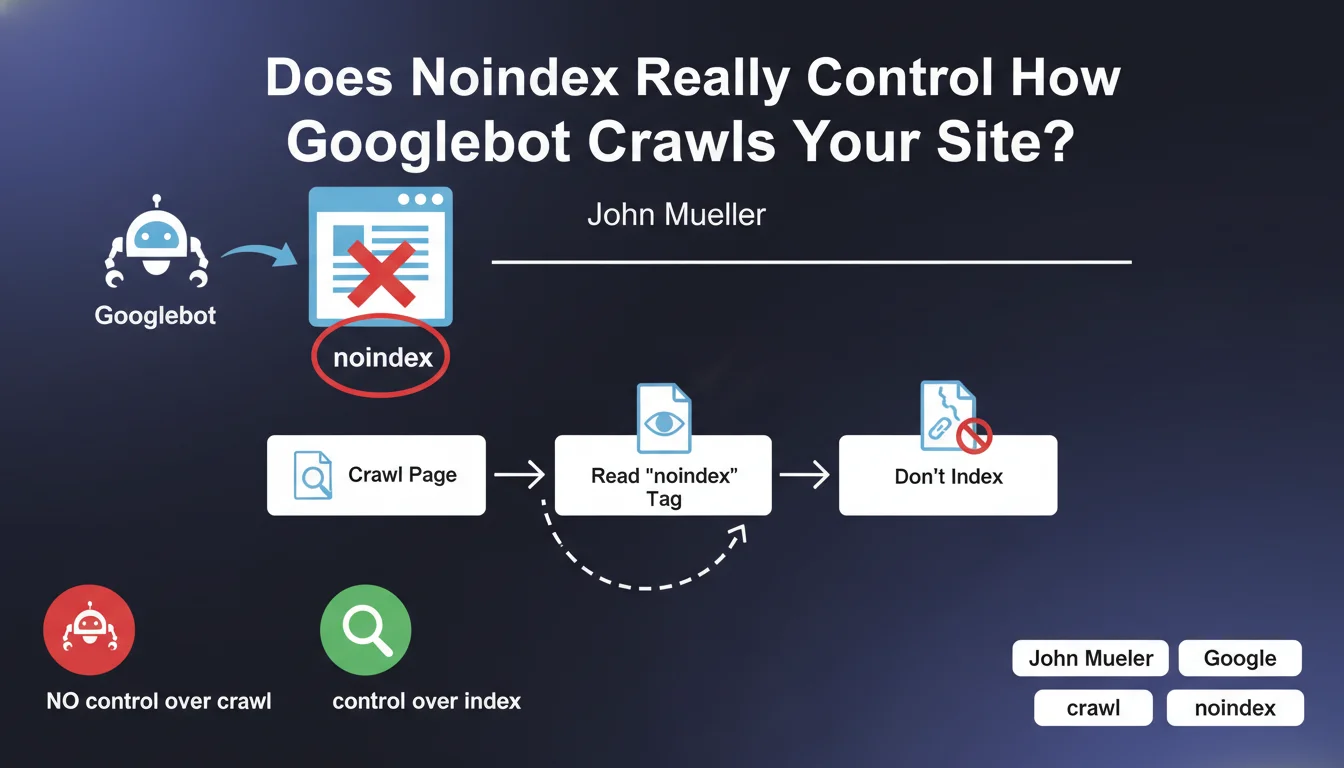

In practice, linking heavily to noindex pages creates several concrete problems. Crawl budget is affected because Googlebot follows these links and must fetch each page to read its noindex tag. Internal PageRank is also affected, since link juice sent to these pages no longer circulates efficiently through the rest of the site.

What important nuances should be considered?

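The deindexation method changes everything. With a meta noindex tag, Googlebot must crawl the page to read the directive, which consumes crawl budget. The PageRank passed to this page may then be lost rather than redistributed to other pages, since Google has indicated that it can eventually stop following the links on pages that stay noindexed for a long time.

On the other hand, a robots.txt Disallow rule blocks crawling upstream, but it also prevents Google from seeing the outgoing links on that page. Note that robots.txt controls crawling rather than indexing: a blocked URL can still appear in the index if other pages link to it, and Google cannot read a noindex tag on a page it is not allowed to crawl. Each method therefore presents tradeoffs that must be evaluated according to context.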
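To make the difference between the two mechanisms concrete, here is a minimal Python sketch using only the standard library. It checks a page's HTML for a robots meta noindex directive and, separately, checks whether a URL is blocked by a robots.txt file. The function names and the example URLs are illustrative assumptions, and the sketch ignores the `X-Robots-Tag` HTTP header, which can also carry a noindex directive.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            for token in attr.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_meta_noindex(html: str) -> bool:
    """True if the HTML carries a noindex directive (the page must be crawled to see it)."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


def is_crawl_blocked(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """True if robots.txt disallows crawling the URL (this says nothing about indexing)."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return not rules.can_fetch(user_agent, url)


# Hypothetical inputs for illustration only.
page_html = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
robots_txt = "User-agent: *\nDisallow: /search\n"

print(has_meta_noindex(page_html))
print(is_crawl_blocked(robots_txt, "https://example.com/search/shoes"))
```

A URL for which both checks return true is a configuration worth flagging: the noindex tag is there, but the crawler is never allowed to read it.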

When does this practice actually become problematic?

The problem becomes critical on high-volume e-commerce sites that deindex thousands of product variants or filter pages. If these pages receive numerous links from strategic pages, the PageRank those links pass is wasted.

Similarly, media sites or blogs that noindex categories, tags or archives while linking to them from their main navigation lose an opportunity to optimize their SEO juice circulation.

Practical impact and recommendations

What should you actually do on your site?

Start by auditing your internal linking to identify noindex pages that receive many internal links. Use Screaming Frog or Semrush to extract this data quickly.
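Once the crawl data is exported, the audit itself is a simple count. The sketch below assumes you have already reduced the export to two inputs: a list of `(source, target)` internal link pairs and a set of URLs known to be noindex. Both structures, the function name, and the `min_inlinks` threshold are hypothetical and do not correspond to any particular tool's export format.

```python
from collections import Counter


def noindex_inlink_report(links, noindex_urls, min_inlinks=2):
    """Return (url, inlink_count) pairs for noindex targets, most-linked first.

    links: iterable of (source_url, target_url) internal link pairs.
    noindex_urls: set of URLs that carry a noindex directive.
    Duplicate pairs and self-links are ignored, so the count is the
    number of distinct pages linking to each noindex URL.
    """
    counts = Counter(
        target
        for source, target in set(links)
        if target in noindex_urls and source != target
    )
    return [(url, n) for url, n in counts.most_common() if n >= min_inlinks]


# Hypothetical crawl data for illustration only.
links = [
    ("/", "/search"),
    ("/blog", "/search"),
    ("/", "/search"),      # duplicate pair, counted once
    ("/", "/thank-you"),
    ("/", "/products"),
]
noindex_urls = {"/search", "/thank-you"}

for url, inlinks in noindex_inlink_report(links, noindex_urls, min_inlinks=1):
    print(url, inlinks)
```

Raising `min_inlinks` narrows the report to the noindex pages that absorb the most internal links, which are the ones worth reviewing first.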

Then evaluate whether these pages should really remain deindexed. Sometimes, a page deemed without SEO value could be optimized and indexed to capture additional traffic.

For pages that must remain noindexed, minimize links from your strategic, high-PageRank pages. Prefer links from the footer or from less important sections of your architecture.

What critical mistakes should you absolutely avoid?

Never noindex pages that constitute mandatory passage points in your architecture. This creates black holes that absorb PageRank without redistributing it.
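The "black hole" effect can be illustrated with a toy model. The sketch below runs the textbook power-iteration PageRank on a tiny hypothetical site, once with a category page that passes rank to its products and once with the same page treated as a dead end. This is a deliberate simplification under stated assumptions: it is not Google's actual algorithm, and treating a noindex page as having no outgoing links is a worst-case assumption, not confirmed behavior.

```python
def pagerank(graph, damping=0.85, iterations=50):
    """Textbook power-iteration PageRank.

    graph maps each page to the list of pages it links to.
    Rank held by pages with no outlinks is spread evenly over all pages.
    """
    pages = list(graph)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        dangling = sum(rank[p] for p in pages if not graph[p])
        new_rank = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        for page in pages:
            for target in graph[page]:
                new_rank[target] += damping * rank[page] / len(graph[page])
        rank = new_rank
    return rank


# Hypothetical four-page site: home -> category -> two products -> home.
site_with_hub = {
    "home": ["category"],
    "category": ["product_a", "product_b"],
    "product_a": ["home"],
    "product_b": ["home"],
}
# Same site, but the category page's outlinks are assumed to be ignored.
site_with_dead_end = dict(site_with_hub, category=[])

hub = pagerank(site_with_hub)
dead_end = pagerank(site_with_dead_end)
print(round(hub["product_a"], 3), round(dead_end["product_a"], 3))
```

In this toy setup the product pages end up with a lower score when the category page stops passing rank, which is the mechanism the warning above describes.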

Also avoid deindexing pages in bulk to "save crawl budget" without analyzing the impact on overall internal linking. This strategy can be counterproductive.

Don't use robots.txt to block pages through which you want to pass PageRank, because Google won't be able to crawl them and follow their outgoing links. Blocking a page in robots.txt also does not guarantee that it is deindexed, since a blocked URL can still be indexed on the basis of links pointing to it.

How can you optimize your deindexation strategy?

- Map all your noindex pages and analyze their inbound link profile

- Prioritize indexation of pages naturally receiving many quality internal links

- Use noindex only for truly valueless pages (internal search results, thank you pages, etc.)

- Restructure your navigation to avoid linking to noindex pages from your main menu

- Regularly monitor crawl activity in Search Console (Crawl Stats report) to detect anomalies

- Test the impact of your modifications by tracking the evolution of crawling and indexation over several weeks

- Document your deindexation strategy to maintain consistency over time
