Official statement

What you need to understand

What does massive deindexing mean according to Google?

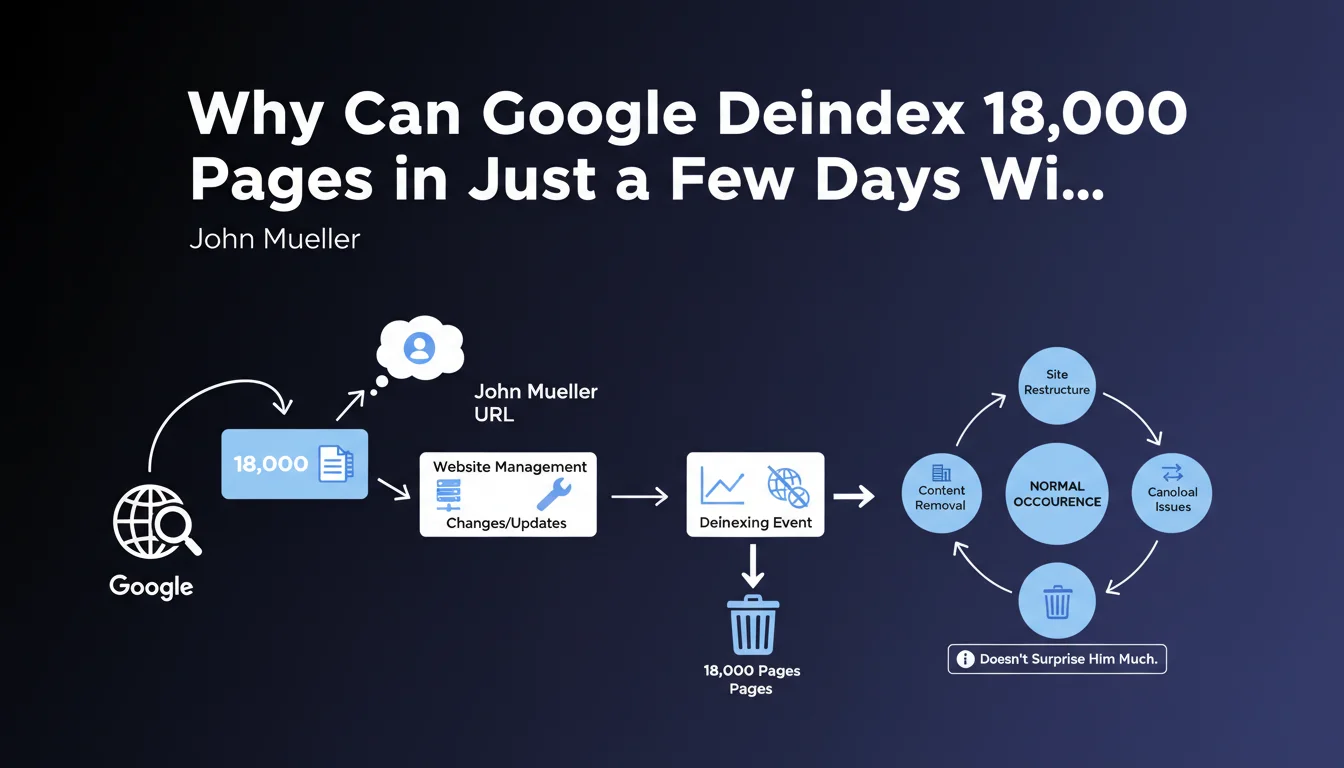

John Mueller, a Google spokesperson, confirmed that thousands of URLs can drop out of the index within a few days as part of normal operation. In the case mentioned, 18,000 pages disappeared from the index in a very short time.

This official statement shows that Google regards the phenomenon as acceptable under certain circumstances: it is not necessarily a penalty or a major technical issue. How serious it is depends heavily on the context and on the size of the site concerned.

Why is site size so decisive?

The editorial comment highlights a crucial point: the impact of a deindexing is measured proportionally to the total size of the site. 18,000 URLs removed from the index represent a very different impact depending on the context.

On a site with several million pages, this deindexing represents less than 1% of the total content. On a site with 19,000 pages, by contrast, it means that roughly 95% of the pages have left the index, which is catastrophic for visibility.
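The proportion is simple to compute. Here is a minimal sketch, assuming you know how many URLs left the index and how many the site has in total; the function name `deindexed_share` and the 2,000,000-page figure are illustrative choices, not values from Google.

```python
def deindexed_share(removed_urls: int, total_urls: int) -> float:
    """Return the percentage of a site's URLs that were deindexed."""
    if total_urls <= 0:
        raise ValueError("total_urls must be positive")
    return removed_urls / total_urls * 100


# The same 18,000 lost URLs on two sites of very different sizes.
# 2,000,000 is an assumed stand-in for "several million pages".
for total in (2_000_000, 19_000):
    share = deindexed_share(18_000, total)
    print(f"{total:>9,} pages: {share:.1f}% deindexed")
```

On the large site the loss stays under 1%, while on the 19,000-page site it is close to 95%.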

What are the possible scenarios for rapid deindexing?

Several factors can explain a massive and rapid deindexing. Google regularly makes adjustments to its index to eliminate low-quality or duplicate content.

- Algorithmic detection of low-quality content (thin content, duplication)

- Technical problems: robots.txt errors, accidental noindex tags, unstable server

- Bugs on Google's side during algorithm updates (unofficially acknowledged)

- Poorly managed site restructuring with missing redirects

- Algorithmic penalty targeting certain sections of the site

SEO Expert opinion

Does this statement align with real-world observations?

With 15 years of experience, I can confirm that massive deindexings are more frequent than most people think. I have personally observed sudden drops of thousands of indexed pages on e-commerce and news sites.

Mueller's response, while reassuring in appearance, hides a more nuanced reality. Google never deindexes massively "by chance." There is always an underlying reason, even if it's not immediately obvious to the webmaster. The algorithm detects patterns that we don't always see.

What important nuances should be considered?

Mueller's statement lacks critical details. Saying that "it can happen" without providing actionable context can be dangerous for less experienced SEOs who might trivialize the phenomenon.

A deindexing of this magnitude always deserves thorough investigation, regardless of site size. Even on a site with millions of URLs, losing 18,000 pages can indicate a structural problem that will progressively affect other sections.

When should you really worry?

Certain signals should put you on high alert. If the deindexing affects your highest-traffic pages or your main categories, treat it as a critical emergency.

Similarly, if the deindexing is accompanied by a sharp drop in organic traffic (more than 30% in one week), a decline in positions on your strategic keywords, or error messages in Search Console, you are facing a major problem that requires immediate intervention.
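The 30% weekly threshold can be checked mechanically. The sketch below assumes you have exported daily organic sessions for two consecutive weeks from your analytics tool; the function names and the sample numbers are hypothetical.

```python
def weekly_drop_pct(previous_week: list[int], current_week: list[int]) -> float:
    """Percentage drop in total organic sessions from one week to the next."""
    previous_total = sum(previous_week)
    if previous_total == 0:
        return 0.0  # no baseline traffic, so no drop can be measured
    return (previous_total - sum(current_week)) / previous_total * 100


def is_critical_drop(previous_week: list[int], current_week: list[int],
                     threshold_pct: float = 30.0) -> bool:
    """True when the week-over-week drop exceeds the alert threshold."""
    return weekly_drop_pct(previous_week, current_week) > threshold_pct


# Hypothetical daily sessions for two consecutive weeks.
last_week = [1000, 1100, 1050, 980, 1020, 700, 650]
this_week = [600, 580, 610, 590, 560, 400, 380]
print(is_critical_drop(last_week, this_week))
```

Comparing full weeks rather than single days avoids false alarms caused by ordinary weekday and weekend differences.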

Practical impact and recommendations

How can you quickly diagnose a massive deindexing?

The first step is to precisely quantify the extent of the deindexing. The "site:yourdomain.com" command in Google gives only a rough estimate, so use it as a quick check and rely on your historical data in Google Search Console for the actual figures.

Then examine the page indexing report in Search Console (formerly the "Coverage" section) to identify error types. Look particularly at exclusions: pages with noindex tags, pages blocked by robots.txt, 404/5xx errors, or detected duplicate content.

Also verify your robots.txt file and your XML sitemap, since an accidental modification can block the crawling of entire sections of your site. Finally, check your server logs for a decrease in Googlebot activity.
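A quick way to test a robots.txt file against important URLs is Python's standard `urllib.robotparser`. This is only a sketch: the rules and URLs below are made up, and the standard-library parser uses simple prefix matching, so it may not reproduce Googlebot's handling of wildcards such as `*` and `$`.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str],
                 user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt content disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt where a whole section was blocked by mistake.
robots_txt = """\
User-agent: *
Disallow: /category/
"""

urls_to_check = [
    "https://example.com/category/shoes",
    "https://example.com/about",
]
print(blocked_urls(robots_txt, urls_to_check))
```

Running this against a list of your top landing pages after every robots.txt change would catch an accidental `Disallow` before Google does.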

What corrective actions should be implemented immediately?

If you identify a technical problem (an erroneous robots.txt, unintended noindex tags), correct it immediately and request reindexing via Search Console. Quality pages will generally return to the index within 2-4 weeks.

If the deindexing is related to content quality, adopt a more radical strategy: identify low-value pages, then either improve them substantially or delete them with 301 redirects to relevant content.
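Before requesting reindexing, it helps to confirm that the page no longer carries a noindex directive. The sketch below uses only the standard library and assumes you have already fetched the page's HTML and, optionally, its `X-Robots-Tag` response header; the class and function names are illustrative.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attributes.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str, x_robots_tag: str = "") -> bool:
    """True if the HTML or the X-Robots-Tag header value contains noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_directives = [d.strip().lower() for d in x_robots_tag.split(",")]
    return "noindex" in parser.directives or "noindex" in header_directives


# Hypothetical page that still carries a leftover staging directive.
page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))
```

Checking the header as well as the markup matters because a noindex sent by the server is invisible in the page source.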

- Monitor the number of indexed pages daily for at least 15 days

- Analyze server logs to detect changes in Googlebot behavior

- Audit the quality of deindexed pages (content, duplication, added value)

- Correct technical errors identified in Search Console

- Submit an updated sitemap containing only quality URLs

- Improve or delete low-quality content

- Document all changes to understand what worked

- Set up automatic alerts on indexation variations
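For the log analysis step in the checklist above, a per-day count of Googlebot requests is often enough to spot a change in crawl behavior. This sketch assumes access logs in the common Apache/Nginx "combined" format and matches on the user-agent string only; since that string can be spoofed, treat the counts as indicative rather than verified.

```python
import re
from collections import Counter

# Captures the date part of a timestamp such as [10/Jan/2024:06:25:11 +0000]
LOG_DATE = re.compile(r"\[(\d{2}/[A-Za-z]{3}/\d{4}):")


def googlebot_hits_per_day(log_lines) -> Counter:
    """Count log lines per day whose user agent mentions Googlebot."""
    hits = Counter()
    for line in log_lines:
        if "Googlebot" not in line:
            continue
        match = LOG_DATE.search(line)
        if match:
            hits[match.group(1)] += 1
    return hits


# Hypothetical log excerpt; in practice, pass an open log file instead.
sample_log = [
    '66.249.66.1 - - [10/Jan/2024:06:25:11 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Jan/2024:07:02:40 +0000] "GET /b HTTP/1.1" 200 734 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Jan/2024:07:05:00 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '66.249.66.1 - - [11/Jan/2024:06:30:02 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]
for day, count in googlebot_hits_per_day(sample_log).items():
    print(day, count)
```

A steady decline in these daily counts on a given section is worth comparing against the dates when pages began leaving the index.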

How can you prevent future massive deindexings?

Prevention relies on continuous monitoring and rigorous SEO hygiene. Set up automatic alerts that fire as soon as the number of indexed pages varies by more than 5%.
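Such an alert can be as simple as comparing each day's indexed-page count with the previous day's. The sketch below assumes you record the count once a day under an ISO date key, for example from a manual Search Console export; the function name and the sample figures are hypothetical.

```python
def indexation_alerts(daily_counts: dict[str, int],
                      threshold_pct: float = 5.0) -> list[tuple[str, float]]:
    """Return (date, change in %) for each day-over-day change above the threshold."""
    alerts = []
    days = sorted(daily_counts)  # ISO dates (YYYY-MM-DD) sort chronologically
    for previous_day, current_day in zip(days, days[1:]):
        before = daily_counts[previous_day]
        if before == 0:
            continue  # cannot compute a relative change from zero
        change = (daily_counts[current_day] - before) / before * 100
        if abs(change) > threshold_pct:
            alerts.append((current_day, round(change, 1)))
    return alerts


# Hypothetical daily counts of indexed pages.
counts = {
    "2024-01-01": 19_000,
    "2024-01-02": 18_900,
    "2024-01-03": 1_000,
}
print(indexation_alerts(counts))
```

Using the absolute value of the change means a sudden surge in indexed pages, which can signal accidental duplication, is flagged as well as a drop.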

Regularly audit your content quality with tools like Screaming Frog or Oncrawl. Proactively identify duplicate content, pages that are too short, or rarely visited sections that could be deindexed.
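As a rough complement to those tools, thin and exactly duplicated pages can be flagged from extracted page text. This is a sketch under stated assumptions: the 200-word minimum is an arbitrary threshold, not a Google rule, and hashing only catches exact duplicates after whitespace and case normalization, not near-duplicates.

```python
import hashlib
from collections import defaultdict


def audit_pages(pages: dict[str, str], min_words: int = 200):
    """Return (thin_urls, duplicate_groups) for a mapping of URL to body text."""
    thin = [url for url, text in pages.items() if len(text.split()) < min_words]

    urls_by_hash = defaultdict(list)
    for url, text in pages.items():
        normalized = " ".join(text.lower().split())
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        urls_by_hash[digest].append(url)
    duplicates = [urls for urls in urls_by_hash.values() if len(urls) > 1]

    return thin, duplicates


# Hypothetical extracted text for three pages.
pages = {
    "/a": "Hello   world",
    "/b": "hello world",
    "/c": "word " * 300,
}
thin, duplicates = audit_pages(pages)
print("thin:", thin)
print("duplicates:", duplicates)
```

Pages that appear in both lists are the most likely candidates for improvement, consolidation, or removal.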
