Official statement

What you need to understand

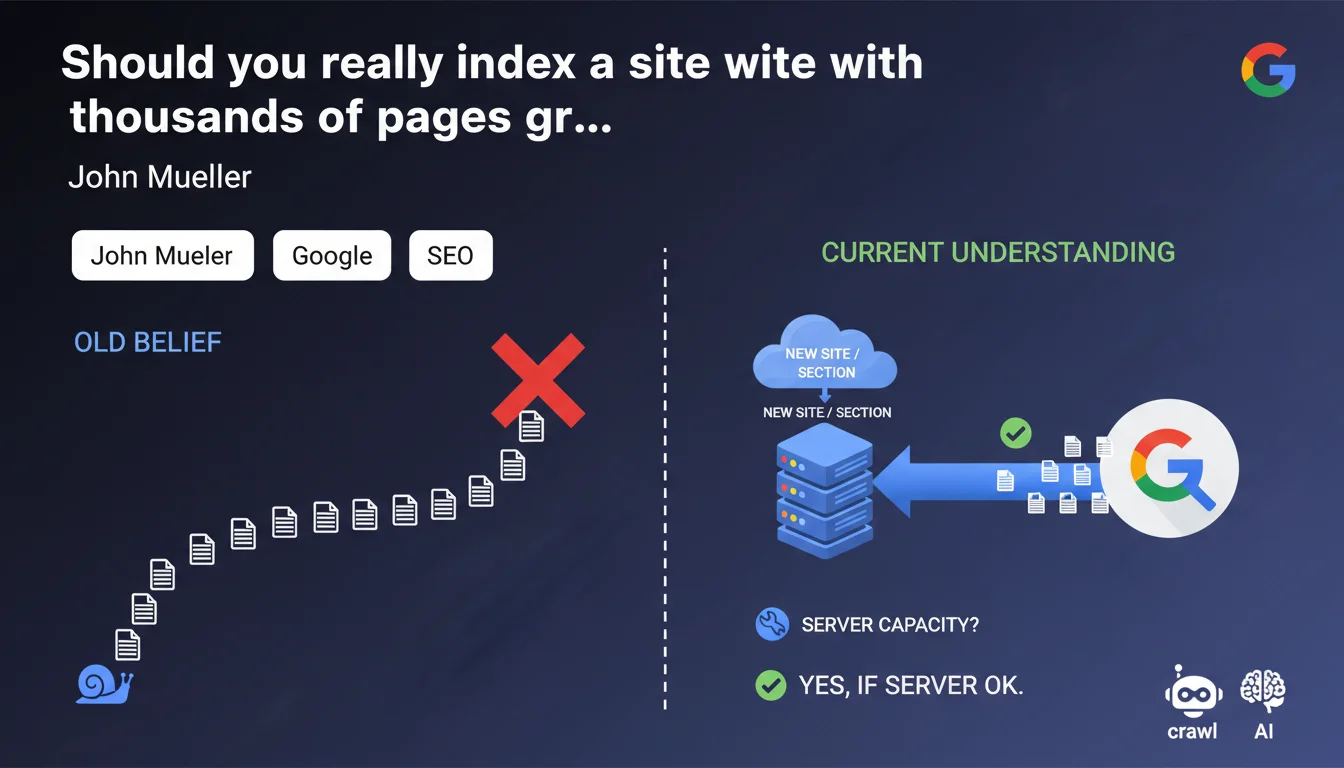

What was the traditional SEO belief about mass indexing?

For years, the SEO community recommended a gradual approach for indexing large sites. This practice consisted of opening the floodgates little by little, allowing Google to discover a few thousand pages at a time.

This strategy was based on the observation that search engines seemed to have difficulties processing massive volumes of new URLs simultaneously. SEOs also feared crawl budget issues and potential penalties.

What exactly does John Mueller say on this subject?

John Mueller clearly states that Google has no problem crawling and indexing hundreds of thousands of pages at once. This statement marks a significant evolution in the technical understanding of the engine's capabilities.

The only constraint mentioned concerns the server's capacity to support such an influx of crawl requests. The bottleneck is therefore on the hosting side, not on Google's side.

Why has Google's capacity evolved this way?

Google's technical infrastructure has evolved considerably in recent years. Massive investments in server farms and parallel processing algorithms now allow for handling much larger volumes.

- Google can index massively without technical problems on its end

- The main limitation lies in the server's ability to respond to requests

- The gradual approach is no longer necessary for reasons related to the engine

- This evolution simplifies the launch of large sites or new sections

SEO Expert opinion

Is this statement consistent with field observations?

In practice, Mueller's assertion matches recent observations on large launches. Sites with thousands of pages can be indexed within a few days without particular difficulty, unlike in previous years.

One nuance, however: rapid indexing does not automatically mean immediate rankings. Google can crawl and index massively, but qualitative evaluation and ranking take time.

In which cases should you still be careful?

Even if Google accepts massive crawling, certain situations require particular caution. Sites with limited authority or fragile technical infrastructure must anticipate the impacts.

Mass indexing of low-quality content remains problematic, regardless of indexing speed. Google could quickly identify a site as having low added value.

What are the implications for crawl budget?

This statement does not challenge the concept of crawl budget. Google can index massively, but that doesn't mean it will systematically do so if the site doesn't present enough quality signals.

Crawl budget remains determined by domain authority, update frequency, and overall quality. A new or low-authority site won't necessarily obtain immediate massive crawling.

Practical impact and recommendations

What should you concretely do to prepare for a massive launch?

Technical preparation becomes the critical element. Before any volume launch, you must ensure that your infrastructure can support an influx of Googlebot requests without performance degradation.

Run a load test that simulates 50 to 100 simultaneous requests. Verify that response times stay under 500 ms and that the server does not saturate (no rising error rate, no timeouts).
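As an illustration, the load test described above can be sketched with Python's standard library alone. This is a minimal sketch, not a replacement for a dedicated load testing tool: the function names `timed_fetch` and `load_test` are hypothetical, the percentile is a rough approximation, and the 50-worker default simply mirrors the figure quoted above.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from urllib.error import HTTPError
from urllib.request import urlopen


def timed_fetch(url, timeout=10.0):
    """Fetch one URL and return (status_code, elapsed_seconds)."""
    start = time.perf_counter()
    try:
        with urlopen(url, timeout=timeout) as response:
            response.read()
            status = response.status
    except HTTPError as err:
        status = err.code  # 4xx/5xx responses still count as results
    except OSError:
        status = 0  # connection error or timeout
    return status, time.perf_counter() - start


def load_test(urls, concurrency=50, fetch=timed_fetch):
    """Request every URL using `concurrency` parallel workers and summarize."""
    if not urls:
        raise ValueError("urls must not be empty")
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(fetch, urls))
    times_ms = sorted(elapsed * 1000 for _, elapsed in results)
    # Count failed connections (status 0) and server errors (5xx).
    errors = sum(1 for status, _ in results if status == 0 or status >= 500)
    p95_index = max(0, int(len(times_ms) * 0.95) - 1)  # approximate p95
    return {
        "requests": len(results),
        "errors": errors,
        "median_ms": statistics.median(times_ms),
        "p95_ms": times_ms[p95_index],
    }
```

A call such as `load_test(list_of_staging_urls, concurrency=100)` would then show whether the median and approximate 95th-percentile times stay under the 500 ms target. Running it against a staging copy rather than production is the safer choice, since the test itself generates load.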

- Size your hosting appropriately (CPU, RAM, bandwidth)

- Enable a high-performance caching layer (Varnish, Redis, CDN)

- Optimize database queries to avoid bottlenecks

- Configure real-time monitoring to detect problems

- Prepare a clean XML sitemap with all URLs to be indexed

- Verify that robots.txt doesn't block important sections

- Ensure that internal linking allows for easy discovery
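The sitemap and robots.txt items in the checklist above lend themselves to a quick automated check. The sketch below is one possible approach using only Python's standard library; `blocked_urls` and `build_sitemaps` are hypothetical helper names. Note that `urllib.robotparser` does not replicate Googlebot's matching rules exactly (it does not handle `*` and `$` wildcards in paths, for example), so its output is a first pass rather than a definitive answer.

```python
from urllib.robotparser import RobotFileParser
from xml.sax.saxutils import escape

MAX_URLS_PER_SITEMAP = 50_000  # per-file limit in the sitemaps.org protocol


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt content disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


def build_sitemaps(urls):
    """Return a list of sitemap XML strings, each within the URL limit."""
    sitemaps = []
    for start in range(0, len(urls), MAX_URLS_PER_SITEMAP):
        chunk = urls[start:start + MAX_URLS_PER_SITEMAP]
        # Escape &, < and > so each URL is valid inside the XML document.
        entries = "\n".join(
            f"  <url><loc>{escape(url)}</loc></url>" for url in chunk
        )
        sitemaps.append(
            '<?xml version="1.0" encoding="UTF-8"?>\n'
            '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n'
            f"{entries}\n"
            "</urlset>\n"
        )
    return sitemaps
```

For a launch of several hundred thousand pages, `build_sitemaps` returns several files, which would then be listed in a sitemap index. Running `blocked_urls` on the same URL list before submission catches cases where a section meant for indexing is still disallowed.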

What mistakes should you avoid during mass indexing?

The main error would be to focus solely on indexing volume without considering quality. A massively indexed site with weak content will send lasting negative signals.

Don't confuse technical capacity with optimal strategy. Just because Google can index everything at once doesn't mean you should necessarily proceed that way, especially if your content isn't yet optimal.

How can you verify that your indexing strategy is working?

Use Search Console to track the number of indexed pages and any crawl errors daily. The coverage report (named "Page indexing" in current versions of Search Console) gives you a clear view of the progress.

Also monitor your server logs to understand Googlebot's actual behavior: frequency, pages visited, response codes. This data is essential to adjust your strategy.
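The log review described above can be sketched as follows, assuming the server writes the common "combined" access log format used by default in Apache and Nginx. The function name `summarize_googlebot` is hypothetical, and the regular expression would need adjusting for a custom log format. The user agent string can be spoofed, so a strict audit would also verify the requesting IP addresses (Google documents a reverse DNS check for this).

```python
import re
from collections import Counter

# Combined log format: ip ident user [time] "request" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:\]]+)[^\]]*\] '
    r'"(?:\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot(lines):
    """Count Googlebot hits per day, per status code, and per path."""
    by_day, by_status, by_path = Counter(), Counter(), Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        # Skip unparseable lines and requests from other user agents.
        if not match or "Googlebot" not in match["agent"]:
            continue
        by_day[match["day"]] += 1
        by_status[match["status"]] += 1
        by_path[match["path"]] += 1
    return by_day, by_status, by_path
```

Passing an open log file to `summarize_googlebot` gives three counters: hits per day show crawl frequency, status codes reveal 5xx spikes during the launch, and `by_path.most_common(20)` shows which pages Googlebot visits most.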
