Official statement

What you need to understand

What is a grouped manual action on a site network?

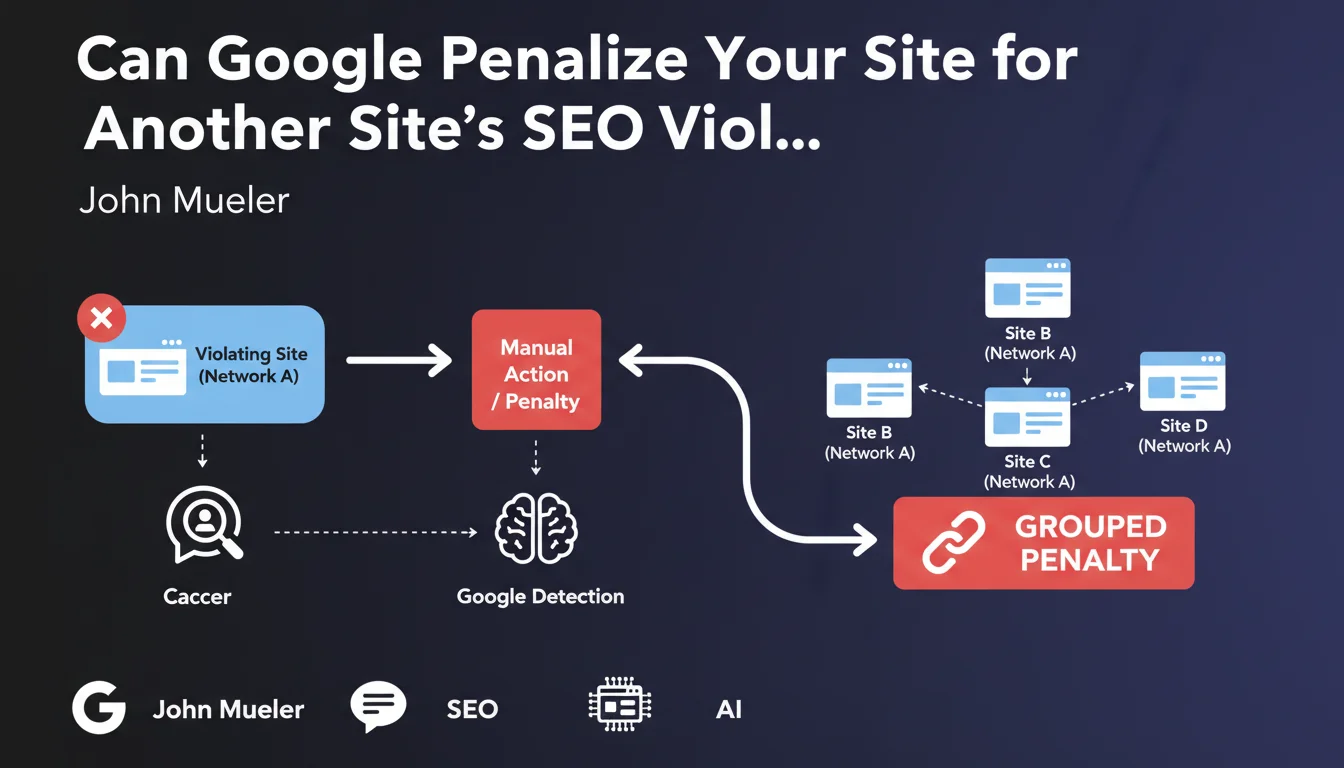

Google applies manual actions (commonly called manual penalties) when a site violates its quality guidelines. Unlike automatic algorithmic filters, these actions are decided by human analysts.

When Google teams identify a violating site, they can extend the investigation to other properties in the same network. If these sites exhibit similar characteristics or identical questionable practices, a grouped penalty can be applied simultaneously.

How does Google detect that a site network exists?

Google uses several fingerprint signals to identify networks: the same owner in WHOIS data, the same hosting servers, cross-linking patterns, and similarities in content or templates.

Shared technical identifiers, such as Google Analytics or Google Tag Manager IDs, or even certain code signatures, can also reveal connections between sites.
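To see what a shared-identifier footprint looks like in practice, here is a minimal Python sketch that scans page HTML for tracking IDs and lists the ones that appear on more than one site. It is a self-audit illustration only, not a description of how Google detects networks. The domain names, the HTML snippets, the `shared_identifiers` helper, and the ID patterns are all assumptions made for the example.

```python
import re
from collections import defaultdict

# Assumed patterns for Universal Analytics, GA4, and Tag Manager IDs.
TRACKING_ID = re.compile(
    r"\b(UA-\d{4,10}-\d{1,4}|G-[A-Z0-9]{6,12}|GTM-[A-Z0-9]{4,9})\b"
)

def shared_identifiers(pages):
    """Map each tracking ID to the sites embedding it, keeping IDs found on 2+ sites."""
    sites_by_id = defaultdict(set)
    for site, html in pages.items():
        for tracking_id in TRACKING_ID.findall(html):
            sites_by_id[tracking_id].add(site)
    return {
        tracking_id: sorted(sites)
        for tracking_id, sites in sites_by_id.items()
        if len(sites) > 1
    }

# Hypothetical, hand-written HTML snippets (not real sites or real IDs).
pages = {
    "site-a.example": "<script>gtag('config', 'G-ABC123XYZ9');</script>",
    "site-b.example": "<script>gtag('config', 'G-ABC123XYZ9');</script> <!-- GTM-KX7P2QF -->",
    "site-c.example": "<script>gtag('config', 'G-ZZZ999YYY1');</script>",
}

overlap = shared_identifiers(pages)
print(overlap)
```

In this made-up data set, only the GA4 ID embedded on both `site-a.example` and `site-b.example` is reported; IDs that appear on a single site are ignored.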

What types of networks are particularly targeted?

Private blog networks (PBNs) are the primary target of this approach. These sets of sites, created solely to manipulate rankings through artificial links, are a direct violation of the guidelines.

Networks of satellite sites or doorway pages, created to capture traffic and redirect to a main site, are also affected. Any network whose objective is to manipulate the algorithm rather than provide value to users is at risk.

- A manual penalty can extend to an entire detected network

- Google uses technical and behavioral fingerprints to identify connections

- PBNs and satellite sites are the main targets of these grouped actions

- The notification appears in the Search Console of each penalized site

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Google's position matches what has been observed in the field over several years. Numerous documented cases show simultaneous penalties on entire networks, particularly during PBN cleanup waves.

It is also fully consistent with Google's anti-manipulation philosophy. The grouped approach makes economic sense: once an analyst has identified a pattern, it would be inefficient to act on only one site in the network.

What important nuances should be added to this statement?

It's important to distinguish a manipulative network from a legitimate portfolio of sites. Owning multiple independent thematic sites, with unique content and no artificial links between them, does not constitute a violation.

The grouped penalty applies when sites share the same abusive practices, not simply because they have the same owner. An entrepreneur with five legitimate sites has nothing to fear if one of them is penalized for an issue specific to that site.

In what cases can this rule pose problems for legitimate SEOs?

SEO agencies and consultants who host multiple clients on the same infrastructure can be unfairly impacted. If a client adopts black hat practices without the agency's knowledge, the penalty could theoretically spill over to other sites on that infrastructure.

Multi-site platforms (marketplaces, franchise networks) can also be affected if a network member violates the guidelines. This is why governance and common quality rules are essential.

Practical impact and recommendations

How can you protect a legitimate site network against this risk?

The first rule is to maintain technical independence between your legitimate sites. Use different hosting and separate Analytics accounts, and avoid common signatures in the code.

Ensure that each site offers real unique value to its own audience. Content must be original, the objective clear, and the user experience optimal. Avoid excessive cross-linking between your properties.

If your sites need to reference each other for legitimate reasons, use natural contextual links with varied and non-over-optimized anchors. Transparency about common ownership can even be a plus.

What should you do if a grouped manual action hits your network?

Immediately check the Search Console of each property to understand the exact nature of the penalties. Manual action notifications are generally detailed and indicate the specific problems found.

Treat each site individually by correcting the identified issues, then submit separate reconsideration requests. Document precisely the corrective actions taken for each property.

What strategies should you adopt to develop multiple sites without risk?

Favor an approach where each site has its own editorial identity, a distinct audience, and specific business objectives. Avoid templates that are identical or visually too similar.

Invest in differentiated expert content rather than multiplying generic sites on related niches. A single authoritative site is often worth more than a network of average sites covering those niches.

- Verify the technical independence of your different web properties

- Audit all cross-links between your sites to identify suspicious patterns

- Ensure that each site offers a unique and distinct value proposition

- Document the business legitimacy of each property and its reason for existing

- Set up a separate Search Console property for each site and regularly monitor notifications

- Avoid common technical signatures (same Google Analytics ID, same server, same template)

- Train teams on Google guidelines to prevent unintentional violations
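The cross-link audit from the checklist above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a complete audit tool: it assumes you have already exported each site's outbound links as `(href, anchor text)` pairs, for example from a crawler. The `cross_link_report` helper, the domain names, and the link data are all hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def cross_link_report(outlinks, owned):
    """Count links between owned domains, keyed by (source, target, normalized anchor)."""
    report = Counter()
    for source, links in outlinks.items():
        for href, anchor in links:
            target = urlparse(href).hostname
            # Only links that point at another property you own are of interest.
            if target in owned and target != source:
                report[(source, target, anchor.strip().lower())] += 1
    return report

# Hypothetical portfolio and crawl export (not real sites).
owned = {"site-a.example", "site-b.example"}
outlinks = {
    "site-a.example": [
        ("https://site-b.example/", "best cheap widgets"),
        ("https://site-b.example/shop", "Best cheap widgets"),
        ("https://en.wikipedia.org/wiki/Widget", "widget"),
    ],
    "site-b.example": [
        ("https://site-a.example/about", "our sister site"),
    ],
}

report = cross_link_report(outlinks, owned)
# Anchors reused on several links between the same two properties deserve a closer look.
repeated = {key: count for key, count in report.items() if count > 1}
print(repeated)
```

Here the same keyword-rich anchor is used twice from `site-a.example` to `site-b.example`, which is the kind of repeated, over-optimized pattern worth reviewing by hand. The threshold of more than one occurrence is arbitrary and should be adapted to the size of your sites.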
