Official statement

What you need to understand

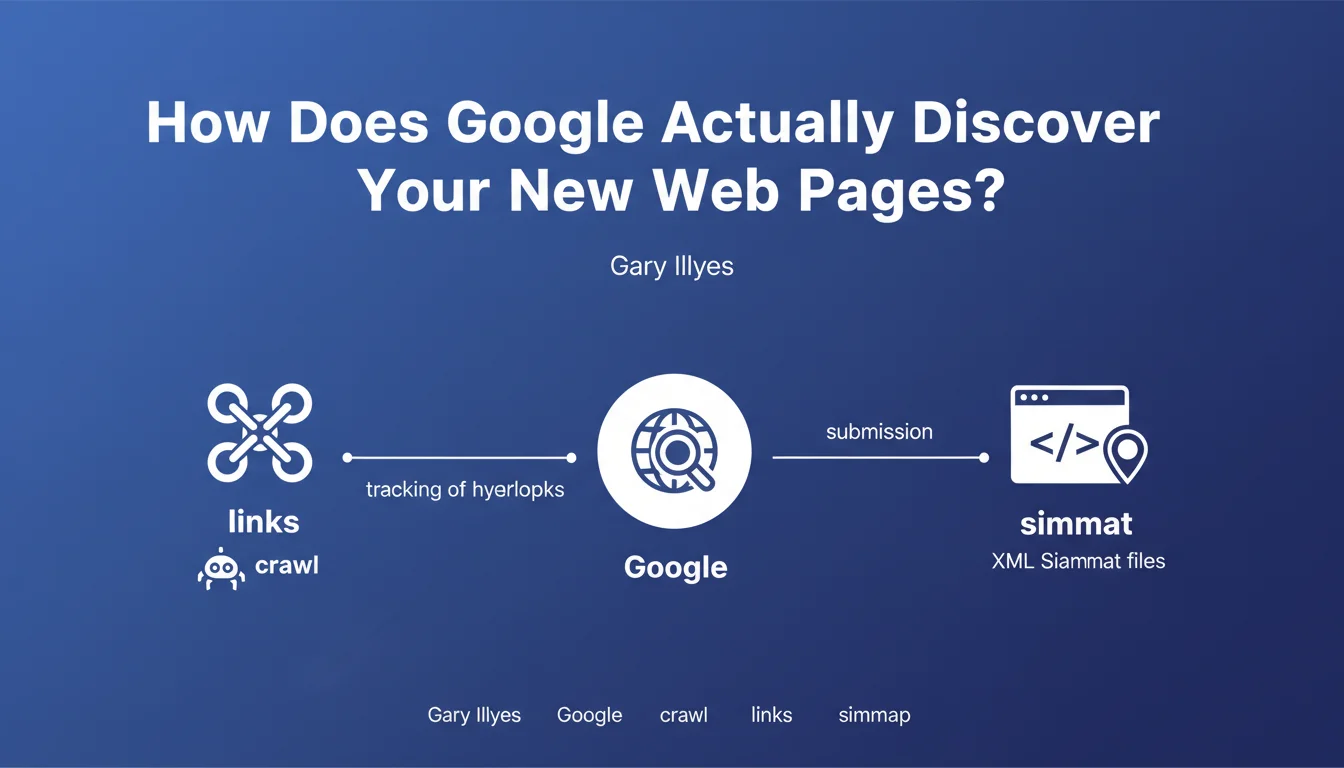

Gary Illyes confirmed at a conference that Google uses two main methods to discover new URLs to crawl. The first and most important is still its crawlers following hyperlinks.

The second method relies on XML Sitemap files, a complementary but essential channel. The ordering matters: it shows that link architecture remains the foundation of content discovery by Google.

Other mechanisms certainly exist, such as data exchange between Google bots or information reported by Chrome, but their contribution remains marginal compared to the two main channels.

- Hyperlinks are the primary method for URL discovery

- XML Sitemaps complement links by facilitating discovery

- Internal and external link architecture remains crucial for visibility

- Other discovery channels represent a negligible volume
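
To make the two channels concrete, here is a minimal sketch of how any crawler could collect candidate URLs from a page's hyperlinks and from a Sitemap file. It is an illustration only and not a description of how Google implements discovery. The sample HTML, the sample Sitemap, the `example.com` URLs and the helper names (`LinkExtractor`, `urls_from_html`, `urls_from_sitemap`) are all assumptions made for the example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


class LinkExtractor(HTMLParser):
    """Collects the href of every <a> tag, resolved against a base URL."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            self.links.add(urljoin(self.base_url, href))


def urls_from_html(html, base_url):
    """Channel 1: URLs found by following hyperlinks on a page."""
    parser = LinkExtractor(base_url)
    parser.feed(html)
    return parser.links


def urls_from_sitemap(xml_text):
    """Channel 2: URLs declared in the <loc> entries of an XML Sitemap."""
    root = ET.fromstring(xml_text)
    locs = root.findall("sm:url/sm:loc", SITEMAP_NS)
    return {loc.text.strip() for loc in locs if loc.text}


# Hypothetical sample data, for illustration only.
PAGE = '<a href="/blog/">Blog</a> <a href="https://example.com/about">About</a>'
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/blog/</loc></url>
  <url><loc>https://example.com/deep/page</loc></url>
</urlset>"""

linked = urls_from_html(PAGE, "https://example.com/")
listed = urls_from_sitemap(SITEMAP)

# URLs that only the Sitemap would reveal to a crawler.
sitemap_only = listed - linked
print(sorted(sitemap_only))
```

In this sample, `https://example.com/deep/page` appears only in the Sitemap, which is the kind of deep URL the second channel is meant to surface.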

SEO Expert opinion

This statement confirms what field observation has shown for years. Sites with a solid link architecture see their new pages discovered within hours, while orphan pages often remain invisible even when they are listed in a Sitemap.

The important nuance is that discovery does not mean indexing. A Sitemap can accelerate discovery, but whether a page is actually indexed depends on content quality and on the authority passed through internal links. I regularly observe sites where 80% of the URLs in the Sitemap are never indexed.

For large-scale sites, the intelligent combination of both methods remains essential. Links ensure PageRank transmission, while the Sitemap secures the discovery of deep content within the site structure.

Practical impact and recommendations

- Audit your internal linking: ensure that every important page is accessible within a maximum of 3 clicks from the homepage

- Eliminate orphan pages by creating contextual links from your high-authority content

- Optimize your XML Sitemap by including only indexable, up-to-date and strategic URLs (no noindex pages, 404s or pages blocked by robots.txt)

- Segment your Sitemaps by content type for large sites (products, categories, articles)

- Monitor Search Console to identify URLs that have been discovered but not crawled, or crawled but not indexed

- Strengthen internal linking to your new pages as soon as they are published to accelerate their discovery

- Create a hub of recent content accessible from your main navigation to facilitate crawling of new pages
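
The first two recommendations can be checked with a short script. The sketch below assumes you already have an internal link graph exported from a crawl (a dictionary mapping each page to the pages it links to) and the list of URLs in your Sitemap. The graph, the URLs and the function names `click_depths` and `audit` are hypothetical. Here "orphan" means a URL listed in the Sitemap that cannot be reached by following links from the homepage.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from the homepage.

    Returns {url: minimum number of clicks from the homepage} for every
    page reachable through internal links.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit(links, home, sitemap_urls, max_depth=3):
    """Returns (pages deeper than max_depth, Sitemap URLs with no link path)."""
    depths = click_depths(links, home)
    too_deep = sorted(url for url, d in depths.items() if d > max_depth)
    orphans = sorted(set(sitemap_urls) - set(depths))
    return too_deep, orphans


# Hypothetical link graph and Sitemap content, for illustration only.
LINKS = {
    "/": ["/category", "/blog"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/category/page-3"],
    "/category/page-3": ["/product-42"],
    "/blog": [],
}
SITEMAP_URLS = ["/", "/category", "/blog", "/product-42", "/landing-old"]

too_deep, orphans = audit(LINKS, "/", SITEMAP_URLS)
print("Deeper than 3 clicks:", too_deep)
print("Orphans listed in the Sitemap:", orphans)
```

With this sample data, `/product-42` sits 4 clicks from the homepage and `/landing-old` has no inbound link path at all. Breadth-first search is used because it yields the shortest click path to each page, which is the figure the 3-click guideline refers to.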

Implementing an optimal discovery strategy requires a comprehensive view of your technical and editorial ecosystem. Between crawl analysis, internal linking optimization, Sitemap management and continuous monitoring, these optimizations require specialized expertise.

For complex or fast-growing sites, support from a specialized SEO agency allows you to quickly identify structural bottlenecks and deploy a high-performance discovery architecture tailored to your specific challenges.
