Official statement

What you need to understand

What does the date displayed in Google cache really represent?

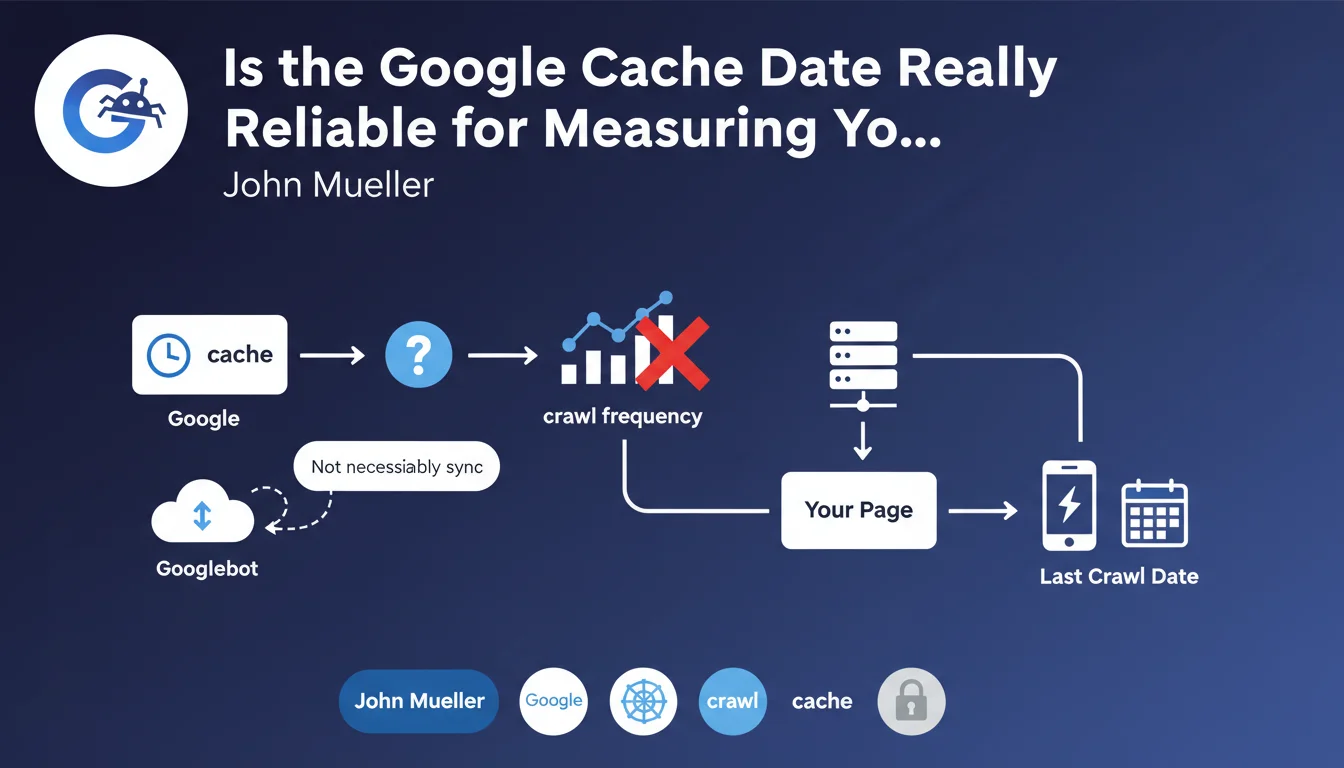

When an SEO practitioner uses the cache: operator to view a page's cached version, Google displays a date at the top of the page. This date has long been treated as an indicator of Googlebot's last visit to the page.

According to John Mueller's statement, this date is not necessarily representative of the actual last crawl. It could correspond to other technical events in Google's indexing process, without faithfully reflecting the crawler's activity.

Why does this clarification change our understanding of crawling?

This clarification undermines a common SEO practice: using the cache date as a crawl freshness metric. Many professionals relied on this indicator to judge how often Googlebot was visiting their pages.

Google actually maintains multiple versions of the same page in its infrastructure. The displayed date may correspond to a specific version cached for display to users, but not necessarily to the last complete analysis of the page.

- The cache date is not a reliable indicator of actual crawl frequency

- Google can crawl a page without updating the visible cached version

- Multiple versions of a page can coexist in Google's infrastructure

- This date may reflect other technical events besides crawling

What are the alternatives for measuring crawl effectively?

Faced with this limitation, SEO practitioners must turn to more reliable sources of information. Search Console remains the most reliable Google-provided tool for analyzing crawl activity.

Server logs also constitute an essential primary source for directly observing Googlebot's visits, with precise timestamps and information about accessed resources.
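To make the log-based approach concrete, here is a minimal sketch that reads access log lines and reports the most recent Googlebot request per URL. It assumes the Combined Log Format that Apache and Nginx commonly write; the sample lines, the `last_googlebot_visits` name, and the paths are hypothetical. It also trusts the user-agent string, which can be spoofed, so treat the result as a first pass rather than verified Googlebot traffic.

```python
import re
from datetime import datetime

# Combined Log Format (an assumption about the server configuration;
# adjust the pattern if your log format differs).
LOG_PATTERN = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<ts>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def last_googlebot_visits(lines):
    """Return {path: datetime} of the latest request whose user agent names Googlebot."""
    latest = {}
    for line in lines:
        match = LOG_PATTERN.match(line)
        # Skip unparseable lines and requests from other user agents.
        if not match or "Googlebot" not in match.group("agent"):
            continue
        ts = datetime.strptime(match.group("ts"), "%d/%b/%Y:%H:%M:%S %z")
        path = match.group("path")
        if path not in latest or ts > latest[path]:
            latest[path] = ts
    return latest


# Invented sample lines, for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Mar/2024:06:25:14 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [11/Mar/2024:09:00:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    f'66.249.66.1 - - [12/Mar/2024:03:10:02 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [11/Mar/2024:14:00:00 +0000] "GET /blog/post HTTP/1.1" 200 8042 "-" "{GOOGLEBOT_UA}"',
]

if __name__ == "__main__":
    for path, ts in sorted(last_googlebot_visits(SAMPLE_LOG).items()):
        print(path, ts.isoformat())
```

Comparing these timestamps with the cache date for the same URLs is a quick way to see the gap the article describes.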

SEO Expert opinion

Is this revelation consistent with field observations?

From an SEO expert's perspective, this statement confirms what many of us were already observing: blatant inconsistencies between the cache date and the crawl data in Search Console or server logs.

It's not uncommon to find that a page displays an old cache date while logs show daily Googlebot visits. Conversely, some pages with a recent cache date show no significant activity in Search Console's Crawl Stats.

What are the implications for indexing analysis?

This clarification highlights the complexity of Google's indexing system. The search engine doesn't function linearly: crawling, indexing, and caching are distinct and asynchronous processes.

Content can be crawled, analyzed, and taken into account in rankings without the public cache being updated. This decoupling explains why content changes can affect rankings before the cache date changes at all.

In which cases can this information still be useful?

Despite its limitations, the cache date retains some usefulness for detecting major indexing problems. If no cached version exists or if the date goes back several months on an active site, it's generally a sign of a malfunction.

It can also serve as a complementary indicator in a broader analysis, but never as a single or primary metric for assessing a site's crawl health.

Practical impact and recommendations

How can you effectively measure crawl frequency now?

The first concrete action is to prioritize Search Console as your primary source of information. The "Crawl Stats" report provides data on the number of crawl requests, the responses Googlebot received, and the errors it encountered.

Analyzing server log files should become a reflex for any professional site. This method offers a comprehensive and unfiltered view of Googlebot's actual activity, with all necessary technical details.

- Configure regular access to Search Console's "Crawl Stats" report

- Set up a server log analysis solution (Oncrawl, Botify, or custom scripts)

- Cross-reference data from multiple sources to get a complete picture

- Stop using cache date as a crawl performance metric

- Document observed crawl patterns to identify anomalies quickly
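As one way to document crawl patterns and spot anomalies, the sketch below counts Googlebot hits per day and flags days that fall well below the median. The function names, the 50% threshold, and the sample figures are all hypothetical; the input is assumed to be one `date` per Googlebot request, for example extracted from server logs.

```python
from collections import Counter
from datetime import date
from statistics import median


def daily_crawl_counts(visit_dates):
    """Count Googlebot requests per calendar day."""
    return Counter(visit_dates)


def flag_low_crawl_days(counts, ratio=0.5):
    """Return the days whose hit count is below `ratio` times the median day.

    Days with no hits at all never appear in `counts`, so a complete
    outage has to be detected separately (for example by checking for
    missing dates in the range).
    """
    if not counts:
        return []
    baseline = median(counts.values())
    return sorted(day for day, hits in counts.items() if hits < baseline * ratio)


# Invented sample: one date per Googlebot request.
SAMPLE_HITS = (
    [date(2024, 3, 1)] * 40
    + [date(2024, 3, 2)] * 42
    + [date(2024, 3, 3)] * 8
    + [date(2024, 3, 4)] * 38
)

if __name__ == "__main__":
    counts = daily_crawl_counts(SAMPLE_HITS)
    for day in flag_low_crawl_days(counts):
        print("Unusually low crawl activity on", day.isoformat(), "with", counts[day], "hits")
```

The median is used as the baseline here because a single quiet or very busy day distorts it less than it would distort an average.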

What interpretation errors should you absolutely avoid?

The most common mistake is to keep basing strategic decisions on the cache date. Don't panic if this date seems old while your other indicators are positive.

Also avoid over-interpreting variations in this date. A cache update doesn't necessarily mean improved crawling, and vice versa. Focus on reliable and actionable metrics.

What strategy should you adopt to optimize your site's crawl?

Beyond measurement, the goal remains to optimize your site's technical crawlability. Work on internal link structure, server response speed, and the quality of your robots.txt file and XML sitemap.

Invest in a clear information architecture that facilitates the discovery of your important content. Internal linking remains one of the most powerful levers for guiding Googlebot to your strategic pages.
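A quick sanity check on the robots.txt side of this work is to confirm that your strategic URLs are not accidentally blocked. The sketch below uses Python's standard `urllib.robotparser`; the rules, the `example.com` URLs, and the `blocked_for_agent` helper are hypothetical. The standard-library parser does simple prefix matching and may not reproduce every detail of how Google interprets robots.txt, so treat it as a rough check only.

```python
from urllib.robotparser import RobotFileParser


def blocked_for_agent(robots_txt, urls, agent="Googlebot"):
    """Return the URLs that the given robots.txt content disallows for `agent`."""
    parser = RobotFileParser()
    # parse() accepts the file content as a list of lines, so no network call is made.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


# Invented robots.txt content and URLs, for illustration only.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /cart/
Disallow: /search/
"""

SAMPLE_URLS = [
    "https://example.com/products/widget",
    "https://example.com/cart/checkout",
    "https://example.com/search/results",
]

if __name__ == "__main__":
    for url in blocked_for_agent(SAMPLE_ROBOTS, SAMPLE_URLS):
        print("Blocked:", url)
```

Running the same check against the URLs listed in your XML sitemap helps catch pages you are asking Google to crawl while also telling it not to.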
