Official statement

What you need to understand

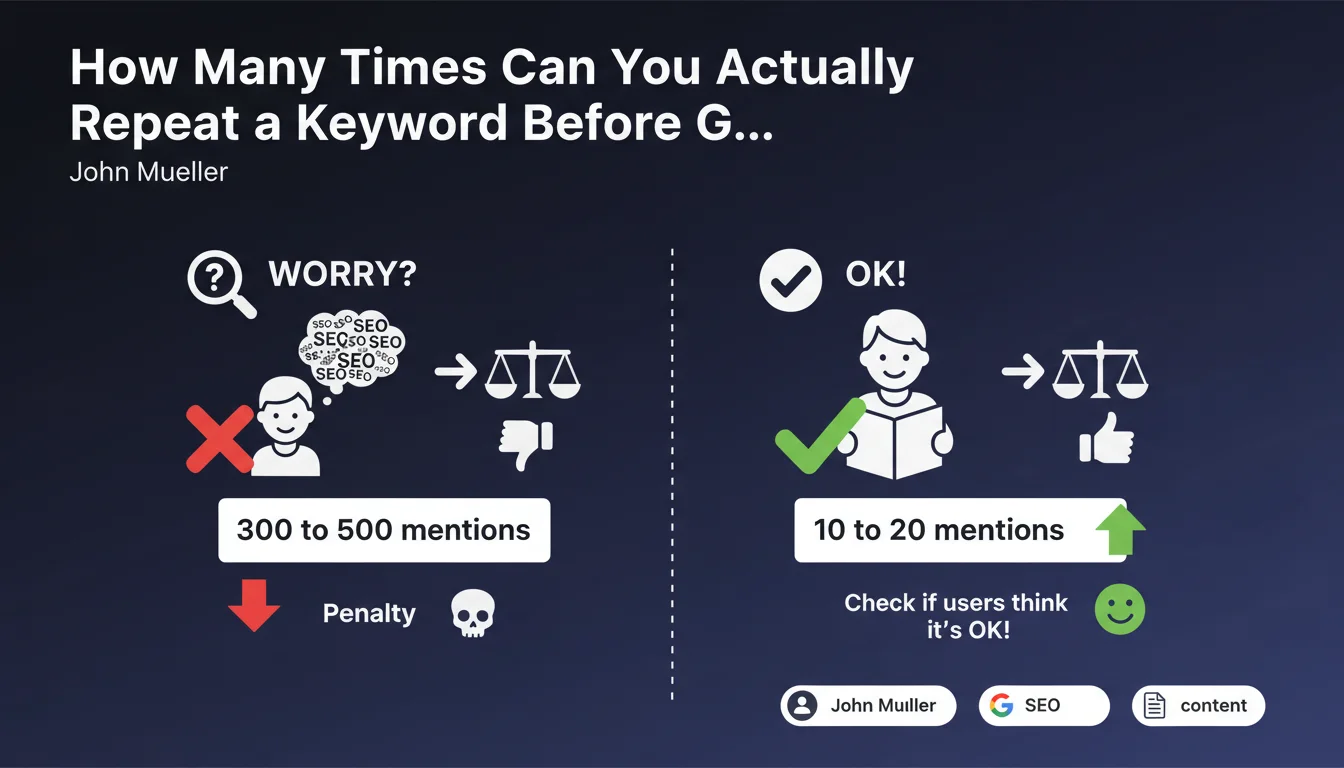

Keyword stuffing is a practice historically penalized by Google. It involves excessively repeating terms to manipulate rankings in search results.

John Mueller gives a rare quantitative benchmark here: Google starts to worry when a term appears 300 to 500 times on a single page. At around 10 to 20 occurrences, there would be no particular reason for concern from an SEO perspective.

This statement emphasizes that Google does not set a strict and universal limit. The algorithm analyzes context, content length, and especially user experience. A technical or specific term can naturally appear frequently without being considered spam.

- The alert threshold is between 300 and 500 mentions of the same term on a page

- 10 to 20 occurrences are generally considered acceptable

- Context and user experience take precedence over raw numbers

- Terms that have no suitable substitute can naturally appear more often

- Google evaluates overall relevance, not just keyword frequency
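As a rough illustration, the ranges quoted above can be expressed as a small lookup. This is a minimal Python sketch, not a model of how Google's algorithm works: the band names, the `classify_occurrences` function, and the decision to treat everything between 21 and 299 occurrences as "context-dependent" are assumptions made for illustration.

```python
from enum import Enum


class RepetitionBand(Enum):
    """Hypothetical labels for the ranges quoted in the statement."""
    NO_CONCERN = "no particular concern"
    CONTEXT_DEPENDENT = "judge by length and context"
    ALERT = "alert zone"


# Boundaries from the reported statement: rough guides, not hard limits.
NO_CONCERN_MAX = 20   # up to 10-20 occurrences: no particular concern
ALERT_MIN = 300       # Google starts to worry around 300-500 occurrences


def classify_occurrences(count: int) -> RepetitionBand:
    """Map a raw per-page count of one term to a rough band."""
    if count < 0:
        raise ValueError("count must be non-negative")
    if count <= NO_CONCERN_MAX:
        return RepetitionBand.NO_CONCERN
    if count >= ALERT_MIN:
        return RepetitionBand.ALERT
    return RepetitionBand.CONTEXT_DEPENDENT


if __name__ == "__main__":
    for n in (15, 80, 350):
        print(n, classify_occurrences(n).value)
```

Because the statement stresses context over raw numbers, the middle band is deliberately left as a judgment call rather than a verdict.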

SEO Expert opinion

This position from Google is consistent with field observations. Pages penalized for keyword stuffing typically show absurd levels of repetition that clearly harm readability. By contrast, a 2,000-word article that mentions its main subject 30 times remains within a natural density.

However, important nuances must be noted. For short content (less than 500 words), 20 occurrences may already seem excessive. Conversely, a 5,000-word technical guide on specific software can legitimately mention its name 50 to 80 times without issue.
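The examples in the two paragraphs above are easier to compare as keyword density, defined here (a common SEO convention, not a formula published by Google) as the number of occurrences n divided by the total word count W:

```latex
\begin{aligned}
d &= \frac{n}{W} \times 100\% \\
\text{2,000-word article, 30 mentions:}\quad d &= \tfrac{30}{2000} \times 100\% = 1.5\% \\
\text{500-word page, 20 mentions:}\quad d &= \tfrac{20}{500} \times 100\% = 4\% \\
\text{5,000-word guide, 50 to 80 mentions:}\quad d &= \tfrac{50}{5000} \times 100\% = 1\% \text{ to } \tfrac{80}{5000} \times 100\% = 1.6\%
\end{aligned}
```

The short page reaches 4% with only 20 mentions, which is why it can already feel excessive, while the long technical guide stays between 1% and 1.6% despite its much higher raw count.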

Warning: This tolerance does not apply to old spam techniques such as hidden text, keyword lists without added value, or abusive repetition in meta tags. Semantic context and user value remain determining factors.

The important thing is to prioritize natural reading. If your proofreaders find the repetition annoying, you've probably gone too far, even with only 15 occurrences. Google also analyzes synonyms and semantic fields, not just exact repetition.
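Readers tend to notice repetition when it clusters in one passage rather than when it is spread across a long page. One hypothetical way to surface passages worth rereading is a sliding-window check: flag any stretch of `window` words containing more than `max_hits` exact matches of a term. The function name and the defaults (50 words, 3 hits) are illustrative assumptions, and the check ignores synonyms and semantic fields, which, as noted above, Google also considers.

```python
import re

# Lowercase word tokens; apostrophes and hyphens stay inside words.
WORD_RE = re.compile(r"[a-z0-9]+(?:['-][a-z0-9]+)*")


def clustered_repetitions(text: str, term: str, window: int = 50, max_hits: int = 3) -> list[int]:
    """Return the word indices at which a flagged window begins: a span of
    fewer than `window` words holding more than `max_hits` exact matches.

    Single-word terms only; no stemming, no synonyms.
    """
    tokens = WORD_RE.findall(text.lower())
    target = term.lower()
    positions = [i for i, tok in enumerate(tokens) if tok == target]
    starts = set()
    left = 0
    for right in range(len(positions)):
        # Drop earlier matches until the span fits inside the window.
        while positions[right] - positions[left] >= window:
            left += 1
        if right - left + 1 > max_hits:
            starts.add(positions[left])
    return sorted(starts)


if __name__ == "__main__":
    sample = "shoes " * 5 + "filler " * 100 + "shoes"
    print(clustered_repetitions(sample, "shoes"))
```

In the sample, the five back-to-back mentions at the start are flagged, while the isolated final mention is not. A non-empty result points a proofreader to a passage; it says nothing about how Google will assess the page.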

Practical impact and recommendations

- Audit your existing content: identify pages with more than 50 repetitions of the same term in short content (less than 1,500 words)

- Use analysis tools: measure keyword density with plugins or SEO analyzers and aim for the commonly cited 1-3% range, an industry rule of thumb rather than a threshold published by Google

- Vary your vocabulary: use synonyms, related expressions, and enrich the semantic field around your topic

- Test readability: have your content proofread by external people to detect annoying repetitions

- Account for length: in long content (3,000+ words), a higher raw frequency becomes natural and acceptable

- Analyze context: certain technical terms, brand names, or specific concepts justify more frequent repetition

- Don't sacrifice clarity: if a precise term is the only appropriate one, use it rather than forcing artificial synonyms

- Monitor user signals: high bounce rate and low reading time may indicate over-optimized content
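The audit and density checks from the first two recommendations can be scripted. The following is a minimal Python sketch under stated assumptions: page text has already been extracted as plain text, the term is a single word, and the thresholds (more than 50 repetitions in under 1,500 words; density above 3%) are the rules of thumb from the list, not limits published by Google. `audit_page`, `AuditResult`, and the sample URLs are hypothetical.

```python
import re
from dataclasses import dataclass, field

WORD_RE = re.compile(r"[a-z0-9]+(?:['-][a-z0-9]+)*")

# Rules of thumb from the recommendations, not limits published by Google.
SHORT_PAGE_MAX_WORDS = 1500
SHORT_PAGE_MAX_HITS = 50
DENSITY_MAX_PCT = 3.0  # upper end of the 1-3% range


@dataclass
class AuditResult:
    url: str
    words: int
    hits: int
    density_pct: float
    flags: list[str] = field(default_factory=list)


def audit_page(url: str, text: str, term: str) -> AuditResult:
    """Count one single-word term on a page and flag likely over-repetition."""
    tokens = WORD_RE.findall(text.lower())
    words = len(tokens)
    hits = tokens.count(term.lower())
    density = round(100 * hits / words, 2) if words else 0.0
    result = AuditResult(url, words, hits, density)
    if words < SHORT_PAGE_MAX_WORDS and hits > SHORT_PAGE_MAX_HITS:
        result.flags.append("more than 50 repetitions in under 1,500 words")
    if density > DENSITY_MAX_PCT:
        result.flags.append(f"density {density}% above {DENSITY_MAX_PCT}%")
    # A density below 1% is not a stuffing signal, so it is not flagged.
    return result


if __name__ == "__main__":
    pages = {
        "/short-page": "shoes " * 60 + "word " * 1140,  # 1,200 words
        "/long-guide": "shoes " * 45 + "word " * 2955,  # 3,000 words
    }
    for url, text in pages.items():
        r = audit_page(url, text, "shoes")
        print(r.url, r.words, r.hits, f"{r.density_pct}%", r.flags or "ok")
```

A flagged page is a candidate for proofreading and vocabulary variation, not proof of a penalty. The long guide mentions its term 45 times yet stays at 1.5%, which matches the point that longer content naturally carries a higher raw count.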

The golden rule remains to prioritize user experience over pure optimization. Natural density, fluid writing, and genuinely informative content effectively protect against any suspicion of keyword stuffing.

Striking this balance between SEO optimization and editorial quality often requires deep expertise and continuous analysis: algorithms keep evolving, and each sector has its own specificities. For companies looking to maximize their visibility without risk, a specialized SEO agency can provide personalized audits, recommendations tailored to their context, and ongoing monitoring of changes in Google's quality criteria.
