Official statement

What you need to understand

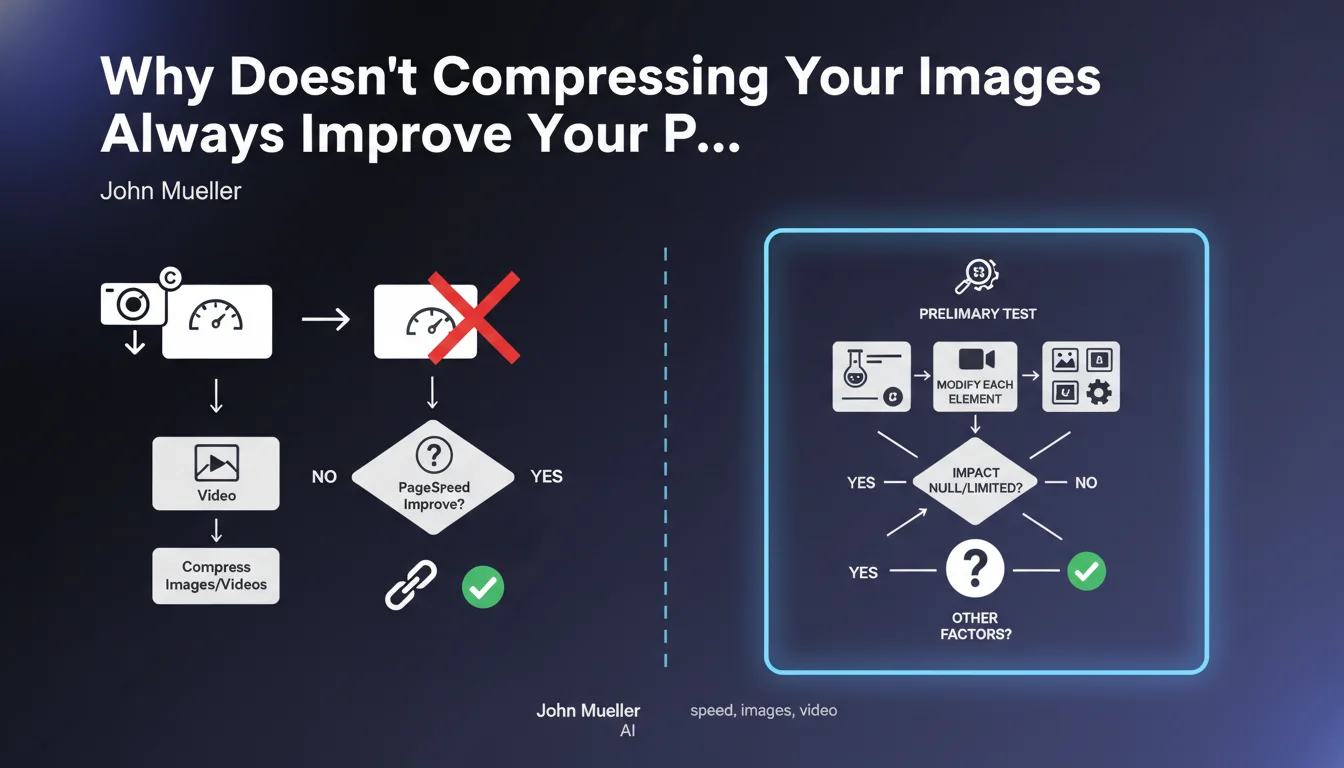

Google's John Mueller addresses a common problem encountered by SEO practitioners: aggressively compressing images and videos doesn't always deliver the expected performance gains in PageSpeed Insights.

This situation reveals a fundamental principle of web optimization: a page's performance depends on multiple interconnected factors. An isolated improvement can be masked by other bottlenecks present on the page.

Mueller recommends a methodical approach: test each optimization in isolation before deploying it on the actual page. This lets you pinpoint which elements are actually limiting performance.

- Optimizing images alone isn't always sufficient if other factors are blocking performance

- A process-of-elimination testing methodology is necessary to identify the real bottlenecks

- Isolated tests must be followed by tests on the complete page to validate the actual impact

- A "reasonable" speed may be sufficient: excessive optimization isn't always necessary
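
The process-of-elimination idea can be sketched in a few lines of Python. This is only an illustration, assuming you have already measured Largest Contentful Paint (LCP) on the original page and on test copies that each have one element removed. The `Variant` class, the `rank_bottlenecks` function, and every number below are hypothetical, not real measurements.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    """One test copy of the page with a single element removed."""
    removed: str    # hypothetical label for the removed element
    lcp_ms: float   # LCP measured on this copy, in milliseconds


def rank_bottlenecks(baseline_lcp_ms: float, variants: list[Variant]) -> list[tuple[str, float]]:
    """Return (element, LCP saved in ms) pairs, largest saving first."""
    savings = [(v.removed, baseline_lcp_ms - v.lcp_ms) for v in variants]
    return sorted(savings, key=lambda pair: pair[1], reverse=True)


# Illustrative numbers only (assumed, not measured on any real site).
variants = [
    Variant("all images", 4100.0),
    Variant("chat widget", 2900.0),
    Variant("web fonts", 3800.0),
]
ranking = rank_bottlenecks(4200.0, variants)
for name, saved in ranking:
    print(f"{name}: {saved:.0f} ms saved")
```

In this made-up scenario, removing the already-compressed images barely moves LCP, while removing the chat widget saves far more, which is the kind of masked bottleneck the article describes.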

SEO expert opinion

This recommendation from Mueller is consistent with field observations. In SEO audits, we regularly see sites that have aggressively compressed their media yet still see their scores stagnate because of render-blocking JavaScript, poorly optimized web fonts, or a slow server.

The notion of "reasonable speed" deserves attention. Google doesn't require a perfect score of 100/100 on PageSpeed Insights. The obsession with a perfect score can lead to time-consuming optimizations for marginal gains in actual user experience.

The process-of-elimination approach often reveals surprises: a third-party widget, a poorly coded plugin, or even a redirect chain can cancel out all your image optimization efforts. This is why a holistic view of performance is essential.

Practical impact and recommendations

- Create isolated test pages for each type of optimization (images, CSS, JavaScript) before deploying to production

- Measure the individual impact of each optimization with PageSpeed Insights and tools like WebPageTest

- Identify limiting factors by creating a copy of your actual page and removing elements one at a time (scripts, fonts, widgets), re-measuring after each removal

- Prioritize optimizations based on their actual impact, not on their ease of implementation

- Analyze third-party JavaScript (analytics, chat, advertising), which is often the real bottleneck

- Check server response time (Time to First Byte, TTFB), which can negate all your front-end optimizations

- Don't aim for perfection: a PageSpeed Insights score of 75-85 combined with good Core Web Vitals in field data is generally sufficient

- Focus your efforts on strategic high-traffic pages rather than the entire site

- Use field data (Search Console, CrUX) alongside lab tests rather than relying on lab tests alone
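
As a rough illustration of comparing lab and field data, the sketch below builds a request URL for the PageSpeed Insights API (v5) and pulls a few values out of its JSON response. The helper names (`build_psi_url`, `summarize`, `fetch_report`) and the sample values are assumptions made for this example. The field names follow the v5 response format as I understand it, so check them against the current API reference before relying on them.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def build_psi_url(page_url: str, strategy: str = "mobile") -> str:
    """Build the request URL for one page and one strategy (mobile or desktop)."""
    return f"{PSI_ENDPOINT}?{urlencode({'url': page_url, 'strategy': strategy})}"


def summarize(report: dict) -> dict:
    """Extract the lab score, lab server response time, and field LCP, if present."""
    lighthouse = report.get("lighthouseResult", {})
    score = lighthouse.get("categories", {}).get("performance", {}).get("score")
    audits = lighthouse.get("audits", {})
    server_ms = audits.get("server-response-time", {}).get("numericValue")
    field = report.get("loadingExperience", {}).get("metrics", {})
    lcp = field.get("LARGEST_CONTENTFUL_PAINT_MS", {})
    return {
        "lab_score": None if score is None else round(score * 100),
        "lab_server_response_ms": server_ms,
        "field_lcp_ms": lcp.get("percentile"),      # percentile value reported from CrUX
        "field_lcp_category": lcp.get("category"),  # e.g. FAST, AVERAGE, SLOW
    }


def fetch_report(page_url: str, strategy: str = "mobile") -> dict:
    """Call the API over the network; heavy use may require an API key."""
    with urlopen(build_psi_url(page_url, strategy), timeout=60) as response:
        return json.load(response)


# Hand-written sample in the shape of a v5 response (values are made up).
sample = {
    "lighthouseResult": {
        "categories": {"performance": {"score": 0.82}},
        "audits": {"server-response-time": {"numericValue": 640.0}},
    },
    "loadingExperience": {
        "metrics": {"LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 2300, "category": "FAST"}}
    },
}
summary = summarize(sample)
print(summary)
```

To run it against a live page, pass the result of `fetch_report("https://example.com/")` to `summarize`. A page with little traffic may have no field data at all, in which case the field values come back as `None`.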
