Official statement

What you need to understand

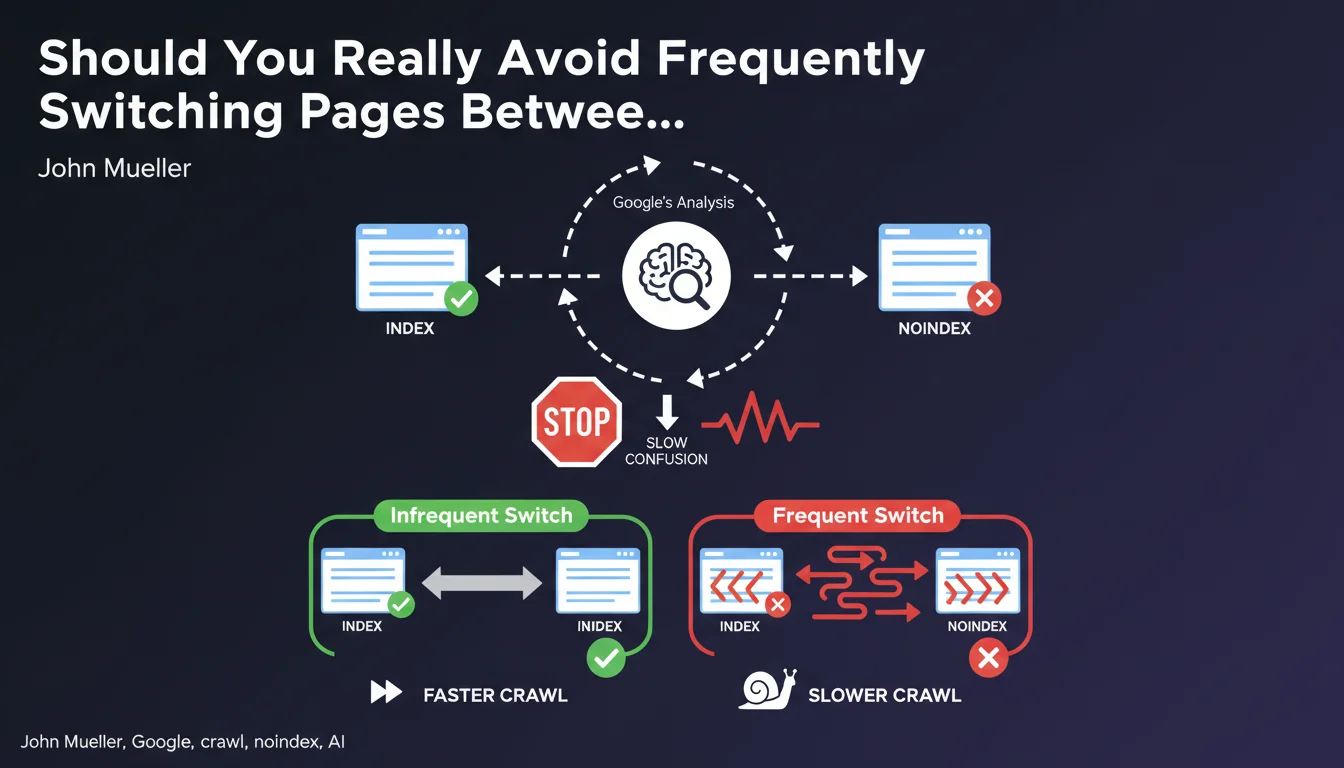

Why Does Google Warn Against Frequent Index/Noindex Status Changes?

Google explains that repeated alternation between indexed and deindexed states for the same URL creates algorithmic confusion. The search engine can no longer determine the site owner's real intention.

This uncertainty prompts Google to slow down the crawl of these pages, as it interprets these changes as a lack of editorial stability. Crawl resources are then reallocated to content deemed more stable.

What Situations Typically Cause These Switches?

The most common case involves e-commerce products that are out of stock. Some sites automatically deindex these pages, then reindex them when restocked.

Other situations include seasonal content, recurring events, or pages whose relevance varies according to temporary business criteria. These practices seem logical from an editorial standpoint but pose technical problems.

What Are the Concrete Risks for SEO?

- Crawl slowdown: Google reduces the visit frequency of these unstable URLs

- Loss of rankings: Reindexed pages don't immediately regain their previous visibility

- Crawl budget dilution: Resources are wasted on pages with uncertain status

- Degraded quality signal: Instability can be interpreted as lack of professionalism

- Slowed indexing: New versions take longer to be taken into account

SEO Expert opinion

Is This Recommendation Consistent With Field Observations?

Yes. Log analyses regularly show a correlation between unstable indexability and declining crawl frequency. Google places more trust in sites that maintain clear, stable directives.

E-commerce sites that cycle between noindex and index often see abnormally long reindexing delays, sometimes several weeks. This is not a manual penalty but an algorithmic effect: the site loses crawl priority.

What Nuances Should Be Added to This Directive?

The notion of "too often" remains deliberately vague. An occasional change (once or twice a year) generally doesn't pose a problem. It's the monthly or weekly recurrence that becomes problematic.

You must also distinguish between massive changes and isolated modifications. Switching 5,000 URLs simultaneously every month will have a much more negative impact than a few individual pages.

In Which Exceptional Cases Can This Rule Be Relaxed?

For news or event sites, Google better understands the temporary nature of content. The editorial logic justifies certain variations.

Sites with well-established authority and a comfortable crawl budget suffer fewer consequences. Their crawl generally remains a priority despite some inconsistencies.

Practical impact and recommendations

What Should You Do Concretely to Manage Out-of-Stock Products?

The recommended solution is to keep the page indexed but modify its content. Clearly indicate the temporary stock shortage and offer alternatives (similar products, back-in-stock notification).

For permanent stock shortages, prefer a 301 redirect to a relevant category rather than a noindex. This approach preserves link equity and maintains user experience.

If noindex is truly necessary, ensure the page remains deindexed long enough (minimum 3-6 months) before any reindexing. Avoid short cycles.
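As a rough illustration of the rules above, here is a minimal Python sketch of the decision a product template could make. The names `Stock`, `PageDecision`, and `decide_page` are hypothetical and not tied to any particular CMS; the point is only that a temporary shortage never touches the robots directive, while a permanent one results in a 301.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stock(Enum):
    IN_STOCK = "in_stock"
    TEMPORARILY_OUT = "temporarily_out"
    DISCONTINUED = "discontinued"


@dataclass
class PageDecision:
    status_code: int
    robots: Optional[str]       # robots meta value; None when the page redirects
    redirect_to: Optional[str]  # target of the 301, if any
    banner: Optional[str]       # message shown to visitors, if any


def decide_page(stock: Stock, category_url: str) -> PageDecision:
    """Map a stock state to an indexing decision (illustrative policy only)."""
    if stock is Stock.DISCONTINUED:
        # Permanent shortage: redirect to a relevant category instead of noindex.
        return PageDecision(301, None, category_url, None)

    banner = None
    if stock is Stock.TEMPORARILY_OUT:
        # Temporary shortage: change the content, not the indexing directive.
        banner = "Temporarily out of stock. See similar products or request a back-in-stock alert."
    return PageDecision(200, "index, follow", None, banner)
```

With this shape, the robots value is identical for in-stock and temporarily unavailable products, so restocking never produces an index/noindex switch.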

What Technical Mistakes Should You Absolutely Avoid?

- Never automate index/noindex switches based solely on available stock

- Avoid combining noindex with a canonical tag pointing to another URL (contradictory signals)

- Don't use noindex as a temporary solution to hide low-quality content

- Verify that JavaScript doesn't dynamically modify the robots meta tag depending on the session

- Ensure the robots.txt file doesn't block noindexed URLs (Google must be able to crawl them to see the directive)

- Regularly audit logs to detect pages with unstable status
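Two of the mistakes listed above (noindex combined with a canonical to another URL, and noindex on a URL blocked by robots.txt) can be detected automatically. The sketch below uses only the Python standard library and assumes you already have the page HTML and the robots.txt content as strings; `find_conflicts` is a hypothetical helper name, and the check covers only the robots meta tag, not the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


class _HeadSignals(HTMLParser):
    """Collects the noindex directive and the canonical URL from a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        a = {k: (v or "") for k, v in attrs}
        if tag == "meta" and a.get("name", "").lower() in ("robots", "googlebot"):
            if "noindex" in a.get("content", "").lower():
                self.noindex = True
        elif tag == "link" and "canonical" in a.get("rel", "").lower().split():
            self.canonical = a.get("href") or None


def find_conflicts(url, html, robots_txt, user_agent="Googlebot"):
    """Return a list of contradictory indexing signals found for one URL."""
    signals = _HeadSignals()
    signals.feed(html)

    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())

    problems = []
    if signals.noindex and not rules.can_fetch(user_agent, url):
        # The crawler cannot fetch the page, so it never sees the noindex.
        problems.append("noindex on a URL blocked by robots.txt")
    if signals.noindex and signals.canonical and signals.canonical != url:
        problems.append("noindex combined with canonical to another URL")
    return problems
```

Running this over a crawl export gives a quick list of URLs to fix before looking at anything more subtle.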

How Can You Check and Fix Existing Issues on Your Site?

Start with a historical audit of indexation directives. Analyze your crawl logs over 6-12 months to identify URLs that have frequently changed status. Search Console can also reveal pages oscillating between "indexed" and "excluded".
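One possible way to run this audit is to count how many times each URL changed state over the period. The sketch below assumes you can export observations as `(date, url, is_indexable)` tuples, for example from periodic crawls; the function names and the `max_flips=2` threshold are illustrative assumptions, loosely based on the idea that one or two changes a year are generally harmless.

```python
from collections import defaultdict


def count_flips(observations):
    """Count index/noindex transitions per URL.

    observations: iterable of (iso_date, url, is_indexable) tuples,
    e.g. ("2024-03-01", "/p/red-shoes", True).
    """
    history = defaultdict(list)
    # ISO dates sort chronologically as plain strings.
    for _day, url, indexable in sorted(observations):
        history[url].append(indexable)

    return {
        url: sum(1 for prev, cur in zip(states, states[1:]) if prev != cur)
        for url, states in history.items()
    }


def unstable_urls(observations, max_flips=2):
    """Return URLs whose status changed more than max_flips times."""
    flips = count_flips(observations)
    return sorted(url for url, n in flips.items() if n > max_flips)
```

The threshold should be tuned to the length of the audit window: three flips in twelve months is a very different signal from three flips in three weeks.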

Create a clear editorial policy defining when to use noindex, when to redirect, and when to keep a page indexed with modified content. Document these rules for the entire technical and editorial team.

For complex sites with thousands of products or dynamic content, establishing a coherent strategy and monitoring its proper application can prove particularly tricky. These optimizations often affect multiple systems (CMS, ERP, scripts) and require precise technical coordination. In this context, engaging a specialized SEO agency allows you to benefit from cross-functional expertise and personalized support to implement robust and sustainable processes.
