Official statement

What you need to understand

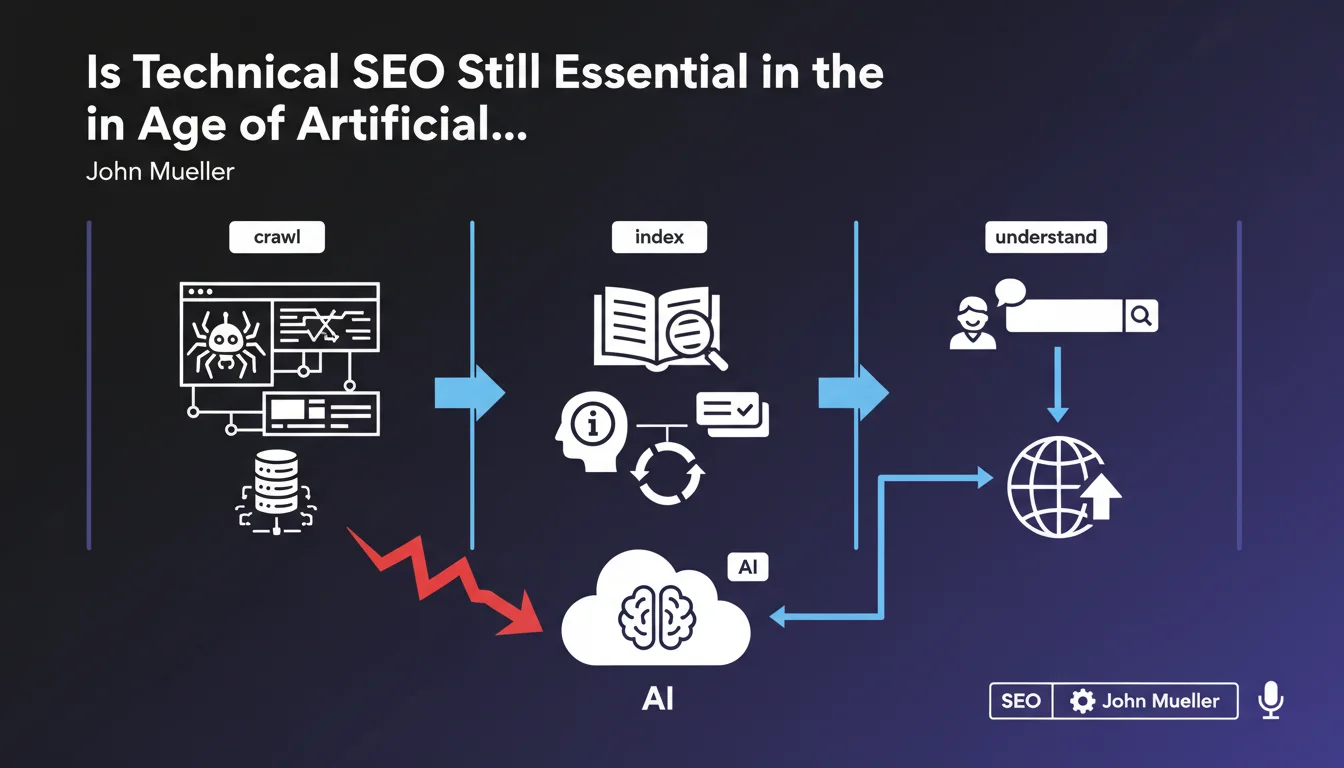

John Mueller provides an important clarification by distinguishing two concepts that are often confused: programmatic SEO (automated page creation) and technical SEO (site structure optimization).

His statement confirms that technical SEO remains fundamental, even with the evolution of AI-based algorithms. The argument is simple but powerful: no artificial intelligence, however sophisticated, can access content that is not technically accessible.

Concretely, the technical fundamentals are not obsolete. Google's AI does not remove the need for a properly structured site, which still has to meet four requirements:

- Crawling: robots must be able to browse your pages

- Indexing: your content must be eligible for inclusion in Google's index

- Understanding: the structure must facilitate content interpretation

- Accessibility: an inaccessible site remains invisible, regardless of the AI used

This statement comes at a time when some professionals were wondering whether generative AI would make technical SEO obsolete. Google's answer is clear: no.

SEO Expert opinion

This position is fully consistent with what we observe in the field. Sites with excellent content but a deficient technical architecture continue to underperform in search results.

Google's AI, particularly with algorithms like BERT or MUM, does improve semantic understanding of content. But these systems still rely on successful crawling and indexing. AI analyzes what is accessible; it does not guess what is hidden.

An important nuance: AI can sometimes compensate for certain minor weaknesses in semantic markup. For example, it will understand that content is about "running shoes" even without perfect schema.org markup. But it will not bypass a robots.txt file that blocks crawling, nor will it index a page marked noindex.
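The two hard blockers mentioned above can be checked with a few lines of Python. The following is a minimal sketch using only the standard library, not a reproduction of Google's own parser: `is_allowed_by_robots` and `has_noindex` are hypothetical helper names, the robots.txt matching follows Python's `urllib.robotparser` rules (which may differ from Googlebot's handling of wildcards), and the check ignores the `X-Robots-Tag` HTTP header, which can also carry a noindex directive.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def is_allowed_by_robots(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


class _MetaRobotsParser(HTMLParser):
    """Collects directives from <meta name="robots"> / <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in {"robots", "googlebot"}:
            for token in attr.get("content", "").split(","):
                self.directives.add(token.strip().lower())


def has_noindex(html):
    """Return True if the page's meta robots tags ask not to be indexed."""
    parser = _MetaRobotsParser()
    parser.feed(html)
    # "none" is shorthand for "noindex, nofollow".
    return "noindex" in parser.directives or "none" in parser.directives


if __name__ == "__main__":
    # Illustrative inputs only; example.com and the rules are made up.
    robots = "User-agent: *\nDisallow: /private/\n"
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(is_allowed_by_robots(robots, "https://example.com/private/report"))
    print(has_noindex(page))
```

Both helpers work on text you have already downloaded, so the same checks can run against a local crawl export without making network requests.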

Practical impact and recommendations

This confirmation from Google should encourage you to maintain your investments in the technical infrastructure of your sites, without letting up.

- Regularly audit your crawlability: check robots.txt files, meta robots tags, and internal link structure

- Optimize your crawl budget: eliminate unnecessary pages, fix redirect chains, resolve 404 errors

- Ensure controlled indexing: use indexing directives correctly and monitor coverage reports in Search Console

- Improve loading speed: Core Web Vitals remain important signals, and AI does not compensate for a slow site

- Structure your data: semantic markup (schema.org) helps AI better understand your content

- Guarantee mobile accessibility: with mobile-first indexing, Google primarily crawls and indexes the smartphone version of your pages, so that version must be technically sound

- Monitor server logs: analyze how Googlebot actually crawls your site

- Maintain a logical architecture: a clear hierarchy facilitates understanding by AI algorithms
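As an illustration of the server log recommendation in the list above, here is a minimal Python sketch that counts Googlebot requests by HTTP status and by path. It assumes access logs in the common Apache/Nginx "combined" format, which may not match your server's configuration, and `summarize_googlebot_hits` is a hypothetical helper name. It identifies Googlebot by user-agent string only; that string can be spoofed, and verifying the requesting IP is outside the scope of this sketch.

```python
import re
from collections import Counter

# Assumed "combined" log format:
# IP ident user [time] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_googlebot_hits(lines):
    """Count requests whose user agent mentions Googlebot, by status and by path."""
    by_status = Counter()
    by_path = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match is None or "Googlebot" not in match.group("agent"):
            continue  # skip unparseable lines and non-Googlebot traffic
        by_status[int(match.group("status"))] += 1
        by_path[match.group("path")] += 1
    return by_status, by_path


if __name__ == "__main__":
    # Made-up sample lines for illustration only.
    sample = [
        '66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /blog/post HTTP/1.1" '
        '200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; '
        '+http://www.google.com/bot.html)"',
        '66.249.66.1 - - [10/Oct/2024:13:56:02 +0000] "GET /old-page HTTP/1.1" '
        '404 312 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; '
        '+http://www.google.com/bot.html)"',
    ]
    statuses, paths = summarize_googlebot_hits(sample)
    print(dict(statuses))
    print(paths.most_common(5))
```

A high share of 404 or redirect responses in the status counts is one sign that crawl budget is being spent on pages that no longer serve content.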
