Official statement

What you need to understand

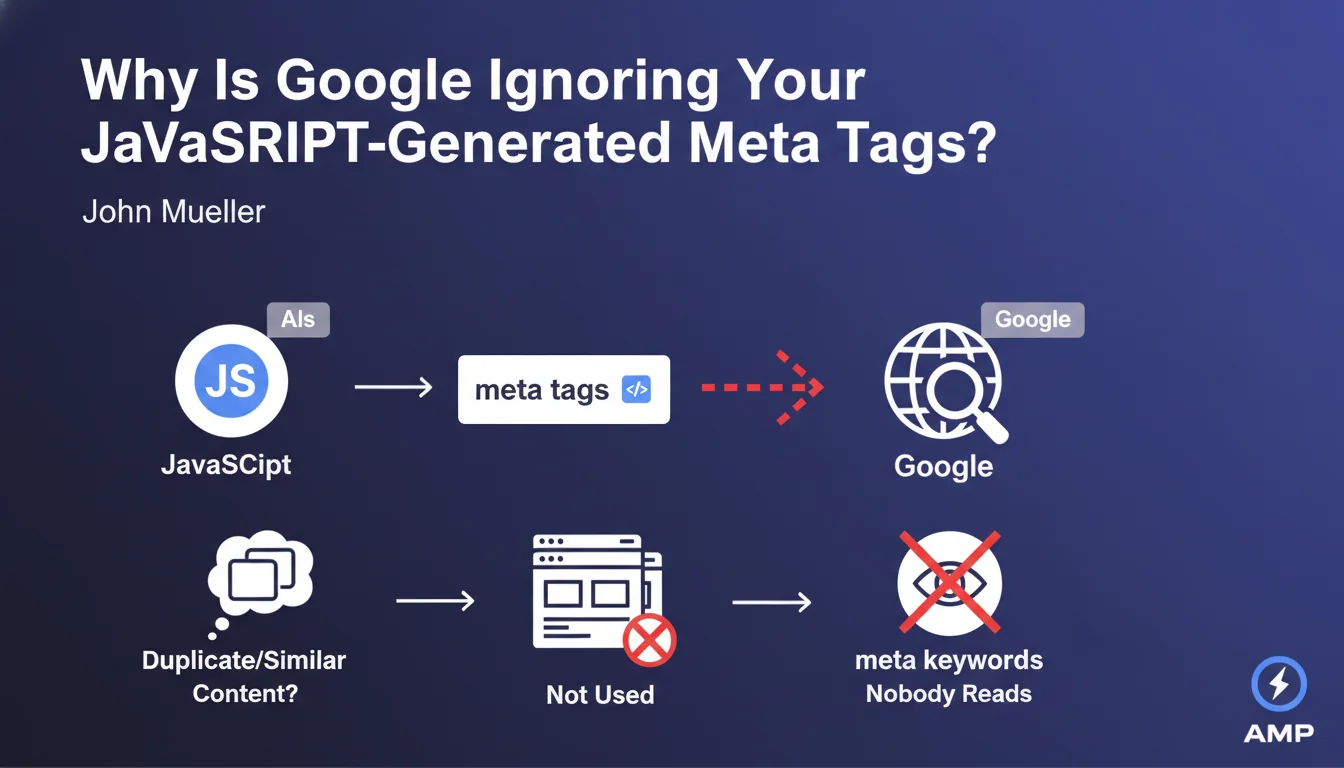

John Mueller answered a question about indexing dynamic meta tags generated via JavaScript. His response highlights two common problems: meta descriptions that are identical across every page of a site, and descriptions that vary so little from page to page that the differences are meaningless.

This statement reminds us that while Google can render and index JavaScript-generated content, the problem is often not technical. Rather, it's a matter of the quality and uniqueness of the meta tag content itself.

Mueller takes this opportunity to reiterate a long-established fact: the meta keywords tag has no value whatsoever for Google SEO. Google ignores it, and users never see it.

- Meta descriptions must be unique for each page

- The generation method (static or JavaScript) is not the main issue

- Google can render and index JavaScript-generated tags, but content quality matters more than the delivery method

- The meta keywords tag is completely obsolete and useless

- Meta descriptions that are too similar to one another are treated as duplicates
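To make "too similar" concrete, here is a minimal sketch that scores pairs of descriptions with Python's standard `difflib.SequenceMatcher`. The 0.9 threshold, the `near_duplicates` helper, and the sample URLs are assumptions chosen for illustration; Google does not publish the similarity measure it uses.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> float:
    """Return a 0..1 ratio of how alike two descriptions are (case-insensitive)."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def near_duplicates(descriptions: dict[str, str], threshold: float = 0.9):
    """Return (url_a, url_b, ratio) for every pair at or above the threshold.

    The threshold is an arbitrary illustrative value, not a Google rule.
    """
    pairs = []
    for (url_a, desc_a), (url_b, desc_b) in combinations(descriptions.items(), 2):
        ratio = similarity(desc_a, desc_b)
        if ratio >= threshold:
            pairs.append((url_a, url_b, round(ratio, 2)))
    return pairs


# Hypothetical sample data: the first two differ by a single word.
descriptions = {
    "/shoes/red": "Buy red shoes online. Free shipping and returns on all orders.",
    "/shoes/blue": "Buy blue shoes online. Free shipping and returns on all orders.",
    "/about": "Learn who we are, where we work, and how our team tests every product.",
}

for url_a, url_b, ratio in near_duplicates(descriptions):
    print(f"{url_a} and {url_b} look nearly identical (ratio {ratio})")
```

Pairwise comparison grows quadratically with the number of pages, so on a large site it is probably more practical to run it per template or per category than across the whole site at once.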

SEO Expert opinion

This statement is consistent with what we observe daily on our clients' websites. Google often generates its own snippets when it detects duplicate or irrelevant meta descriptions, even if they are technically present in the code.

It's interesting to note that Mueller shifts attention away from the technical issue (JavaScript) to the editorial problem (content uniqueness). This reflects Google's evolution: the ability to index JavaScript has improved, but SEO fundamentals remain paramount.

Regarding meta keywords, it's a useful reminder. Even so, in 2024 we still see many CMSs generating the tag by default, and teams spending time filling in a field that has no effect.

Practical impact and recommendations

- Audit your existing meta descriptions to identify duplications or excessive similarities

- Create unique meta descriptions for each important page (aim for roughly 120 to 160 characters, since longer text is likely to be truncated in the snippet)

- Remove the meta keywords tag from all your templates and generation systems

- If you dynamically generate your metas, verify that the logic produces substantial variations, not just cosmetic ones

- Test the JavaScript rendering of your pages with the URL Inspection tool in Search Console to confirm that Google actually sees your tags

- Prioritize strategic pages: better to have 100 perfect meta descriptions than 10,000 mediocre automatically generated ones

- Monitor your snippets in the SERPs: if Google systematically replaces your meta descriptions with its own text, they are probably not relevant enough to the queries the page ranks for

- Document your templates to prevent future updates from reintroducing obsolete tags
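Several of the checks above (exact duplicates, length, leftover meta keywords) can be scripted. The following is a minimal sketch using only Python's standard library; `MetaTagParser`, `audit`, and the `pages` mapping of URL to HTML are hypothetical names, and the 120 to 160 character bounds are this article's guideline rather than a Google rule. For JavaScript-generated tags, the input should be the rendered HTML (for example, copied from the URL Inspection tool), not the raw server response.

```python
from collections import defaultdict
from html.parser import HTMLParser


class MetaTagParser(HTMLParser):
    """Collect the meta description and note whether a meta keywords tag exists."""

    def __init__(self):
        super().__init__()
        self.description = None
        self.has_keywords = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if name == "description":
            self.description = (attr.get("content") or "").strip()
        elif name == "keywords":
            self.has_keywords = True


def audit(pages, min_len=120, max_len=160):
    """Return a list of (url, issue) tuples for a {url: rendered_html} mapping."""
    by_description = defaultdict(list)
    issues = []
    for url, html in pages.items():
        parser = MetaTagParser()
        parser.feed(html)
        if parser.has_keywords:
            issues.append((url, "meta keywords present"))
        desc = parser.description
        if not desc:
            issues.append((url, "missing description"))
            continue
        if not min_len <= len(desc) <= max_len:
            issues.append((url, f"length {len(desc)} outside {min_len}-{max_len}"))
        # Group case-insensitively so trivial casing changes still count as duplicates.
        by_description[desc.lower()].append(url)
    for urls in by_description.values():
        if len(urls) > 1:
            issues.append((", ".join(urls), "duplicate description"))
    return issues
```

Calling `audit(pages)` on a sample of rendered pages from each template returns a flat list of issues that can be sorted by URL or by issue type. This only catches exact duplicates; near-duplicates need a similarity measure on top.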

These optimizations often require a redesign of your site's editorial and technical architecture. If you manage a site with several thousand pages or if your technical stack is complex (JavaScript frameworks, headless CMS), implementing a coherent strategy can prove challenging. Working with an experienced SEO agency allows you to precisely audit your situation, prioritize actions based on their potential ROI, and implement intelligent automated processes that guarantee the uniqueness and relevance of your meta tags in the long term.
