Official statement

What you need to understand

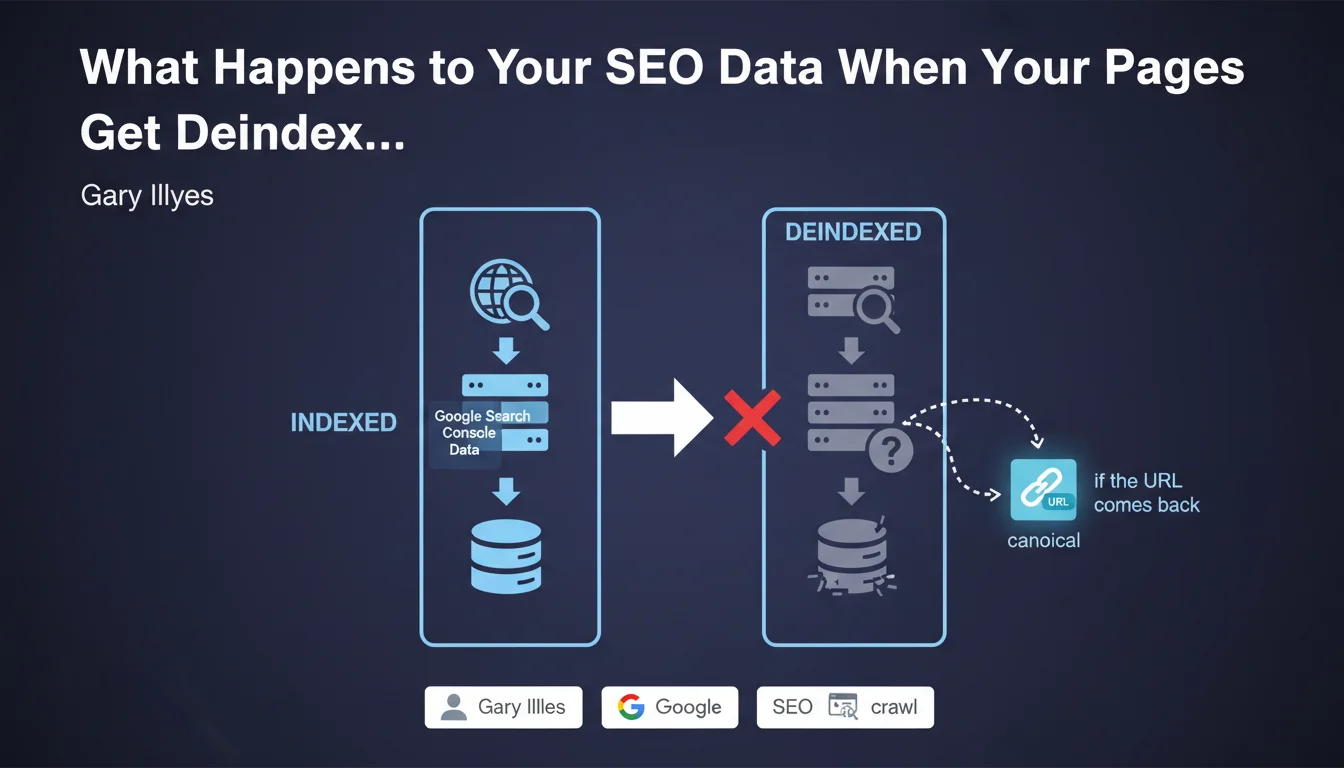

Google Search Console is the primary tool for monitoring a site's SEO health. But what happens to the data when your pages disappear from the index?

According to Google's Gary Illyes, Search Console retains virtually no data on deindexed or non-indexed pages. This means that when a URL moves from "indexed" to "Crawled - currently not indexed," you lose its performance history.

However, there is one notable exception: Google retains certain information "if the URL comes back." This suggests that a minimal tracking mechanism persists so that Google can recognize a URL that is later reindexed.

- Performance data disappears when a page is no longer indexed

- Google does not store complete history for URLs in "not indexed" status

- A residual mechanism allows recognition of URLs that return to the index

- This logic also applies to canonical URL changes

SEO Expert opinion

This statement matches what we observe in the field. Click and impression data evaporates quickly once a page leaves the index, which complicates post-mortem diagnosis.

The important nuance concerns temporarily deindexed pages. If your page is deindexed because of a technical issue that you fix quickly, Google appears able to "recognize" the URL when it is reindexed. This would explain why some pages recover their rankings relatively quickly.

For sites with massive indexation problems, this makes historical auditing particularly difficult. You must capture the data at the moment the problem occurs, not after the fact.
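Capturing the data while it still exists can be as simple as writing each export to a dated file. The sketch below is one possible approach, not an official method: it assumes you have already fetched per-page rows in the shape the Search Console API returns for a Search Analytics query (a `keys` list plus `clicks`, `impressions`, `ctr`, and `position`). The function name `archive_rows` and the file naming scheme are hypothetical.

```python
import csv
from datetime import date
from pathlib import Path


def archive_rows(rows, out_dir, snapshot_date=None):
    """Write one dated CSV per snapshot so the history survives outside GSC.

    `rows` is assumed to be a list of dicts shaped like Search Analytics
    rows queried with the "page" dimension: {"keys": [url], "clicks": ...}.
    """
    snapshot_date = snapshot_date or date.today()
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)

    # Hypothetical naming scheme: one file per snapshot date.
    path = out_dir / f"gsc-pages-{snapshot_date.isoformat()}.csv"
    with path.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.writer(fh)
        writer.writerow(["page", "clicks", "impressions", "ctr", "position"])
        for row in rows:
            writer.writerow([
                row["keys"][0],
                row["clicks"],
                row["impressions"],
                row["ctr"],
                row["position"],
            ])
    return path
```

Because each run produces a separate file, a page that later drops out of the index still has its last known clicks and impressions on disk.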

Practical impact and recommendations

- Export your GSC data regularly (at least monthly) to maintain a complete history of all your indexed URLs

- Set up automatic alerts for drops in indexed URLs so you can react before the data is lost

- Systematically document the indexation status of your important pages in an external dashboard

- If a page is deindexed, immediately capture all available data in GSC before it disappears

- Use third-party monitoring tools (crawlers, rank trackers) to maintain a history independent of Google

- Prioritize prevention: regularly audit your site to identify deindexation risks before they occur

- Create backups of your coverage reports so you can compare how they change over time
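The alerting and comparison steps above can be sketched with two small helpers. This is an illustration under stated assumptions: the function names are hypothetical, the inputs are lists of URLs and counts taken from your own archived exports, and the 10% default threshold is an arbitrary starting point rather than a recommended value.

```python
def missing_urls(previous, current):
    """Return URLs present in the earlier snapshot but absent from the later one."""
    return sorted(set(previous) - set(current))


def indexed_drop_alert(previous_count, current_count, threshold=0.10):
    """Return True when the indexed count fell by more than `threshold`.

    `threshold` is a fraction of the previous count (0.10 means 10%).
    """
    if previous_count <= 0:
        # No baseline to compare against, so no alert.
        return False
    drop = (previous_count - current_count) / previous_count
    return drop > threshold


# Example with made-up snapshots from two consecutive exports.
last_month = ["https://example.com/a", "https://example.com/b", "https://example.com/c"]
this_month = ["https://example.com/a", "https://example.com/c"]

if indexed_drop_alert(len(last_month), len(this_month)):
    for url in missing_urls(last_month, this_month):
        print("No longer in export:", url)
```

Running a check like this after every export points you to the affected URLs while their last snapshot is still recent.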

These monitoring and archiving processes represent a significant technical and organizational investment. Setting up a robust monitoring infrastructure requires specific skills in data extraction, automation, and advanced SEO analysis. For companies managing medium or large-scale sites, working with a specialized SEO agency provides access to proven systems and in-depth expertise, while freeing your internal teams to focus on content strategy and optimization.
