Official statement

What you need to understand

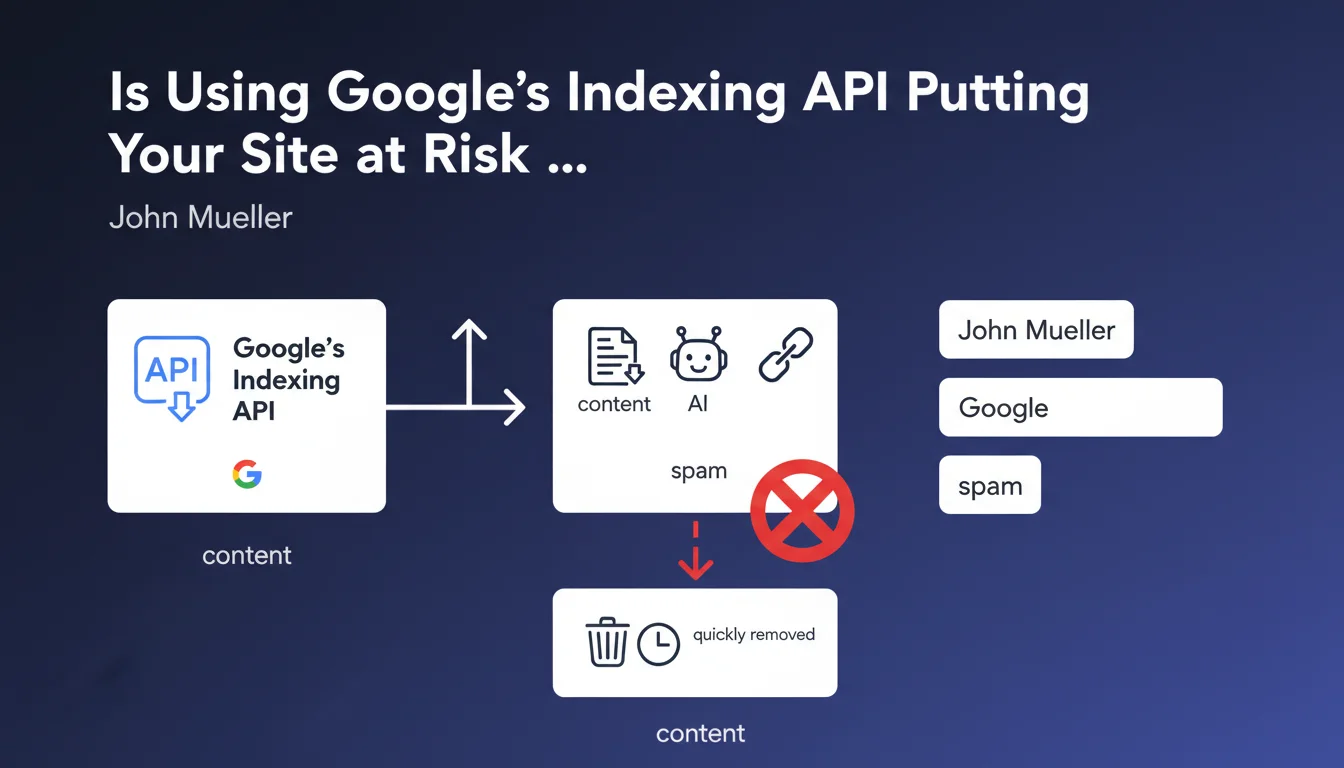

Google's Indexing API is a technical tool originally designed for very specific use cases. It allows you to notify Google of content that requires rapid indexing.

According to the official documentation, this API is intended exclusively for two types of content: job postings (JobPosting) and live broadcast events (BroadcastEvent). Both are short-lived and need immediate visibility.
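To make the eligibility rule concrete, here is a minimal Python sketch that only prepares a notification when a page's structured data declares one of the two supported types. It assumes the page's JSON-LD has already been extracted as a string; the helper names (`collect_types`, `build_notification`) are hypothetical, and the authenticated request to the API itself is deliberately left out.

```python
import json

# Schema.org types the official documentation lists as eligible.
ELIGIBLE_TYPES = {"JobPosting", "BroadcastEvent"}

# Publish endpoint of the Indexing API (v3); shown for reference only,
# since this sketch does not send any request.
PUBLISH_ENDPOINT = "https://indexing.googleapis.com/v3/urlNotifications:publish"


def collect_types(node):
    """Recursively collect every @type value found in a JSON-LD structure."""
    found = set()
    if isinstance(node, dict):
        declared = node.get("@type")
        if isinstance(declared, str):
            found.add(declared)
        elif isinstance(declared, list):
            found.update(t for t in declared if isinstance(t, str))
        for value in node.values():
            found |= collect_types(value)
    elif isinstance(node, list):
        for item in node:
            found |= collect_types(item)
    return found


def build_notification(url, json_ld_text, deleted=False):
    """Return a request body for an eligible page, or None otherwise."""
    try:
        data = json.loads(json_ld_text)
    except json.JSONDecodeError:
        return None  # Unparseable markup: treat the page as ineligible.
    if not (collect_types(data) & ELIGIBLE_TYPES):
        return None  # Articles, product pages, etc. are never submitted.
    return {"url": url, "type": "URL_DELETED" if deleted else "URL_UPDATED"}
```

The search is recursive because a BroadcastEvent is typically nested inside other markup (for example a video object) rather than declared at the top level. A blog article or product page returns `None` and should simply be left to the sitemap and normal crawling.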

However, many SEO professionals have misused this tool to accelerate the indexing of standard pages. The practice has become widespread, particularly as a way to push low-quality or automatically generated content into the index quickly.

The essential points to remember:

- The API is not a shortcut for indexing any type of content

- Using it outside of intended cases is considered non-compliant

- Google quickly identifies and removes spam content submitted via the API

- This widespread misuse damages the tool's reputation

- Natural indexing remains the recommended method for 99% of content

SEO Expert opinion

This statement from John Mueller is perfectly consistent with field observations over the past several months. We are indeed seeing an explosion of API abuse, particularly by sites generating mass AI content.

One important nuance concerns legitimate sites with job postings. If you genuinely publish job openings, using the API remains relevant and compliant. The problem lies in using it for blog posts, product pages, or standard editorial content.

There is also an interesting paradox: sites that genuinely merit rapid indexing (quality content, sound structure) generally don't need the API. Google tends to index relevant content from well-optimized sites quickly on its own.

Practical impact and recommendations

- Immediately stop using the Indexing API for any content other than JobPosting or BroadcastEvent

- Audit your current usage: if you're using the API for standard pages, cease this practice

- Prioritize a properly configured, regularly updated XML sitemap to signal your new content

- Optimize your internal linking to facilitate the natural discovery of your pages by Googlebot

- Make better use of your crawl budget by removing low-value pages and optimizing your technical structure

- Work on content quality: relevant, unique pages tend to be indexed faster without any intervention

- Monitor Search Console to identify any non-indexed pages and understand the real reasons

- Respect legitimate use cases: continue using the API only if you publish job postings or livestreams
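
For the sitemap recommendation above, a minimal Python sketch of generating a sitemap with `lastmod` dates might look like the following. It uses only the standard library; the function name `build_sitemap` and the example URLs are hypothetical, and a real site would pull the page list and modification dates from its CMS or database.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified_date) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod uses the ISO date format (YYYY-MM-DD).
        ET.SubElement(entry, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages, for illustration only.
    print(build_sitemap([
        ("https://example.com/", date(2024, 1, 15)),
        ("https://example.com/guides/internal-linking", date(2024, 2, 3)),
    ]))
```

Keeping `lastmod` accurate matters more than regenerating the file often: the date should change only when the page content actually changes.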

Bringing your indexing strategy into compliance often requires a complete technical and strategic overhaul. Between crawl budget optimization, internal linking restructuring, and content quality improvement, these adjustments can prove complex.

For large sites, or for projects that need to move quickly to compliant practices, support from a specialized SEO agency can help secure the transition. An expert review helps ensure that each technical aspect is handled properly, reducing the risk of penalties or lost visibility along the way.
