Official statement

What you need to understand

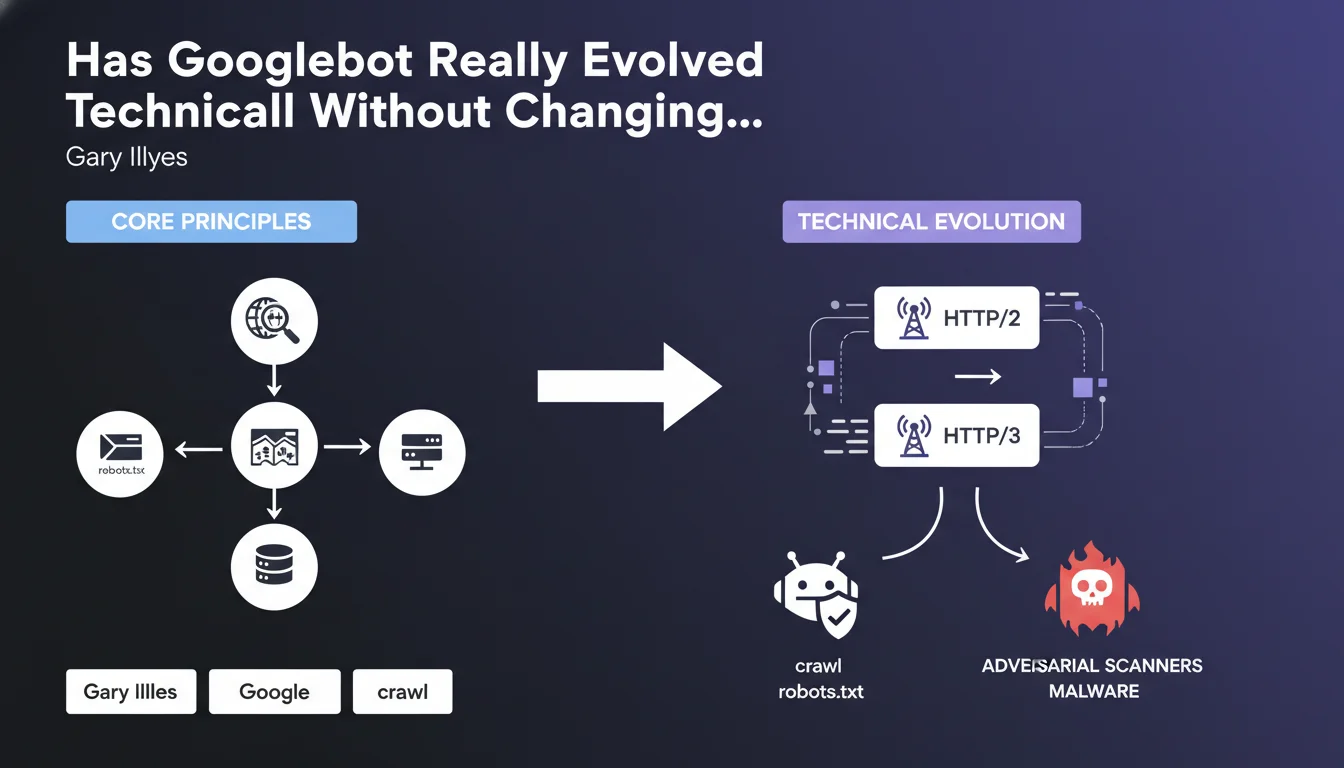

This official statement confirms that the fundamentals of Googlebot crawling have remained stable, while major technical changes have taken place behind the scenes. This is reassuring for SEO practitioners: long-standing best practices remain valid.

Googlebot's adoption of HTTP/2, and soon HTTP/3, is a significant improvement in crawling efficiency. These protocols reduce connection overhead, compress request and response headers, and multiplex many requests over a single connection instead of opening one connection per resource.

Google also makes a clear distinction between respectful crawlers like Googlebot that obey robots.txt, and "adversarial" crawlers such as malware scanners or certain aggressive bots. This nuance is important for understanding the different types of bot traffic on your site.

- The basic principles of crawling (discovery, prioritization, fetching) remain unchanged, as does the indexing that follows

- Improvements focus on technical infrastructure and communication protocols

- HTTP/2 and HTTP/3 make crawling more efficient and less resource-intensive on servers

- Not all bots behave the same way when faced with robots.txt

- The technical modernization of Googlebot continues progressively

SEO Expert opinion

This statement is consistent with field observations from recent years. Experienced SEO professionals have not seen upheavals in how Google discovers and crawls pages, but they have observed faster and more efficient crawls on well-optimized sites.

Googlebot's adoption of HTTP/2 was already documented, and the transition to HTTP/3 is a logical next step to further improve performance. One caveat: if your server infrastructure doesn't properly support these modern protocols, you won't fully benefit from these improvements.
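To see whether a server actually offers HTTP/2, one option is to look at what it selects during the TLS handshake (ALPN). The sketch below uses only the Python standard library; the function names `negotiated_protocol` and `describe` are made up for this illustration. It says nothing about HTTP/3, which runs over QUIC and needs a separate tool to test.

```python
import socket
import ssl
from typing import Optional


def negotiated_protocol(host: str, port: int = 443, timeout: float = 5.0) -> Optional[str]:
    """Return the ALPN protocol the server selects ("h2", "http/1.1") or None."""
    context = ssl.create_default_context()
    # Offer HTTP/2 first, then HTTP/1.1 as a fallback.
    context.set_alpn_protocols(["h2", "http/1.1"])
    with socket.create_connection((host, port), timeout=timeout) as raw_sock:
        with context.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            return tls_sock.selected_alpn_protocol()


def describe(protocol: Optional[str]) -> str:
    """Turn the ALPN result into a short human-readable verdict."""
    if protocol == "h2":
        return "HTTP/2 is negotiated over TLS"
    if protocol == "http/1.1":
        return "server fell back to HTTP/1.1"
    return "server did not answer the ALPN offer"
```

Calling `describe(negotiated_protocol("example.com"))` with your own hostname in place of the placeholder gives a quick first indication. A fallback to HTTP/1.1 usually means the protocol still has to be enabled at the web server, CDN, or hosting level.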

The distinction between respectful and adversarial crawlers deserves attention. In your server logs, you'll likely notice that Google scrupulously respects your robots.txt, while other bots completely ignore it. Don't confuse a Google crawl issue with malicious bot activity that can saturate your bandwidth.
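Because any bot can claim to be Googlebot in its user agent string, log analysis is more reliable when the IP address is checked as well. The sketch below follows the reverse-then-forward DNS approach that Google describes for verifying its crawlers. The function name, the injectable lookup parameters, and the suffix list are assumptions made for this example, and the forward lookup used here only covers IPv4.

```python
import socket
from typing import Callable, List

# Assumed suffix list for illustration; check Google's current
# documentation before relying on it.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def _reverse(ip: str) -> str:
    """Reverse DNS: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward(hostname: str) -> List[str]:
    """Forward DNS: hostname to its IPv4 addresses."""
    return socket.gethostbyname_ex(hostname)[2]


def is_verified_googlebot(
    ip: str,
    reverse_lookup: Callable[[str], str] = _reverse,
    forward_lookup: Callable[[str], List[str]] = _forward,
) -> bool:
    """Reverse-resolve the IP, check the domain, then forward-confirm it."""
    try:
        hostname = reverse_lookup(ip)
    except OSError:
        return False  # no usable PTR record, so the claim cannot be confirmed
    if not hostname.endswith(GOOGLE_SUFFIXES):
        return False  # resolves to a domain outside the assumed Google list
    try:
        # The hostname must resolve back to the same IP, otherwise
        # the PTR record could have been forged.
        return ip in forward_lookup(hostname)
    except OSError:
        return False
```

The lookups are passed in as parameters so the logic can be exercised without network access. In production, results are worth caching, since resolving every log line would be slow.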

Practical impact and recommendations

- Enable HTTP/2 or HTTP/3 on your server if not already done - contact your hosting provider to check compatibility and activate these modern protocols

- Verify that your TLS (SSL) certificate is valid and up to date - in practice, Googlebot only uses HTTP/2 and HTTP/3 over HTTPS

- Optimize your robots.txt file to effectively guide Googlebot toward your priority content and block sections without SEO value

- Regularly analyze your server logs to distinguish Googlebot traffic from other bots and identify potential adversarial crawlers consuming your resources

- Clean your site of low-quality pages - with more efficient crawling, Google will discover mediocre content more quickly, which could dilute your authority

- Monitor crawl activity in the Crawl Stats report in Search Console - even though crawling is more efficient, a poorly structured site can still waste crawl budget

- Don't block CSS and JavaScript resources - with modern protocols, Googlebot can load them more efficiently in parallel

- Implement well-structured XML sitemaps to facilitate the discovery of your strategic content by a now more performant Googlebot
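Several of the recommendations above depend on what robots.txt really allows, in particular keeping CSS and JavaScript crawlable. Here is a minimal sketch of a pre-deployment check using Python's standard `urllib.robotparser`. The robots.txt content, the paths, and the `blocked_paths` helper are hypothetical. Note that Python's parser applies rules in file order, while Google documents a most-specific-rule match, so results can differ when Allow and Disallow rules overlap.

```python
from typing import List
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, used only for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Allow: /assets/
"""


def blocked_paths(robots_txt: str, paths: List[str], user_agent: str = "Googlebot") -> List[str]:
    """Return the paths that the given user agent may not fetch."""
    parser = RobotFileParser()
    # Parse the rules from a string instead of fetching them over HTTP.
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(user_agent, path)]


# Paths to verify: static resources should stay crawlable,
# low-value sections may be blocked on purpose.
PATHS = ["/assets/site.css", "/assets/app.js", "/cart/checkout", "/blog/post"]
```

Running `blocked_paths(ROBOTS_TXT, PATHS)` on this sample reports only `/cart/checkout` as blocked. If a stylesheet or script appeared in that list, it would be a sign that a rule is broader than intended.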

In summary: Focus on adopting modern protocols (HTTP/2, HTTP/3) and on the quality of your site architecture. SEO fundamentals remain valid, but technical infrastructure takes on increased importance.

These technical optimizations, particularly the migration to HTTP/3 and fine-grained analysis of crawl behavior, can be complex and call for expertise in both web infrastructure and technical SEO. If that expertise isn't available in-house, a specialized SEO agency can help audit your server configuration, analyze your logs in depth, and prioritize actions for your specific context.
