Official statement

What you need to understand

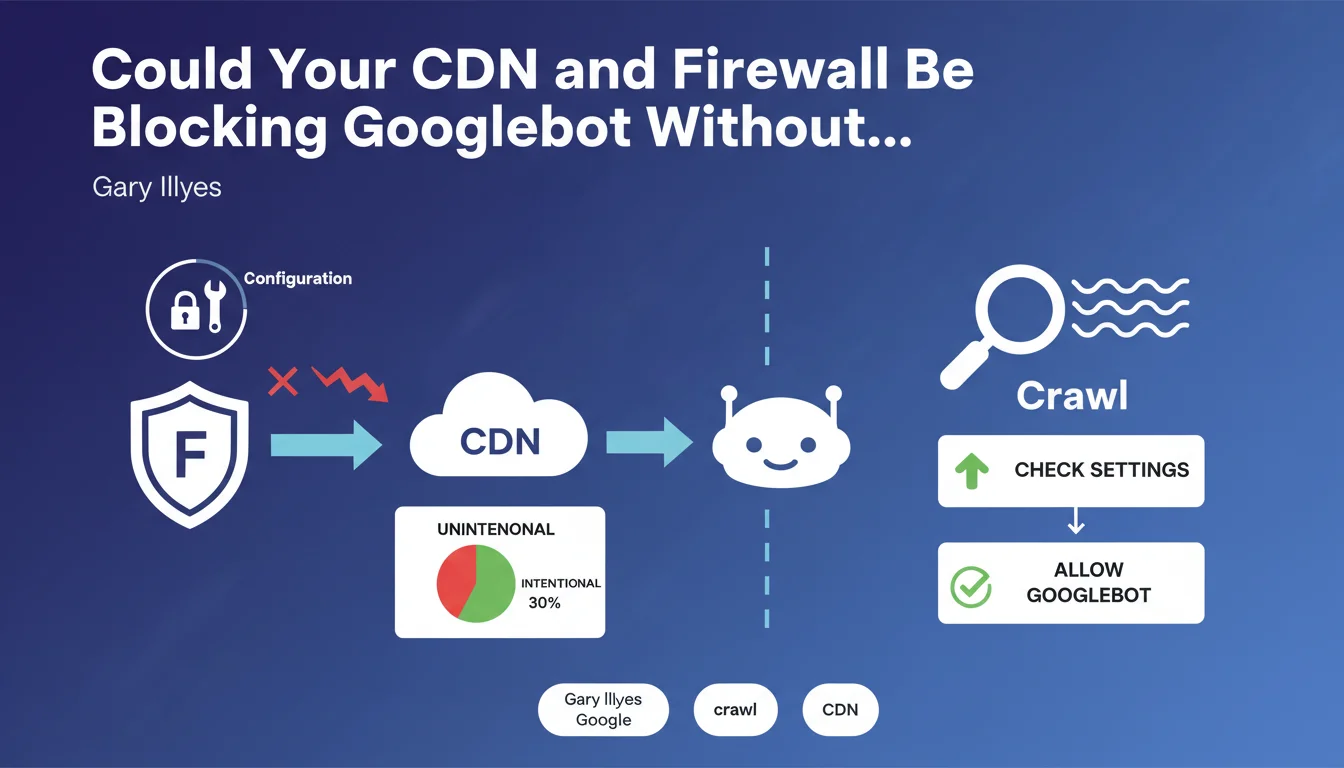

Security infrastructure such as CDNs (Content Delivery Networks) and firewalls has become essential for protecting modern websites. Yet the same tools can become a major obstacle to organic search performance.

According to this official statement, misconfigured CDNs and firewalls are the two obstacles Googlebot most frequently encounters when crawling sites. The problem is that these blocks are usually unintentional: technical teams don't realize they are preventing Google's crawler from reaching the content.

This situation creates a paradox for SEO practitioners. On one hand, strict security measures protect against scraping, DDoS attacks and content theft. On the other, if they are not properly configured, they can make a site effectively invisible to Google.

- Misconfigured CDNs and firewalls are the two main technical barriers to crawling

- Googlebot blocks are mostly unintentional and often go undetected by technical teams

- Regular verification of filtering rules is essential to avoid visibility losses

- The security/accessibility balance must be a priority in any technical SEO strategy

SEO Expert opinion

This observation matches what we see in the field during technical audits. Professional crawling tools regularly run into overly restrictive security configurations, and when our crawlers hit these obstacles, Googlebot often does too, unless the rules explicitly recognize verified Google crawlers.

A particular concern is popular CDN services such as Cloudflare, which offer very aggressive anti-bot protection modes. When Cloudflare's "I'm Under Attack" mode is enabled, or when WAF (Web Application Firewall) rules are too strict, even Googlebot can be temporarily blocked or slowed down, especially during crawl spikes.
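To make the rate-limiting point concrete, here is a minimal token-bucket sketch in Python that gives crawlers whose identity has already been verified (for example through a reverse DNS check) their own, larger budget, so a legitimate crawl spike is not treated like a scraping burst. The class name, the thresholds and the `is_verified_crawler` flag are hypothetical illustrations; real CDNs and WAFs expose this logic through their own rule configuration, not through code like this.

```python
import time
from typing import Callable


class CrawlerAwareRateLimiter:
    """Per-IP token buckets, with a separate (larger) budget for verified crawlers.

    Hypothetical thresholds: tune them to your own traffic and server capacity.
    """

    def __init__(
        self,
        default_rate: float = 2.0,     # tokens refilled per second for ordinary clients
        default_burst: float = 10.0,   # maximum bucket size for ordinary clients
        crawler_rate: float = 20.0,    # refill rate for verified crawlers
        crawler_burst: float = 100.0,  # bucket size for verified crawlers
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.policies = {
            False: (default_rate, default_burst),
            True: (crawler_rate, crawler_burst),
        }
        self.clock = clock
        # (client_ip, is_verified_crawler) -> (tokens, last_update_time)
        self.buckets: dict[tuple[str, bool], tuple[float, float]] = {}

    def allow(self, client_ip: str, is_verified_crawler: bool) -> bool:
        """Consume one token if available; return False when the client is over budget."""
        rate, burst = self.policies[is_verified_crawler]
        now = self.clock()
        key = (client_ip, is_verified_crawler)
        tokens, last = self.buckets.get(key, (burst, now))
        # Refill in proportion to elapsed time, capped at the burst size.
        tokens = min(burst, tokens + (now - last) * rate)
        allowed = tokens >= 1.0
        if allowed:
            tokens -= 1.0
        self.buckets[key] = (tokens, now)
        return allowed


def status_for(allowed: bool) -> int:
    # When throttling, answer 429 (or 503) rather than 403: Google documents
    # 429/503 as temporary "slow down" signals, while 403 is not meant for rate limiting.
    return 200 if allowed else 429
```

The design choice is that verification happens before rate limiting: the limiter never trusts a user-agent string by itself, it only receives a boolean that some earlier identity check produced.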

Context also matters: some e-commerce sites with thousands of faceted (filtered) pages deliberately restrict crawling of certain sections to conserve crawl budget, usually through robots.txt. In these cases the blocking is intentional and strategic. Telling intentional blocking apart from accidental blocking requires in-depth analysis of server logs and Search Console data.
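As a starting point for that log analysis, the following Python sketch counts the status codes served to requests whose user-agent claims to be Googlebot, grouped by top-level path section, and reports the share of 403/429/503 responses per section. It assumes the Apache/Nginx "combined" log format; the function names and the `intentional_sections` parameter (sections you block on purpose) are hypothetical.

```python
import re
from collections import Counter, defaultdict

# Apache/Nginx "combined" log format (an assumption: adapt the pattern to your own format).
COMBINED_LOG = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<ua>[^"]*)"'
)
BLOCKING_STATUSES = {"403", "429", "503"}


def googlebot_status_by_section(lines):
    """Count status codes per top-level path section for requests claiming to be Googlebot.

    Matching on the user-agent alone includes spoofed bots; cross-check IPs separately.
    """
    stats = defaultdict(Counter)
    for line in lines:
        m = COMBINED_LOG.match(line)
        if not m or "Googlebot" not in m["ua"]:
            continue
        path = m["path"].split("?", 1)[0]
        section = "/" + path.lstrip("/").split("/", 1)[0]  # "/shop/item" -> "/shop"
        stats[section][m["status"]] += 1
    return stats


def blocking_report(stats, intentional_sections=()):
    """Share of blocked responses per section, skipping sections blocked on purpose."""
    report = {}
    for section, counts in stats.items():
        if section in intentional_sections:
            continue
        blocked = sum(counts[s] for s in BLOCKING_STATUSES)
        if blocked:
            report[section] = round(blocked / sum(counts.values()), 3)
    return report
```

A section that shows blocked responses but is not on your list of deliberate restrictions is a candidate for accidental blocking and deserves a closer look at the CDN and WAF rules that apply to it.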

Practical impact and recommendations

- Audit your CDN and firewall rules now to identify anything that could block Googlebot (user-agent filters, rate limiting, geolocation rules)

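- Explicitly allow Google's crawlers (Googlebot, Googlebot-Image, Googlebot-News, etc.) in your security rules, but match on verified identity rather than the user-agent string alone, since any scraper can fake a Googlebot user-agent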

- Verify Googlebot IP addresses (reverse DNS lookup with forward confirmation, or Google's published IP ranges) and make sure they are not blocked by your geographic or IP-reputation rules

- Regularly analyze your server logs for 403, 429 or 503 status codes returned to Googlebot

- Keep Search Console email notifications enabled and review the Crawl Stats report regularly, since these notifications are not real-time alerts

- Run a live test with the URL Inspection tool in Search Console to confirm that Googlebot can actually fetch your critical pages

- Document all security rules and require an SEO review before any rule is changed

- Avoid serving JavaScript challenges to every visitor: they can delay or prevent crawling of the pages behind them

- Configure rate-limiting rules that distinguish verified Googlebot traffic from malicious scrapers, and return 429 or 503 rather than 403 when you genuinely need to slow crawlers down

- Establish regular communication between SEO, DevOps and security teams to maintain optimal balance
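The IP verification recommended above can be sketched in Python with only the standard library. It shows two checks: membership in published IP prefixes (parsed from a dict shaped like Google's crawler IP-range JSON files, with a `prefixes` list of `ipv4Prefix`/`ipv6Prefix` entries), and a reverse DNS lookup followed by a forward lookup that must return the same address. The function names are hypothetical, the domain suffixes reflect Google's verification documentation at the time of writing and should be re-checked, and the prefixes in any test data should be loaded from the real files rather than hard-coded.

```python
import ipaddress
import socket

# Domains Google's verification documentation lists for its crawlers at the time of
# writing; re-check the official docs before relying on this tuple.
GOOGLE_CRAWLER_DOMAINS = (".googlebot.com", ".google.com", ".googleusercontent.com")


def load_prefixes(doc):
    """Parse a dict shaped like Google's published crawler IP-range JSON files."""
    nets = []
    for entry in doc.get("prefixes", []):
        prefix = entry.get("ipv4Prefix") or entry.get("ipv6Prefix")
        if prefix:
            nets.append(ipaddress.ip_network(prefix))
    return nets


def ip_in_ranges(ip, nets):
    """True if the address falls inside any published prefix (IPv4 or IPv6)."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in nets)


def hostname_is_google(hostname):
    """Suffix check with a leading dot, so 'evilgooglebot.com' does not pass."""
    return hostname.rstrip(".").lower().endswith(GOOGLE_CRAWLER_DOMAINS)


def verify_by_dns(ip):
    """Reverse DNS lookup, domain check, then a forward lookup that must return the same IP."""
    try:
        hostname, _aliases, _addrs = socket.gethostbyaddr(ip)
    except OSError:  # covers socket.herror and socket.gaierror
        return False
    if not hostname_is_google(hostname):
        return False
    try:
        infos = socket.getaddrinfo(hostname, None)
    except OSError:
        return False
    forward = {ipaddress.ip_address(info[4][0]) for info in infos}
    return ipaddress.ip_address(ip) in forward
```

Google's documentation describes both approaches. The range check needs no network access and is cheap per request, while the DNS check costs two lookups, so in either case cache the result per IP rather than verifying on every request.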

In summary: properly configuring CDNs and firewalls is a major technical challenge for modern SEO. Unintentional Googlebot blocks can go unnoticed for months and cause significant traffic losses.

These technical optimizations require specialized expertise at the intersection of SEO, IT security and web architecture. The complexity of multi-layered configurations (server, CDN, WAF, DDoS protection) makes intervention delicate and risky without a proven methodology.

For high-stakes sites, support from an SEO agency specialized in technical issues can prove valuable. This expertise enables in-depth auditing of existing configurations, identification of invisible blockages and implementation of continuous monitoring, while preserving the security level essential to your business.
