Official statement

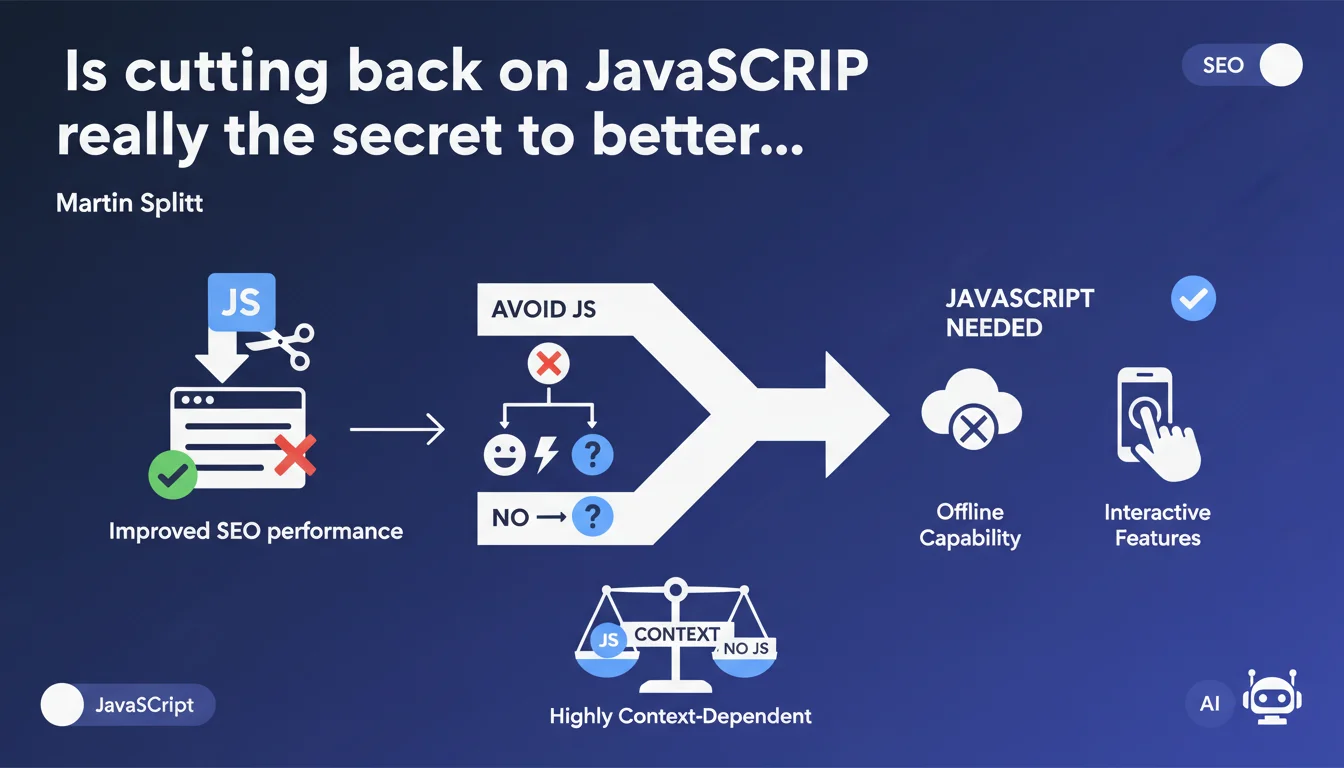

Google recommends avoiding JavaScript when possible to optimize performance, but with an important nuance: certain features (offline functionality, advanced interactivity) make it indispensable. The key takeaway? Make case-by-case decisions rather than banning JS outright.

What you need to understand

Why does Google emphasize JavaScript's impact on performance?

JavaScript adds client-side processing time: parsing, compilation, and execution. Each additional kilobyte of JS adds main-thread work, which can push back Time to Interactive and degrade Core Web Vitals, particularly INP (Interaction to Next Paint), which replaced FID (First Input Delay) as a Core Web Vital in March 2024.

Google's crawlers must also render JavaScript-heavy pages, which consumes more resources and can delay indexing. On very large sites, or sites with a limited crawl budget, that extra rendering cost adds up.

What functionality justifies using JavaScript according to Splitt?

Splitt explicitly mentions Progressive Web Apps and offline functionality. You can extend this to anything requiring client-side interactivity: real-time filters, data visualizations, conversational interfaces.

The trap? Many sites load heavy frameworks for tasks achievable with pure HTML/CSS or a touch of vanilla JS. This is where deliberate trade-offs become critical.
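To make the "touch of vanilla JS" point concrete, here is a minimal TypeScript sketch of a real-time list filter with no framework involved. The `Product` shape, the `filterProducts` name, and the sample catalog are hypothetical; wiring the function to an input field is left out.

```typescript
// Hypothetical product shape, for illustration only.
interface Product {
  name: string;
  category: string;
}

// Return the products whose name or category contains the query,
// ignoring case and surrounding whitespace. An empty query matches everything.
function filterProducts(products: Product[], query: string): Product[] {
  const needle = query.trim().toLowerCase();
  if (needle === "") return products;
  return products.filter(
    (p) =>
      p.name.toLowerCase().includes(needle) ||
      p.category.toLowerCase().includes(needle),
  );
}

// Hypothetical sample data.
const catalog: Product[] = [
  { name: "Trail Runner", category: "Shoes" },
  { name: "Rain Jacket", category: "Outerwear" },
  { name: "Running Socks", category: "Accessories" },
];

// Keeps "Trail Runner" and "Running Socks"; "Rain Jacket" does not contain "run".
const matches = filterProducts(catalog, " run ");
```

A function like this compiles to a few hundred bytes, which is the kind of trade-off to weigh before pulling in a full framework for the same job.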

How do you balance performance against functionality?

Google's recommendation is context-dependent: there's no absolute rule. You must measure each script's real impact on your field metrics (CrUX, PageSpeed Insights) and business objectives.

A heavy animated carousel that doesn't convert? Remove it. An interactive product configurator that boosts sales? Optimize it, but keep it.

- Prioritize static HTML/CSS for all crawlable and indexable content (navigation, text, images)

- Reserve JS for user interactions that deliver measurable business value

- Measure impact on INP and TBT before and after each script addition (FID is no longer a Core Web Vital)

- Prioritize server-side rendering or static generation when possible

- Regularly audit your JS bundles to identify dead or redundant code
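The "measure before and after" step can be sketched with Total Blocking Time, which counts only the part of each main-thread task that exceeds 50 ms. The TypeScript below is a simplified illustration: the task durations are made-up numbers, and it ignores the measurement window that Lighthouse applies (between First Contentful Paint and Time to Interactive).

```typescript
// Tasks longer than 50 ms count as "long"; only the excess is blocking time.
const LONG_TASK_THRESHOLD_MS = 50;

// Sum the blocking portion of every task duration (all values in milliseconds).
function totalBlockingTime(taskDurationsMs: number[]): number {
  return taskDurationsMs.reduce(
    (sum, duration) => sum + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0,
  );
}

// Hypothetical main-thread task durations recorded before and after adding a script.
const tasksBefore = [30, 80, 45, 120];
const tasksAfter = [30, 80, 45, 120, 250, 90];

// Blocking time attributable to the new script in this made-up trace.
const addedBlockingMs =
  totalBlockingTime(tasksAfter) - totalBlockingTime(tasksBefore);
```

In this invented trace the baseline has 100 ms of blocking time and the new script adds 240 ms more. A delta of that size is the kind of signal that should trigger the keep-or-remove discussion.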

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely. Sites that shift from monolithic JavaScript stacks (React or Angular in pure CSR) to SSR or SSG typically see Core Web Vitals improvements and higher indexation rates.

But Splitt remains cautious, which is rare at Google. He doesn't say "ban JavaScript"; he says "make deliberate choices." It's a nuanced position that reflects reality: some sites cannot function without advanced JS.

What nuances should you apply to this recommendation?

The problem isn't JavaScript itself, but how you use it. A site can be blazingly fast with 200 KB of well-optimized JS (code splitting, lazy loading, tree shaking) and catastrophic with 50 KB of poorly placed blocking scripts.
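Lazy loading is the easiest of these techniques to picture in code. The TypeScript sketch below wraps a loader so that it runs only on first use and at most once. The `lazyOnce` helper, the chart widget, and the `#sales-chart` selector are all hypothetical, and the loader is stubbed so the example is self-contained. In a real bundle it would typically be a dynamic `import()`, which is what lets the bundler split that code into its own chunk.

```typescript
// Wrap a loader so the underlying work (e.g. a dynamic import) starts only
// when first requested, and is never started twice.
function lazyOnce<T>(loader: () => Promise<T>): () => Promise<T> {
  let cached: Promise<T> | undefined;
  return () => {
    if (cached === undefined) cached = loader();
    return cached;
  };
}

// Hypothetical heavy module, stubbed for illustration.
// In a real project this might be: lazyOnce(() => import("./chart-widget"))
let loadCount = 0;
const loadChartWidget = lazyOnce(async () => {
  loadCount += 1;
  return { render: (target: string) => `chart rendered into ${target}` };
});

// Nothing is loaded until the user actually asks for the chart.
function onShowChartClicked(): Promise<string> {
  return loadChartWidget().then((widget) => widget.render("#sales-chart"));
}
```

Calling `onShowChartClicked()` several times reuses the same pending or resolved promise, so the heavy code is requested once no matter how often the user clicks.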

[To verify] Google provides no quantitative threshold: how many KB of JS is "too much"? What's the exact impact on crawl budget? These gray areas force you to test rather than follow rigid rules.

In what cases does this rule not apply?

Complex web applications (SaaS, dashboards, interactive tools) require JavaScript. The challenge isn't avoiding it, but cleanly separating indexable content (server-rendered) from the application interface.

The same applies to e-commerce sites with product configurators, real-time filters, or personalization experiences. Again: optimize the JS, don't blindly eliminate it.

Practical impact and recommendations

What concrete steps reduce JavaScript dependency?

Start with a JS bundle audit: which scripts are critical, which are optional? Tools like Webpack Bundle Analyzer or Lighthouse show you what's heavyweight.
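Once you have per-module sizes, the triage itself is simple arithmetic. The TypeScript sketch below ranks modules by weight and estimates how much of each one goes unused. The `ModuleStat` shape and the sample numbers are hypothetical and do not match the actual output format of Webpack Bundle Analyzer or Lighthouse; you would need to map their reports into something like it.

```typescript
// Hypothetical, simplified entry in a bundle report.
interface ModuleStat {
  name: string;
  bytes: number; // shipped size
  usedBytes: number; // bytes actually executed on the audited page
}

interface AuditRow {
  name: string;
  bytes: number;
  shareOfBundle: number; // 0..1
  unusedRatio: number; // 0..1
}

// Rank modules from heaviest to lightest, with their share of the bundle
// and the fraction of their code that went unused.
function auditBundle(modules: ModuleStat[]): AuditRow[] {
  const total = modules.reduce((sum, m) => sum + m.bytes, 0);
  return modules
    .map((m) => ({
      name: m.name,
      bytes: m.bytes,
      shareOfBundle: total === 0 ? 0 : m.bytes / total,
      unusedRatio: m.bytes === 0 ? 0 : 1 - m.usedBytes / m.bytes,
    }))
    .sort((a, b) => b.bytes - a.bytes);
}

// Made-up numbers for illustration.
const report = auditBundle([
  { name: "carousel", bytes: 60_000, usedBytes: 6_000 },
  { name: "framework", bytes: 120_000, usedBytes: 90_000 },
  { name: "utils", bytes: 20_000, usedBytes: 18_000 },
]);
```

In this invented report the carousel is 30% of the bundle with 90% of its code unused, which makes it the obvious first candidate for removal or lazy loading.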

Next, favor progressive approaches: baseline HTML functioning without JS, then enhancement through scripts. Content stays accessible even if JS fails or takes time to load.

What mistakes should you avoid with JavaScript management?

Never block initial rendering with synchronous JS in the <head>. Use defer or async depending on your needs, and place non-critical scripts at the end of <body>.
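The choice between the two attributes comes down to ordering: `defer` scripts run in document order once the HTML has been parsed, while `async` scripts run as soon as they finish downloading, in no guaranteed order. The TypeScript sketch below encodes that rule of thumb in a small tag generator. The `ScriptSpec` shape and the file paths are hypothetical, and `src` is assumed to be a trusted value since no escaping is done.

```typescript
type LoadingMode = "defer" | "async";

// Hypothetical description of a script a page wants to include.
interface ScriptSpec {
  src: string;
  // True when the script relies on the parsed DOM or on an earlier script,
  // so it must run in document order.
  orderMatters: boolean;
}

// Order-dependent scripts get `defer`; independent ones (e.g. analytics) get `async`.
function chooseLoadingMode(spec: ScriptSpec): LoadingMode {
  return spec.orderMatters ? "defer" : "async";
}

// Build the tag. Assumes `src` is trusted: no attribute escaping is applied.
function scriptTag(spec: ScriptSpec): string {
  return `<script src="${spec.src}" ${chooseLoadingMode(spec)}></script>`;
}

const appTag = scriptTag({ src: "/js/app.js", orderMatters: true });
const analyticsTag = scriptTag({ src: "/js/analytics.js", orderMatters: false });
```

Neither attribute blocks HTML parsing during download, which is the property that matters for initial rendering.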

Avoid loading full frameworks (React, Vue) for mostly static pages. If you must use a framework, opt for SSR (Next.js, Nuxt) or SSG (Astro, Eleventy).

- Audit your scripts with Lighthouse and identify unused code

- Implement code splitting to load only necessary JS per page

- Enable lazy loading for components below the fold

- Test your Core Web Vitals before/after each JS change (CrUX, PageSpeed Insights)

- Switch to SSR or SSG if your content is mostly static or indexable

- Remove redundant libraries (multiple jQuery versions, unnecessary polyfills)

- Minify and compress (Brotli/Gzip) all JS files

How do you verify your site isn't abusing JavaScript?

Use the Core Web Vitals report in Search Console, which is built on CrUX field data, to see your real-world metrics. If your INP is in the red while your content is simple, JS is likely the culprit.
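"In the red" has a concrete meaning for INP: Google's published thresholds rate a page as good at 200 ms or less and poor above 500 ms, assessed at the 75th percentile of page loads. The TypeScript sketch below applies those thresholds to a set of made-up interaction latencies. The nearest-rank percentile used here is a simplification and not necessarily how CrUX aggregates its data.

```typescript
type Rating = "good" | "needs-improvement" | "poor";

// INP thresholds in milliseconds: good <= 200, poor > 500.
function rateInp(inpMs: number): Rating {
  if (inpMs <= 200) return "good";
  if (inpMs <= 500) return "needs-improvement";
  return "poor";
}

// 75th percentile using the nearest-rank method (a simplifying assumption).
function percentile75(samples: number[]): number {
  if (samples.length === 0) throw new Error("no samples");
  const sorted = samples.slice().sort((a, b) => a - b);
  const rank = Math.ceil(0.75 * sorted.length);
  return sorted[rank - 1];
}

// Hypothetical INP values (ms) collected from real visits.
const inpSamples = [640, 80, 220, 120, 400, 150, 260, 180];
const p75 = percentile75(inpSamples);
const rating = rateInp(p75);
```

With these invented samples the 75th percentile is 260 ms, which lands in the "needs improvement" band even though most individual visits were fast.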

Test by disabling JS in your browser: if the page becomes completely unusable when it should display static content, you have an architecture problem.
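The same check can be roughed out in code by looking at the raw HTML the server returns and asking whether your key content is already in it. The TypeScript sketch below strips scripts and tags with regular expressions, which is crude and no substitute for a real HTML parser or an actual browser test. The function names and the two sample pages are hypothetical.

```typescript
// Remove <script> blocks, then any remaining tags, then collapse whitespace.
// Regex-based and deliberately crude: fine for a smoke test, not for parsing.
function textWithoutScripts(html: string): string {
  return html
    .replace(/<script\b[^>]*>[\s\S]*?<\/script>/gi, " ")
    .replace(/<[^>]+>/g, " ")
    .replace(/\s+/g, " ")
    .trim();
}

// Return the expected phrases that are absent from the script-free text.
function missingWithoutJs(html: string, expectedPhrases: string[]): string[] {
  const text = textWithoutScripts(html).toLowerCase();
  return expectedPhrases.filter((p) => !text.includes(p.toLowerCase()));
}

// Two made-up responses for the same product page.
const serverRendered =
  '<main><h1>Blue Trail Shoes</h1><p>In stock</p></main><script src="/app.js" defer></script>';
const clientOnly =
  '<div id="root"></div><script>render("Blue Trail Shoes")</script>';

const expected = ["Blue Trail Shoes", "In stock"];
const missingOnServerRendered = missingWithoutJs(serverRendered, expected);
const missingOnClientOnly = missingWithoutJs(clientOnly, expected);
```

Here the server-rendered page is missing nothing, while the client-only page is missing both phrases: the architecture problem described above, caught without opening a browser.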

❓ Frequently Asked Questions

Does Google penalize sites that use a lot of JavaScript?

Is server-side rendering (SSR) required to rank well?

Can you use React or Vue.js without hurting your SEO?

How do you measure JavaScript's impact on your crawl budget?

Should you remove all third-party scripts (analytics, ads, chatbots)?

Other SEO insights extracted from this same Google Search Central video · published on 12/06/2025
