Official statement

What you need to understand

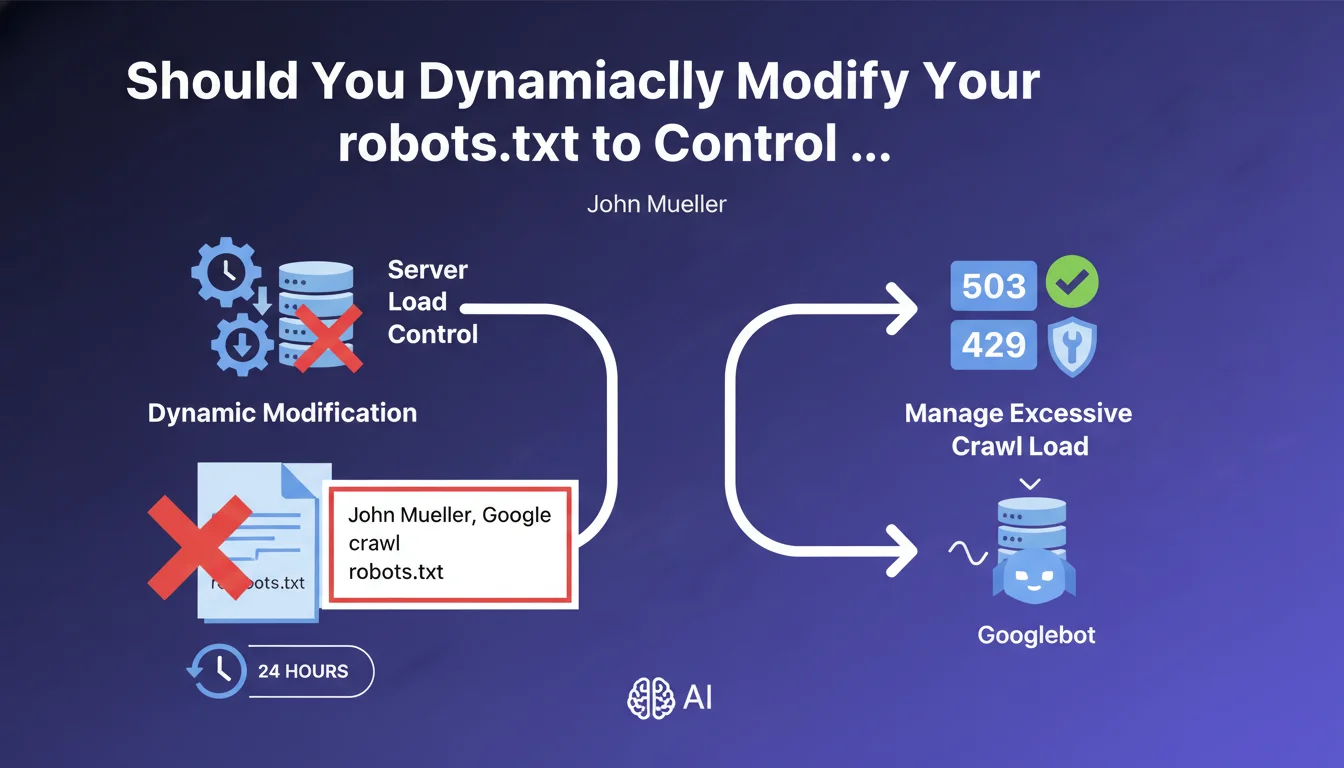

Google caches the robots.txt file for approximately 24 hours. This explains why frequent modifications to the file have no immediate effect on crawler behavior.

Some technicians modify the robots.txt file several times a day to block Googlebot during peak hours and then allow it again later. The intention is good: protecting the server from excessive crawl load.

This strategy is ineffective because Google does not see these changes in real time. Until the cache is refreshed, the bot keeps following the cached version of the file, so any temporary rule added and removed in the meantime may never be seen at all.

- The robots.txt is cached by Google for approximately 24 hours

- Dynamic modifications are not taken into account immediately

- This method does not allow for real-time server load management

- Alternatives exist to control crawling in a reactive manner
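The effect of the cache can be illustrated with a small simulation. This is only a sketch: the fixed 24-hour lifetime, the `site_blocks_at` schedule (blocking from 09:00 to 17:59) and the `CachingCrawler` class are assumptions made for illustration, not a description of how Googlebot is actually implemented.

```python
from dataclasses import dataclass
from typing import Optional

CACHE_TTL_HOURS = 24  # assumption: the cached copy is reused for a fixed 24 hours


def site_blocks_at(hour: int) -> bool:
    """Hypothetical site policy: robots.txt disallows crawling from 09:00 to 17:59."""
    return 9 <= hour % 24 < 18


@dataclass
class CachingCrawler:
    """Toy crawler that re-reads robots.txt only when its cached copy has expired."""
    cached_blocked: Optional[bool] = None
    fetched_at: Optional[int] = None

    def is_blocked(self, hour: int) -> bool:
        expired = self.fetched_at is None or hour - self.fetched_at >= CACHE_TTL_HOURS
        if expired:
            # Only at this moment does the crawler see the live file.
            self.cached_blocked = site_blocks_at(hour)
            self.fetched_at = hour
        return self.cached_blocked


# The crawler first fetches robots.txt at 03:00, while crawling is allowed.
crawler = CachingCrawler()
mismatches = [h for h in range(3, 27) if crawler.is_blocked(h) != site_blocks_at(h)]

# Hours during which the site believes it is blocking, but the crawler does not know.
print(mismatches)  # [9, 10, 11, 12, 13, 14, 15, 16, 17]
```

In this toy model the whole daytime block goes unnoticed. The opposite failure is just as possible: a crawler that happens to refresh its copy during the blocked window would stay away for the following 24 hours, long after the site has lifted the block.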

SEO Expert opinion

This recommendation is perfectly consistent with field observations. Many sites have attempted this approach without success, finding that Googlebot continued to crawl despite modifications to the robots.txt.

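HTTP status codes 503 and 429 are indeed the appropriate solutions for managing temporary load. Code 503 (Service Unavailable) indicates temporary unavailability, while 429 (Too Many Requests) explicitly signals rate limiting. Unlike robots.txt, these codes are returned on every request, so crawlers see them immediately. They are meant for short-lived situations: Google's documentation cautions that serving them for more than a couple of days can cause URLs to be dropped from the index.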
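As a rough sketch of what load-based throttling could look like at the application level, the WSGI middleware below answers crawler requests with a 503 while the server is overloaded. The threshold, the back-off value, the load metric and the substring check on the user agent are all assumptions chosen for illustration; `Retry-After` is a standard HTTP header, but whether a given crawler honors it is not something this article establishes.

```python
import os

LOAD_THRESHOLD = 4.0        # hypothetical: 1-minute load average considered "too high"
RETRY_AFTER_SECONDS = 600   # hypothetical back-off hint sent to the client


def current_load() -> float:
    """1-minute load average (Unix only); replace with whatever metric you trust."""
    return os.getloadavg()[0]


def is_crawler(user_agent: str) -> bool:
    # Simplistic substring check for illustration; user-agent strings can be spoofed.
    return "googlebot" in user_agent.lower()


def throttle_crawlers(app, load_fn=current_load):
    """WSGI middleware: reply 503 + Retry-After to crawlers while load is high."""
    def wrapped(environ, start_response):
        user_agent = environ.get("HTTP_USER_AGENT", "")
        if is_crawler(user_agent) and load_fn() > LOAD_THRESHOLD:
            body = b"Service temporarily unavailable\n"
            start_response("503 Service Unavailable", [
                ("Content-Type", "text/plain; charset=utf-8"),
                ("Content-Length", str(len(body))),
                ("Retry-After", str(RETRY_AFTER_SECONDS)),
            ])
            return [body]
        # Normal visitors, and crawlers during quiet periods, reach the real app.
        return app(environ, start_response)
    return wrapped


def site(environ, start_response):
    """Placeholder application standing in for the real site."""
    body = b"Hello\n"
    start_response("200 OK", [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ])
    return [body]


application = throttle_crawlers(site)
```

The decision is made per request, so the throttle takes effect as soon as load rises and disappears as soon as it falls. In practice the same rule is often placed in the web server or load balancer rather than in application code.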

An important nuance: if your infrastructure requires recurring hourly blocks of Googlebot, this is probably a symptom of a deeper architectural problem. It would be wiser to optimize your crawl budget via Search Console or improve server capacity.

Practical impact and recommendations

- Never modify robots.txt more than once per day - Because of the cache, more frequent changes go unseen and only create confusion

- Implement dynamic management via HTTP codes - Use 503 or 429 at the server level for temporary load spikes

- Monitor your crawl load in Search Console to identify problematic patterns

- Optimize your crawl budget by permanently blocking unnecessary URLs in the robots.txt

- Properly size your infrastructure if crawl spikes regularly pose problems

- Use crawl rate settings in Search Console where they are still available (Google retired the legacy crawl rate limiter in early 2024) rather than temporary robots.txt blocks

- Avoid recurring temporary solutions - If you're blocking Googlebot daily, it's a sign of a structural problem
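For the recommendation to block unnecessary URLs permanently, it can help to check a draft rule set before publishing it. The sketch below uses Python's standard `urllib.robotparser` for that purpose; the `/search` and `/cart/` paths and the `example.com` URLs are hypothetical. Note that this parser only does simple prefix matching and does not understand wildcard patterns, so its verdicts are an approximation of how Googlebot would read the same file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical permanent rules: keep crawlers out of internal search and cart pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /cart/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

urls = (
    "https://example.com/products/blue-widget",
    "https://example.com/search?q=widget",
    "https://example.com/cart/checkout",
)

for url in urls:
    verdict = "allowed" if parser.can_fetch("Googlebot", url) else "blocked"
    print(f"{verdict:8} {url}")
```

Rules like these are set once and left alone, which is the kind of use the 24-hour cache is compatible with.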

In summary: The robots.txt is a tool for permanent directives, not a real-time switch. To manage server load reactively, use HTTP status codes 503 or 429 instead.

Optimal crawl budget management requires a comprehensive approach combining server infrastructure, technical configuration, and continuous monitoring. These technical optimizations require specialized expertise in web architecture and crawler behavior. If your site encounters recurring load issues related to crawling, support from a specialized SEO agency can prove valuable in establishing a complete diagnosis and implementing a sustainable strategy tailored to your specific context.
