Official statement

What you need to understand

What is this special form for requesting a crawl from Google?

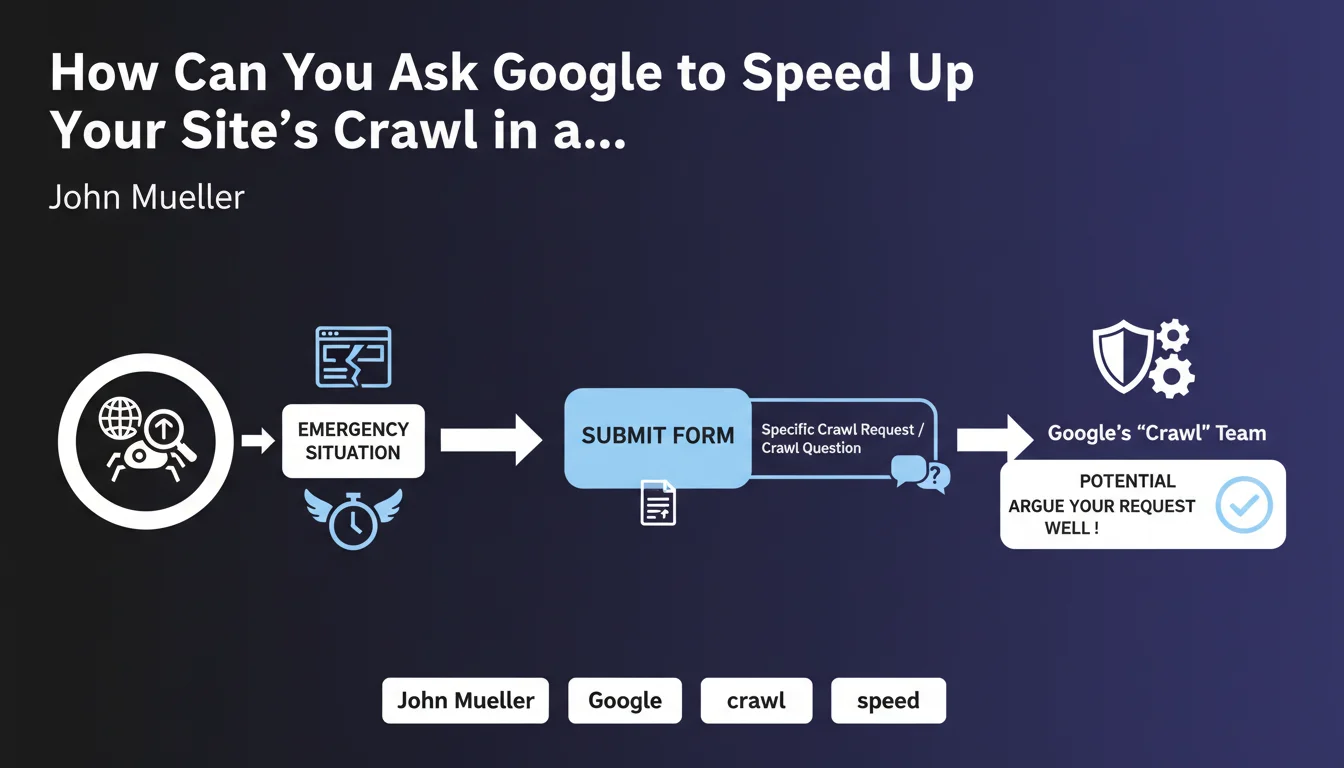

Google has a confidential form that allows webmasters to contact the crawl team directly in exceptional situations. This channel is not publicly documented, presumably because Google wants to prevent misuse.

This form allows you to ask specific technical questions about how Googlebot crawls your site, or to request a targeted crawl in very particular cases. It is not an on-demand crawl tool, but a problem-solving mechanism for critical situations.

What extreme situations justify using this form?

Legitimate use cases involve major technical problems affecting indexation. For example: an entire site that is no longer being crawled following a migration, massive unexplained crawl errors, or critical crawl budget issues for a large-scale site.

It may also involve urgent situations with strong business impact: launch of strategic products requiring rapid indexation, correction of critical content already indexed, or complex technical problems that standard tools cannot resolve.

Why is it necessary to argue your request particularly well?

Google's crawl team receives a considerable volume of requests and must prioritize truly exceptional cases. A poorly argued request will simply be ignored or redirected to standard tools like Search Console.

- Reserved for exceptional situations that are technically complex

- Requires detailed argumentation with technical evidence

- Does not replace standard tools (Search Console, URL inspection)

- Allows direct contact with Google's crawl team

- Intended for critical problems affecting the site's overall indexation

SEO Expert opinion

Is this option really accessible to all webmasters?

In practice, this form is rarely necessary for the majority of sites. Google has considerably improved its diagnostic tools through Search Console, and most crawl problems can be solved through these standard channels.

The existence of this form mainly shows that Google acknowledges its system is not perfect and that exceptional situations can require human intervention. In practice, however, real access to the crawl team remains an implicit privilege of large-scale sites or truly problematic cases.

What are the risks of using this form inappropriately?

Abusive or unjustified use may lead to your future legitimate requests being ignored. The crawl team may also conclude that you have not mastered the basics of technical SEO, which undermines the credibility of any later request.

Additionally, contacting Google about problems that fall under your own technical responsibility (a misconfigured robots.txt, slow server response times, a poorly designed architecture) will only expose your shortcomings without providing a solution.

In which cases could this procedure really make a difference?

This intervention is most likely to prove decisive for very large sites (millions of pages) with complex crawl budget problems that standard adjustments do not resolve.
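
One standard adjustment worth ruling out on a large site before escalating is click depth: pages buried many links away from the homepage are harder for crawlers to reach through internal links. The sketch below assumes you have exported your internal link graph as a dict mapping each page to the pages it links to (a hypothetical export; the function names are illustrative too). It computes each page's minimum click depth with a breadth-first search and flags priority URLs that are too deep or unreachable. The `max_depth=3` cutoff is an illustrative assumption, not a Google threshold.

```python
from __future__ import annotations

from collections import deque
from typing import Optional


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Minimum number of clicks from `home` to every reachable page (breadth-first search)."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS order is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def deep_or_orphaned(links: dict[str, list[str]], home: str,
                     priority: list[str], max_depth: int = 3) -> dict[str, Optional[int]]:
    """Priority URLs deeper than `max_depth` (hypothetical cutoff) or unreachable (None)."""
    depths = click_depths(links, home)
    return {url: depths.get(url) for url in priority
            if url not in depths or depths[url] > max_depth}
```

For example, with `links = {"/": ["/a", "/b"], "/a": ["/a/1"], "/a/1": ["/a/1/x"], "/a/1/x": ["/deep"]}`, calling `deep_or_orphaned(links, "/", ["/b", "/deep", "/orphan"])` returns `{"/deep": 4, "/orphan": None}`: one page sits four clicks deep and one is not linked at all.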

It may also be relevant for obvious Googlebot bugs (aberrant behavior that is documented and reproducible), or after a major technical migration where, despite all precautions, indexation has not returned to normal after several weeks.
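
Before blaming Googlebot after a migration, it helps to rule out a self-inflicted cause: a redirect map in which old URLs pass through several hops or loop back on themselves. A minimal static check, assuming the map is available as a dict of old URL to redirect target (a hypothetical export from your server configuration; `follow` and `audit_redirect_map` are illustrative names), could look like this. It fetches nothing, so it complements rather than replaces live checks.

```python
from __future__ import annotations


def follow(redirects: dict[str, str], url: str) -> tuple[str, int, bool]:
    """Follow the redirect map from `url`; return (final URL, hop count, loop detected)."""
    seen = {url}
    current, hops = url, 0
    while current in redirects:
        current = redirects[current]
        hops += 1
        if current in seen:  # revisiting a URL means the chain never terminates
            return current, hops, True
        seen.add(current)
    return current, hops, False


def audit_redirect_map(redirects: dict[str, str]) -> dict[str, str]:
    """Report old URLs that loop or need more than one hop to reach their final target."""
    problems = {}
    for old in redirects:
        final, hops, loop = follow(redirects, old)
        if loop:
            problems[old] = "loop"
        elif hops > 1:
            problems[old] = f"chain of {hops} hops; point it directly to {final}"
    return problems
```

The usual fix for each reported chain is to make the old URL redirect straight to its final destination in a single hop.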

Practical impact and recommendations

What should you check before even considering using this form?

Start with a complete technical audit of your site. Verify that all technical files (robots.txt, XML sitemap) are correctly configured and that your server responds quickly to all requests.
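
As a first pass on robots.txt, Python's standard `urllib.robotparser` can report whether your priority URLs are blocked for Googlebot and which sitemaps the file declares (`site_maps()` needs Python 3.8+). This is a sketch with a known simplification: Python's parser applies the first matching rule in file order and does not implement Google's wildcard and longest-match semantics, so any surprising result should be confirmed in Search Console's robots.txt report. The `check_robots` name and the example.com URLs are placeholders.

```python
from __future__ import annotations

from urllib.robotparser import RobotFileParser


def check_robots(robots_txt: str, priority_urls: list[str],
                 agent: str = "Googlebot") -> tuple[list[str], list[str]]:
    """Return (priority URLs blocked for `agent`, sitemap URLs declared in robots.txt)."""
    parser = RobotFileParser()
    parser.modified()  # record a fetch time; can_fetch() refuses everything until one is set
    parser.parse(robots_txt.splitlines())  # parse text you already fetched
    blocked = [url for url in priority_urls if not parser.can_fetch(agent, url)]
    sitemaps = parser.site_maps() or []  # site_maps() returns None when none are declared
    return blocked, sitemaps


ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /
Sitemap: https://www.example.com/sitemap.xml
"""

blocked, sitemaps = check_robots(ROBOTS_TXT, [
    "https://www.example.com/products/",
    "https://www.example.com/private/report",
])
print(blocked)   # ['https://www.example.com/private/report']
print(sitemaps)  # ['https://www.example.com/sitemap.xml']
```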

Analyze the Search Console reports in detail: the Page indexing report (formerly Coverage), the Crawl Stats report, and performance data. Use the URL inspection tool to verify that Googlebot can access and index your priority pages.

Make sure the problem has persisted for at least 2-3 weeks and is not simply a normal processing delay on Google's side. Document all your findings precisely, with screenshots and quantified data.
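
To turn "the problem has persisted for 2-3 weeks" into a quantified statement, you can compare daily Googlebot request counts (for example, aggregated from your server logs) before and after the suspected incident. The sketch below uses assumed thresholds: a drop counts as persistent only if at least 14 days have been observed and every one of them is below 50% of the pre-incident median. Both numbers, and the `crawl_drop_report` name, are illustrative choices, not Google criteria.

```python
from __future__ import annotations

from statistics import median


def crawl_drop_report(daily_hits: list[int], incident_index: int,
                      min_days: int = 14, threshold: float = 0.5) -> dict:
    """Compare daily Googlebot hits from `incident_index` onward to the median before it.

    `min_days` and `threshold` are illustrative assumptions, not Google criteria.
    """
    before, after = daily_hits[:incident_index], daily_hits[incident_index:]
    if not before or not after:
        raise ValueError("need data both before and after the incident")
    baseline = median(before)  # median resists one-off crawl spikes
    if baseline == 0:
        raise ValueError("no baseline crawl activity to compare against")
    current = median(after)
    return {
        "baseline_median": baseline,
        "post_incident_median": current,
        "drop_pct": round(100 * (1 - current / baseline), 1),
        "days_observed": len(after),
        # persistent = observed long enough AND every post-incident day stayed low
        "persisted": len(after) >= min_days
                     and all(h < threshold * baseline for h in after),
    }
```

A report such as "crawl volume down 70% from a median of 1,000 requests per day, every day for 15 days" is the kind of quantified finding the request should contain.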

How do you write a request that has a chance of being considered?

Your request must be highly structured and factual. Present the context (type of site, size, sector), then describe the observed problem precisely with quantified data (number of pages affected, size of the crawl drop).

List all corrective actions already undertaken and their results. Attach technical evidence: server logs showing Googlebot requests, Search Console screenshots, examples of problematic URLs.
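
Server logs are the most direct evidence of what Googlebot actually requested. The minimal sketch below assumes your server writes the common Apache/Nginx "combined" log format (the regex and the `googlebot_summary` name are this example's own). It aggregates Googlebot hits per day and per status class, and collects the paths that returned 4xx or 5xx errors. It matches Googlebot by user-agent string only. Because that string can be spoofed, confirm genuine Googlebot traffic with the reverse DNS check Google documents before quoting the numbers.

```python
from __future__ import annotations

import re
from collections import Counter
from datetime import datetime

# Combined log format: ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<day>[^:]+):[^\]]*\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_summary(lines) -> tuple[Counter, Counter, Counter]:
    """Return (hits per day, hits per status class, hits per error path) for Googlebot lines."""
    per_day, per_status, error_paths = Counter(), Counter(), Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m or "Googlebot" not in m["agent"]:
            continue  # unparsable line, or not claiming to be Googlebot
        per_day[datetime.strptime(m["day"], "%d/%b/%Y").date()] += 1
        status = m["status"]
        per_status[status[0] + "xx"] += 1  # e.g. "503" -> "5xx"
        if status[0] in "45":
            error_paths[m["path"]] += 1  # candidate "problematic URL" examples
    return per_day, per_status, error_paths
```

In practice you would pass an open log file, e.g. `with open("access.log") as f: googlebot_summary(f)`, where the file name is a placeholder.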

Clearly explain the critical business impact of the problem and why it requires exceptional intervention. Remain professional, concise, and technical in your formulation.

What concrete alternatives should be prioritized first?

- Optimize your internal linking architecture to facilitate natural crawling

- Reduce server response time to less than 200ms for Googlebot

- Submit an optimized XML sitemap with only priority URLs

- Use the URL inspection tool for the most critical pages

- Correct all problems reported in the Page indexing report (formerly Coverage)

- Eliminate redirect chains and unintended 4xx/5xx errors

- Implement server log monitoring to analyze Googlebot behavior

- Increase the update frequency of strategic content
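
For server log monitoring, user-agent strings alone are not trustworthy. Google documents a two-step verification for Googlebot: a reverse DNS lookup on the requesting IP must return a host under `googlebot.com` or `google.com`, and a forward lookup of that host must resolve back to the same IP. Below is a minimal sketch using Python's `socket` module. The resolver functions are injectable parameters, a design choice made here (not part of Google's procedure) so the logic can be tested without network access. The function names are illustrative.

```python
from __future__ import annotations

import socket
from typing import Callable

# Reverse DNS domains Google documents for Googlebot.
GOOGLEBOT_DOMAINS = (".googlebot.com", ".google.com")


def _reverse(ip: str) -> str:
    return socket.gethostbyaddr(ip)[0]  # PTR lookup: (hostname, aliases, addresses)


def _forward(host: str) -> set[str]:
    # Collect every IPv4/IPv6 address the hostname resolves to.
    return {info[4][0] for info in socket.getaddrinfo(host, None)}


def is_verified_googlebot(ip: str,
                          reverse: Callable[[str], str] = _reverse,
                          forward: Callable[[str], set[str]] = _forward) -> bool:
    """Reverse DNS must end in a Google domain AND the forward lookup must return `ip`."""
    try:
        host = reverse(ip).rstrip(".").lower()
    except OSError:  # socket.herror and socket.gaierror subclass OSError
        return False
    if not host.endswith(GOOGLEBOT_DOMAINS):
        return False
    try:
        return ip in forward(host)
    except OSError:
        return False
```

Against live DNS you would simply call `is_verified_googlebot(ip)` for each IP that your logs attribute to Googlebot, caching results since the same crawler IPs recur.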
