Official statement

What you need to understand

Why does Google slow down its crawl after a migration?

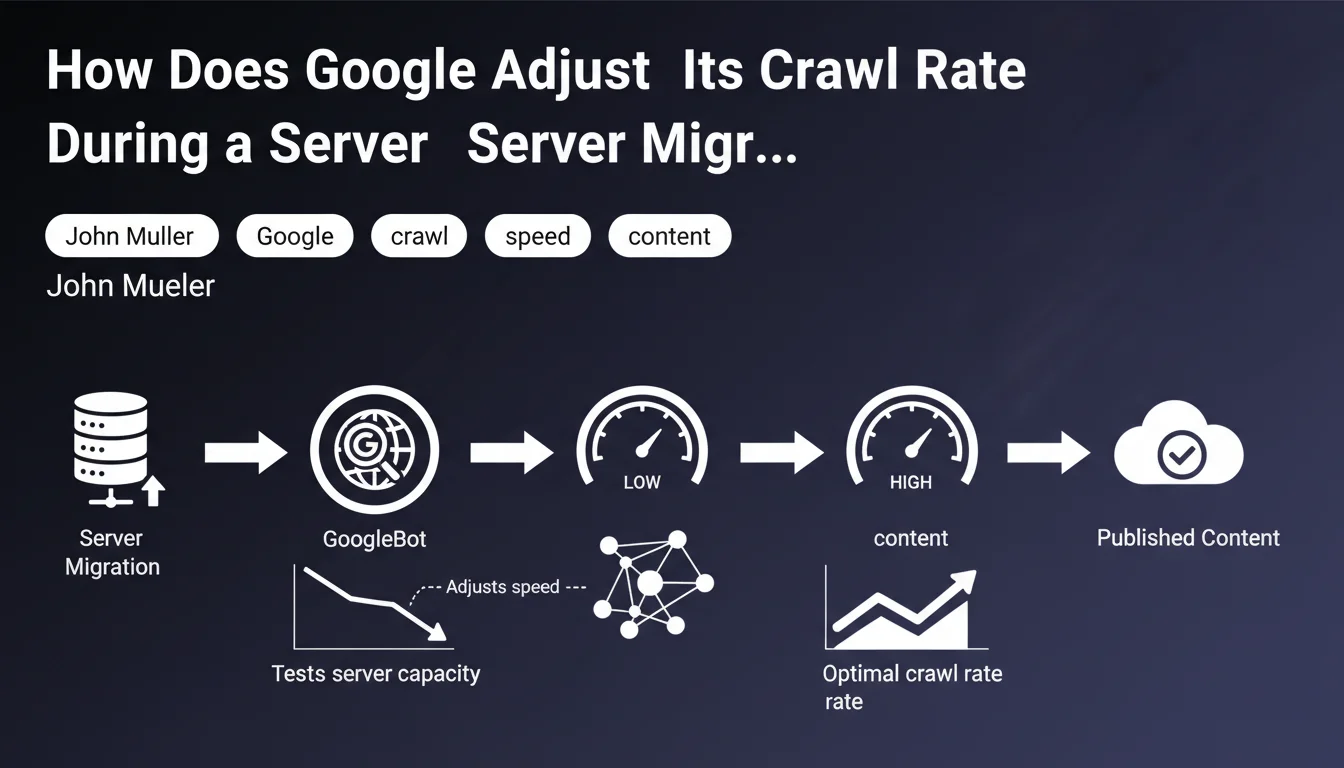

When you move your site to a new server, Google has no data on what the new infrastructure can handle. The slowdown you observe is therefore an automatic calibration phase: Googlebot deliberately lowers its initial crawl rate.

This cautious approach allows the search engine to progressively test the server's capacity without risking overloading it. It's a protective measure to avoid degrading your site's user experience during the critical post-migration phase.

What actually happens during this period?

Google learns the new server's limits progressively. Googlebot sends requests in waves and measures response times and server stability after each one. If everything goes well, the crawl gradually accelerates until it reaches an optimal pace.
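
To picture this ramp-up, here is a toy Python model. It is not Google's actual algorithm, which is not public. The function name, the thresholds, and the "add a step when healthy, halve when unhealthy" rule are all assumptions chosen purely for illustration.

```python
def simulate_crawl_ramp(latencies_ms, start_rate=1.0, max_rate=20.0,
                        slow_ms=500.0, step=1.0, backoff=0.5):
    """Return the request rate chosen after each observed wave.

    latencies_ms: average response time (ms) seen in each wave;
    None stands for a wave that ended in server errors.
    All parameters are illustrative assumptions, not known Google values.
    """
    rate = start_rate
    history = []
    for latency in latencies_ms:
        if latency is None or latency > slow_ms:
            # Unhealthy wave: cut the rate, but never below the starting rate.
            rate = max(start_rate, rate * backoff)
        else:
            # Healthy wave: raise the rate by a fixed step, up to the ceiling.
            rate = min(max_rate, rate + step)
        history.append(rate)
    return history


# Three healthy waves, one failing wave, then two healthy waves.
print(simulate_crawl_ramp([120, 130, 110, None, 140, 150]))
# → [2.0, 3.0, 4.0, 2.0, 3.0, 4.0]
```

The point of the sketch is the shape of the curve: a single bad wave costs several good waves of progress, which is why stability matters more than raw speed during calibration.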

This adjustment phase can last several days, or even a few weeks depending on the site's size. During this period, you'll observe fluctuations in the crawl statistics in Search Console.

What are the key indicators to monitor?

- The crawl stats report in Search Console shows the evolution of pages crawled per day

- Average response times indicate whether your server is properly handling the load

- Server errors (5xx) are a warning signal for Google, which will slow down its crawl even further

- Crawl volume should gradually increase until it reaches pre-migration levels, then exceed them if the new server performs better
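
These indicators can also be cross-checked against your own access logs. Below is a minimal Python sketch, assuming an nginx-style combined log format with the request time in seconds appended as the last field. That field, the regex, and the function name `daily_googlebot_stats` are my assumptions and will need adapting to your setup. It filters on the user-agent string only, which can be spoofed, so treat the numbers as an approximation.

```python
import re
from collections import defaultdict

# Assumed format: combined log line followed by the request time in seconds.
LINE_RE = re.compile(
    r'\S+ \S+ \S+ \[(?P<day>[^:]+):[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)" '
    r'(?P<seconds>[\d.]+)'
)


def daily_googlebot_stats(lines):
    """Aggregate hits, average response time and 5xx rate per day."""
    stats = defaultdict(lambda: {"hits": 0, "errors_5xx": 0, "total_seconds": 0.0})
    for line in lines:
        m = LINE_RE.match(line)
        # Skip unparseable lines and anything not claiming to be Googlebot.
        if not m or "Googlebot" not in m["agent"]:
            continue
        day = stats[m["day"]]
        day["hits"] += 1
        day["total_seconds"] += float(m["seconds"])
        if m["status"].startswith("5"):
            day["errors_5xx"] += 1
    return {
        d: {
            "hits": s["hits"],
            "avg_ms": round(1000 * s["total_seconds"] / s["hits"], 1),
            "rate_5xx": round(s["errors_5xx"] / s["hits"], 3),
        }
        for d, s in stats.items()
    }


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'66.249.66.1 - - [10/Mar/2025:06:25:14 +0000] "GET /a HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}" 0.100',
    f'66.249.66.1 - - [10/Mar/2025:06:25:15 +0000] "GET /b HTTP/1.1" 503 312 "-" "{GOOGLEBOT_UA}" 0.300',
    '203.0.113.7 - - [10/Mar/2025:06:25:16 +0000] "GET /a HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)" 0.050',
]
print(daily_googlebot_stats(sample))
```

In practice you would pass an open log file instead of `sample` and compare the daily figures with what Search Console reports.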

SEO Expert opinion

Is this statement consistent with practices observed in the field?

After 15 years of supporting migrations, I can confirm that this behavior shows up consistently. Google does indeed adopt a conservative approach that can frustrate SEOs eager to see their pages reindexed quickly.

However, the extent and duration of this slowdown vary considerably. I've seen sites recover their crawl rate within 48 hours, while others took several weeks. The difference largely depends on the quality of the target infrastructure and the site's reliability history.

What important nuances should be added?

Mueller's statement is correct but incomplete on one crucial point: it's not just server speed that matters. Google also evaluates HTTP response consistency, DNS stability, and even the overall network configuration.

Furthermore, if you're simultaneously migrating to a new domain or changing your URL architecture, the stakes go far beyond simple crawl adaptation. In that case, redirect management and the change of address signal in Search Console become priorities.

In which cases does this rule not fully apply?

For very large sites (several million pages), Google often maintains substantial crawling even during the calibration phase, because the need for content freshness takes priority. News sites also benefit from faster treatment.

Conversely, small sites with a limited crawl budget may see their crawl stagnate for a long time if the new server shows even slight latency. Their margin for error is narrower.

Practical impact and recommendations

What should you prepare before migration to optimize crawl?

The key to a successful migration lies in preparing the target infrastructure. Make sure your new server has capacity well above your current needs, with a margin of at least 200% to absorb crawl spikes.

Run load tests before switching over. Simulate volumes of simultaneous requests higher than what Googlebot could generate. A server that fails under load will considerably delay the return to normal crawling.
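
For a first rough check before reaching for a dedicated load-testing tool, a few lines of standard-library Python can fire concurrent requests and summarize the results. This is a minimal sketch, not a substitute for a real load test: the function names, the default concurrency of 20, and the choice of median and 95th percentile are my assumptions.

```python
import time
import urllib.error
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from statistics import median


def timed_get(url, timeout=10):
    """Fetch url once; return (status, seconds). Status 0 means no response."""
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            resp.read()
            status = resp.status
    except urllib.error.HTTPError as err:
        status = err.code
    except OSError:  # covers URLError, timeouts and connection failures
        status = 0
    return status, time.perf_counter() - start


def run_load_test(urls, concurrency=20, fetch=timed_get):
    """Request every URL using `concurrency` worker threads."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(fetch, urls))


def summarize(results):
    """Summarize (status, seconds) pairs into counts and latency figures."""
    if not results:
        raise ValueError("no results to summarize")
    times = sorted(seconds for _, seconds in results)
    failures = sum(1 for status, _ in results if status == 0 or status >= 500)
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95_index = (95 * len(times) + 99) // 100 - 1
    return {
        "requests": len(results),
        "failures": failures,
        "median_ms": round(median(times) * 1000, 1),
        "p95_ms": round(times[p95_index] * 1000, 1),
    }
```

A typical call would look like `summarize(run_load_test(["https://staging.example.com/"] * 200))`, where the staging URL is a placeholder. Only run it against servers you own, and raise `concurrency` gradually.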

- Set up real-time server monitoring (CPU, RAM, response times) before migration

- Optimize web server configuration (cache, compression, keep-alive)

- Verify that the robots.txt file and XML sitemap are immediately accessible on the new server

- Prepare a quick rollback plan in case problems are detected
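
The robots.txt and sitemap check from the list above is easy to automate. Here is a minimal sketch, assuming the sitemap lives at `/sitemap.xml` (yours may be elsewhere) and using hypothetical helper names. It only confirms that each file answers with HTTP 200, not that its content is valid.

```python
import urllib.error
import urllib.request

# The sitemap path is an assumption; adjust it to your site.
CRITICAL_PATHS = ("/robots.txt", "/sitemap.xml")


def http_status(url, timeout=10):
    """Return the final HTTP status for url, or 0 if no response arrived."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code
    except OSError:  # DNS failure, refused connection, timeout
        return 0


def check_critical_files(base_url, get_status=http_status):
    """Map each critical URL to True when it answers with HTTP 200."""
    base = base_url.rstrip("/")
    return {base + path: get_status(base + path) == 200 for path in CRITICAL_PATHS}
```

Calling `check_critical_files("https://www.example.com")` right after the switch (the domain is a placeholder) returns a dictionary you can log or alert on.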

How should you monitor and react after migration?

During the first 72 hours, check the crawl stats report in Search Console daily. Verify that response times remain stable and low (ideally under 200ms).

If you notice an increase in server errors or degrading response times, intervene immediately. Google remembers these negative signals and will take longer to trust your infrastructure again.

- Monitor the 5xx error rate, which should remain close to zero

- Analyze server logs to identify pages that are slowing down the crawl

- Don't plan on adjusting crawl frequency by hand: Search Console's legacy crawl rate limiter was retired in January 2024, and Google sets the rate automatically from your server's responses

- Document crawl evolution to compare with subsequent weeks
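
The log analysis and threshold checks above could be sketched as follows. The input is a list of `(path, status, seconds)` tuples for Googlebot requests, produced by whatever log parsing you already have. The function names and the 1% ceiling for 5xx errors are my assumptions; the 200 ms target comes from the recommendation above.

```python
from collections import defaultdict


def slowest_paths(records, top=3):
    """Return the `top` paths with the highest average response time (ms)."""
    totals = defaultdict(lambda: [0, 0.0])  # path -> [hits, total seconds]
    for path, _status, seconds in records:
        totals[path][0] += 1
        totals[path][1] += seconds
    averages = [
        (path, round(1000 * total / hits, 1))
        for path, (hits, total) in totals.items()
    ]
    return sorted(averages, key=lambda item: item[1], reverse=True)[:top]


def crawl_health(records, max_avg_ms=200.0, max_5xx_rate=0.01):
    """Compare average latency and 5xx rate against the chosen thresholds."""
    records = list(records)
    if not records:
        return None
    avg_ms = 1000 * sum(seconds for _, _, seconds in records) / len(records)
    rate_5xx = sum(1 for _, status, _ in records if 500 <= status <= 599) / len(records)
    return {
        "avg_ms": round(avg_ms, 1),
        "rate_5xx": round(rate_5xx, 3),
        "ok": avg_ms <= max_avg_ms and rate_5xx <= max_5xx_rate,
    }


records = [
    ("/a", 200, 0.1),
    ("/a", 200, 0.1),
    ("/search", 200, 0.9),
    ("/b", 503, 0.1),
]
print(slowest_paths(records, top=1))
print(crawl_health(records))
```

Saving the `crawl_health` result once a day gives you the written record needed to compare one week with the next.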

What critical mistakes must absolutely be avoided?

The most common mistake is migrating to an undersized server for budgetary reasons. This creates a vicious cycle: the server struggles, Google slows down crawling, indexing stagnates, and SEO performance deteriorates.

Never block Google's crawl during or immediately after migration, even temporarily. Some webmasters think they're doing the right thing by limiting the load, but this considerably delays the process of recognizing the new infrastructure.
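
An accidental block often comes from a staging robots.txt that gets copied to production. A quick way to catch it is Python's standard `urllib.robotparser`, as in the sketch below. The function name and sample paths are hypothetical, and this parser may not mirror Google's own interpretation in every case (wildcard rules, for example), so treat it as a sanity check only.

```python
from urllib.robotparser import RobotFileParser


def googlebot_blocked_paths(robots_txt, paths, agent="Googlebot"):
    """Return the paths that the given robots.txt content forbids for `agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(agent, path)]


# A leftover staging file that blocks everything.
staging_robots = "User-agent: *\nDisallow: /\n"
print(googlebot_blocked_paths(staging_robots, ["/", "/products/"]))
# → ['/', '/products/']
```

An empty list for your key URLs means nothing in robots.txt is standing between Googlebot and the new server.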

In summary, a server migration consistently triggers a Google crawl adaptation period. This phase is normal and temporary, but its duration depends directly on the quality of your technical preparation.

A migration raises many technical challenges: server infrastructure, DNS configuration, cache management, response time optimization, log monitoring, and Search Console metrics analysis. These operations require specialized technical expertise and precise coordination between teams.

For high-stakes business migrations, support from a specialized SEO agency helps secure each step, anticipate technical problems, and significantly reduce the transition period. This structured approach minimizes traffic loss risks and optimizes the return to optimal crawling.
