Official statement

What you need to understand

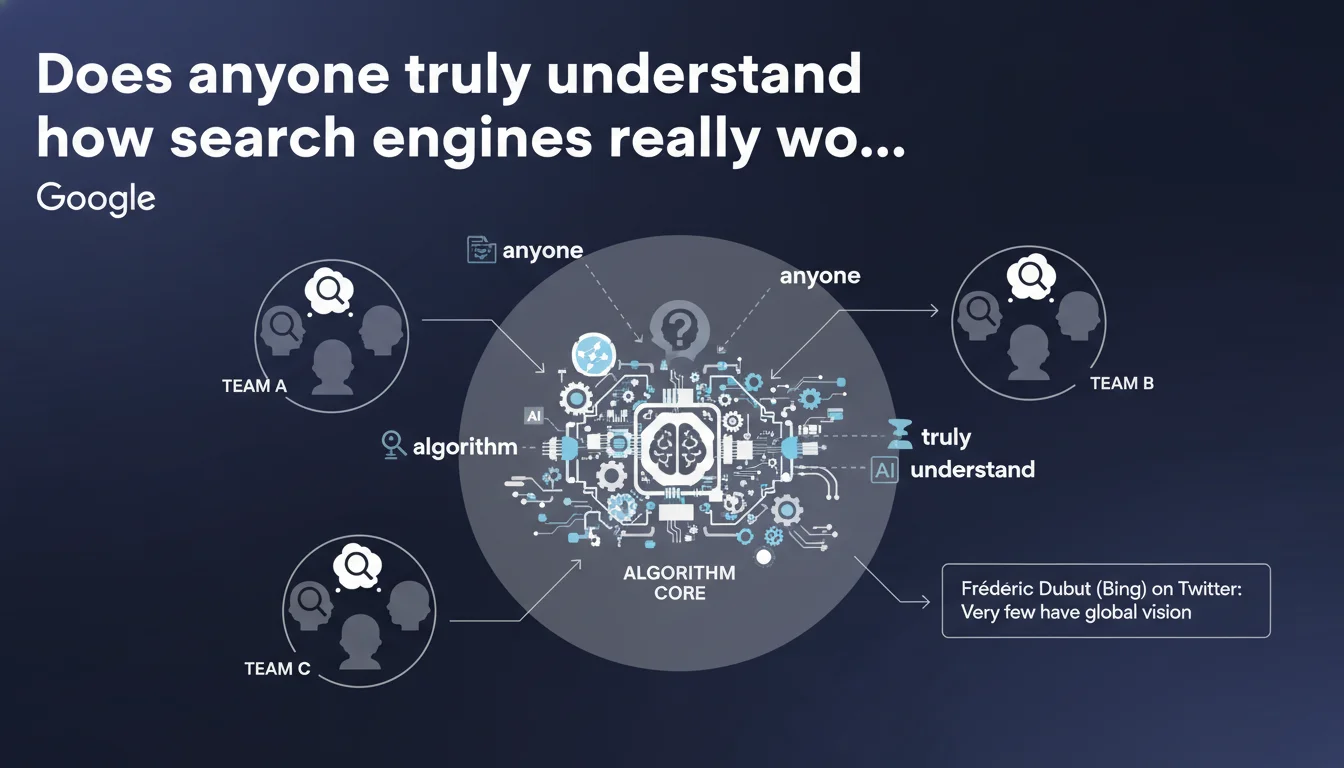

Why don't search engines' own teams understand the entire algorithm?

The statement from Bing's Frédéric Dubut reveals an overlooked reality: very few people on the teams have a global view of the relevance algorithm. The extreme complexity of modern systems explains why.

Each engineer works on specialized components (crawling, indexing, ranking, quality) without necessarily understanding how everything fits together. This compartmentalization is similar at Google, where hundreds of signals interact.

What impact does Machine Learning have on this complexity?

The integration of Machine Learning and AI has considerably increased the opacity of algorithms. Machine learning models create correlations that even their designers cannot precisely explain.

This "black box" makes exhaustive understanding impossible, even for internal teams. Results are observable and measurable, but the precise decision-making mechanisms remain obscure.

Why does this information matter for SEO professionals?

This revelation has major implications for our daily SEO practice. It confirms that it's unrealistic to try to completely decode Google's algorithm.

- No SEO expert can claim to know all the details of how Google works

- Google employees themselves have only a partial view of the overall system

- Testing and empirical observations remain the best approach to understanding how it works

- Bing's increased transparency offers valuable insights applicable to all search engines

- Fundamental principles (relevance, quality, user experience) remain universal

SEO Expert opinion

Does this statement align with what we're observing in the field?

Absolutely. In 15 years of SEO practice, I've observed that Google's behaviors are often contradictory between different verticals or query types. This is perfectly explained if different teams are optimizing different components.

Core Updates trigger unpredictable effects precisely because they modify the balance between hundreds of interconnected signals. Even Google cannot anticipate all the side effects of these major modifications.

What implications does this complexity have on official SEO guidance?

This reality explains why Google's official communications remain deliberately general and principle-focused. Spokespersons cannot detail what even their technical teams don't fully master.

Recommendations focus on universal concepts (quality content, user experience, E-E-A-T) because these are the only comprehensible points of convergence. Technical details escape exhaustive documentation.

Why is Bing's transparency valuable for understanding Google?

Bing's recent communications are indeed more detailed and technical than Google's. This increased transparency offers us a window into the general functioning of modern search engines.

Although Google and Bing differ in their implementations, they share common architectural principles. Bing's insights allow us to formulate valid hypotheses about Google, while keeping in mind the differences in market share and approaches.

Practical impact and recommendations

What SEO strategy should we adopt in the face of this algorithmic complexity?

The most effective approach consists of focusing on timeless fundamentals rather than trying to exploit algorithmic loopholes. Solid foundations withstand fluctuations and updates.

Favor a holistic and qualitative strategy: content answering search intents, optimal technical architecture, topical authority, and exemplary user experience. These pillars transcend algorithmic modifications.

How can we test and measure effectively without knowing all the parameters?

Adopt an observation-based scientific methodology. Document your modifications, measure impacts, and draw conclusions about your specific context.

Use advanced analysis tools (Search Console, analytics, crawlers) to identify correlations between your actions and results. Patterns emerge from data accumulation, even without understanding all underlying mechanisms.
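As a minimal sketch of this document-then-measure loop, the snippet below compares average daily clicks before and after a logged change. It assumes you have exported daily click counts (for example from Search Console) into a plain dictionary; the `Change` record, the `before_after` function, and the 14-day window are hypothetical choices made for illustration, not part of any official tool.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import mean


@dataclass
class Change:
    """One documented site modification (hypothetical change-log entry)."""
    day: date
    description: str


def before_after(daily_clicks: dict[date, int], change: Change, window: int = 14) -> dict:
    """Compare mean daily clicks in the `window` days before and after a change.

    The day of the change itself is excluded from both sides.
    """
    offsets = range(1, window + 1)
    before = [daily_clicks[d] for i in offsets
              if (d := change.day - timedelta(days=i)) in daily_clicks]
    after = [daily_clicks[d] for i in offsets
             if (d := change.day + timedelta(days=i)) in daily_clicks]
    if not before or not after:
        raise ValueError("not enough data around the change date")

    mean_before, mean_after = mean(before), mean(after)
    return {
        "change": change.description,
        "mean_before": mean_before,
        "mean_after": mean_after,
        # Relative change is undefined when the baseline is zero.
        "relative_change": (mean_after - mean_before) / mean_before if mean_before else None,
    }
```

A difference between the two windows is only a correlation: seasonality, a competitor's move, or an update rolled out in the same period can produce the same pattern, so it is worth repeating the comparison across several changes before drawing conclusions.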

What mistakes should we avoid in this context of uncertainty?

The main mistake would be blindly following "miracle recipes" or supposedly foolproof techniques. Each site evolves in a unique context with its own variables.

- Don't try to manipulate the algorithm with obscure techniques that can backfire

- Avoid optimizing for a single signal at the expense of the whole (the classic over-optimization trap)

- Don't blindly copy what works for competitors without understanding the context

- Reject promises of guaranteed SEO results: nobody controls the algorithm

- Don't neglect fundamentals in favor of premature advanced tactics

- Avoid taking official communications as absolute truths: they are deliberately incomplete

- Don't underestimate the importance of continuous testing and permanent adaptation

- Don't operate without a rigorous monitoring system that quickly detects the impact of updates
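The monitoring mentioned in the last point can start very simply. The sketch below flags days whose clicks deviate sharply from a trailing baseline; the function name `flag_anomalies`, the 28-day window, and the threshold of 3 standard deviations are assumptions chosen for illustration, and the input is assumed to be a plain list of daily click counts you have already exported.

```python
from statistics import mean, stdev


def flag_anomalies(clicks: list[float], window: int = 28, threshold: float = 3.0) -> list[int]:
    """Return indices of days whose value deviates from the mean of the
    preceding `window` days by more than `threshold` standard deviations."""
    flagged = []
    for i in range(window, len(clicks)):
        baseline = clicks[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: treat any change at all as a deviation.
            if clicks[i] != mu:
                flagged.append(i)
            continue
        if abs(clicks[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged
```

A flagged day is a prompt to investigate, not a diagnosis: comparing the date against announced updates and your own change log is what tells you whether the cause is external or internal.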
