Official statement

What you need to understand

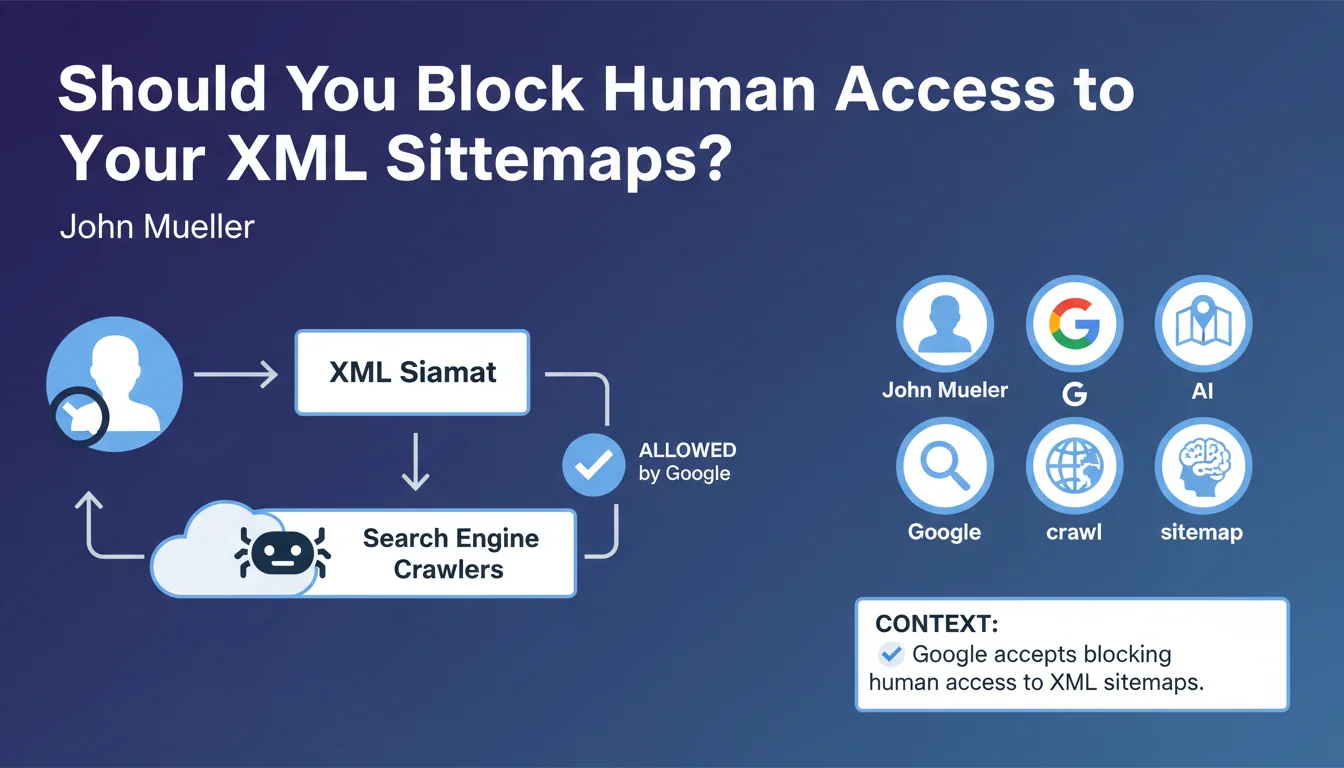

Google has just clarified a little-known practice: it is perfectly acceptable to make your XML sitemap files accessible only to search engine robots while blocking them for human visitors.

In practical terms, this means you can configure your server to deny direct access to your sitemap.xml via a browser, while still allowing crawlers like Googlebot to read and use it normally.
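
As a rough illustration of that kind of rule, the Python sketch below decides whether a request for the sitemap should be served. The function names, the allow-list, and the choice of 200 versus 403 are assumptions made for this example, not something stated by Google, and in production the equivalent logic would usually live in the Apache or Nginx configuration. Because a User-Agent header can be set to anything, the sketch also applies the reverse-then-forward DNS check that Google documents for verifying Googlebot.

```python
import socket

# Assumed allow-list: user-agent token -> hostname suffixes that the
# crawler's IP addresses are expected to resolve to.
CRAWLER_DOMAINS = {
    "googlebot": (".googlebot.com", ".google.com"),
    "bingbot": (".search.msn.com",),
}


def claimed_crawler(user_agent):
    """Return the allow-listed token found in the User-Agent header, or None."""
    ua = user_agent.lower()
    for token in CRAWLER_DOMAINS:
        if token in ua:
            return token
    return None


def ip_matches_crawler(ip, token,
                       reverse=socket.gethostbyaddr,
                       forward=socket.gethostbyname_ex):
    """Reverse DNS on the IP, then forward DNS on the hostname it returns.

    The lookup functions are parameters so they can be replaced in tests.
    Note: gethostbyname_ex is IPv4-only, so this sketch ignores IPv6.
    """
    try:
        hostname = reverse(ip)[0]
        if not hostname.lower().endswith(CRAWLER_DOMAINS[token]):
            return False
        # The hostname must resolve back to the same IP address.
        return ip in forward(hostname)[2]
    except OSError:  # socket.herror and socket.gaierror are subclasses
        return False


def sitemap_status(user_agent, ip, **lookups):
    """Return the HTTP status to send for a sitemap request (200 or 403)."""
    token = claimed_crawler(user_agent)
    if token and ip_matches_crawler(ip, token, **lookups):
        return 200
    return 403
```

A browser request, or a request that merely claims to be Googlebot from an unrelated IP address, gets 403 here, while a request whose IP resolves to a crawler hostname and back gets 200. DNS lookups are slow, so a real deployment would cache the results.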

This statement raises a legitimate question: why would you want to hide a technical file that only contains public URLs? Several motivations may explain this choice:

- Security through obscurity: limiting exposure of the complete site structure to competitors or malicious scrapers

- Preventing mass scraping: making it harder to automatically extract all site URLs in a single request

- Hiding sensitive sections: not advertising the existence of certain strategic categories or pages

- Internal compliance: adhering to strict security policies in certain organizations

SEO expert opinion

From a pragmatic perspective, this practice remains fairly marginal in the SEO industry. Most sites leave their sitemaps freely accessible without encountering any particular problems.

The real effectiveness of this approach is limited: blocking the sitemap does not prevent anyone from crawling the site itself. A determined competitor can still explore your site page by page, use backlink analysis tools, or consult public archives. The sitemap only makes that work easier, so hiding it raises the cost of listing your URLs without making them secret.

However, this method can be justified in specific contexts: e-commerce sites with thousands of products where pricing structure is sensitive, platforms with high-value content, or environments where regulatory compliance requires access restrictions.

Practical impact and recommendations

If you decide to restrict access to your sitemaps, here are the concrete actions to take:

- Identify the user-agents of search engines you want to authorize (Googlebot, Bingbot, etc.)

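- Configure your server (Apache, Nginx) to filter requests for the sitemap by user-agent and block standard browsers. A user-agent string is trivial to spoof, so if the restriction has to hold against a determined visitor, combine it with a reverse DNS check or the IP ranges published by the search engines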

- Check in Google Search Console, after every configuration change, that your sitemaps are still fetched and processed correctly

- Keep the Sitemap declaration in robots.txt: even if the file itself is protected, stating its location tells crawlers where to find it

- Monitor your server logs to detect any access issues from legitimate bots

- Document your configuration to prevent future technical changes from breaking this restriction
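
The log-monitoring step above can be sketched in a few lines of Python. This is a minimal example under stated assumptions: the access log uses the Combined Log Format common to Apache and Nginx, the sitemap lives at `/sitemap.xml`, and the bot tokens are the two crawlers named earlier. The function name and constants are hypothetical and should be adapted to your own setup.

```python
import re

# Combined Log Format: ip, identity, user, [time], "request", status,
# size, "referer", "user-agent".
LOG_LINE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

BOT_TOKENS = ("googlebot", "bingbot")  # assumed allow-list
SITEMAP_PATH = "/sitemap.xml"          # assumed sitemap location


def blocked_bot_hits(lines):
    """Yield (ip, status, agent) for bot sitemap requests that got a 4xx/5xx."""
    for line in lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        agent = match["agent"].lower()
        is_bot = any(token in agent for token in BOT_TOKENS)
        status = int(match["status"])
        if is_bot and match["path"] == SITEMAP_PATH and status >= 400:
            yield match["ip"], status, match["agent"]
```

Running it over the lines of an access log, for example `for hit in blocked_bot_hits(open("access.log")): print(hit)`, lists the requests where a declared search engine bot was refused the sitemap, which is usually the first sign that a filtering rule is too strict. The file name here is only a placeholder.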

For the majority of sites, keeping sitemaps public remains the best option: it's simpler to maintain, less technically risky, and the security advantage is marginal.
