Official statement

What you need to understand

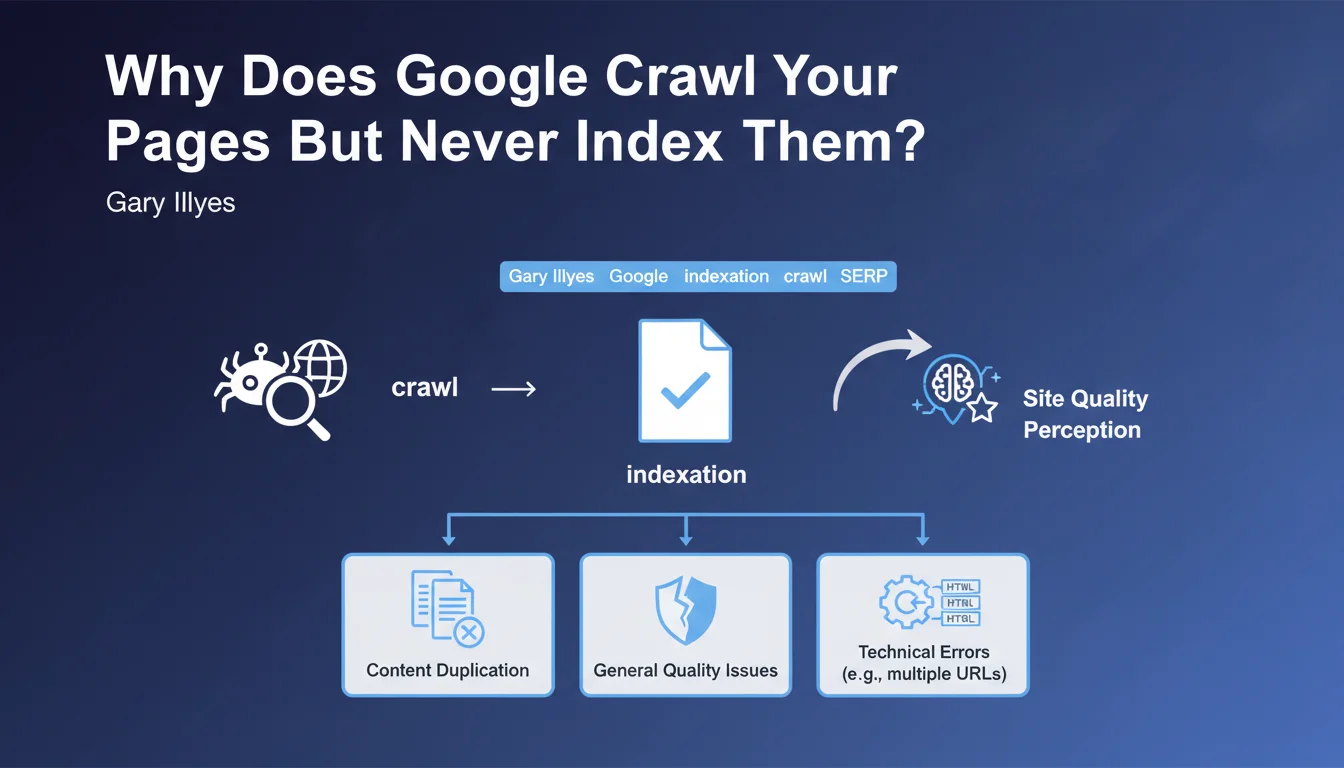

Crawling and indexing are two distinct stages. Google can crawl your pages without any problem and still decline to add them to its index. This situation, frustrating for SEO practitioners, affects more pages than one might think.

Gary Illyes clarified the main reasons for this phenomenon during SERP Conf 2024. This is not a malfunction, but a conscious decision by Google not to include certain pages in its search results.

This decision is based on several criteria that Google evaluates after crawling:

- Content duplication: pages too similar to other content on the site or across the web

- General quality issues: content deemed insufficient, superficial, or of low value

- Technical errors: multiple URLs pointing to the same content (URL parameters, session IDs, etc.)

- Overall site perception: the general quality of the domain influences the indexation of individual pages

This last point is particularly important: a site with numerous low-quality pages will see its new pages scrutinized more severely by Google before indexation.
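To make the "multiple URLs pointing to the same content" case from the list above more concrete, here is a minimal Python sketch that groups crawled URLs differing only in session or tracking parameters. The parameter names in `IGNORED_PARAMS` and `IGNORED_PREFIXES` are assumptions for a hypothetical site, and this is only an illustration of the idea, not a description of how Google detects duplicates.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: parameters that never change the page content on this
# hypothetical site. Adjust the names to your own URL scheme.
IGNORED_PARAMS = {"sessionid", "sid", "ref"}
IGNORED_PREFIXES = ("utm_",)


def normalize(url: str) -> str:
    """Return the URL without ignored parameters, with the rest sorted."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
        and not key.lower().startswith(IGNORED_PREFIXES)
    ]
    query = urlencode(sorted(kept))
    path = parts.path or "/"
    # Scheme and host are case-insensitive; the fragment is dropped.
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, query, ""))


def group_duplicates(urls):
    """Map each normalized URL to the variants that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    # Keep only the groups that contain more than one variant.
    return {key: variants for key, variants in groups.items() if len(variants) > 1}


if __name__ == "__main__":
    crawled = [
        "https://example.com/shoes?color=red",
        "https://example.com/shoes?utm_source=news&color=red&sessionid=abc",
        "https://example.com/boots",
    ]
    for canonical, variants in group_duplicates(crawled).items():
        print(canonical, "<-", variants)
```

Run against a crawl export, each group with more than one variant is a candidate for a canonical tag or a redirect.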

SEO Expert opinion

This statement confirms what we observe daily in the field. Google has become much more selective in its indexation, particularly since 2022-2023. The search engine no longer wishes to systematically index everything it crawls.

A crucial point often underestimated: a site's quality affects the indexation of all its pages. If your domain contains hundreds of weak pages (archives, unoptimized tags, automated pages), even your premium content can be penalized by association. This is a quality contamination effect.

Also note that Google can change its mind: a page not indexed today may be indexed later if the site's perceived quality improves, or conversely be deindexed if it deteriorates.

Practical impact and recommendations

- Audit your crawled but not indexed pages in Google Search Console (the "Pages" report, formerly "Coverage", under the status "Crawled - currently not indexed") to measure how many URLs are affected

- Ruthlessly eliminate low-quality content: delete or consolidate thin, outdated, or redundant pages

- Resolve duplicate URLs: use canonical tags, handle URL parameters consistently, block unnecessary facets in robots.txt

- Invest in the depth of your priority content: substantially enrich the pages you really want indexed

- Optimize your information architecture: reduce the total number of pages, prioritize quality over quantity

- Monitor your domain's overall perception: Core Web Vitals, user experience, E-E-A-T signals

- Use the URL Inspection tool to request re-indexing of important pages once they have been improved (this is a request, not a guarantee of indexation)

- Consolidate your relevance signals: coherent internal linking, reinforced semantic context
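As an illustration of the robots.txt recommendation above, the following Python sketch checks which URLs a set of rules would block. The `/filter/` and `/search` paths and the sample URLs are hypothetical. The standard-library parser used here matches plain path prefixes, so the sketch deliberately avoids wildcard patterns; treat it as a quick sanity check rather than a faithful model of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules; the /filter/ and /search paths are
# assumptions made for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /filter/
Disallow: /search
"""


def blocked_urls(robots_txt: str, urls, user_agent: str = "Googlebot"):
    """Return the URLs that the given rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    sample = [
        "https://example.com/shoes/",
        "https://example.com/filter/color-red",
        "https://example.com/search?q=boots",
    ]
    for url in blocked_urls(ROBOTS_TXT, sample):
        print("blocked:", url)
```

Running the check before deploying a new robots.txt helps confirm that facet URLs are blocked while priority pages stay crawlable.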

These optimizations touch on technical, editorial, and strategic dimensions that can be complex to orchestrate at the same time. Improving indexation requires a comprehensive and methodical approach that combines an in-depth technical audit, an architectural redesign, and content enhancement. For medium to large sites, support from a specialized SEO agency can help you establish a precise diagnosis and deploy a tailored action plan, particularly when indexation issues significantly affect your organic visibility.
