Official statement

What you need to understand

What does this Google statement about URLs actually mean?

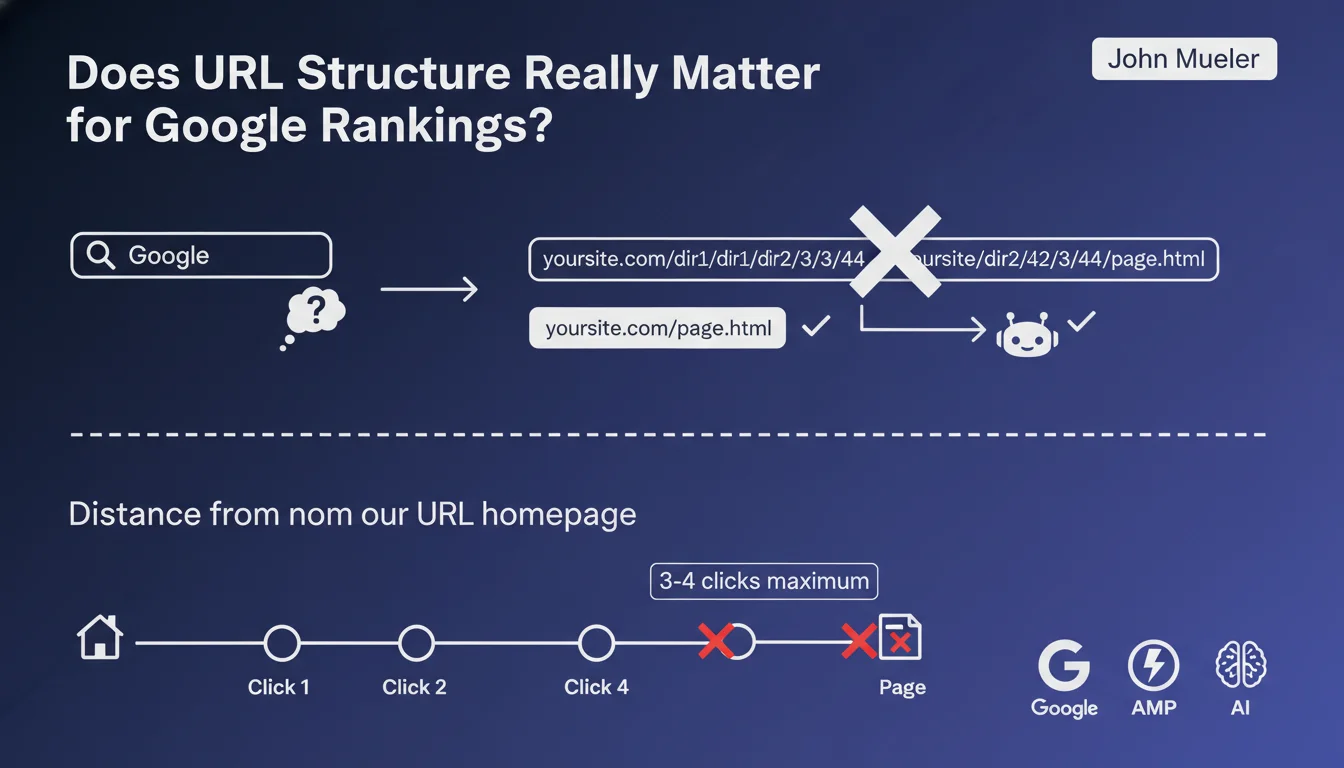

John Mueller states clearly that the depth of directories in a URL is not a ranking criterion for Google. A URL like /cat1/subcat2/subcat3/page.html is therefore not penalized compared with /page.html just because it contains more directories.

What really matters is the number of clicks required from the homepage to reach the page in question. Google favors content that is easily accessible within the site's structure.

What's the difference between URL depth and crawl depth?

URL depth is the number of directory levels in the address, which roughly corresponds to the number of slashes (/) in the path. For Google, this is purely cosmetic.

Crawl depth is the number of clicks needed to reach the page from the homepage. This is the criterion that actually affects SEO and how Googlebot crawls the site.
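To make the distinction concrete, here is a minimal Python sketch that computes both metrics for two pages. It assumes URL depth is measured as the number of slashes in the path, and it computes click depth with a breadth-first search over a small, hypothetical internal-link graph (`LINKS`); apart from the two URLs in Mueller's example, the page paths are invented for illustration.

```python
from collections import deque
from urllib.parse import urlparse


def url_depth(url: str) -> int:
    """URL depth: number of slashes in the path (cosmetic for Google)."""
    return urlparse(url).path.count("/")


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Click depth: fewest clicks from `home`, found by breadth-first search."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit in BFS = shortest click path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical site: the long URL is linked from the homepage,
# while the short URL is only reachable through three archive pages.
LINKS = {
    "/": ["/cat1/subcat2/subcat3/page.html", "/archive/"],
    "/archive/": ["/archive/2/"],
    "/archive/2/": ["/archive/3/"],
    "/archive/3/": ["/page.html"],
}

if __name__ == "__main__":
    depths = click_depths(LINKS)
    for url in ("/cat1/subcat2/subcat3/page.html", "/page.html"):
        print(f"{url}: url_depth={url_depth(url)}, click_depth={depths[url]}")
```

Running it reports a URL depth of 4 and a click depth of 1 for the long URL, and the opposite for /page.html: the short address is the one that is actually buried.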

What rule should you follow regarding click depth?

The usual recommendation is to keep every important page no more than 3-4 clicks from the homepage. Beyond that, Google tends to treat the content as a lower priority.

- URL structure (number of /) does not affect crawling

- The number of clicks from the homepage is the determining criterion

- The recommended limit is 3-4 clicks maximum

- Pages buried deep in the click path risk being crawled less often and ranking less well
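Applying the 3-4 click rule is mostly a matter of filtering a depth map. The sketch below assumes you already have each page's click depth (the `DEPTHS` values are hypothetical, as if exported from a crawler), uses 3 as the threshold, and prints a depth distribution plus the pages that exceed it.

```python
from collections import Counter

# Hypothetical click depths, e.g. from a crawler export or a BFS over internal links.
DEPTHS = {
    "/": 0,
    "/shirts/": 1,
    "/shirts/oxford-blue/": 2,
    "/blog/": 1,
    "/blog/page/5/": 5,
    "/blog/old-guide/": 6,
}

MAX_CLICKS = 3  # assumption: the stricter end of the 3-4 click recommendation


def depth_report(depths: dict[str, int], max_clicks: int = MAX_CLICKS) -> tuple[Counter, list[str]]:
    """Return how many pages sit at each depth, and the pages deeper than max_clicks."""
    histogram = Counter(depths.values())
    too_deep = sorted(url for url, depth in depths.items() if depth > max_clicks)
    return histogram, too_deep


if __name__ == "__main__":
    histogram, too_deep = depth_report(DEPTHS)
    for depth in sorted(histogram):
        print(f"depth {depth}: {histogram[depth]} page(s)")
    print("Beyond", MAX_CLICKS, "clicks:", too_deep)
```

Passing `max_clicks=4` applies the looser end of the rule; in practice you would also narrow the flagged list down to the pages you consider strategic.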

- Internal linking architecture takes precedence over URL structure

SEO Expert opinion

Is this statement consistent with real-world observations?

Absolutely, and this statement from Mueller confirms what we've been observing for years. Many high-performing sites use complex URLs with multiple directories without any negative impact on their rankings.

The reverse is also true: short URLs buried 6 or 7 clicks deep perform poorly, however elegant they look. Internal linking and accessibility remain the real levers.

What important nuances should be added to this rule?

Caution: while URL depth doesn't directly affect rankings, URL readability does influence user experience. Readable, understandable URLs can improve click-through rates in the SERPs.

Additionally, a short, descriptive URL is easier to share on social media and to remember. There are therefore UX and marketing reasons to favor simple URLs, even though URL length is not a direct ranking factor.

In which cases does this rule require special attention?

E-commerce sites with many nested categories must be vigilant. Even if the URL contains 5 levels, ensure that products remain accessible within 3 clicks via the menu or facets.

News sites and large blogs must also optimize their pagination and tag systems so that older content stays accessible as articles accumulate.

Practical impact and recommendations

How can you audit and correct your site's crawl depth?

Use tools like Screaming Frog or Sitebulb to analyze the click depth of all your pages. Identify those located more than 3-4 clicks from the homepage.

For important pages that sit too deep in the architecture, create shortcuts through internal linking: add them to the menu or footer, or create thematic hub pages that link to them directly.
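The effect of a hub page can be checked before publishing by recomputing click depths on a copy of the link graph. This Python sketch uses a hypothetical category chain based on the /products/clothing/men/shirts/ example; the product URL and the /guides/shirts/ hub are invented, and `add_links` is an illustrative helper, not part of any crawler's API.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Fewest clicks from the homepage to each page (breadth-first search)."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def add_links(links: dict[str, list[str]], source: str, targets: list[str]) -> dict[str, list[str]]:
    """Return a copy of the link graph with extra internal links from `source`."""
    updated = {page: list(out) for page, out in links.items()}
    updated.setdefault(source, []).extend(targets)
    return updated


PRODUCT = "/products/clothing/men/shirts/oxford/"  # hypothetical product page

# Hypothetical site where the product is only reachable through the category chain.
LINKS = {
    "/": ["/products/"],
    "/products/": ["/products/clothing/"],
    "/products/clothing/": ["/products/clothing/men/"],
    "/products/clothing/men/": ["/products/clothing/men/shirts/"],
    "/products/clothing/men/shirts/": [PRODUCT],
}

# Shortcut: a thematic hub linked from the homepage that links straight to the product.
WITH_HUB = add_links(add_links(LINKS, "/", ["/guides/shirts/"]), "/guides/shirts/", [PRODUCT])

if __name__ == "__main__":
    print("before:", click_depths(LINKS)[PRODUCT])
    print("after: ", click_depths(WITH_HUB)[PRODUCT])
```

The product URL itself is unchanged; only its click depth drops, from 5 to 2, which is exactly the lever the statement points to.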

What common mistakes should you absolutely avoid?

Don't sacrifice a logical architecture just to shorten URLs. A structure like /products/clothing/men/shirts/ remains clearer than a cryptic /p12345/, even if both work technically.

Also avoid excessive pagination that creates artificial depth. Prefer filter systems or progressive loading for large lists of products or articles.
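The depth cost of pagination can be estimated with simple arithmetic. The Python sketch below assumes the listing's first page sits one click from the homepage and compares two idealized cases: "next page" links only, versus a first page that links directly to every other page. The function names are hypothetical, and real sites usually fall somewhere in between.

```python
def page_of(position: int, per_page: int) -> int:
    """1-based listing page that holds the item at 1-based `position`."""
    return (position - 1) // per_page + 1


def depth_next_links_only(position: int, per_page: int, listing_depth: int = 1) -> int:
    """Each page links only to the next: page p costs p - 1 extra clicks, plus 1 click to the item."""
    return listing_depth + (page_of(position, per_page) - 1) + 1


def depth_all_pages_linked(position: int, per_page: int, listing_depth: int = 1) -> int:
    """Page 1 links to every other page: at most 1 extra click, plus 1 click to the item."""
    extra = 0 if page_of(position, per_page) == 1 else 1
    return listing_depth + extra + 1


if __name__ == "__main__":
    # Hypothetical archive: 500 articles, 10 per page, oldest article in position 500.
    print(depth_next_links_only(500, 10))   # 51
    print(depth_all_pages_linked(500, 10))  # 3
```

With 500 articles at 10 per page, the oldest article ends up 51 clicks deep with next-only links, versus 3 when every page is linked from the first one, which is why large archives struggle to meet the 3-4 click rule without filters, tag pages or hubs.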

- Audit click depth with a professional SEO crawler

- Identify all strategic pages beyond 3-4 clicks

- Optimize internal linking to reduce this depth

- Integrate important pages into navigation menus

- Create hub pages or categories to facilitate access

- Regularly monitor coverage in Google Search Console

- Prioritize UX in your URLs even if it's not a direct ranking factor

- Document your architecture to maintain consistency during updates
