Official statement

What you need to understand

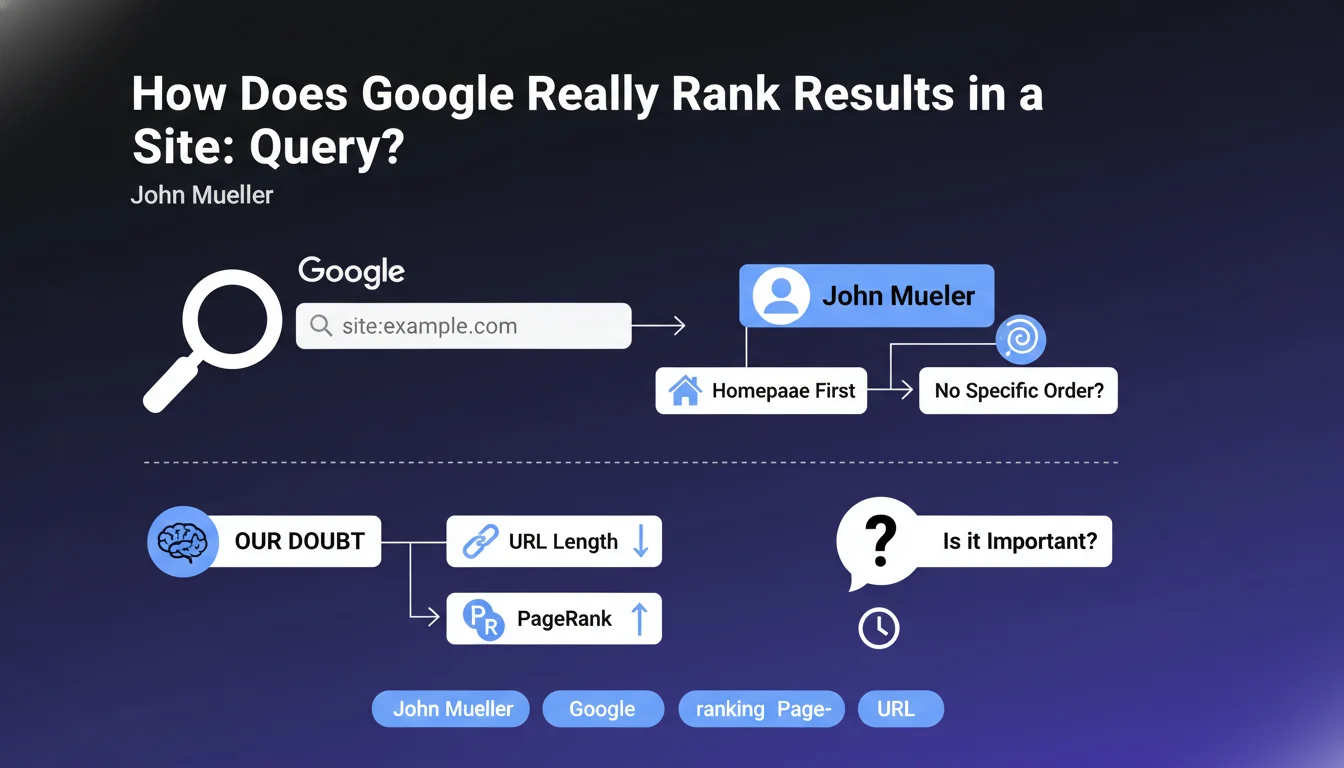

What is the site: operator and why is it crucial in SEO?

The site: operator is an advanced search command that allows you to query Google about the pages it has indexed for a specific domain. By typing "site:mysite.com" in the search bar, you get an overview of the URLs present in the engine's index.
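To make the syntax concrete, here is a small Python helper that builds the text of a site: query. It also supports the path-scoped form (site:example.com/blog), which limits the check to one section of a site, and it produces a matching search URL to open in a browser. The function names `site_query` and `search_url` are hypothetical, and `example.com` is a placeholder domain.

```python
from urllib.parse import urlencode


def site_query(domain: str, path: str = "") -> str:
    """Build a site: query, optionally restricted to a path prefix."""
    # Drop any scheme and trailing slash: "https://example.com/" -> "example.com".
    host = domain.split("://", 1)[-1].rstrip("/")
    prefix = path.strip("/")
    return f"site:{host}/{prefix}" if prefix else f"site:{host}"


def search_url(query: str) -> str:
    """Return a Google search URL for a query, with the query percent-encoded."""
    return "https://www.google.com/search?" + urlencode({"q": query})


print(site_query("https://example.com/"))    # site:example.com
print(site_query("example.com", "/blog/"))   # site:example.com/blog
print(search_url(site_query("example.com")))  # https://www.google.com/search?q=site%3Aexample.com
```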

This tool is fundamental for SEO professionals because it allows you to quickly diagnose indexation problems, detect duplicate content, or verify whether certain strategic pages are indeed present in Google's index.

Why is Google confirming the maintenance of this feature?

Gary Illyes reassured the SEO community by stating that Google is not considering removing this query syntax. The statement likely responds to professionals' concerns about the declining quality of the results.

He even mentioned that Googlers themselves would resist any removal, which suggests the tool also matters inside the company for monitoring and debugging.

What's the current problem with the returned results?

For several months, the results returned by the site: operator have become less reliable and sometimes erratic. Professionals report inconsistencies, missing pages, and results that change from one search to the next.

This degradation may be linked to the anti-spam mechanisms Google has put in place, which appear to reduce, as a side effect, the accuracy of a diagnostic tool that remains essential for professional audits.

- The site: operator remains available according to Google and will not be removed

- Results have become less accurate and reliable in recent months

- This degradation complicates the professional audit work of websites

- The probable cause is Google's anti-spam filters

- Despite everything, this tool remains indispensable for an initial indexation diagnosis

SEO Expert opinion

Is this statement consistent with the reality observed in the field?

There is an apparent contradiction between Gary Illyes' reassuring statement and the daily experience of SEO professionals. Yes, the site: operator is maintained, but its operational reliability has considerably degraded.

This situation creates a paradox: Google keeps the tool but makes it less usable. For professionals, this poses a real problem because strategic decisions made based on erroneous data can have significant consequences on SEO performance.

What nuances should be brought to this announcement?

It's important to remember that Google has always indicated that the site: operator only provides an approximate sample of indexation, never an exhaustive list. This limitation was already known, but the current situation goes beyond this simple approximation.

The excessive variability of results and the obvious bugs observed today go well beyond the normal limits of sampling. This is no longer mere imprecision but an instability that calls the tool's very usefulness into question.

Why would Google potentially degrade this tool to fight spam?

The anti-spam fight hypothesis is plausible because spammers used the site: operator to quickly assess the effectiveness of their index pollution techniques. By making results less reliable, Google complicates their work.

However, this approach also penalizes legitimate professionals who need accurate diagnostic tools. Google seems to have sacrificed professional utility in favor of fighting abuse, a questionable decision from a methodological point of view.

Practical impact and recommendations

How can you audit your site's indexation without relying solely on the site: operator?

Google Search Console should become your primary source of truth for indexation. The "Page indexing" report (formerly "Coverage") and the URL Inspection tool provide official data taken directly from Google's own systems.
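The URL Inspection check can also be scripted through the Search Console URL Inspection API. The Python sketch below assumes you have already built an authorized client with google-api-python-client (`build("searchconsole", "v1", credentials=...)`) for a property you own. `inspect_url` and `summarize` are hypothetical helper names. The API is subject to daily quotas, so it suits spot checks on strategic pages rather than full-site exports.

```python
from typing import Any


def inspect_url(service: Any, site_url: str, page_url: str) -> dict:
    """Ask Search Console how Google sees one page.

    `service` is assumed to be a searchconsole v1 client from google-api-python-client.
    `site_url` must match the property exactly, e.g. "https://example.com/" or
    "sc-domain:example.com".
    """
    body = {"inspectionUrl": page_url, "siteUrl": site_url}
    return service.urlInspection().index().inspect(body=body).execute()


def summarize(response: dict) -> tuple[str, str]:
    """Extract the index verdict and coverage state from an inspection response."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    # Fall back to explicit placeholders when a field is absent.
    return status.get("verdict", "UNKNOWN"), status.get("coverageState", "unknown")
```

In practice you would call `summarize(inspect_url(service, "sc-domain:example.com", "https://example.com/page"))` for each strategic URL and record the pair alongside the date of the check.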

Complement this analysis with professional crawl tools like Screaming Frog or Oncrawl, which will allow you to compare your XML sitemap with what Google actually indexes. Server logs are also valuable for identifying pages visited by Googlebot.
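The sitemap-versus-index comparison boils down to set differences. The Python sketch below assumes a standard `<urlset>` sitemap (sitemap index files are not handled) and a set of indexed URLs you have exported yourself, for example from Search Console. The helper names and bucket labels are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Return every <loc> URL listed in a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def compare(sitemap: set[str], indexed: set[str]) -> dict[str, set[str]]:
    """Split URLs by whether they appear in the sitemap, the index, or both."""
    return {
        "in_sitemap_not_indexed": sitemap - indexed,
        "indexed_not_in_sitemap": indexed - sitemap,
        "in_both": sitemap & indexed,
    }
```

The first bucket lists pages you want indexed that Google does not report. The second often reveals parameter URLs, legacy pages, or duplicates worth investigating.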

What mistakes should you avoid when analyzing indexation?

Never make important strategic decisions based solely on site: operator results. A fluctuating number of results doesn't necessarily mean a real indexation problem.

Also avoid panicking if certain pages don't appear in site: results. First check in Search Console whether these pages are actually indexed before undertaking corrective actions that could be counterproductive.

What methodology should you adopt for a reliable indexation audit?

Implement a systematic multi-source approach for your audits. Cross-reference at least three data sources: Search Console, server logs, and a technical crawl of your site.
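Cross-referencing the three sources can be as simple as labelling each URL by where it appears. The Python sketch below assumes three sets of URLs: those Search Console reports as indexed, those Googlebot requested in your logs, and those your own crawler found. The label names are hypothetical and meant to be adapted to your audit vocabulary.

```python
from collections import Counter


def classify(url: str, indexed: set[str], visited: set[str], crawled: set[str]) -> str:
    """Label one URL according to the sources it appears in (illustrative labels)."""
    if url in indexed:
        # Indexed URLs your own crawl cannot reach may be orphans or legacy pages.
        return "indexed" if url in crawled else "indexed_not_linked"
    if url in visited:
        # Googlebot fetched the URL, but Search Console does not report it as indexed.
        return "visited_not_indexed"
    # Only your crawler found it: Googlebot has not requested it in the log window.
    return "not_visited"


def triangulate(indexed: set[str], visited: set[str], crawled: set[str]) -> dict[str, str]:
    """Classify every URL seen in at least one of the three sources."""
    return {u: classify(u, indexed, visited, crawled) for u in indexed | visited | crawled}


def summary(labels: dict[str, str]) -> Counter:
    """Count URLs per label, e.g. to compare buckets from one audit to the next."""
    return Counter(labels.values())
```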

Document your observations over time to identify real trends rather than one-off variations. Weekly or monthly tracking will give you a much more reliable view than a one-time analysis.
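One way to separate real trends from one-off variations is to act only on a sustained decline. The Python sketch below assumes a list of indexed-page counts recorded once per week, oldest first, for example from Search Console. The three-week window and 5% threshold are arbitrary illustrative defaults, not Google guidance.

```python
def week_over_week(counts: list[int]) -> list[int]:
    """Differences between consecutive weekly snapshots."""
    return [b - a for a, b in zip(counts, counts[1:])]


def sustained_decline(counts: list[int], weeks: int = 3, min_drop_pct: float = 5.0) -> bool:
    """True only if the count fell every week over the last `weeks` weeks
    and the total drop across that window is at least `min_drop_pct` percent."""
    if len(counts) < weeks + 1:
        return False  # not enough history to call it a trend
    window = counts[-(weeks + 1):]
    if any(delta >= 0 for delta in week_over_week(window)):
        return False  # at least one stable or rising week: treat as noise
    start, end = window[0], window[-1]
    if start == 0:
        return False
    return (start - end) / start * 100 >= min_drop_pct
```

A single bad week never triggers the check, which matches the advice not to react to isolated fluctuations.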

- Prioritize Google Search Console as the primary source of indexation data

- Use the site: operator only for a quick overview, never for strategic decisions

- Set up a regular crawl of your site with professional tools

- Analyze server logs to understand Googlebot's actual behavior

- Systematically cross-reference multiple data sources before any conclusion

- Document the evolution of indexation over time rather than relying on a one-time snapshot

- Train your teams on the current limitations of the site: operator

- Avoid panicking over site: result fluctuations without prior verification
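The checklist above recommends analyzing server logs. The following Python sketch assumes access logs in the common Apache/Nginx "combined" format. It counts requests per path whose user agent claims to be Googlebot. Because the user-agent string can be spoofed, it also includes an optional reverse-then-forward DNS check, the verification method Google documents for Googlebot. The regex and function names are illustrative, and the forward lookup used here covers IPv4 only.

```python
import re
import socket
from collections import Counter

# Combined log format: ip ident user [time] "METHOD path proto" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>[A-Z]+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def googlebot_hits(lines) -> Counter:
    """Count requests per path whose user agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("ua"):
            hits[match.group("path")] += 1
    return hits


def is_verified_googlebot(ip: str) -> bool:
    """Reverse DNS must end in googlebot.com or google.com and resolve back to the same IP."""
    try:
        host = socket.gethostbyaddr(ip)[0]
        if not host.endswith((".googlebot.com", ".google.com")):
            return False
        return ip in socket.gethostbyname_ex(host)[2]
    except OSError:  # covers socket.herror and socket.gaierror
        return False
```

The DNS check performs network lookups, so run it once per distinct IP rather than once per log line.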
