Official statement

What you need to understand

Why does this question keep coming up among webmasters?

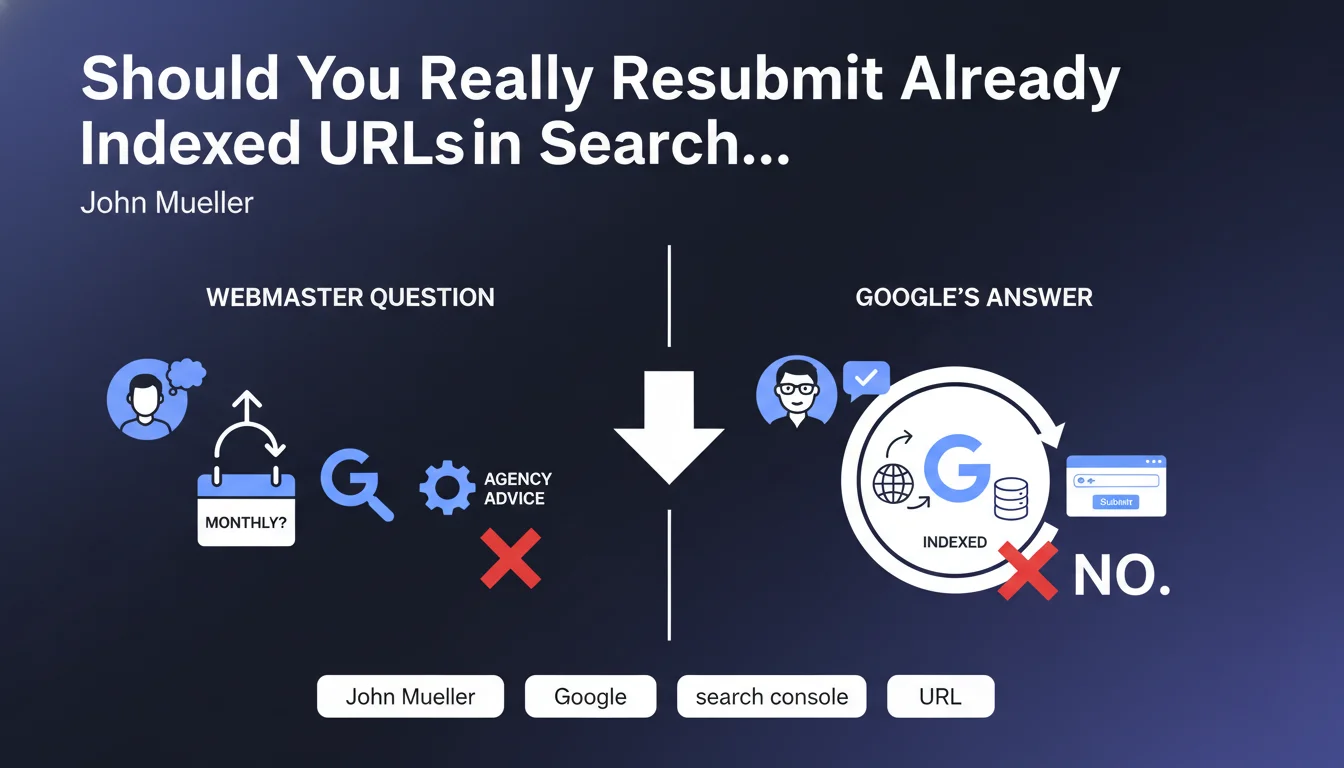

Many unscrupulous SEO agencies still recommend monthly resubmission of already indexed URLs via Search Console. This practice is presented as a necessary optimization action to "maintain" indexation.

Google's John Mueller has stated that this approach is useless for pages already present in Google's index. Once a URL is indexed, Googlebot returns to recrawl it on a schedule that Google's systems determine on their own.

What's the difference between indexation and reindexation?

Initial indexation means adding a new page to Google's index. The URL Inspection tool in Search Console is useful in this context to speed up discovery.

Reindexation, on the other hand, occurs automatically when Googlebot recrawls a page. This process depends on factors like content update frequency, site authority, and allocated crawl budget.

In which cases does manual submission still make sense?

The URL Inspection tool remains relevant for new pages you want to index quickly, or after a major modification to an existing page.

It's also useful in cases of urgent correction (resolved duplicate content, fixed technical error) where you want Google to take the changes into account without waiting for the next natural crawl.

- Monthly resubmission of already indexed URLs provides no added value

- Google automatically crawls pages according to their importance and freshness

- The inspection tool should only be used occasionally for specific cases

- Agencies that charge for this monthly service are selling hot air

SEO Expert opinion

Is this statement consistent with practices observed in the field?

Absolutely. After 15 years of observation, I confirm that recurring resubmission of indexed URLs has never had any measurable impact on ranking or indexation freshness.

The best-performing sites are those that focus on content quality and technical architecture, not on useless submission rituals. Google is sophisticated enough to manage crawling autonomously.

Why do some agencies continue to offer this service?

This practice primarily serves to justify monthly fees by creating the illusion of regular activity. It exploits clients' lack of technical knowledge.

It's a major warning sign regarding the agency's actual competence. A serious SEO service focuses on on-page optimization, content strategy, link building, and user experience, not on worthless actions.

What are the exceptions where manual action is justified?

A resubmission can be legitimate after a major site redesign, after a penalty has been corrected, or when publishing time-sensitive strategic content (news, event).

In these specific cases, occasional use of the inspection tool can genuinely speed up the process. But this remains an exceptional action, never a monthly routine.

Practical impact and recommendations

What should you actually do to optimize your site's indexation?

Focus on technical fundamentals: a properly configured XML sitemap submitted once and for all, a clear architecture with coherent internal linking, and optimized loading times.
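As an illustration of the sitemap point, here is a minimal Python sketch that builds a sitemap file following the sitemaps.org protocol. The URLs, dates, and the `build_sitemap` helper are hypothetical examples, not part of any Google tooling; most CMS platforms generate this file for you.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML as a string for (url, lastmod) pairs.

    `lastmod` is an optional ISO date (YYYY-MM-DD); pass None to omit it.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages, for illustration only.
pages = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/blog/new-article", "2024-02-01"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

The file is regenerated whenever pages change, but the sitemap URL itself only needs to be declared once in Search Console.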

Make sure your robots.txt file doesn't prevent crawling of important pages and that your crawl budget is used efficiently by eliminating low-value pages (filters, unnecessary pagination).
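A quick way to sanity-check the robots.txt point is a small script like the sketch below. It uses Python's standard `urllib.robotparser`; the rules and URLs are made-up examples, and `blocked_urls` is a hypothetical helper. Keep in mind that the standard-library parser may not interpret wildcard patterns the way Googlebot does, so treat the result as a first check rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs from `urls` that the given robots.txt disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [u for u in urls if not parser.can_fetch(user_agent, u)]


# Hypothetical robots.txt content and page list, for illustration only.
robots_txt = """\
User-agent: *
Disallow: /search
Disallow: /cart
"""

important_pages = [
    "https://www.example.com/",
    "https://www.example.com/products/blue-widget",
    "https://www.example.com/search?q=widget",
]

blocked = blocked_urls(robots_txt, important_pages)
print(blocked)
```

If a page you care about shows up in the list, the problem is the robots.txt rule, and no amount of manual submission will fix it.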

Publish fresh content regularly. That's what naturally encourages Google to crawl your site more frequently, not repetitive manual submissions.

What mistakes should you absolutely avoid?

Don't waste your time resubmitting already indexed URLs monthly. This action influences neither your ranking nor the freshness of your indexation.

Avoid overusing the URL Inspection tool. Indexing requests are subject to a daily quota, and requesting the same URL repeatedly does not get it crawled any faster.

Don't pay for services that consist solely of recurring manual submissions. It's wasted SEO budget that should be invested in real optimizations.

How can you verify that your indexation strategy is effective?

Use the Coverage report in Search Console (now named the Page indexing report) to identify real issues: pages discovered but not yet crawled, 404 errors, canonicalization problems.

Regularly analyze your server logs to understand how Googlebot actually explores your site and identify neglected or over-crawled sections.
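To give an idea of what such a log analysis can look like, here is a minimal Python sketch that counts Googlebot requests per top-level section of a site. It assumes access logs in the common "combined" format and simply matches "Googlebot" in the user-agent string, which can be spoofed; the sample lines and the `googlebot_hits_by_section` helper are invented for illustration.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer and user-agent parts
# of a "combined" format access log line (assumed format).
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(lines):
    """Count requests whose user agent mentions Googlebot, per first path segment."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]  # drop the query string
        segments = [s for s in path.split("/") if s]
        section = "/" + segments[0] if segments else "/"
        hits[section] += 1
    return hits


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

# Invented sample lines, for illustration only.
sample = [
    f'66.249.66.1 - - [10/Mar/2024:06:25:24 +0000] "GET /blog/post-1 HTTP/1.1" 200 5123 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2024:06:26:02 +0000] "GET /blog/post-2 HTTP/1.1" 200 4890 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2024:06:27:40 +0000] "GET /search?q=widget HTTP/1.1" 200 2210 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Mar/2024:06:28:11 +0000] "GET /blog/post-1 HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits = googlebot_hits_by_section(sample)
for section, count in hits.most_common():
    print(section, count)
```

On real logs, sections with very few hits are candidates for better internal linking, while heavily crawled low-value sections (such as internal search results) point to wasted crawl budget.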

- Submit your XML sitemap just once in Search Console

- Only use the inspection tool for new pages or major modifications

- Monitor the Coverage report to detect real indexation problems

- Optimize your crawl budget by eliminating valueless pages

- Invest in quality content rather than useless repetitive actions

- Audit your agency's services if they're billing monthly submissions

- Analyze your server logs to understand Googlebot's actual behavior
